Why Does AI Recommend Certain Chiropractors for Back Pain in 2026?

AI recommends specific chiropractors for back pain by cross-checking two things: Consensus Trust — whether a practice's name, address, and services match consistently across every platform AI queries — and Entity Strength — how clearly AI can classify that practice for a specific patient problem.

If the data holds up everywhere AI checks, you're a recommendation candidate. If anything conflicts, you're out.

Not keyword matching. Not review counting. A background check.

The second piece is "Entity Strength" — how specifically AI can classify what you treat. Listed as "chiropractic care" everywhere? You get vague matches. Consistently positioned as "sciatica treatment specialist"? You get precise matches when someone asks ChatGPT exactly that.

Those two things decide who's in the 1.2% of local businesses AI recommends, and who's in the other 98.8%.

Gartner projects that traditional search engine volume will drop 25% by 2026 as people switch to asking AI chatbots instead of scrolling results. When that happens, there's no page 2 to fall back on.

One answer. Either it's you or it isn't.

Last Updated: March 24, 2026

The 1.2% Rule — And What It Actually Means for Your Clinic

SOCi's 2026 Local Visibility Index analyzed over 350,000 business locations and found one brutal stat: ChatGPT recommends just 1.2% of local businesses in any given market.

Let that sit for a second.

Your city probably has 50–60 chiropractors. ChatGPT's gonna name one. Maybe two on a good day. The rest? Not ranked lower. Not on page 2. Just gone.

Most clinic owners have no idea this is happening in their market right now.

Why One Answer Changes Everything

Most docs still think about AI the way they think about Google rankings.

Old world: you land at position 4 or 7. Still visible. Still clickable. Patients scroll the options and pick someone.

That model's dying.

When someone asks ChatGPT "best chiropractor for lower back pain near me," they get a recommendation — not a list. If your clinic isn't named, you don't get a consolation spot. You just don't exist in that decision.

Sound familiar? Great practice, solid reviews, years in the community — and some doc down the street keeps showing up in AI answers while you don't. That's not a reputation problem. That's an infrastructure problem.

Why Google Rankings Don't Translate

Now... this is the part that catches most docs off guard.

Strong Google rankings are a weak predictor of AI recommendations. SOCi found only 45% overlap between the brands dominating traditional local search and the brands recommended by AI platforms. In other words, more than half of the businesses winning on Google are getting skipped by AI.

That's not a glitch. That's how it's built.

ChatGPT pulls from Bing — not Google. And Bing relies heavily on Foursquare as a local data aggregator. Gemini has its own ecosystem. Perplexity pulls from multiple sources. The result: rank #1 on Google and be totally absent from AI — because the pipelines are completely different.

Your competitors are optimizing for yesterday. That's your opening.

| Signal Type | Traditional SEO Weight | AI Recommendation Weight |
|---|---|---|
| Google keyword ranking | Very High | Low |
| Consistent NAP across directories | Medium | Very High |
| Schema markup / structured data | Low | Very High |
| Review sentiment (4.3+ stars) | Medium | High |
| Foursquare / Bing Places data | Low | Very High |
| Clinical niche specificity | Low | High |
| Website content depth (AEO) | Medium | Very High |

What "Consensus Trust" Actually Means

I've run this diagnostic with practices that were convinced they were in good shape.

Most weren't. And the reason almost never had anything to do with their reputation. Great reviews. Full schedule. Years in the community. But their business data was a mess across the platforms AI actually checks.

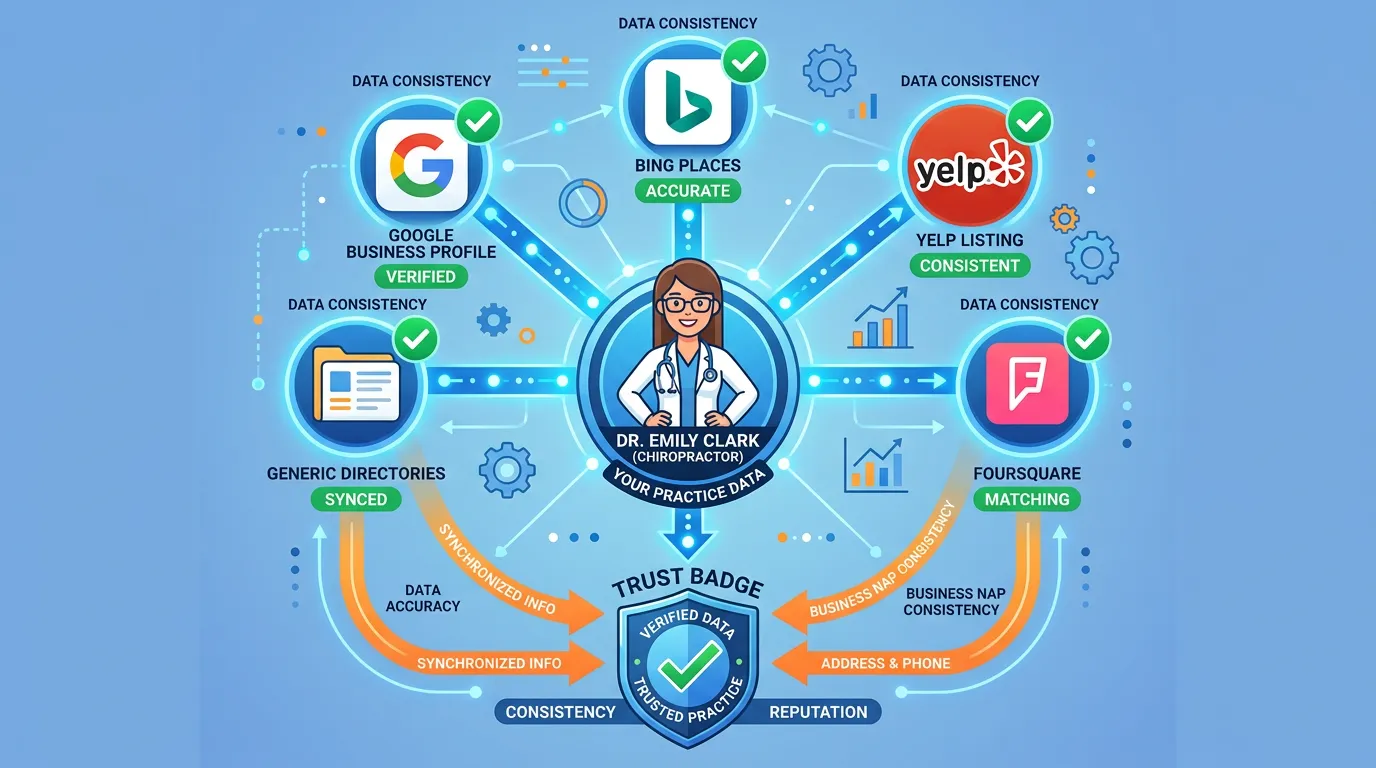

That's what Consensus Trust is about. When AI cross-checks your identity across multiple independent sources and the story matches everywhere — same name, same address, same services — it reads that as a verified entity. Safe to recommend.

AI not recommending you usually has nothing to do with your quality. It has everything to do with whether your data holds up under that cross-check.

Unresolved conflicts don't get recommended. Fragmented data doesn't get recommended. Missing data definitely doesn't.

That's exactly the gap the authority infrastructure at iTech Valet is built to close — not just Google, but every platform AI actually queries.

The Foursquare Factor Nobody Talks About

Quick question: where are you spending your local optimization time right now?

Google, right? GBP, Google reviews, Google rankings. Makes sense — it's what everyone told you to focus on.

Here's the problem. ChatGPT uses Bing as its primary local data source. And Bing relies heavily on Foursquare. That means your Foursquare listing — which you've probably never thought about — is directly influencing whether ChatGPT recommends you.

Most of your competitors have never touched their Foursquare data either. That's why the same two or three clinics keep showing up in AI answers while everyone else is confused.

What "Consistent" Actually Looks Like

Here's what AI is actually verifying — and most of it is stuff you've never had a reason to look at before.

- Business name — "Smith Chiropractic & Wellness Center" on Google and "Smith Chiro" on Yelp reads as two different entities.

- Address — Suite formats, building names, zip codes need to match everywhere. One wrong digit breaks the chain.

- Phone number — Call-tracking numbers create mismatches. Local numbers beat them in AI entity graphs.

- Service language — If your website says you treat sciatica, your GBP, Bing listing, and directories should say it too. Inconsistent language dilutes the signal.

- Category tags — "Chiropractor" vs. "Chiropractic Clinic" vs. "Sports Chiropractor" can read as different entities.

| Data Point | Platform to Check | What Breaks It |
|---|---|---|
| Business name | GBP, Bing, Yelp, Foursquare | Abbreviations, punctuation, "& Wellness" add-ons |
| Address | All directories | Suite format, "Ste" vs "#", zip mismatches |
| Phone | Website, GBP, directories | Call tracking numbers vs. direct line |
| Hours | GBP, Yelp, Bing | Holiday hours not updated across platforms |
| Services | Website, GBP, directories | Generic vs. condition-specific language |
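If you want to run that cross-check yourself, here's a minimal Python sketch. It assumes you've copied each platform's listing by hand into a `Listing` record (nothing here calls a platform API), and the clinic details, normalization rules, and `audit` helper are all hypothetical. It flags every field that isn't an exact match to your canonical record, then labels it `format` if only punctuation or suite style differs, or `conflict` if the underlying data disagrees.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    name: str
    address: str
    phone: str


def normalize(field: str, value: str) -> str:
    """Collapse formatting differences so only real data conflicts remain."""
    v = value.lower().strip()
    if field == "phone":
        digits = re.sub(r"\D", "", v)
        # Treat a leading US country code as formatting: "+1 (555) ..." == "(555) ..."
        return digits[1:] if len(digits) == 11 and digits.startswith("1") else digits
    v = v.replace("&", " and ")
    if field == "address":
        # "Ste", "Ste.", "Suite", and "#" all become "suite"
        v = re.sub(r"\b(?:ste|suite)\b\.?|#", " suite ", v)
    v = re.sub(r"[^\w\s]", " ", v)  # drop remaining punctuation
    return " ".join(v.split())


def audit(canonical: Listing, listings: dict[str, Listing]) -> list[tuple[str, str, str]]:
    """Return (platform, field, kind) for each field that isn't an exact match.

    kind is "format" when the values agree after normalize(), else "conflict".
    """
    issues = []
    for platform, listing in listings.items():
        for field in ("name", "address", "phone"):
            want, got = getattr(canonical, field), getattr(listing, field)
            if got == want:
                continue
            same = normalize(field, got) == normalize(field, want)
            issues.append((platform, field, "format" if same else "conflict"))
    return issues


# Hypothetical clinic data, typed in by hand from each platform.
canonical = Listing(
    "Smith Chiropractic & Wellness Center",
    "123 Main St, Ste 200, Springfield, IL 62701",
    "(555) 010-2000",
)
listings = {
    "Google Business Profile": canonical,
    "Yelp": Listing("Smith Chiro", "123 Main St #200, Springfield, IL 62701", "(555) 010-2000"),
    "Foursquare": Listing(
        "Smith Chiropractic & Wellness Center",
        "123 Main St, Ste 200, Springfield, IL 62701",
        "(555) 010-9999",  # e.g. an old call-tracking number
    ),
}

for platform, field, kind in audit(canonical, listings):
    print(f"{platform}: {field} -> {kind}")
```

With this sample data, Yelp's name comes back as a `conflict`, its "#200" address as a `format` issue, and Foursquare's phone as a `conflict`. Format issues are still worth fixing, since the table above treats "Ste" vs "#" as a break; the split just tells you which problems are cosmetic and which are real.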

Entity Strength — Why "Chiropractor" Isn't Specific Enough

Here's the part that frustrates me most when I talk to docs about this.

They've been in practice 15 years. Built a great reputation. Treat everything from pediatric cases to sports injuries to post-accident rehab. And when I ask them what they're known for — they say "everything."

That's the Entity Strength problem.

AI needs to classify your practice to make a confident recommendation. High Entity Strength means it can answer clearly: "this clinic treats sciatica in adults in [city]." That gets recommended. Low Entity Strength means AI gets "they do general chiropractic stuff somewhere" — and moves on to whoever is more specific.

This is where chiropractic entity authority becomes the actual competitive moat. Not your logo. Not your 20 years of experience. Not your Instagram.

How Clinical Wedges Work

A wedge is the specific condition or patient focus your practice builds authority around.

Strong wedges:

- Sciatica and disc issues — "Back pain" is too broad. "Sciatica from disc herniation in adults over 40" is a wedge. Matches a specific query type.

- Pediatric chiropractic — Parents searching for a kids' chiropractor want a specialist. "Also treats kids" doesn't cut it.

- Sports chiropractic — Active adults with repetitive motion injuries have their own query patterns. Own that space.

- Post-accident recovery — Whiplash and soft tissue injuries carry high commercial intent. Highly searchable, specific niche.

Every article, FAQ, and service page you build around a wedge is another data point telling AI: this practice owns this topic, in this location, for this patient type. The signal compounds. That's how you win the match.

Why "We Treat Everyone" Kills Your AI Visibility

This one's uncomfortable, but it needs to be said.

If your positioning is "all ages, all conditions," AI has no idea when to recommend you.

You're not the sciatica doc. Not the sports chiro. Not the pediatric specialist. You're just... a chiropractor. Generic. Interchangeable. Easy to skip.

I'm not questioning your clinical skills. You might genuinely be great across the board. But AI needs a clear entity classification to make a confident recommendation — and "all conditions, all welcome" doesn't give it one.

Narrower wedge positioning beats generalists in AI recommendations — even when the generalist has more reviews and a better reputation. Specificity is currency.

The Old SEO Playbook Is Why Clinics Are Invisible

I've watched this play out too many times.

Docs paying $1,500/month for SEO. Monthly reports showing page 1 rankings. Feeling good about things.

ChatGPT recommending their competitor. Every. Single. Time.

A clinic can rank #1 on Google for "chiropractor [city]" and be completely invisible when a patient asks ChatGPT the same question. Rankings and recommendations are two different outcomes driven by two different systems.

Traditional SEO optimizes for an algorithm. AEO optimizes for an inference engine. Not the same thing.

Why the Old Playbook Fails

An algorithm asks: does this page have the right keywords and backlinks? Rank it.

An inference engine asks: can I confidently recommend a specific, verified practice for this exact patient problem in this exact location?

Those are completely different questions. And the future of chiropractic search runs on the second one.

Keyword stuffing doesn't build AI trust. Backlinks don't verify your services. A perfect meta description doesn't update your Foursquare listing. Wrong tools, wrong fight.

Traditional SEO agencies aren't bad at what they do. They're just doing the wrong thing.

What AI Actually Reads on Your Site

Auditing your clinic's AI visibility starts with entity signals, not keyword signals.

Here's what actually moves the needle:

- FAQPage schema — Structured Q&A AI can extract cleanly. Pages without schema get passed over.

- LocalBusiness schema — Machine-readable code telling AI your business type, services, and location.

- Service-specific pages — One generic "services" page tells AI almost nothing. Individual pages for "sciatica treatment" and "sports injury chiropractic" each build a separate entity match.

- Content depth — AI rewards articles that fully answer a patient's question. Shallow content gets skipped. Depth gets cited.
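Here's roughly what FAQPage schema looks like under the hood. This Python sketch builds schema.org FAQPage JSON-LD from question-and-answer pairs; the `faq_page_jsonld` helper and the sample Q&A are hypothetical. You'd paste the printed `<script>` tag into the page that visibly shows those same questions and answers.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


# Hypothetical Q&A; it should match the questions visibly shown on the page.
snippet = faq_page_jsonld([
    (
        "Do you treat sciatica caused by a herniated disc?",
        "Yes. We evaluate and treat sciatica related to disc herniation in adults.",
    ),
    (
        "Where is the clinic located?",
        "123 Main St, Suite 200, Springfield, IL 62701.",
    ),
])
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

Generating the markup from the same Q&A list that renders the page keeps the two from drifting apart.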

Reputation Signals — Quality Beats Quantity

Here's something most docs don't expect: 86% of AI citations come from sources you already manage.

Yext analyzed 6.8 million AI citations across ChatGPT, Gemini, and Perplexity. AI isn't pulling from random corners of the internet. It's pulling from your website, your listings, your reviews. Infrastructure you control — or should control.

That means the problem is fixable. But only if you're actually managing those sources.

It's not review volume doing the heavy lifting either. It's sentiment, recency, and whether the review content reinforces your entity signals. SOCi found that ChatGPT-recommended locations average 4.3 stars — not 5.0. Consistent quality with enough volume to signal legitimacy.

Why Review Content Matters

"Dr. Jones fixed my sciatica, I can finally sit through a full workday without pain."

That's entity reinforcement. Condition name. Practitioner name. Specific clinical outcome. AI reads that as a trust signal for the "sciatica chiropractor" classification.

"Great office, very professional."

Nice for humans. Nearly worthless for AI entity building.

Auditing your clinic's AI visibility includes the language inside your reviews — not just the star count. Condition-specific language in review text compounds your entity signals in ways most practices have never thought about.

Recency Matters More Than You Think

20 reviews in the last 90 days beats 200 reviews from three years ago. Every time.

Stale reviews don't just lose engagement — they signal the business might not be actively operating. Consistent review generation isn't just about patient credibility. It's a freshness signal telling AI your practice is currently open and worth recommending.

Whitespark's Local Search Ranking Factors research consistently ranks review sentiment and profile completeness as top drivers of local visibility — for both traditional search and AI recommendations.

| Review Attribute | AI Value | Why It Matters |
|---|---|---|
| Star rating (4.3+) | High | Baseline quality gating |
| Condition-specific language | Very High | Entity reinforcement for wedge |
| Practitioner name mentioned | High | Person-entity trust signal |
| Recency (last 90 days) | High | Active operation freshness signal |
| Volume across platforms | Medium | Cross-platform Consensus Trust |
| Practice response | Medium | Engagement and completeness signal |
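A rough way to audit several of those attributes is to tally them from an export of your reviews. This is a sketch under assumptions: the `Review` record, the `review_profile` helper, the condition terms, and the sample reviews are hypothetical, and plain substring matching will miss synonyms and misspellings. It only counts; it doesn't predict how any AI engine weighs the results.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Review:
    stars: int
    text: str
    posted: date


def review_profile(
    reviews: list[Review],
    conditions: tuple[str, ...],
    practitioner: str,
    today: date,
    window_days: int = 90,
) -> dict:
    """Tally rating, recency, and wording attributes for a set of reviews."""
    if not reviews:
        return {"total": 0, "avg_stars": None, "recent": 0,
                "condition_mentions": 0, "practitioner_mentions": 0}
    cutoff = today - timedelta(days=window_days)
    texts = [r.text.lower() for r in reviews]
    terms = [c.lower() for c in conditions]
    return {
        "total": len(reviews),
        "avg_stars": round(sum(r.stars for r in reviews) / len(reviews), 2),
        # Reviews posted inside the recency window (default: last 90 days)
        "recent": sum(r.posted >= cutoff for r in reviews),
        # Crude substring match; misses synonyms and misspellings
        "condition_mentions": sum(any(t in text for t in terms) for text in texts),
        "practitioner_mentions": sum(practitioner.lower() in text for text in texts),
    }


# Hypothetical reviews and condition terms for a sciatica-focused clinic.
reviews = [
    Review(5, "Dr. Jones fixed my sciatica, I can finally sit through a full workday without pain.", date(2026, 3, 1)),
    Review(4, "Great office, very professional.", date(2026, 2, 10)),
    Review(5, "Helped my lower back after a car accident.", date(2023, 1, 15)),
]
print(review_profile(reviews, ("sciatica", "disc", "herniat"), "Dr. Jones", today=date(2026, 3, 24)))
```

In this sample, only one of three reviews carries condition language and names the practitioner, which is exactly the gap the "Great office, very professional" example above describes.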

Who This Is Not For

Let's be straight about this.

AI authority is compound growth. It builds over months — not 30 days. If you want a campaign that produces measurable AI recommendations by next month, that's not what this is.

Not for the "Try It and See" Crowd

This isn't for the doc who wants to "try it for a quarter." Authority doesn't work like a paid ad you can pause and resume. Pull the plug early and the compound effect never materializes.

This isn't for the practice owner who thinks they can DIY this after a quick explanation. The content architecture, schema implementation, and cross-platform entity management that actually move the needle on AI recommendations require systematic execution, not a one-time setup and hope.

Not for Anyone Expecting a 90-Day Guarantee

And this definitely isn't for anyone expecting a 90-day ROI guarantee. Wanting fast results is understandable. It's just not how infrastructure works. Anyone promising guaranteed AI recommendations in a specific window is selling a timeline they can't deliver. (We've seen the pitch decks. Creative stuff.)

If you're an established clinic frustrated that competitors keep showing up in AI while you don't — this is built for you. The problem isn't your clinical quality. AI just can't verify it yet.

The Measurement Lie — And Why Agencies Are Selling Hopium

Here's something you're probably not hearing from most agencies: reliable AI citation tracking doesn't exist.

No dashboard. No monthly report showing how many times ChatGPT mentioned you this week. AI engines don't expose that data. There's no AI Search Console.

Why Agencies Sell It Anyway

Why does this matter? Because agencies are selling "AI citation tracking" as a deliverable right now. Charts. Monthly reports. Numbers that look precise and reportable.

That data doesn't exist in a reliable format. It's estimated, manually spot-checked, or made up.

The Only Method That's Actually Honest

The only honest method: systematic manual discovery. Query multiple AI engines with specific search phrases. Record what comes back. That's the whole method. It's directional — not a dashboard metric.

This is why the AI Visibility Check at iTech Valet uses a live AI-powered diagnostic. You see exactly what's happening in real time. Not a spreadsheet someone filled in earlier.

You'll know the infrastructure is working when your clinic starts showing up in patient queries. That's the measurement. Not a monthly number that sounds specific but isn't.
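If you run that manual discovery yourself, a plain log keeps it honest. This Python sketch assumes you type each query into each engine by hand and write down which clinics the answer names; the `SpotCheck` record, the `named_rate` helper, and the sample data are hypothetical, and nothing here calls an AI engine. The output is a directional tally of your own spot checks, not a citation metric.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class SpotCheck:
    checked_on: date
    engine: str                     # e.g. "ChatGPT", "Gemini", "Perplexity"
    query: str                      # the exact phrase typed into the engine
    clinics_named: tuple[str, ...]  # clinics the answer named, as written down


def named_rate(checks: list[SpotCheck], clinic: str) -> dict[str, tuple[int, int]]:
    """Per engine: (checks where `clinic` was named, total checks run)."""
    tally: dict[str, list[int]] = defaultdict(lambda: [0, 0])
    for check in checks:
        tally[check.engine][1] += 1
        if clinic in check.clinics_named:  # exact-name match only
            tally[check.engine][0] += 1
    return {engine: (hits, total) for engine, (hits, total) in tally.items()}


# Hypothetical log of hand-run checks.
ME = "Smith Chiropractic & Wellness Center"
checks = [
    SpotCheck(date(2026, 3, 20), "ChatGPT", "best chiropractor for lower back pain near me", ("Competitor Chiropractic",)),
    SpotCheck(date(2026, 3, 20), "Gemini", "best chiropractor for lower back pain near me", (ME, "Competitor Chiropractic")),
    SpotCheck(date(2026, 3, 21), "ChatGPT", "chiropractor for sciatica in Springfield", (ME,)),
]
for engine, (hits, total) in named_rate(checks, ME).items():
    print(f"{engine}: named in {hits} of {total} checks")
```

Keeping the exact query and date on every row matters more than the totals: the same query can return a different answer on a different day, so the log shows trends, not a score.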

How to Actually Build AI Visibility

I get asked all the time: what does this actually look like in practice?

The chiropractic AI authority system runs on four layers. Each one builds on the previous. Skip one and the rest become unstable.

Layer 1: Lock Your Entity

Everything else fails if this isn't done first. And most practices skip it entirely — they go straight to tactics without ever nailing down what they're actually building.

Your clinical wedge. Your geographic focus. Your exact patient type. The canonical business name, address, and phone number that goes on every platform from this point forward. Get it wrong here and you're fixing it later — everywhere. Get it right once and every layer above it gets stronger.

Layer 2: Consistent Data Everywhere

This is where I find the most damage. Practices that have been operating for years with mismatched listings across every platform AI checks — and they have no idea.

Once the identity's locked, it gets distributed consistently across every platform AI queries:

- Google Business Profile — Complete, accurate, condition-specific

- Bing Places — Massively underused. Directly feeds ChatGPT.

- Foursquare — Behind a huge share of ChatGPT's local data

- Apple Maps — Feeds Siri and growing AI assistant traffic

- Major directories — Yelp, Healthgrades, WebMD, ZocDoc

- Niche chiro directories — Condition-specific citations that reinforce your wedge

Same name. Same address. Same phone. Same service language. No exceptions.

Layer 3: Schema-Ready Website

A pretty site that AI can't parse is an expensive liability. Your site needs to be readable by machines, not just patients. That means:

- LocalBusiness schema — Machine-readable business identity in your code

- FAQPage schema — Structured Q&A AI can extract and cite directly

- Service pages — Individual, condition-specific pages with deep content

- BreadcrumbList — Site structure AI can follow

- Author markup — Practitioner identity that builds personal authority signals
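For the LocalBusiness piece, one low-risk approach is to generate the JSON-LD from the same canonical record you locked in Layer 1, so your site can't drift from your listings. This sketch uses `MedicalClinic`, a more specific schema.org subtype of `LocalBusiness`; the clinic details, the `CANONICAL` dictionary, and the `clinic_jsonld` helper are hypothetical. Run the output through a structured data validator before publishing.

```python
import json

# Hypothetical canonical record; in practice this is your locked Layer 1 identity.
CANONICAL = {
    "name": "Smith Chiropractic & Wellness Center",
    "url": "https://www.example.com/",
    "telephone": "+1-555-010-2000",
    "street": "123 Main St, Ste 200",
    "city": "Springfield",
    "region": "IL",
    "postal_code": "62701",
    "country": "US",
    "area_served": "Springfield, IL",
    "description": "Chiropractic care for sciatica and disc-related back pain in adults.",
    "practitioner": "Dr. Jane Smith",
}


def clinic_jsonld(c: dict) -> str:
    """Render schema.org MedicalClinic JSON-LD from one canonical record."""
    data = {
        "@context": "https://schema.org",
        "@type": "MedicalClinic",  # subtype of MedicalBusiness -> LocalBusiness
        "name": c["name"],
        "url": c["url"],
        "telephone": c["telephone"],
        "description": c["description"],  # condition-specific wording
        "areaServed": c["area_served"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": c["street"],
            "addressLocality": c["city"],
            "addressRegion": c["region"],
            "postalCode": c["postal_code"],
            "addressCountry": c["country"],
        },
        # Practitioner identity as a Person entity
        "employee": {"@type": "Person", "name": c["practitioner"], "jobTitle": "Chiropractor"},
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


print(f'<script type="application/ld+json">\n{clinic_jsonld(CANONICAL)}\n</script>')
```

Because every field comes from one dictionary, changing the suite format or phone number there changes it on the site too, which is the "same name, same address, same phone" rule applied to your own code.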

Layer 4: AEO Content at Depth

Your practice needs a library of articles that answer the exact questions patients are asking AI right now.

Not 500-word keyword posts. Articles that answer every layer of a patient's question — the direct question, the real goal behind it, what they didn't know to ask, the objections they have, what comes next.

AI treats comprehensive content as authority. Shallow content gets skipped. Depth gets cited.

Each article reinforces the entity definition. Each one gives AI another reason to classify you as the trusted authority for your wedge topic in your market. That's the compound play — and it works in ways a one-time sprint never will.

Frequently Asked Questions

Why is ChatGPT recommending my competitor even though they have fewer reviews?

AI doesn't count stars — it verifies trust. A competitor with 40 reviews and perfectly consistent data across Bing, Foursquare, Yelp, and Google beats a clinic with 200 reviews and fragmented listings every time.

AI is triangulating multiple independent sources. If they all say the same thing, you're in. If anything conflicts, AI moves on.

Does how my website looks affect whether AI recommends me?

Only if bad design breaks machine readability. AI doesn't care what your site looks like.

A $20,000 website with no schema markup and no clearly defined service pages is invisible to AI. No structured signals, no citation.

Can I track how many times I've been cited in AI search?

No — and any agency telling you otherwise is selling something that doesn't exist.

The only honest method is manual discovery: query multiple AI engines, record what comes back. That's it. Build infrastructure that earns citations. Don't pay for dashboards measuring made-up numbers.

What are clinical wedges and why do they matter to AI?

A clinical wedge is the specific condition your practice builds authority around. Instead of being "a chiropractor," you become "the sciatica specialist in [city]."

When a patient asks ChatGPT who treats sciatica near them, AI matches to the practice with the clearest authority signals around that exact topic. Generic positioning gives AI nothing to match against.

How long does it take for AI to update its recommendation of my clinic?

There's no guaranteed timeline, and anyone promising you one isn't being straight with you.

Entity signals take time to propagate. Google re-crawls schema. Directories sync. Every platform has to update and agree. Many practices start to see shifts in what AI engines return (checked manually, engine by engine) within 60–90 days of correct implementation. That's a pattern, not a promise.

AI authority is compound growth. Every article, every updated listing, every new review adds a layer, and it deepens over months, not weeks. Entity authority compounds in a way ad spend never does: work done in month one is still working in month twelve. That's a return profile that's genuinely rare in marketing. The "90-day guarantee" pitch is hopium. Don't buy it.

What data sources does ChatGPT use to recommend local chiropractors?

ChatGPT pulls from Bing's index — not Google's. Bing relies heavily on Foursquare as a local data aggregator.

That's why clinics optimizing only for Google stay invisible in ChatGPT recommendations. The pipelines are different. Your data needs to be consistent across Foursquare, Bing Places, your website, and the aggregators feeding into Bing's local entity graph.

Why does AI only recommend one or two chiropractors in a city with dozens of options?

Because that's what it's designed to do. Patients want an answer, not a list.

SOCi's 2026 Local Visibility Index analyzed over 350,000 business locations and found ChatGPT recommends just 1.2% of local businesses in any market. The other 98.8% don't get a consolation mention. The 1.2% aren't lucky — they built the infrastructure.

Is Answer Engine Optimization the same as SEO?

Not even close. SEO optimizes for keyword ranking in a list. AEO optimizes for being the one answer AI gives to a direct question.

SEO asks "how do I rank higher?" AEO asks "how does AI verify I'm the trusted authority for this condition in this city?" Different signals. Different architecture. A perfect SEO strategy can leave you completely invisible in AI recommendations.

AI Gives One Answer

Nobody in chiropractic marketing wants to say this out loud, so I will:

AI gives one answer. If you're not that answer, you don't exist.

Not ranked lower. Not harder to find. Just not in the conversation. A patient asks ChatGPT who to see for back pain, gets a recommendation for someone else, books the appointment. You never crossed their mind.

The window to get ahead of this is still open. Gartner's forecast of a 25% drop in traditional search volume by 2026 isn't fringe — it's a behavioral shift already happening in your market right now. That trajectory doesn't reverse.

Clinics building authority infrastructure today compound that advantage over the next 12–18 months. Practices that wait have to close a gap that keeps growing.

This isn't about being the biggest clinic or having the deepest pockets. The practices winning AI recommendations right now have the most consistent, specific, machine-readable entity trust signals. Not the biggest ad budget. Not the nicest website.

The infrastructure problem is solvable. The only question is whether you solve it before your competitor does — or after.

You just learned why AI skips most clinics and recommends the select few with verified entity trust. The AI Authority System lays out exactly why this gap exists — but knowing the framework is only half of it. The other half is seeing your specific gaps.

Stop wondering whether ChatGPT mentions you. Start knowing exactly what it says — and why.

See Where Your Clinic Stands — the AI Visibility Check shows you what ChatGPT and Gemini return when a patient asks who to trust for back pain in your market. If competitors are being named and you're not, you'll see the specific reason.

Every month without the infrastructure, the gap widens.