Cheap Content Isn't a Bargain — It's a Liability That's Making You Invisible to AI

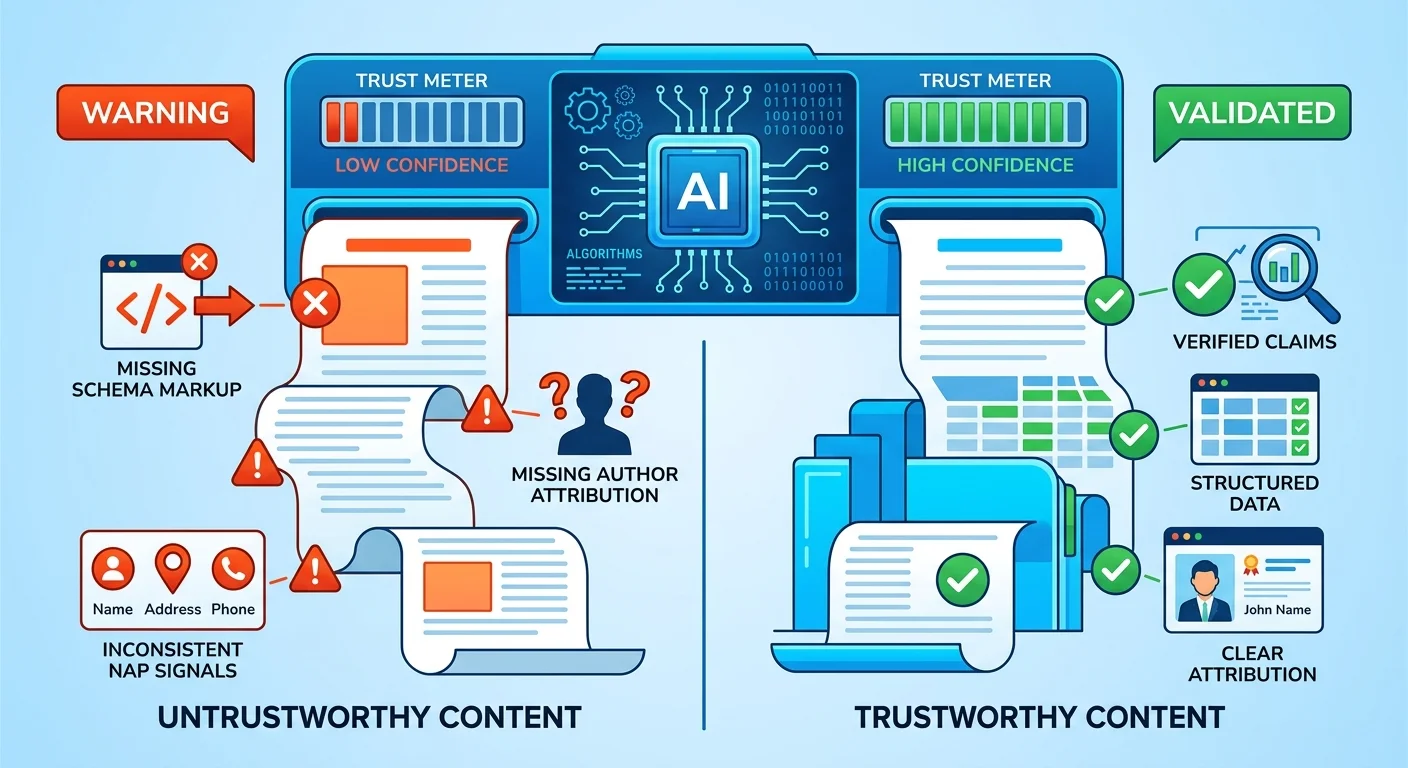

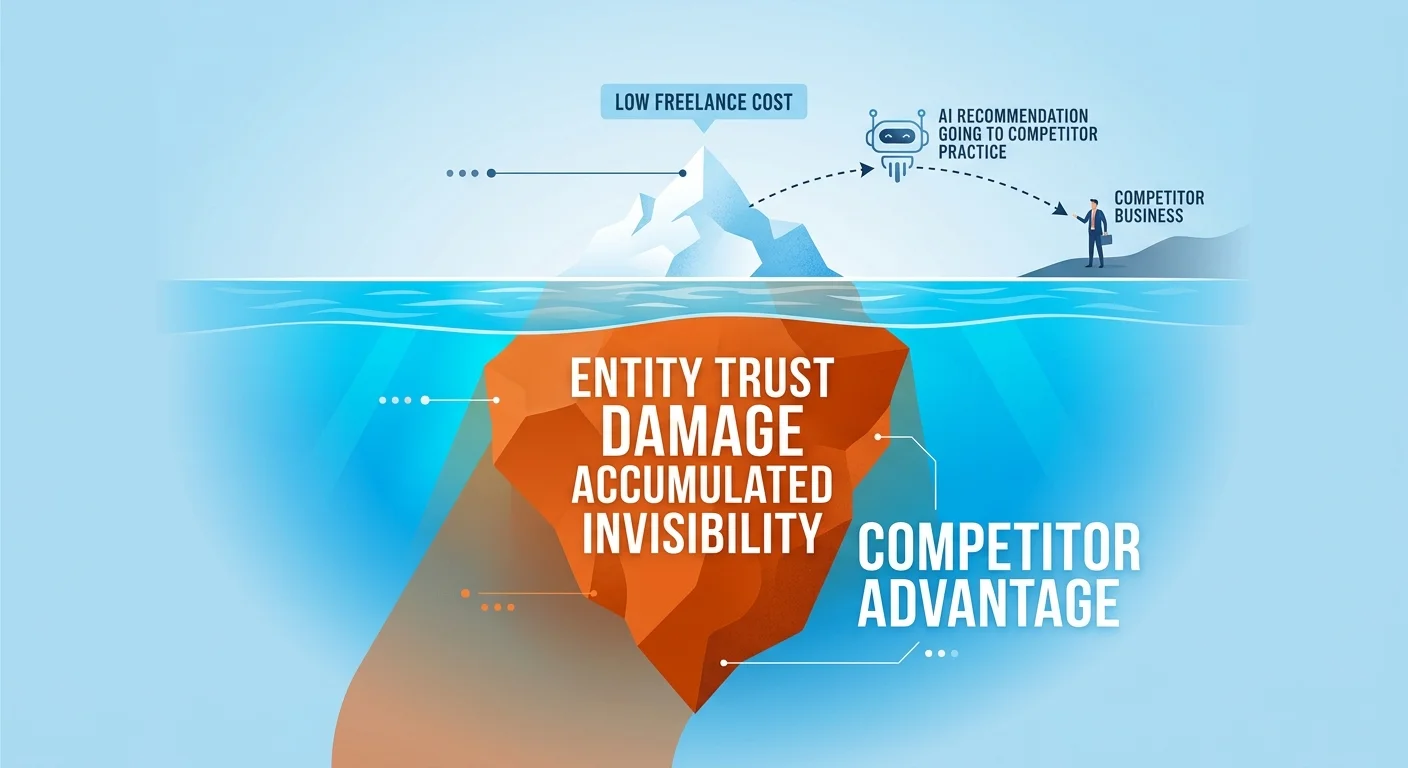

Cheap content from freelancers directly damages your business's entity trust by producing factually thin, structurally weak, and inconsistently attributed articles. AI answer engines like ChatGPT, Gemini, and Grok evaluate your content for factual depth, structural correctness (schema markup, author attribution, NAP consistency), and cross-referenced verification against their knowledge graphs. When your content fails these checks — which cheap freelance content routinely does — these engines mark your entire entity as less trustworthy.

Here's what that means in practice. When someone asks ChatGPT "Who's the best chiropractor near me?" the engine doesn't just scan keywords. It evaluates entity trust — a measure of confidence in your business's identity, expertise, and reliability built from consistent, verifiable signals across the web. Cheap content creates chaotic, contradictory signals. Missing author attribution. Inconsistent business details. Claims that can't be verified. Structural gaps that make your content machine-unreadable.

The result isn't just "less visibility." It's active invisibility. AI engines learn not to trust you. They stop citing you. They recommend competitors instead. And every month you publish more cheap content, you're compounding the problem — teaching AI that your practice isn't worth naming.

This article breaks down the technical mechanism of how cheap content fails AI's trust checks, why the "more content is better" mindset is a liability in the zero-click search era, and what entity trust damage actually costs you when AI makes the recommendation and you're not in it.

Last Updated: April 27, 2026

- Why Cheap Content Fails AI's Trust Checks

- The Technical Mechanism: How AI Measures Content Quality

- The "More Is Better" Myth and Why It's Killing Your Visibility

- Entity Trust vs. Traffic Volume: What Actually Matters Now

- What Cheap Content Actually Costs You

- How to Audit Your Existing Content for Entity Trust Damage

- FAQ

- Conclusion

Why Cheap Content Fails AI's Trust Checks

AI doesn't read your content the way a patient does.

A human skims your blog post and thinks "this sounds helpful." AI scans it and sees red flags everywhere. No schema markup. No verified author. Your business name mentioned three different ways across five articles. A claim about treatment success rates with zero citations.

That's not "fine." That's failing every trust check AI uses to decide whether you're worth recommending.

The Three Trust Failures Cheap Content Creates

Most freelancers you hire on Upwork or Fiverr produce content that fails three trust checks every single time.

- No Author Attribution: They ghost-write, so AI sees no verifiable expertise behind the content. Red flag.

- Inconsistent NAP Signals: They'll use "Smith Chiropractic" in one post and "Dr. Smith's Office" in another, teaching AI you're not a coherent, trustworthy entity. Red flag.

- Factually Thin Claims: They write "vibes" like "improves quality of life." AI can't verify that, so it flags the content—and your entire practice—as untrustworthy. Red flag.

First — missing author attribution.

Most freelance writers don't want their name on commodity work. So your content has no byline. Or it's ghost-written under your business name with no Person schema connecting it to a real human with credentials.

AI sees that and asks: who wrote this? Where's the expertise signal? If there's no verified author entity, there's no way to confirm this person has any authority in chiropractic care.

Second — inconsistent NAP signals.

Your business name, address, and phone number should be identical across every page. Every article. Every mention. Cheap content gets this wrong constantly.

One article says "Smith Chiropractic Clinic." Another says "Smith Chiropractic." A third says "Dr. Smith's Practice." AI doesn't know if those are the same entity or three different businesses. That confusion erodes trust fast.

Third — factually thin claims AI can't verify.

Generic statements like "chiropractic care improves quality of life" sound fine to a human. To AI, they're noise. There's no specific claim. No measurable outcome. No way to cross-reference it against PubMed or NIH data.

AI can't use that content to build trust in your entity. It just flags the whole article as unverifiable and moves on.

Why "Good Enough for Humans" Isn't Good Enough for AI

Here's the thing most practices miss.

AI doesn't care if your content sounds good to a human reader. It cares whether the content passes machine-readable trust checks.

Cheap content optimizes for the wrong audience. A freelancer writes something that reads fine to you. You publish it. A patient might even read it and think it's helpful.

But ChatGPT scans it and sees: no structured data. No authorship entity. No verifiable claims. No internal linking that establishes topical authority.

AI marks your entire entity as low-trust and moves on.

You paid someone to make you invisible. That's the actual ROI of cheap content.

| Signal Type | What Humans See | What AI Needs | Cheap Content Delivers |
|---|---|---|---|
| Author Attribution | Byline or "Posted by Dr. Smith" | Schema markup connecting author entity to knowledge graph node with credentials | No schema, ghost-written byline, or no attribution at all |
| NAP Consistency | Business name in article text | Identical business name, address, phone across all content matching Google Business Profile | Name mentioned differently across articles, address incomplete or missing |
| Factual Claims | "Chiropractic care helps back pain" | Claim cross-referenced against PubMed, NIH, peer-reviewed sources AI trusts | Generic statement with no source, no link, no way to verify |
| Structural Data | Article with headings and paragraphs | Schema markup (Article, FAQPage, BreadcrumbList) telling AI what this content is | No schema, so AI can't parse the content type or authority signals |

The Technical Mechanism: How AI Measures Content Quality

The E-E-A-T framework isn't just Google's thing.

It's the blueprint every AI answer engine uses to decide whether your content is worth citing.

When you ask Gemini "Who's the best chiropractor near me?" it doesn't randomly pick a name. It evaluates every potential answer against E-E-A-T criteria.

Does this business have verifiable expertise? Is the content factually accurate? Can the claims be cross-validated? Is the entity consistently represented across the web?

Cheap content fails every single check.

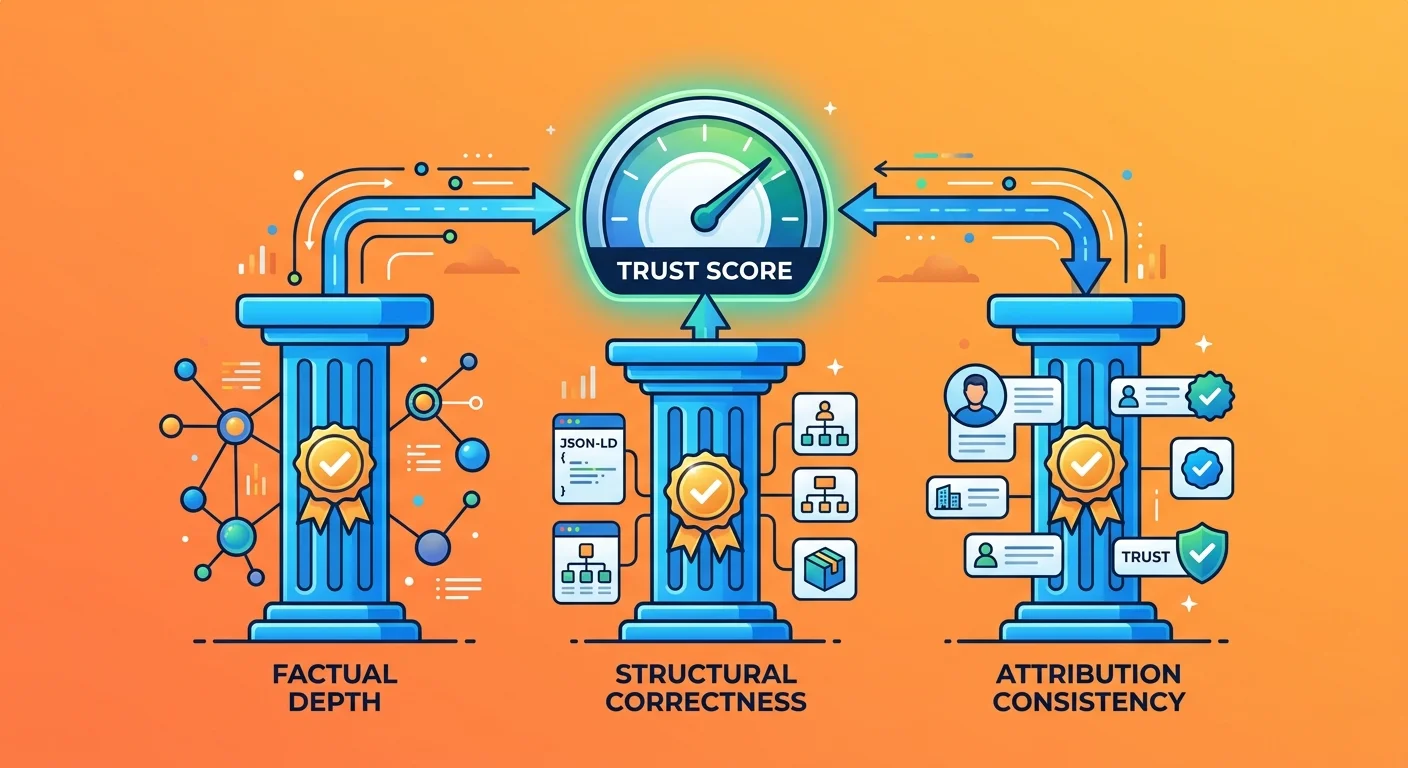

Factual Depth: Cross-Validation Against the Knowledge Graph

AI engines cross-reference every claim in your content against their knowledge graphs.

A knowledge graph is the structured database of verified facts AI uses to understand the world. When your article says "80% of back pain resolves with conservative care," AI checks that claim against PubMed, NIH, peer-reviewed journals, and institutional health sources.

If the claim matches verified sources — green light.

If it doesn't — red flag.

Generic statements fail verification immediately. "Chiropractic care improves quality of life" sounds fine to a human. To AI, it's noise. There's no specific claim. No measurable outcome. No way to confirm or deny it.

AI can't use that content to build trust in your entity.

Specific, sourced claims pass. "A 2019 study published in JAMA found that spinal manipulation reduced pain intensity by 1.5 points on a 10-point scale compared to usual medical care."

AI can verify that. It knows the source. It can cross-reference the data. That claim builds trust.

Cheap freelancers write the first version. AEO content delivers the second.

Structural Correctness: Schema, Attribution, and NAP Consistency

Missing schema markup makes your content invisible to AI.

Schema is the structured data layer that tells AI engines what your content is, who wrote it, what it's about, and how it connects to your entity.

No schema means AI has to guess. And when AI has to guess, it doesn't cite you.

Inconsistent NAP signals create entity confusion. If your business name is "Smith Chiropractic" on your homepage, "Smith Chiropractic Clinic" in one article, and "Dr. Smith's Practice" in another — AI doesn't know if those are the same business.

That confusion erodes trust across your entire entity.

No author attribution signals no expertise. If there's no verified author entity attached to your content — no Person schema connecting the byline to a knowledge graph node with credentials — AI sees the content as authorless.

And authorless content has no E-E-A-T signal to validate.

This is where semantic anchoring for AI becomes critical. Every article must connect to your entity's core authority infrastructure — not just exist as isolated pages on your site.
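To make the schema and attribution pieces above concrete, here's a minimal Python sketch that builds schema.org Article markup with a Person author and the practice as publisher. Every name, address, phone number, and credential in it is a hypothetical placeholder, and the function name `build_article_jsonld` is invented for illustration. It shows the shape of the markup only; it is not a claim about how any particular AI engine consumes it.

```python
import json

# Hypothetical practice details: placeholders, not real data.
# Reusing one dict everywhere keeps name, address, and phone identical.
PRACTICE = {
    "@type": "LocalBusiness",
    "name": "Smith Chiropractic",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example St",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
    },
}


def build_article_jsonld(headline, author_name, author_title, date_published):
    """Return schema.org Article markup with a Person author and the practice as publisher."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
        },
        "publisher": PRACTICE,
    }


article = build_article_jsonld(
    headline="What to Expect at Your First Adjustment",  # hypothetical title
    author_name="Dr. Jane Smith",                        # hypothetical author
    author_title="Doctor of Chiropractic",
    date_published="2026-04-27",
)

# Wrap the markup in the script tag that goes in the page's HTML.
jsonld_tag = (
    '<script type="application/ld+json">'
    + json.dumps(article, indent=2)
    + "</script>"
)
print(jsonld_tag)
```

Generating the markup from one shared `PRACTICE` record, rather than typing it per article, is one way to keep business details from drifting between posts.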

The Two-Layer Validation Most Cheap Content Never Gets

Here's a question I get a lot: "But can AI really tell the difference between expert content and cheap freelance content?"

Yes. And it's not even close.

AI evaluates content in two layers.

First — structural validation. Does this content have schema markup? Is the author entity verified? Are NAP signals consistent? Is the content connected to the broader entity through internal linking and topical authority signals?

Second — factual validation. Can the claims in this content be cross-referenced against trusted institutional sources? Do the statistics match verified data? Are the recommendations supported by peer-reviewed research?

Cheap content fails both layers. It has no structure. And it has no depth.

AI sees that and marks the entire entity as untrustworthy.

That's not a theory. That's how entities are understood and evaluated by every AI answer engine making recommendations right now.

- Structural Layer — Schema markup present, author attribution verified, NAP signals aligned, content connected to entity authority infrastructure through internal linking

- Factual Layer — Claims cross-referenced against institutional sources, statistics verified, recommendations supported by peer-reviewed research, no generic unverifiable statements

The "More Is Better" Myth and Why It's Killing Your Visibility

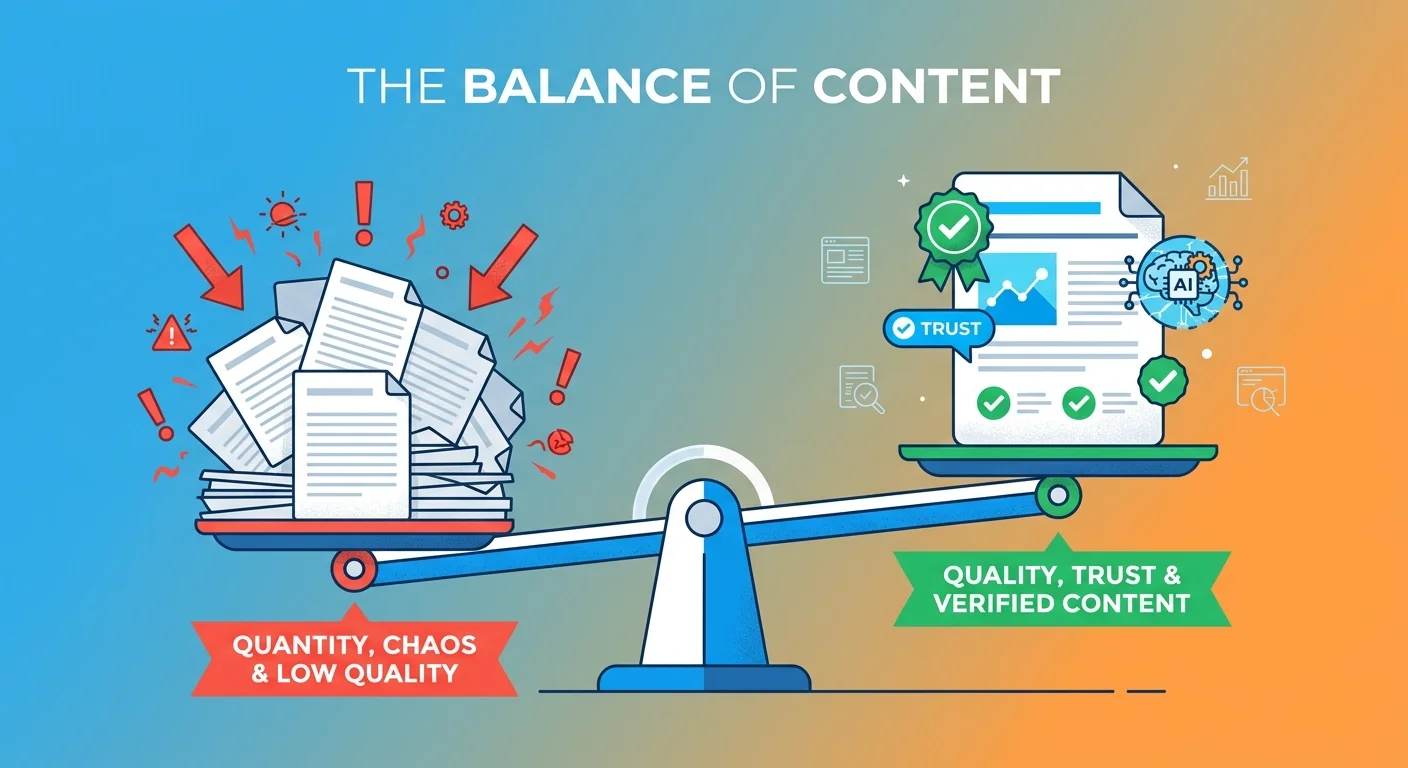

The old SEO playbook said publish as much as possible.

Flood the site with keywords. Get indexed. Rank for long-tail queries. That logic made sense when Google returned 10 blue links and users clicked through to compare options.

AI gives one answer. It doesn't send users to five websites. It names one.

And if your content isn't trusted, you're not in that answer. Period.

Why Publishing Volume Dilutes Entity Trust

Here's what nobody told you about the "blog more" strategy.

Blog posts work. The execution is what fails.

The problem isn't the format. It's the commodity-level freelance execution that lacks depth, structure, and factual validation.

Publishing more weak content doesn't build authority. It actively erodes it.

Every weak article teaches AI not to trust your entity. You publish ten thin posts in a month. AI scans them. Sees no schema. No author attribution. Generic claims it can't verify. Inconsistent NAP signals. No internal linking establishing topical authority.

AI doesn't think "this business is prolific." It thinks "this business publishes untrustworthy content."

Volume without verification creates noise, not authority. And noise doesn't get cited.

AI engines weight consensus. One trusted, verified, structurally sound answer beats 100 untrusted pages every single time.

Research from HubSpot shows that high-quality content is the top priority for successful marketers — because quality directly correlates with engagement and trust, which are the only metrics that matter in the zero-click era.

- Every Weak Article Teaches AI Not to Trust Your Entity — Publishing thin, unverified content isn't neutral. It's actively telling AI your business doesn't meet E-E-A-T standards.

- Volume Without Verification Creates Noise, Not Authority — AI doesn't reward publishing frequency. It rewards factual accuracy, structural correctness, and consistent entity signals.

- AI Engines Weight Consensus — One trusted answer beats 100 untrusted pages. The practices AI cites aren't the ones publishing the most. They're the ones publishing the best.

The Zero-Click Search Reality

AI doesn't send users to 10 blue links. It names one answer.

If your content isn't trusted, you're not in that answer.

This is the shift that replaced SEO rankings as the primary visibility mechanism. Page one used to be the goal. Now the goal is being the answer AI says out loud when someone asks who to trust.

Zero-click searches mean most queries never leave the AI interface. The user asks. AI responds. The interaction ends.

No click. No traffic. No chance to "win them over" with your website design or conversion funnel.

You're either cited or you're invisible. There's no middle ground.

| Metric | Traditional SEO Goal | AEO Reality |
|---|---|---|
| Publishing Volume | 4-8 posts per month to stay indexed and rank for long-tail keywords | Quality over quantity: one verified article per month beats ten thin posts |
| Traffic Source | 10 blue links drive clicks to your site where conversion happens | Zero-click search means most users never visit your site; the AI recommendation IS the conversion event |
| Success Indicator | Ranking position (page one, top three, featured snippet) | Being named in AI's verbal response; citation is the new ranking |

Entity Trust vs. Traffic Volume: What Actually Matters Now

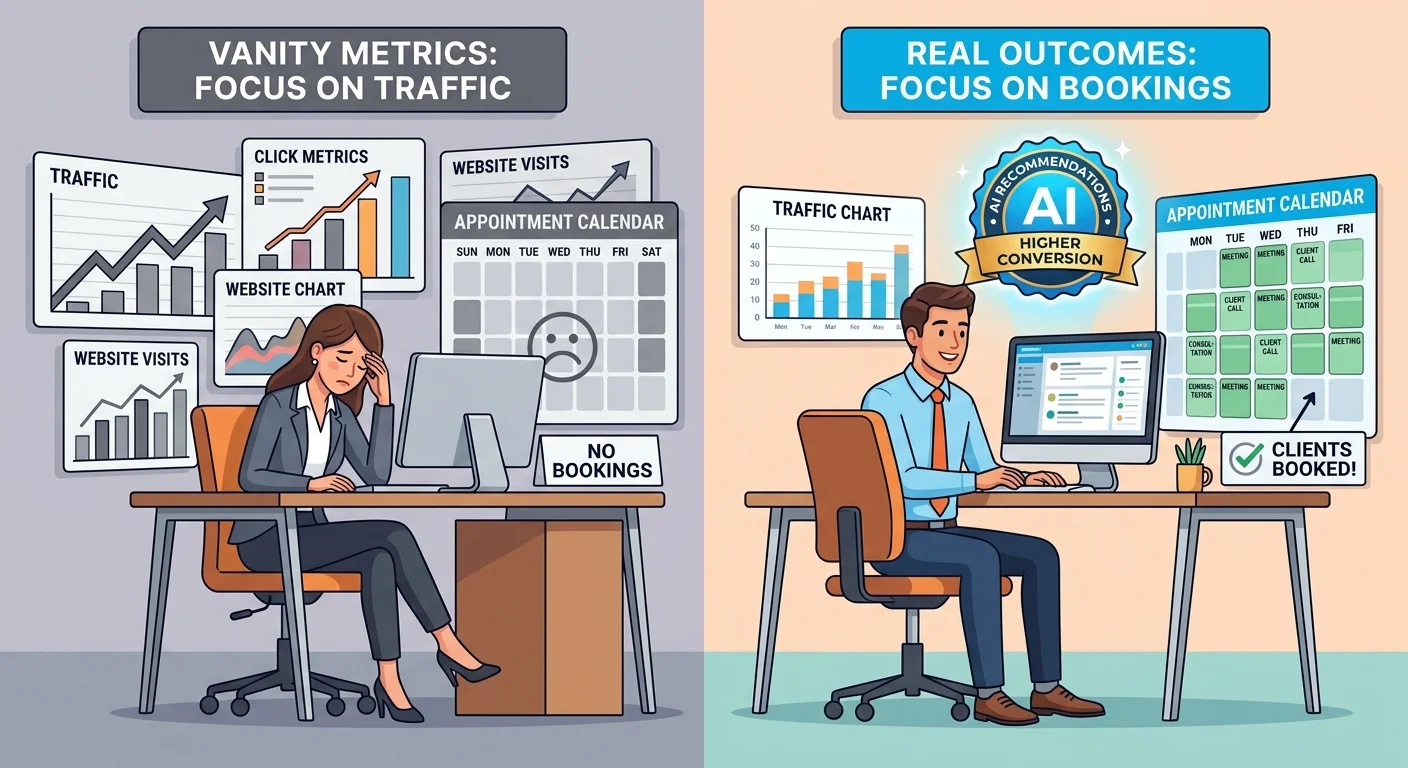

High-volume traffic used to be the goal.

Get eyeballs. Drive clicks. Convert a percentage. That math worked when users were actively shopping and comparing.

In the zero-click era, consensus trust from AI engines matters more than click volume.

Because if AI doesn't trust you enough to recommend you, traffic is irrelevant. The patient never gets to your site in the first place.

Quick pause before we go further.

If you're looking for a way to game the system with cheap volume, this isn't it. Entity trust is built through consistent, verified, structurally sound content execution. There is no shortcut.

The practices that try to DIY this with freelancers end up invisible.

The Traffic That Never Converts

Click volume from traditional SEO doesn't equal patient bookings when AI answers the question before users ever click.

Think about it.

Someone asks ChatGPT "Who's the best chiropractor near me?" AI responds with a name. The user books an appointment with that practice.

Your website? Never visited. Your traffic charts? Irrelevant.

Zero-click searches mean most queries never leave the AI interface. Being cited in the AI response is the new conversion event. Traffic metrics become vanity numbers when AI makes the recommendation without sending the user to your site.

I've watched practices obsess over Google Analytics while their phones don't ring. They show me traffic charts going up. Then they ask why new patient bookings are flat.

The answer: traffic isn't the goal anymore. Trust is.

- Zero-Click Searches Mean Most Queries Never Leave the AI Interface — Users get the answer, book the appointment, and never visit your website. Traffic charts don't capture that.

- Being Cited in the AI Response Is the New Conversion Event — The recommendation IS the booking trigger. If you're not named, the traffic you're chasing doesn't matter.

- Traffic Charts Become Vanity Metrics When AI Makes the Recommendation — You can have 10,000 monthly visitors and zero new patients if AI isn't recommending you when it counts.

Authority as Compounding Asset

Entity trust compounds monthly.

Every verified article strengthens your position. Every cheap article weakens it.

This is where the authority infrastructure model separates practices that grow from practices that stagnate.

Authority isn't a one-time build. It's a system. Schema markup. Verified authorship. Consistent NAP signals. AEO content that cross-validates against AI's knowledge graph. Internal linking that establishes topical authority. All of it working together, month after month.

Practices that invest in this infrastructure see compounding returns. AI cites them more often. Citations drive bookings. Bookings generate reviews. Reviews strengthen entity trust. The cycle reinforces itself.

Practices relying on cheap content see the opposite. Every weak article erodes trust. AI stops citing them. Competitors who invested in authority take their spot. The gap widens.

And six months later, they're invisible while competitors own the AI recommendations in their market.

Research shows that E-E-A-T principles apply directly to how AI engines evaluate content. The practices passing those checks compound authority. The ones failing them compound invisibility.

| Metric Type | What It Measures | Traditional SEO Value | AEO Value |
|---|---|---|---|
| Monthly Traffic | Number of site visits from search engines | High value: more traffic meant more conversion opportunities | Low value: traffic doesn't drive bookings if AI recommends competitors before users click |
| Page One Rankings | Position in Google's search results | High value: ranking on page one drove clicks and visibility | Irrelevant: AI doesn't use rankings to decide who to recommend |
| Entity Trust Score | AI's confidence in your business's expertise, consistency, and authority | Not measurable in traditional SEO tools | Critical value: the primary factor determining whether AI cites you |
| Content Verification Rate | Percentage of claims cross-validated against institutional sources | Not tracked: thin content ranked if it had keywords | Core metric: unverified claims damage entity trust and exclude you from AI recommendations |

What Cheap Content Actually Costs You

The line-item cost is $50 per article.

The actual cost is compounding invisibility.

You hired a freelancer. Published ten articles. Paid $500. Felt productive.

Six months later, you're still not getting calls. Competitors are. You run an AI Visibility Check and find out ChatGPT, Gemini, and Grok are recommending other practices. Not you.

That's when you realize: you didn't just fail to gain visibility. You paid to actively damage your entity trust.

The Visibility Debt You're Accumulating

Every month AI doesn't recommend you, competitors compound their authority advantage.

This is the part nobody explains when they sell you cheap content.

Authority is a race. Every month your competitors publish verified AEO content, they build entity trust. AI learns to cite them more often. Citations drive bookings. Bookings generate reviews. Reviews strengthen trust. The cycle compounds.

Meanwhile, you're publishing cheap articles that fail every trust check. AI learns not to cite you. Your entity trust erodes. The gap between you and your competitors widens.

And every month that gap grows, it gets harder to close.

Entity trust damage takes 6-12 months of consistent execution to reverse. That's how long it takes AI engines to re-evaluate your entity after you've published enough verified content to prove you're trustworthy again.

Competitors building authority during your invisible period lock in market position. By the time you realize cheap content was a liability, they've already taken the spot AI recommends when someone asks who to trust.

- Entity Trust Damage Takes 6-12 Months of Consistent Execution to Reverse — AI doesn't forget. Once it marks your entity as low-trust, it takes sustained verified content to earn that trust back.

- Competitors Building Authority During Your Invisible Period Lock in Market Position — Every month you're invisible is a month they're compounding. The gap widens.

- The Gap Widens Every Month Cheap Content Remains Published — Weak content isn't neutral. It's actively teaching AI not to trust you. Every day it stays live, you lose ground.

The Wasted Spend That Made You Less Visible

You didn't just fail to gain visibility.

You paid to actively damage your entity trust.

That's the real cost. Not the $50 per article. The real cost is the patients you didn't get because AI recommended someone else. The bookings that went to competitors. The revenue you lost while cheap content eroded your authority.

I've seen practices spend $5,000 on cheap content and end up worse off than if they'd published nothing.

Because even great writing fails if the infrastructure isn't built to support it. And cheap content doesn't just lack infrastructure — it actively creates signals that confuse and erode AI's trust in your entity.

Calculating the Real ROI

Compare the cost of cheap content to the cost of the patients you didn't get because AI recommended someone else.

Let's say you paid a freelancer $50 per article. Published 20 articles over six months. Total spend: $1,000.

Sounds reasonable. Until you realize those 20 articles created inconsistent NAP signals, missing schema, no author attribution, and generic unverifiable claims that taught AI your entity isn't trustworthy.

Now ChatGPT recommends a competitor when someone asks who the best chiropractor is. That competitor gets 10 new patients a month from AI recommendations. Average patient lifetime value: $2,000.

That's $20,000 per month in patient lifetime value your competitor is adding. You're not.

Over six months? $120,000 in lost lifetime revenue. Because you saved up to $950 per article by hiring a cheap freelancer instead of investing in verified AEO content.

That's the actual ROI of cheap content.
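The arithmetic behind that scenario can be checked with a short Python sketch. The inputs are the hypothetical figures from the example above (20 articles at $50, 10 patients a month, $2,000 lifetime value, six months), not measured data, and the function name `lost_value` is invented for illustration. Lifetime value is earned over a patient's whole relationship with the practice, so the monthly figure is value acquired, not cash received that month.

```python
def lost_value(articles, cost_per_article, patients_per_month, lifetime_value, months):
    """Compare content spend with the patient value a competitor picks up instead."""
    content_spend = articles * cost_per_article
    monthly_value = patients_per_month * lifetime_value
    return {
        "content_spend": content_spend,
        "monthly_value_to_competitor": monthly_value,
        "total_over_period": monthly_value * months,
    }


# Hypothetical inputs taken from the scenario in this section.
result = lost_value(
    articles=20,
    cost_per_article=50,
    patients_per_month=10,
    lifetime_value=2_000,
    months=6,
)
print(result)
# {'content_spend': 1000, 'monthly_value_to_competitor': 20000, 'total_over_period': 120000}
```

Swap in your own patient volume and lifetime value to see how the comparison changes for your practice.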

Data from the Content Marketing Institute shows that successful content marketers invest more in quality and have a documented strategy. The ones cutting costs on content execution are the ones seeing flat or declining results.

| Cost Type | Cheap Freelance Content | AEO Content Execution |
|---|---|---|
| Per-Article Cost | $50-$100 per article | $500-$1,000 per article (verified, structured, factually validated) |
| Time to Entity Trust Impact | Immediate negative impact: every article erodes trust | 3-6 months to measurable trust improvement, 6-12 months to consistent AI citations |
| Structural Compliance | No schema, no author attribution, inconsistent NAP | Schema markup on every article, verified authorship, NAP consistency enforced |
| Factual Verification | Generic claims, no sources, no cross-validation | Every claim sourced, cross-validated against institutional sources AI trusts |
| Long-Term ROI | Negative: compounding invisibility and lost bookings to competitors | Positive: compounding authority and AI citations drive bookings without ongoing ad spend |

How to Audit Your Existing Content for Entity Trust Damage

The first step is identifying which content is damaging your entity trust.

You can't fix what you can't see. And most practices have no idea how much of their published content is actively eroding AI's confidence in their entity.

The audit finds it.

The Four Entity Trust Checks

Run every article on your site through four checks: schema markup present, author attribution consistent, NAP signals aligned, claims cross-verifiable.

First, the schema check. Go to the Schema Markup Validator at validator.schema.org (Google's Rich Results Test is an alternative). Paste your article URL. Does it show Article schema? FAQPage schema? A Person entry for the author?

If not — that article has no structural data telling AI what it is or who wrote it. Red flag.
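If you'd rather do a quick local pass before pasting URLs into a validator, here's a minimal Python sketch of the schema check using only the standard library. It reads a page's HTML and lists the top-level `@type` values declared in its JSON-LD. The class and function names are invented for illustration, the sample HTML is a placeholder, and the sketch doesn't look inside `@graph` containers or microdata, so treat an empty result as "check by hand", not as proof that no markup exists.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script content can arrive in several chunks, so buffer it.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed JSON-LD is treated as absent


def schema_types(html):
    """Return the set of top-level @type values declared in a page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    types = set()
    for block in parser.blocks:
        items = block if isinstance(block, list) else [block]
        for item in items:
            declared = item.get("@type") if isinstance(item, dict) else None
            if isinstance(declared, str):
                types.add(declared)
            elif isinstance(declared, list):
                types.update(declared)
    return types


# Placeholder page with one Article block and a Person author.
sample_html = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "Article", '
    '"author": {"@type": "Person", "name": "Dr. Jane Smith"}}'
    "</script></head><body><p>Post body</p></body></html>"
)

print(schema_types(sample_html))                 # {'Article'}
print(schema_types("<p>No markup here</p>"))     # set()
```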

Second — author attribution check. Is there a byline? Does it match the name on your About page exactly? Is there Person schema connecting the author to a knowledge graph node with credentials?

If the answer to any of those is no — AI sees that content as authorless.

Third — NAP consistency check. Search your site for your business name. How many variations appear? "Smith Chiropractic," "Smith Chiropractic Clinic," "Dr. Smith's Practice."

Every variation is a signal to AI that you're not a coherent entity.
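The name-variation count is easy to script. Here's a minimal Python sketch, assuming you've exported your article text into a list of strings. The canonical name and the variant list are hypothetical placeholders based on the examples in this article, and `count_name_variants` is a name invented for illustration.

```python
import re
from collections import Counter

# Hypothetical: the one name that should appear everywhere.
CANONICAL_NAME = "Smith Chiropractic Clinic"

# Hypothetical variants to look for. Add your own, including curly-apostrophe forms.
KNOWN_VARIANTS = [
    "Smith Chiropractic Clinic",
    "Smith Chiropractic",
    "Dr. Smith's Practice",
    "Dr. Smith's Office",
]


def count_name_variants(texts, variants):
    """Count how often each business-name variant appears across article texts."""
    # Try longer variants first so "Smith Chiropractic Clinic" is not
    # also counted as a hit for the shorter "Smith Chiropractic".
    ordered = sorted(variants, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(v) for v in ordered))
    counts = Counter()
    for text in texts:
        counts.update(pattern.findall(text))
    return counts


# Placeholder article snippets.
articles = [
    "Welcome to Smith Chiropractic Clinic. Smith Chiropractic Clinic treats back pain.",
    "Book a visit at Smith Chiropractic today.",
    "Dr. Smith's Practice is open on weekdays.",
]

counts = count_name_variants(articles, KNOWN_VARIANTS)
off_canonical = {name: n for name, n in counts.items() if name != CANONICAL_NAME}
print(off_canonical)
# {'Smith Chiropractic': 1, "Dr. Smith's Practice": 1}
```

Anything in `off_canonical` is a mention to rewrite. The same approach works for address and phone strings.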

Fourth — factual verification check. Pick five claims from your content. Can you find a verifiable source for each one? PubMed study, NIH data, peer-reviewed journal.

If you can't — AI can't either. And if AI can't verify your claims, it marks the content as untrustworthy.
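To shortlist claims for that manual check, a rough heuristic can help. The Python sketch below flags sentences that contain a statistic but no obvious source cue such as a link or the word "study". The patterns and the function name are assumptions made for illustration, the sample text reuses example sentences from this article without verifying them, and the heuristic only catches numeric claims. Generic statements with no numbers still need a human read.

```python
import re

# Assumed cues for "this sentence contains a statistic".
STAT_PATTERN = re.compile(r"\d+(?:\.\d+)?\s*(?:%|percent|points?)", re.IGNORECASE)

# Assumed cues for "this sentence points at a source".
SOURCE_PATTERN = re.compile(
    r"https?://|\bstudy\b|\bpublished in\b|\baccording to\b", re.IGNORECASE
)


def unsourced_stat_sentences(text):
    """Return sentences that contain a statistic but no source cue."""
    # Split after sentence-ending punctuation followed by whitespace,
    # so decimals like "1.5" stay inside their sentence.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        s for s in sentences
        if STAT_PATTERN.search(s) and not SOURCE_PATTERN.search(s)
    ]


# Sample text built from this article's own example claims (not verified here).
sample = (
    "80% of back pain resolves with conservative care. "
    "A 2019 study published in JAMA found that spinal manipulation reduced "
    "pain intensity by 1.5 points on a 10-point scale. "
    "Chiropractic care improves quality of life."
)

flagged = unsourced_stat_sentences(sample)
print(flagged)
# ['80% of back pain resolves with conservative care.']
```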

- Run Schema Validator on Every Article URL — No schema means AI can't parse your content type or authority signals. That's a structural failure that excludes you from recommendations.

- Verify Author Attribution Matches Your About Page Exactly — Inconsistent author names or missing attribution tells AI there's no verified expertise behind your content.

- Search for Business Name, Address, Phone — Consistency Check — Every variation in how your business is mentioned creates entity confusion. AI doesn't know if those are the same entity or different businesses.

- Identify Unsourced Claims That Can't Be Verified — Generic statements AI can't cross-reference against institutional sources damage your entire entity's trust score.

Remove or Replace

Thin content isn't neutral. It's a liability.

Remove it or replace it with verified AEO articles.

Here's the decision framework: if an article fails two or more of the four trust checks above — kill it or replace it. Don't try to patch it. The structural damage is already done. AI has already marked it as untrustworthy.
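That rule is simple enough to write down as code, which helps if you're triaging a large content library in a spreadsheet or script. This is a minimal Python sketch with invented names (`TrustChecks`, `triage`). The two-or-more threshold comes from the framework above. The "review" outcome for a single failure is an assumption added here, since the framework doesn't say what to do in that case.

```python
from dataclasses import dataclass


@dataclass
class TrustChecks:
    """Results of the four trust checks for one article (True means it passed)."""
    has_schema: bool
    author_consistent: bool
    nap_aligned: bool
    claims_sourced: bool

    def failures(self):
        """Return the names of the checks this article failed."""
        return [name for name, passed in vars(self).items() if not passed]


def triage(checks):
    """Apply the rule: two or more failed checks means remove or replace."""
    failed = checks.failures()
    if len(failed) >= 2:
        return "remove or replace"
    if len(failed) == 1:
        return "review"  # assumption: the framework is silent on a single failure
    return "keep"


# Hypothetical audit results for three articles.
print(triage(TrustChecks(False, False, True, True)))   # remove or replace
print(triage(TrustChecks(True, True, True, False)))    # review
print(triage(TrustChecks(True, True, True, True)))     # keep
```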

Removing content won't hurt you. The myth that "more pages equal more visibility" died with traditional SEO. For AI, quality beats quantity by an order of magnitude.

Ten weak articles actively harm your entity trust. One verified article builds it.

If the topic is worth keeping, replace it with AEO content that passes all four checks. Schema markup. Verified authorship. Consistent NAP. Sourced, verifiable claims.

That content compounds authority. The weak content you're replacing was compounding invisibility.

The Diagnostic That Shows What AI Actually Sees

The AI Visibility Check runs your practice through ChatGPT, Gemini, and Grok in real time.

It shows you exactly what they say when someone asks who to trust.

Most practices think they're fine. They've published content. They have a website. They rank for some keywords.

Then they run the AI Visibility Check and see ChatGPT recommend a competitor. Gemini doesn't mention them at all. Grok says "I don't have enough verified information to recommend a specific practice."

That's the moment the problem becomes real. Not theoretical. Not something that might happen in the future.

Happening right now. Every time a patient asks AI who to trust, you're invisible.

The check takes 15 minutes. It's free. And it makes the cost of cheap content impossible to ignore.

FAQ

What is "entity trust" and why does it matter for AI recommendations?

Entity trust is AI's measure of confidence in your business's identity, expertise, and reliability. It's built from consistent, verifiable signals across the web — schema markup, author attribution, NAP consistency, factually validated claims, and internal linking that establishes topical authority.

When someone asks ChatGPT or Gemini "Who's the best chiropractor near me?" the engine evaluates every potential answer against its trust score. High-trust entities get recommended. Low-trust entities get ignored. It's that simple.

Cheap content creates inconsistent, unverifiable signals that erode entity trust. AI learns not to cite you. And once AI decides you're untrustworthy, reversing that perception takes 6-12 months of consistent verified content execution.

Can AI engines like ChatGPT tell the difference between expert content and cheap freelance content?

Yes. And it's not even close.

AI evaluates content for factual depth (claims cross-referenced against its knowledge graph), consistent attribution (verified author entity connected to credentials), and structural correctness (schema markup, NAP consistency, internal linking). Cheap freelance content fails all three checks.

A human might read cheap content and think it sounds fine. AI reads it and sees: no schema. No author entity. Generic claims it can't verify. Inconsistent business name mentions. No topical authority established through internal linking.

That's not "fine." That's a series of red flags telling AI your entity isn't trustworthy.

Isn't publishing more content, even if it's lower quality, better for visibility?

No. This is a myth from old SEO that's actively costing practices visibility in the AI era.

Traditional SEO said publish as much as possible. Get indexed. Rank for long-tail keywords. That logic worked when Google returned 10 blue links and users clicked through to compare options. Quality mattered less than volume.

AI search operates on the opposite logic. Quality and authority are paramount. AI gives one answer. If your content isn't trusted, you're not in that answer.

Publishing more weak content doesn't build authority — it actively dilutes it. Every thin article teaches AI not to trust your entity.

One verified, structurally sound article per month beats ten commodity blog posts. Because AI engines weight consensus. The practices they recommend aren't the ones publishing the most. They're the ones publishing the best.

How does Google's E-E-A-T framework relate to being recommended by AI?

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) is the blueprint for content quality that all AI answer engines use to decide whether your content is worth citing.

Google's Search Quality Rater Guidelines define E-E-A-T as the standard for evaluating content quality. But it's not just Google using this framework. ChatGPT, Gemini, Grok — every AI answer engine making recommendations evaluates content against the same criteria.

Does this content demonstrate real-world experience? Is there verifiable expertise behind it? Is the entity authoritative in this field? Can the claims be trusted?

Cheap content fails every single check. No verified authorship (no expertise signal). Generic claims that can't be cross-validated (no trust). Inconsistent entity signals (no authority). AI sees that and moves on to a competitor whose content does meet E-E-A-T standards.

How can I fix the damage to my entity trust from months of cheap content?

First — run a content audit to identify and remove thin, unverified articles. Use the four trust checks: schema markup present, author attribution consistent, NAP signals aligned, claims cross-verifiable. Any article failing two or more checks is a liability. Kill it or replace it.

Second — replace weak content with a consistent strategy of publishing high-depth, factually validated AEO articles. One verified article per month that passes all four trust checks builds entity trust faster than ten thin posts ever could.

Third — be realistic about the timeline. Entity trust damage takes 6-12 months of consistent execution to reverse. AI doesn't forget overnight. You're not going to publish three good articles and suddenly get recommended. You're rebuilding trust one verified claim, one consistent signal, one structurally sound article at a time.

But here's what I know: every month of verified content execution compounds. And the practices that stick with it stop being invisible. The ones that quit hand that authority to whoever kept going.

Why can't I just hire a better freelancer and fix this myself?

Because this isn't about hiring a better writer. It's about implementing a system that validates every claim through a two-AI verification process, maintains consistent schema and attribution across every article, and aligns content to your authority infrastructure.

That's not freelance work. That's methodology.

A better freelancer can write cleaner sentences. They might even source some claims. But they're not building schema markup. They're not connecting author entities to knowledge graph nodes. They're not running cross-validation checks against AI's knowledge base to ensure every claim is verifiable. They're not maintaining NAP consistency across your entire content library. And they're definitely not integrating every article into a semantic anchor system that establishes topical authority AI can recognize.

AEO content execution isn't writing. It's authority infrastructure. The practices that understand that difference stop being invisible. The ones that keep thinking this is just "better blog posts" stay stuck wondering why their phone doesn't ring.

How long does it take to reverse entity trust damage?

6-12 months of consistent verified AEO content execution.

That's not what you wanted to hear. I get it. But it's the truth. AI doesn't trust quickly. And once it's marked your entity as untrustworthy because of months of cheap content, it takes sustained, verified execution to prove you've changed.

Authority compounds monthly. Every verified article strengthens your position. Every article that passes schema checks, author attribution, NAP consistency, and factual validation tells AI you're getting more trustworthy. But it's a gradual rebuild. Not a switch you can flip.

The practices that stick with it see measurable trust improvement in 3-6 months. Consistent AI citations in 6-12 months. And compounding authority that drives bookings without ongoing ad spend after that.

The ones that quit after two months because "it's not working yet" hand that entire compounding advantage to competitors who kept going.

What's the difference between cheap content and AEO content execution?

We don't publish vibes. We publish receipts.

Every statistic sourced. Every claim verified. Gemini research. Claude writing. Gemini validation. Claude refinement. The content your freelancer produces isn't just thin — it's structurally unverifiable by the AI engines that decide whether to recommend you.

Cheap content: no schema markup, no author attribution, inconsistent NAP signals, generic claims AI can't cross-validate. Written once, published without verification, forgotten.

AEO content execution: schema markup on every article, verified authorship connected to your entity's knowledge graph node, NAP consistency enforced across your entire content library, every claim sourced and cross-validated against institutional sources AI trusts. Researched by one AI engine, written by another, validated again, refined, and integrated into your authority infrastructure as a semantic anchor that compounds topical authority.
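For readers who have not seen schema markup, one common way to express it is JSON-LD using the schema.org vocabulary. The sketch below builds an `Article` object with an author and a publisher whose name, address, and phone come from a single record, so every article emits the same details. All names, URLs, and business details are placeholders, and the helper function is hypothetical. It shows the general shape, not the exact markup any particular system produces.

```python
import json


def build_article_jsonld(headline: str, author_name: str, author_url: str,
                         business: dict) -> dict:
    """Build a schema.org Article object with author and publisher details."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name, "url": author_url},
        "publisher": {
            "@type": "LocalBusiness",
            "name": business["name"],
            "telephone": business["phone"],
            "address": {
                "@type": "PostalAddress",
                "streetAddress": business["street"],
                "addressLocality": business["city"],
                "addressRegion": business["region"],
                "postalCode": business["postal_code"],
            },
        },
    }


# Placeholder business record: one source of truth reused on every article.
business = {
    "name": "Example Chiropractic",
    "phone": "+1-555-010-0199",
    "street": "123 Main St, Suite 4",
    "city": "Springfield",
    "region": "IL",
    "postal_code": "62701",
}

markup = build_article_jsonld(
    "What to Expect at Your First Visit",
    "Dr. Jane Example",
    "https://example.com/about/dr-jane-example",
    business,
)

# The resulting JSON is typically embedded in the page inside a script tag.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Generating the markup from one business record, rather than typing it per article, is what keeps the author and NAP details from drifting between posts.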

The difference isn't "better writing." It's a system designed to pass every trust check AI uses to decide who to recommend. The practices whose content passes those checks own the AI recommendations. The practices whose content fails them stay invisible.

Conclusion

The most expensive content is the cheap content that makes you invisible.

Every month you publish weak articles, you're teaching AI not to trust your practice. The gap between you and the competitors AI does trust widens. And that gap isn't neutral — it's compounding.

They're building authority. You're building invisibility. Every month that dynamic continues, it gets harder to close.

This isn't a problem you can ignore until it feels urgent. The practices building entity trust today will own AI recommendations six months from now. The ones optimizing for cost will still be wondering why their phone isn't ringing, while competitors who invested in verified content execution are fully booked from AI citations they didn't pay for with ads.

There's no version of this where doing nothing works out. AI is already making recommendations in your market. Either your name is in the answer or a competitor's is. That decision gets made every time someone asks who to trust.

And if your content isn't passing AI's trust checks, the decision isn't going your way.

Want to know if cheap content has already damaged your entity trust? Run My AI Visibility Check. It takes 15 minutes and shows you exactly what ChatGPT, Gemini, and Grok say when someone asks who the best chiropractor in your area is. If the results don't make the problem self-evident — walk away. But if they do, you'll know exactly what needs to be fixed before another month of invisibility compounds the gap.