Word Count Doesn't Matter to AI. This Does.

Word count is the wrong metric for AI because answer engines don't measure value by length — they measure it by authority signals like semantic density, entity trust, and comprehensive intent coverage. While longer content often correlates with depth, AI prioritizes the structured, verifiable information within the content, not the container's size. Focusing on word count leads to shallow, diluted content that AI ignores.

When ChatGPT or Gemini decides who to recommend, it isn't counting paragraphs. It's scanning for entity verification, topical authority, and whether your content actually answers the question at multiple intent layers. The 1,500-word article you bought from a content mill? AI sees right through it. Not because it's too short or too long, but because it's empty calories dressed up as nutrition.

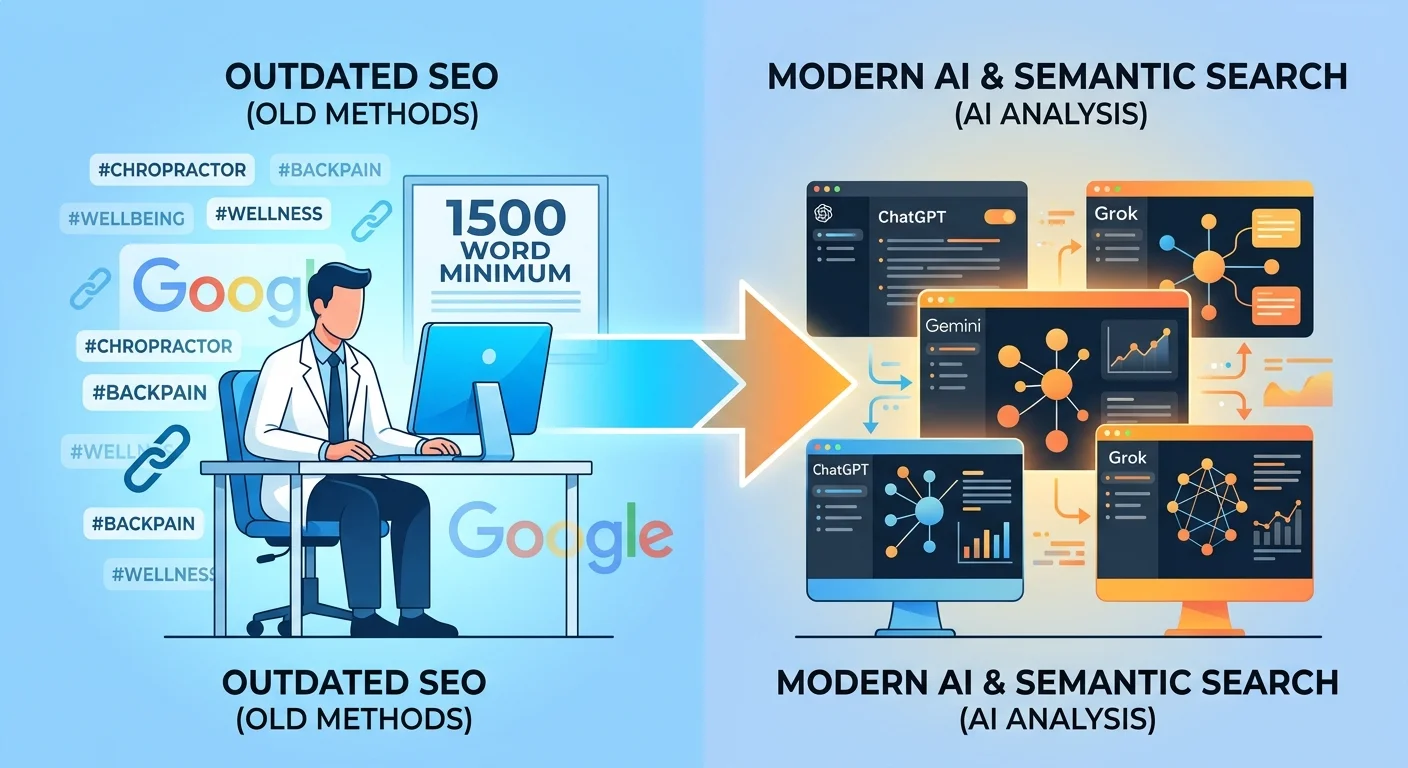

Here's what's actually happening: you've been sold a vanity metric from the old SEO playbook. Word count looked like it mattered when Google ranked pages largely on keyword density and backlink volume. That era is over. AI answer engines operate on a fundamentally different model. They don't care if you hit an arbitrary number. They care if you're the verified, trusted answer to the question being asked. And if your content infrastructure can't prove that, no amount of padding will fix it.

This article breaks down why word count fails in the AEO era, what metrics actually matter, and how to shift your content strategy from chasing numbers to building real authority that AI engines trust and recommend.

Last Updated: April 27, 2026

- Why Word Count Became the Default (And Why It's Wrong Now)

- What AI Answer Engines Actually Measure

- Semantic Density vs. Word Count: The Real Depth Metric

- Entity Trust: The Foundation AI Relies On

- The DIY Trap: Why "Just Writing More" Backfires

- Intent Coverage: The Framework Word Count Can't Deliver

- How the Two-AI Validation System Enforces Real Depth

- FAQ

- Conclusion

Why Word Count Became the Default (And Why It's Wrong Now)

The industry chased a number for years.

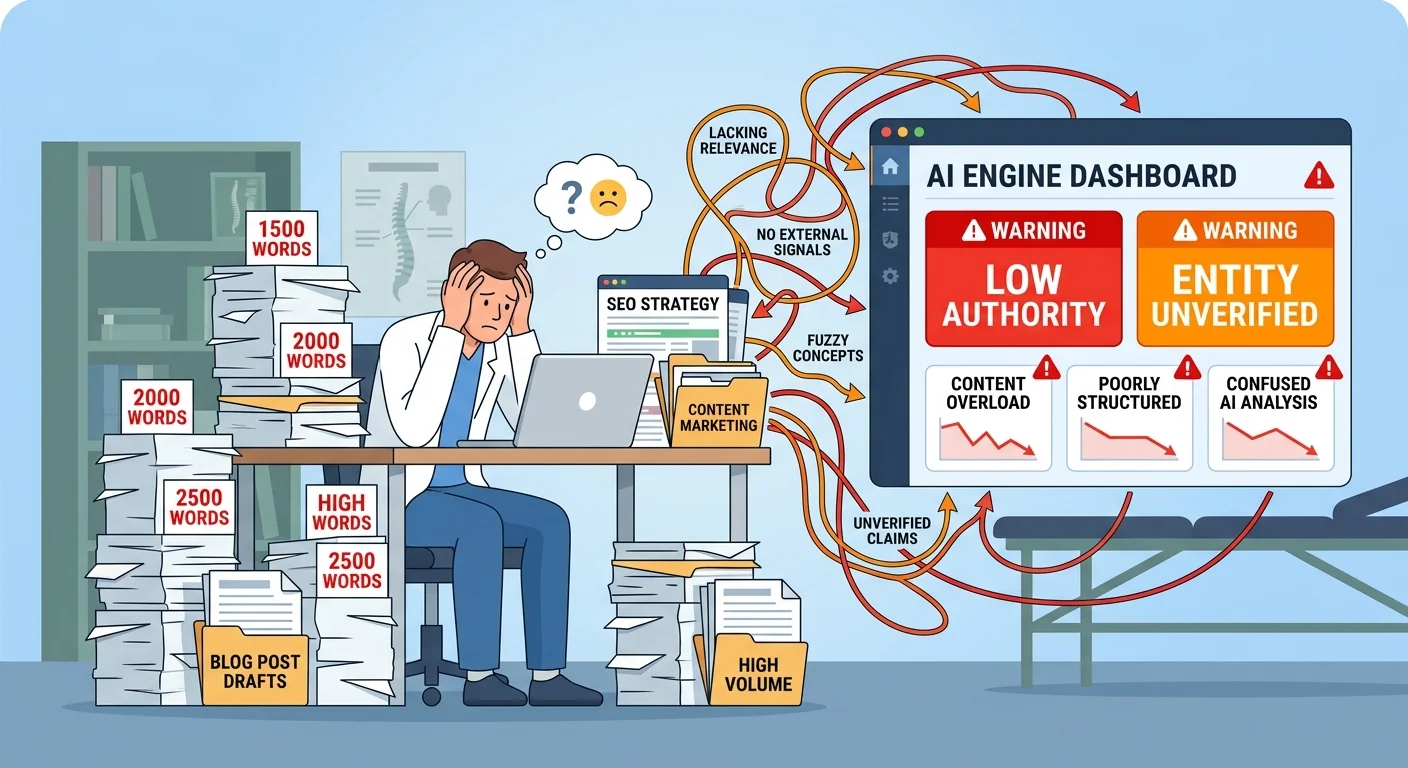

1,500 words. 2,000 words. 2,500 if you wanted to "dominate." Agencies priced around it. Content mills standardized it. Chiropractors paid monthly for AI Authority articles that hit the target and changed nothing.

The logic looked solid. Studies showed longer articles ranked higher. Backlinko analyzed 1 million Google search results and found the average first-page result sat around 1,890 words. The industry saw correlation and sold it as causation.

But here's the thing: word count was never the mechanism.

It was the byproduct.

Longer articles tended to rank better because they tended to cover topics more completely. The depth drove the result. The word count just came along for the ride.

The Correlation Trap

Saying word count causes rankings is like saying a big gas tank makes a car fast.

The tank supports the engine. It doesn't create the speed.

A 3,000-word article full of fluff and repetition loses to an 800-word piece that nails every intent layer, verifies every claim, and connects the semantic dots AI is scanning for.

HubSpot debunked the myth of magic word counts years ago. Their research showed content length correlates with topic coverage — not rankings directly. But the industry kept selling the shortcut anyway.

Because "1,500 words for $150" is easier to package than "semantic density validation."

Why AI Doesn't Count Words

The industry sold chiropractors "1,500-word articles" as a deliverable.

Content mills produced them. Practices paid. Traffic didn't move. Bookings didn't change.

The metric was the con.

AI engines don't count words. They parse entities. They verify claims. They measure semantic coverage and topical relationships. Google's documentation on how search works with structured data mentions entity recognition, schema markup, and conceptual relationships. Word count? Nowhere.

The algorithm can't see it.

What it can see: whether your content proves — through structure, citations, and entity signals — that you're the verified authority on the topic.

What AI Answer Engines Actually Measure

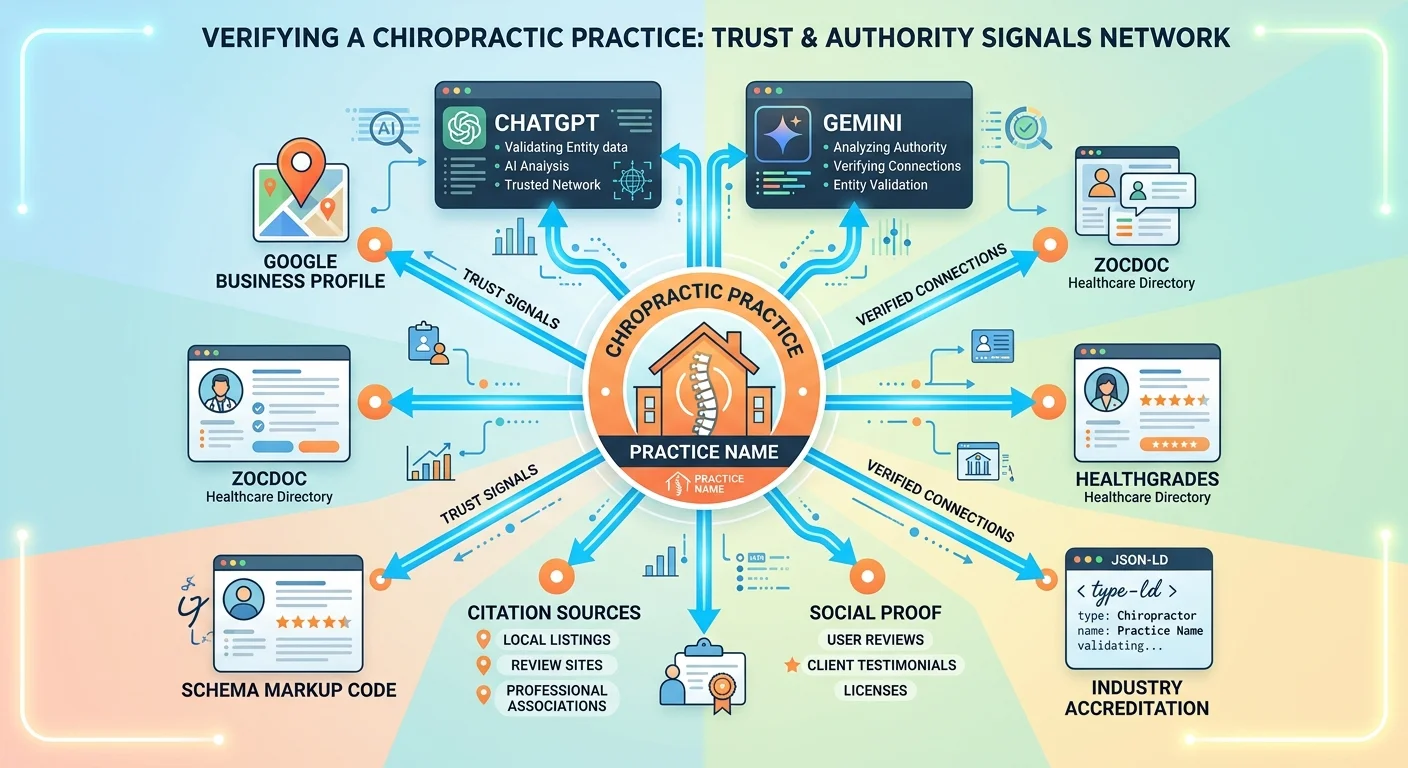

AI answer engines run on three core systems: entity recognition, semantic understanding, and trust verification.

Word volume doesn't appear on that list.

When ChatGPT evaluates whether to recommend your practice, it's not asking "How long is this?" It's asking "Who is this? Can I verify them? Do they understand the topic deeply enough to be trusted?"

Infrastructure answers those questions. Not paragraph count.

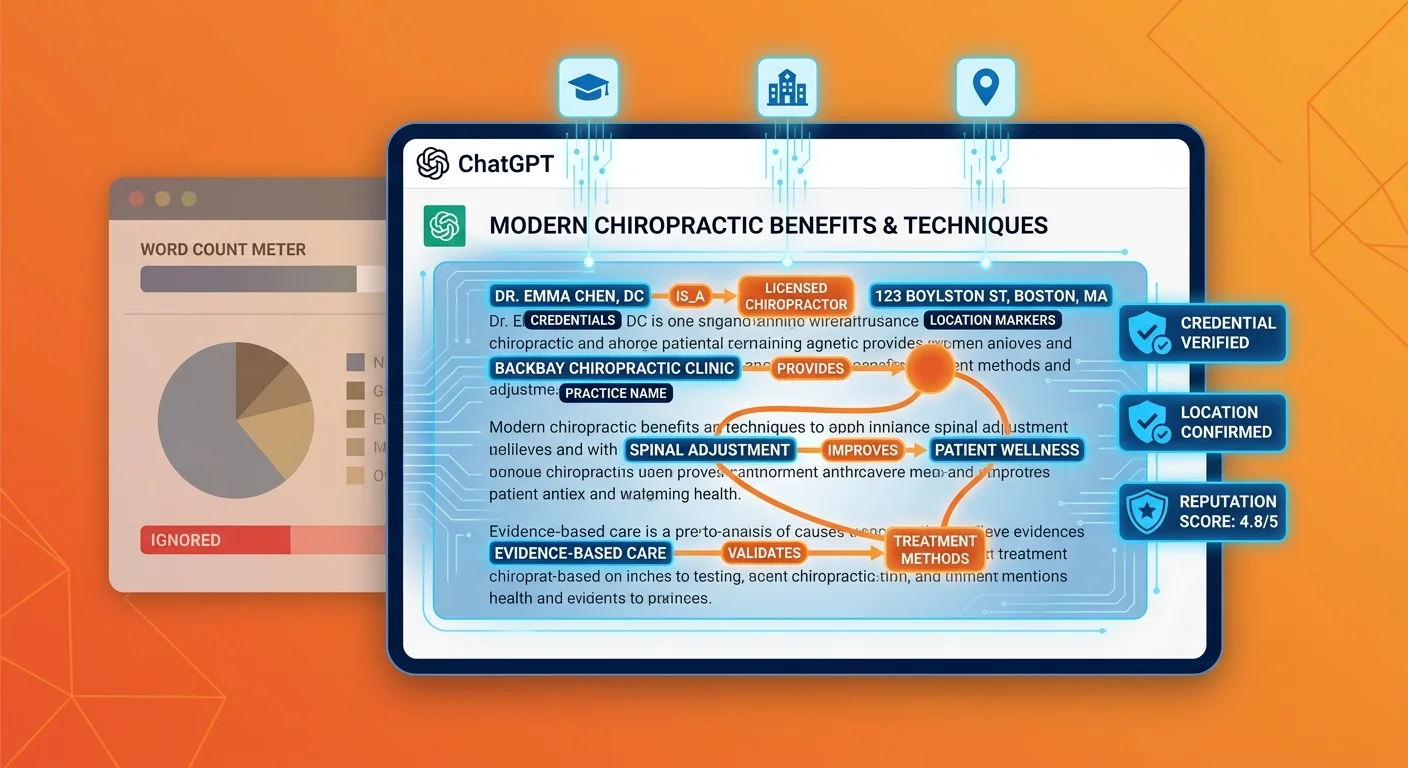

Entity Recognition and Verification

AI needs to know WHO you are before it says your name.

Schema markup tells AI what type of business you run. Structured data confirms your name, address, and phone number (NAP). Consistent NAP signals across Google Business Profile, healthcare directories, and citation sources validate that you're real and verifiable.

None of that comes from adding words.

It comes from authority infrastructure — the machine-readable foundation that tells AI you exist and can be trusted.
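To make "machine-readable foundation" concrete, here is a minimal sketch that generates schema.org JSON-LD in Python. The business details are placeholders, the helper name `build_business_jsonld` is made up for illustration, and `MedicalBusiness` is only one schema.org type that could fit a practice. Treat it as a starting point under those assumptions, not a complete markup spec.

```python
import json


def build_business_jsonld(name, street, city, region, postal_code, phone, url):
    """Return a JSON-LD string describing a business with schema.org vocabulary.

    Hypothetical helper: the field selection here is an assumption, not a
    checklist any answer engine publishes.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "MedicalBusiness",  # assumed type; pick the one that fits
        "name": name,
        "url": url,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values, not a real practice.
markup = build_business_jsonld(
    name="Example Chiropractic",
    street="123 Main St",
    city="Springfield",
    region="IL",
    postal_code="62701",
    phone="+1-555-010-0100",
    url="https://example.com",
)

# JSON-LD is typically embedded in the page inside a script tag like this.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Notice what the block contains: a type, a name, an address, a phone number. No paragraphs. No word count.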

Google's E-E-A-T documentation makes this explicit. Experience, Expertise, Authoritativeness, and Trustworthiness are the core quality signals. Word count? Still not on the list.

Semantic Relationships and Topical Authority

AI scans for how well you connect related concepts within a topic.

It's not "how many words about sciatica."

It's "do you connect sciatica to disc herniation, nerve impingement, spinal alignment, treatment protocols, patient outcomes, and alternative approaches?"

Depth is relational, not volumetric.

Semantic search evaluates context and relationships between words. A 600-word article hitting eight related subtopics with verified citations beats a 2,500-word piece restating three points five different ways.

AI can tell the difference.
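One rough way to audit your own draft for relational depth is to list the related subtopics you expect and check which ones the draft actually mentions. The sketch below does that with plain substring matching. It is an editor's checklist under simple assumptions, not a model of how any answer engine evaluates semantics, and the function name and sample draft are hypothetical.

```python
def subtopic_coverage(text, related_subtopics):
    """Split the expected subtopics into those the text mentions and those it misses.

    Uses case-insensitive substring matching, which is a deliberate
    simplification: it will miss synonyms and paraphrases.
    """
    lowered = text.lower()
    covered = [t for t in related_subtopics if t.lower() in lowered]
    missing = [t for t in related_subtopics if t.lower() not in lowered]
    return covered, missing


# Subtopics taken from the sciatica example above.
SCIATICA_SUBTOPICS = [
    "disc herniation",
    "nerve impingement",
    "spinal alignment",
    "treatment protocols",
    "patient outcomes",
    "alternative approaches",
]

# Hypothetical draft text for illustration.
draft = (
    "Sciatica often follows disc herniation, which can cause nerve impingement. "
    "Restoring spinal alignment is one goal of care."
)

covered, missing = subtopic_coverage(draft, SCIATICA_SUBTOPICS)
print("covered:", covered)
print("missing:", missing)
```

The missing list is the useful part. It tells you which connections the draft never makes, no matter how long it runs.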

Trust Signals and Citation Velocity

AI tracks how often your content gets cited, referenced, and validated by authoritative sources.

That's citation velocity.

A 600-word article cited by institutional sources like the CDC or peer-reviewed journals beats a 4,000-word piece living in isolation with zero external validation.

Entity trust builds through third-party verification. Not self-published volume.

Semantic Density vs. Word Count: The Real Depth Metric

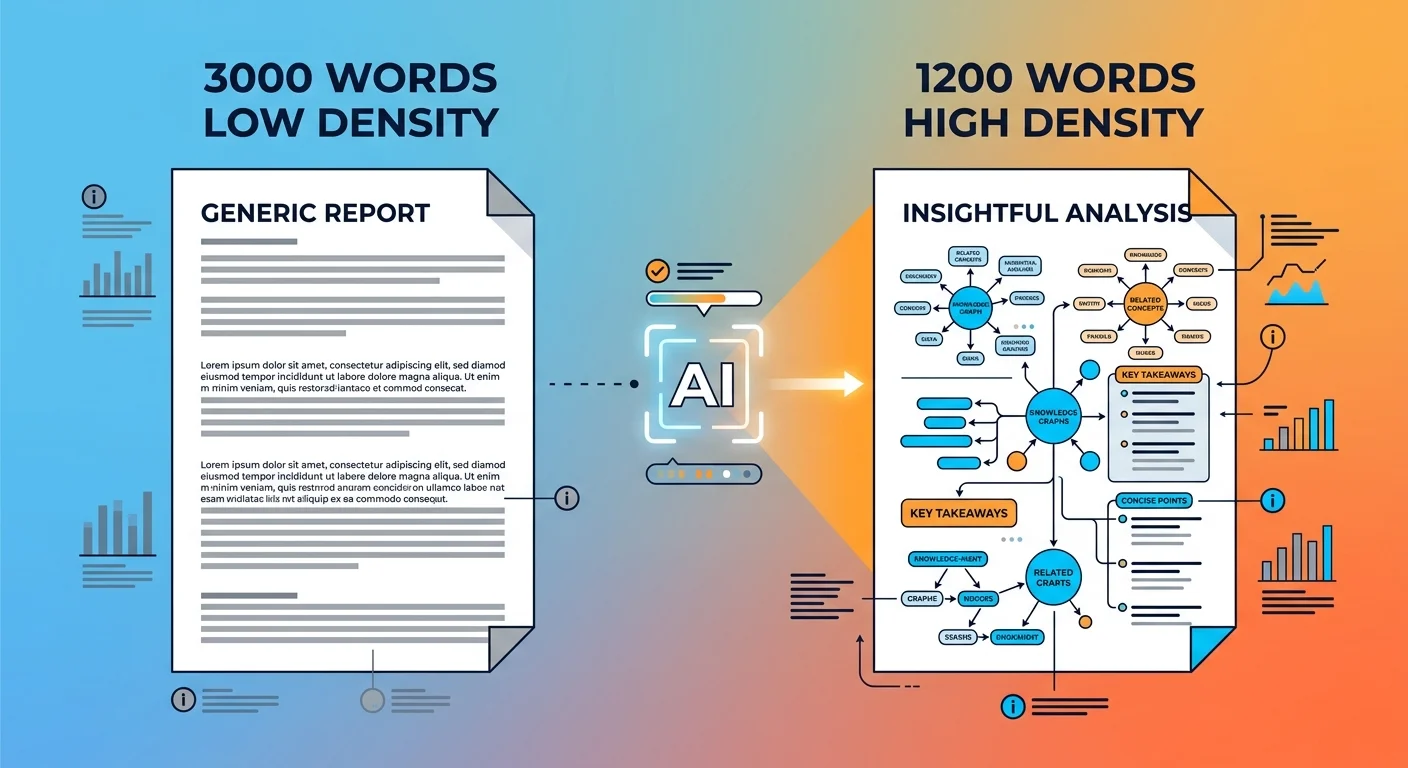

Semantic density is the amount of meaningful, verified, interconnected information per unit of content.

Picture two articles. The first is 3,000 words of fluff: repetition, generic advice, and the same point restated five ways just to hit a number.

The second is 800 words with high semantic density. Every paragraph introduces a new verified concept, connects to related topics, addresses a distinct intent layer, and cites institutional sources.

This is why commodity content packages promising "12 articles per month at 1,500 words each" fail. They're optimizing for the wrong metric. AEO Content Writing Services aren't sold by word count — they're sold by depth execution and validation infrastructure.

AI doesn't see the first scenario as "comprehensive." It sees noise.

| Metric | Low Density (3,000 words) | High Density (1,200 words) | What AI Sees |
|---|---|---|---|
| Unique concepts introduced | 4–6 | 12–15 | High density = topical authority |
| Intent layers addressed | 1–2 (Direct, maybe Indirect) | All 5 (Direct, Indirect, Latent, Counter, Post) | High density = comprehensive coverage |
| External sources cited | 0–2 (generic, non-institutional) | 6–8 (institutional, peer-reviewed) | High density = verification signals |
| Semantic connections made | Minimal (isolated points) | Dense (every concept connects to 2–3 related subtopics) | High density = relational depth |
| Entity verification | Weak or absent (no schema, no structured data) | Strong (schema, NAP consistency, citation network) | High density = trust signals |

How to Measure Semantic Density

Word count is easy to track. Semantic density requires actual analysis.

Here's what matters instead:

- Unique concepts per 100 words — How many distinct, non-redundant ideas does the content deliver?

- Intent layers addressed — Does it cover Direct, Indirect, Latent, Counter, and Post-Intent?

- Authoritative sources cited — How many institutional, peer-reviewed, or .gov/.edu sources validate your claims?

- Internal topic connections — How many related subtopics does the article link to semantically?

- Entities verified — Schema markup, structured data, consistent NAP signals present?

None of those care about word count.

All of them determine whether AI recommends you.
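The checklist above can be turned into a simple scorecard. The sketch below assumes an editor counts each item by hand and records it. The `DensityAudit` structure is hypothetical, and the sample numbers are illustrative picks from inside the ranges in the comparison table (the internal-link counts are invented outright), so read the output as arithmetic, not as measured data.

```python
from dataclasses import dataclass


@dataclass
class DensityAudit:
    """Hand-recorded counts for one article (hypothetical structure)."""
    word_count: int
    unique_concepts: int
    intent_layers_covered: int   # out of the five layers named above
    authoritative_sources: int
    internal_topic_links: int    # invented values in the samples below
    entity_signals_present: bool

    def concepts_per_100_words(self) -> float:
        """Unique concepts normalized by length."""
        return 100 * self.unique_concepts / self.word_count


# Illustrative values only.
padded = DensityAudit(3000, 5, 2, 1, 2, False)
dense = DensityAudit(1200, 14, 5, 7, 30, True)

for label, audit in (("padded", padded), ("dense", dense)):
    print(
        f"{label}: {audit.concepts_per_100_words():.2f} concepts per 100 words, "
        f"{audit.intent_layers_covered}/5 intent layers"
    )
# padded: 0.17 concepts per 100 words, 2/5 intent layers
# dense: 1.17 concepts per 100 words, 5/5 intent layers
```

With these sample numbers, the shorter article carries about seven times the concepts per 100 words. That is the gap word count hides.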

The Fluff Problem

AI is trained to identify low-value repetition.

Restating the same point three different ways to hit a word count target? AI flags that as low-density content.

It doesn't help your authority. It actively harms it.

Fluff dilutes the core message. It frustrates the user. And it signals to AI you don't actually have enough depth on the topic to justify the length.

The algorithm isn't stupid. It knows the difference between substance and padding.
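You can catch the most obvious padding yourself before you publish. The sketch below flags sentence pairs that share most of their words, using Jaccard similarity on word sets. To be clear about the assumptions: this is a crude self-audit, the 0.6 threshold is arbitrary, and nothing here claims to reproduce how an answer engine scores repetition.

```python
import re
from itertools import combinations


def sentence_tokens(sentence):
    """Lowercased set of words in a sentence."""
    return set(re.findall(r"[a-z']+", sentence.lower()))


def jaccard(a, b):
    """Shared words divided by total distinct words across both sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_near_duplicates(text, threshold=0.6):
    """Return index pairs of sentences whose word overlap meets the threshold."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    pairs = []
    for (i, s1), (j, s2) in combinations(enumerate(sentences), 2):
        if jaccard(sentence_tokens(s1), sentence_tokens(s2)) >= threshold:
            pairs.append((i, j))
    return pairs


# Hypothetical sample: the second sentence restates the first.
sample = (
    "Chiropractic care can help relieve back pain. "
    "Chiropractic care can help relieve pain in the back. "
    "The first visit usually includes a health history."
)

print(find_near_duplicates(sample))  # [(0, 1)]
```

If a draft lights up with flagged pairs, the word count is doing the talking, not the content.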

Entity Trust: The Foundation AI Relies On

Entity trust is how well AI can verify who you are, what you do, and whether you're qualified to be recommended.

It's built through structured data, third-party validation, and consistent identity signals.

None of those are affected by word count.

The AI Authority Engine doesn't start with content. It starts with infrastructure. Because if AI can't verify your entity, your content doesn't matter.

Why Infrastructure Beats Volume

You can publish 50 articles at 2,000 words each.

If your website has no schema, weak entity signals, and inconsistent NAP data across directories, AI won't trust you.

Content volume doesn't override infrastructure deficit.

This is why practices with "great content" still get ignored. The foundation is broken. The AI-readable infrastructure that tells AI who you are, where you are, and what you're qualified to do is either missing or contradictory.

AI sees the gap. And it moves on to a competitor whose entity signals are clean.
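Inconsistent NAP data is something you can check mechanically. Here is a minimal sketch that normalizes name, address, and phone across listings and reports which sources disagree with your website. The listing data is invented, the function names are hypothetical, and the phone handling assumes 10-digit US numbers. Real directories would also need abbreviation handling ("St" versus "Street") that this sketch leaves out.

```python
import re


def normalize_text(value):
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", value.lower())
    return re.sub(r"\s+", " ", cleaned).strip()


def normalize_nap(name, address, phone):
    """Reduce a listing to a comparable (name, address, phone) tuple."""
    digits = re.sub(r"\D", "", phone)[-10:]  # assumes 10-digit US numbers
    return (normalize_text(name), normalize_text(address), digits)


def find_nap_mismatches(listings):
    """Return the sources whose normalized NAP differs from the first listing."""
    reference = normalize_nap(*listings[0][1])
    return [
        source
        for source, nap in listings[1:]
        if normalize_nap(*nap) != reference
    ]


# Invented listings for illustration; the first entry is treated as the reference.
listings = [
    ("website", ("Example Chiropractic", "123 Main St.", "(555) 010-0100")),
    ("directory_a", ("Example Chiropractic", "123 Main St", "555-010-0100")),
    ("directory_b", ("Example Chiro Clinic", "123 Main St", "555.010.0100")),
]

mismatches = find_nap_mismatches(listings)
print(mismatches)  # ['directory_b']
```

Formatting differences wash out in normalization. A different business name does not, and that is the kind of contradiction worth fixing first.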

| Signal Type | What It Does | Word Count Relevance |
|---|---|---|
| Schema Markup | Tells AI what type of business you are, what services you offer, and where you're located | Zero: schema is code, not content |
| NAP Consistency | Confirms your Name, Address, and Phone number match across Google, directories, and citations | Zero: NAP is data validation, not article length |
| Healthcare Directory Presence | Validates you exist on Zocdoc, Healthgrades, and Vitals, platforms AI trusts | Zero: directory listings are external, not word-dependent |
| Citation Network | Shows how often institutional sources reference or validate your content | Indirect: citations reward depth, not length |
| Author Credentials | Verifies professional qualifications, degrees, certifications through structured data | Zero: credentials are entity attributes, not article metrics |

The DIY Trap: Why "Just Writing More" Backfires

You think this is about adding words to a page.

It's not.

Writing 2,000 words about "back pain treatment" doesn't build authority if you haven't mapped intent layers, verified entity signals, connected semantic relationships, or structured the content for machine readability.

The real work isn't typing. It's the strategy, validation, and infrastructure underneath.

If you think you can replicate that after reading a few articles, you're underestimating what's actually required.

AI will prove it by ignoring you.

Why More Words = More Problems (Without Strategy)

The rejected method: agencies selling commodity AI Authority article packages based on word count tiers.

"$150 for 1,000 words, $250 for 2,000 words."

That's not content strategy. That's renting a word factory.

The problem: without semantic mapping, intent coverage, and entity verification, longer content just means more surface area for AI to identify as low-value.

You're not building authority. You're building noise.

I've seen practices pay $1,200/month for "premium SEO content" — 12 articles, 1,500 words each, keyword-optimized, professionally written — and get zero movement in AI recommendations.

Not because the writers were bad. Because the entire framework was wrong.

The articles hit a word count. They didn't hit an intent map. They didn't verify entities. They didn't build citation velocity. They didn't connect semantic relationships.

AI didn't care that they were long. AI cared that they were shallow.

Intent Coverage: The Framework Word Count Can't Deliver

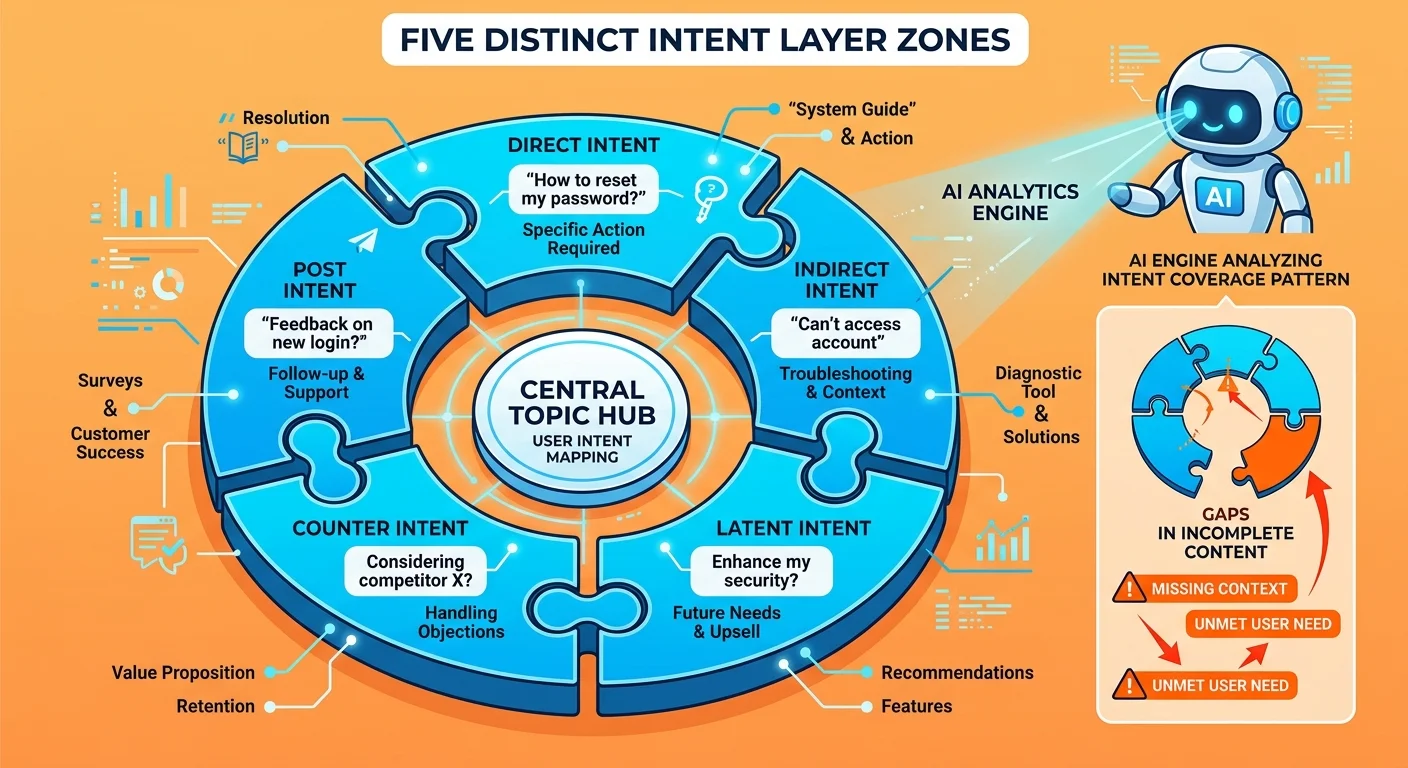

AI evaluates whether your content answers not just the literal question (Direct Intent), but the real goal behind it (Indirect Intent), the related considerations (Latent Intent), the reasons someone might NOT choose the answer (Counter-Intent), and what happens next (Post-Intent).

Word count doesn't guarantee you hit all five.

Most long-form content hits one or two and pads the rest.

Answer Engine Optimization (AEO) is built on this framework. If your content doesn't address every layer, AI sees the gap — even if the article is 4,000 words long.

| Intent Type | What It Addresses | Example for "Best Chiropractor Near Me" |
|---|---|---|
| Direct Intent | The literal question being asked | "Who are the top-rated chiropractors in this area?" |
| Indirect Intent | The real goal behind the question | "I need someone who can fix my back pain without surgery or drugs." |
| Latent Intent | Related considerations the user hasn't asked yet | "What's the first visit like? Do they take my insurance? How long does treatment usually take?" |
| Counter-Intent | Why they might NOT choose this answer | "Is chiropractic care safe? What if it doesn't work? Are there risks I should know about?" |
| Post-Intent | What happens after they get the answer | "How do I book? What should I bring? What should I expect in the first session?" |

Why Generic Long-Form Content Fails All Five Intents

Walk through a typical 2,500-word "chiropractic care" article from a content mill.

It answers Direct Intent. "What is chiropractic care? It's a hands-on approach to treating musculoskeletal issues..."

Maybe it touches Indirect Intent. "Why might you need it? If you're experiencing back pain, neck pain, or headaches..."

Then it repeats those two points in five different paragraph structures for the next 1,800 words.

Latent Intent? Ignored. The article never addresses insurance, costs, what the first visit looks like, or how long treatment takes.

Counter-Intent? Ignored. No discussion of risks, contraindications, or when chiropractic care isn't the right fit.

Post-Intent? Ignored. No guidance on booking, preparation, or realistic expectations.

AI sees the gap. The word count is irrelevant if the framework is incomplete.
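The walk-through above can be written down as a coverage check. In this sketch an editor tags each section of a draft with the intent layers it addresses, and the function reports what is missing. The tagging is manual, the third section heading is invented, and the `Intent` enum and `missing_intents` function are hypothetical names used only for illustration.

```python
from enum import Enum


class Intent(Enum):
    """The five intent layers described above."""
    DIRECT = "Direct"
    INDIRECT = "Indirect"
    LATENT = "Latent"
    COUNTER = "Counter"
    POST = "Post"


def missing_intents(section_tags):
    """Return the intent layers no section covers, in framework order.

    section_tags maps a section heading to the set of Intent values an
    editor judged that section to address.
    """
    covered = set()
    for intents in section_tags.values():
        covered |= intents
    return [intent for intent in Intent if intent not in covered]


# The content-mill article from the walk-through, tagged by hand.
content_mill_article = {
    "What is chiropractic care?": {Intent.DIRECT},
    "Why might you need it?": {Intent.INDIRECT},
    "Benefits of chiropractic care": {Intent.DIRECT, Intent.INDIRECT},  # invented heading
}

gaps = missing_intents(content_mill_article)
print([intent.value for intent in gaps])  # ['Latent', 'Counter', 'Post']
```

Adding a fourth section that restates the first two changes nothing in the output. Only a section that addresses a missing layer does.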

How the Two-AI Validation System Enforces Real Depth

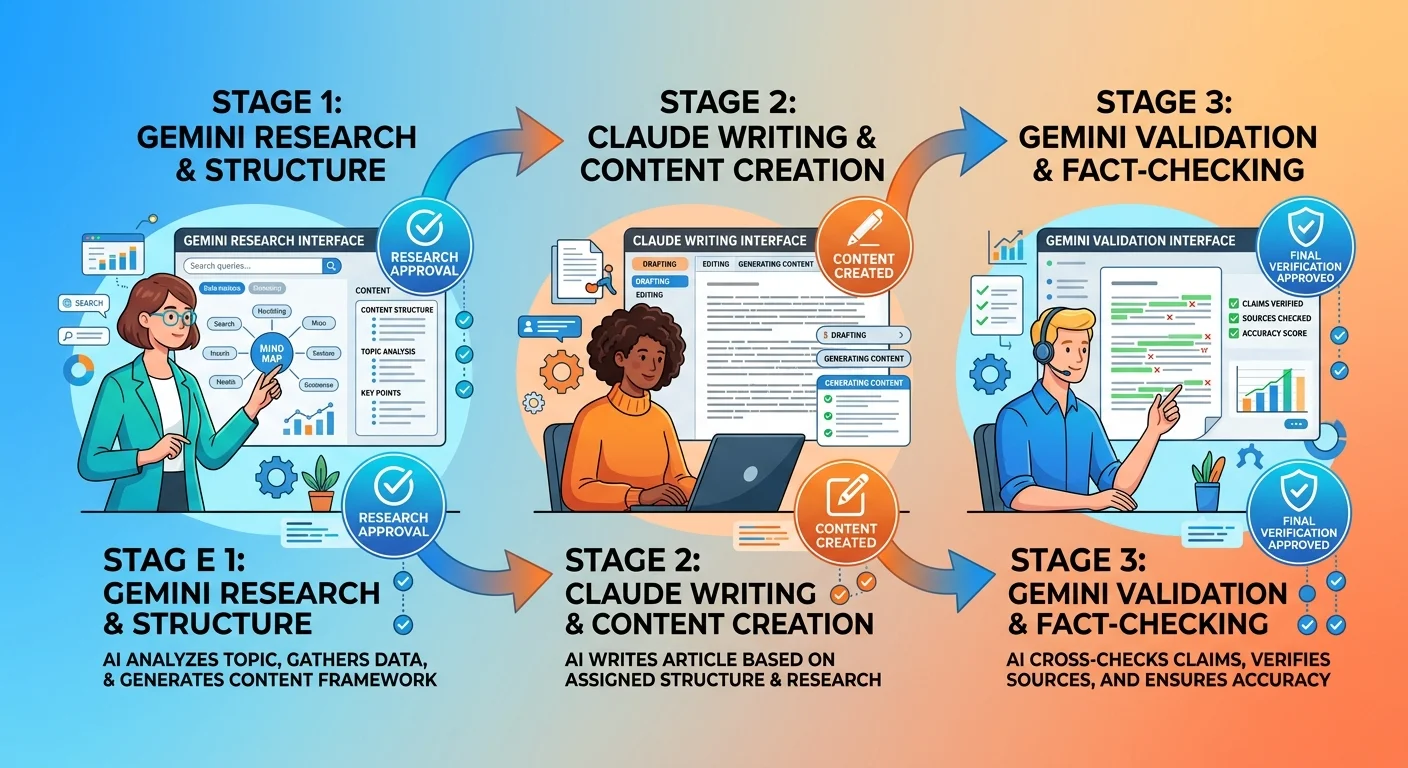

This is how iTech Valet writes AEO content.

Not by hitting a word count target. By running every article through a dual-validation loop.

Gemini researches the topic, identifies intent layers, maps external evidence, and builds the semantic blueprint.

Claude writes the article against that blueprint.

Then Gemini validates every claim, checks every source, and flags anything that can't be verified.

The output isn't "1,500 words." The output is "verified, comprehensive, and AI-readable."

The Two-AI Validation System doesn't let fluff through. If a claim can't be sourced, it gets cut. If an intent layer isn't addressed, the draft gets rejected. If the semantic density is weak, the article goes back for another pass.
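For readers who think in code, the shape of that loop looks roughly like the sketch below. This is not the actual system. The writer and validator are stand-in stub functions, no model API is called, and every name here (`Review`, `run_validation_loop`, `stub_write`, `stub_validate`) is hypothetical. It only illustrates the control flow: write against a blueprint, validate, and send the draft back until it passes or the pass limit is hit.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Review:
    """Validator output: what still blocks publication (hypothetical shape)."""
    unsourced_claims: List[str] = field(default_factory=list)
    missing_intents: List[str] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        return not self.unsourced_claims and not self.missing_intents


def run_validation_loop(blueprint, write, validate, max_passes=3):
    """Alternate writing and validating until the draft passes."""
    feedback = None
    for attempt in range(1, max_passes + 1):
        draft = write(blueprint, feedback)
        feedback = validate(draft, blueprint)
        if feedback.passed:
            return draft, attempt
    raise RuntimeError("draft still failing validation after max_passes")


def stub_write(blueprint, feedback):
    """Stand-in writer: the first pass skips Post-Intent, later passes fix feedback."""
    covered = ["direct", "indirect", "latent", "counter"]
    if feedback is not None:
        covered = covered + feedback.missing_intents
    return {"intents": covered, "claims": []}


def stub_validate(draft, blueprint):
    """Stand-in validator: rejects drafts that skip a blueprint intent layer."""
    missing = [i for i in blueprint["intents"] if i not in draft["intents"]]
    return Review(missing_intents=missing)


blueprint = {"intents": ["direct", "indirect", "latent", "counter", "post"]}
draft, passes = run_validation_loop(blueprint, stub_write, stub_validate)
print(passes)  # 2
```

The exit condition never mentions length. The draft ships when the review comes back clean, whatever the word count turns out to be.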

Why This Can't Be Replicated Manually

You could write 3,000 words in a weekend.

You can't manually cross-reference entity databases, verify citation accuracy across institutional sources, map semantic density, and ensure every intent layer is structurally addressed without the system.

That's the gap the DIY-er doesn't see.

The word count is easy. The validation infrastructure is not.

| Process Step | Manual Approach | Two-AI System | Result Quality |
|---|---|---|---|
| Topic Research | Google search, skim 3–5 articles, outline based on what feels relevant | Gemini analyzes institutional sources, maps intent layers, identifies semantic gaps | Manual = incomplete. Two-AI = comprehensive. |
| Claim Verification | Assume widely-repeated info is accurate | Gemini cross-checks every claim against Tier 1/2 sources, flags unsourced statements | Manual = hallucinations likely. Two-AI = verified only. |
| Semantic Mapping | Write what sounds good, hope it covers the topic | Claude structures content against Gemini's blueprint, addresses all 5 intent layers | Manual = random depth. Two-AI = systematic coverage. |
| Entity Integration | Mention practice name, maybe add a schema plugin | Schema pre-built, NAP verified, internal links semantically mapped | Manual = weak signals. Two-AI = machine-readable trust. |
| Final Validation | Self-edit for typos, publish | Gemini re-validates final draft, checks all sources, verifies intent coverage | Manual = hope. Two-AI = enforcement. |

FAQ

Does longer content still rank better on Google?

Longer content correlates with rankings because it tends to cover topics more comprehensively. The mechanism is depth, not length.

AI doesn't reward word count. It rewards complete, verified, intent-mapped content that happens to require more words to deliver.

Semrush's ranking factors study found top-ranking pages are often longer — but the study also found content depth, backlinks, and E-E-A-T signals were the primary drivers.

Length was a correlation. Not the cause.

What is a better metric than word count for AEO?

Semantic density. Entity trust. Intent coverage.

These are measurable.

Track how many unique concepts you introduce. How many intent layers you address. How many institutional sources you cite. How many entity signals you verify.

Word count is a byproduct, not the target.

How many words should an AI Authority article be?

As long as it needs to be to completely answer the user's question and cover all related sub-topics and intents.

Could be 800 words. Could be 4,000 words.

The number is irrelevant. The depth is everything.

If you finish covering all five intent layers in 900 words, publish 900 words. If it takes 3,500 to do it right, publish 3,500.

Letting the word count land naturally based on topic completeness is the strategy. Forcing it to hit a target is the mistake.

Can AI tell if content is just fluffed up to hit a word count?

Yes.

AI spots fluff. It's trained to flag low-density repetition the same way you'd spot a fake Rolex. The padding doesn't hide the mechanism — it exposes it.

Restating the same point three different ways to pad length actively harms your authority signals. AI sees fluff for what it is — noise that dilutes the core message.

Google's algorithm has been optimized for years to detect thin, low-value content. Answer engines like ChatGPT and Gemini operate on the same principle. They're not counting words. They're measuring information density.

If I stop focusing on word count, what should I focus on first?

Start by mapping the user's entire journey around a single topic.

What's the literal question? What's the real goal behind it? What related considerations haven't they asked about yet? What objections might stop them? What happens after they get the answer?

Cover all five intent layers. Let the word count land where it lands.

That's how you build content AI trusts.

Why do some chiropractors still see results from long-form articles?

They're confusing correlation with causation.

If a practice publishes a 3,000-word article and sees traffic increase, it's not because the article was 3,000 words. It's because the article happened to cover the topic comprehensively, which required 3,000 words.

The length didn't cause the result. The depth did.

Strip the depth out and keep the length, and the result disappears.

What happens if I keep paying for word-count-based content packages?

You're renting visibility you'll never own.

Content mills optimize for delivery speed and volume, not authority signals. You'll get articles. You won't get entity trust, semantic density, or AI recommendations.

The gap between you and practices running real AEO execution will widen every month.

That's not a scare tactic. That's the math.

Conclusion

Word count is a relic from an era when Google ranked pages by keyword density and backlink volume.

That system is dead.

AI answer engines operate on entity trust, semantic depth, and verified authority. You can chase the old metric and hope it works. Or you can build content the way AI actually measures it — by intent coverage, citation velocity, and infrastructure strength.

One approach keeps you invisible. The other makes you the answer.

There's no version of this where clinging to word count targets closes that gap.

The practices that figure this out first will own the AI recommendations in their markets. The ones that don't will keep wondering why their "great content" isn't moving the needle.

You've been sold the idea that "more words" equals better content. That if you just hit 1,500 or 2,000 or 2,500 words, AI will notice you.

It won't.

Because AI doesn't count paragraphs. It scans for entity signals, semantic relationships, and verified trust markers — none of which come from padding length.

Want to see what AI actually says when someone asks who to trust in your market? Run your AI Visibility Check. It takes 15 minutes and shows you exactly where you stand — not based on word count, but based on the authority signals AI actually uses to make recommendations.

If the results don't make the problem self-evident, walk away. But if they do? You'll know exactly what needs to be fixed — and it won't be adding another 500 words to your articles.