Why Your AI Authority Content Is Decaying (And How Quarterly Audits Fix It)

Your authority content is decaying right now. Not because you did something wrong, but because the AI models that used to cite you have moved past what your content reflects. That gap is "semantic drift": once-authoritative content no longer aligns with what answer engines trust. Left unaudited, the drift compounds. The facts become outdated. The entity signals weaken. The intent alignment breaks. And the AI engines that used to cite you as the primary answer stop recommending you entirely.

This isn't about keyword rankings dropping or traffic declining. Those are lagging indicators of a deeper problem. The real issue is authority decay — the slow erosion of trust signals that AI uses to determine whose name to say. When someone asks ChatGPT, Gemini, or Grok who the best chiropractor in their area is, they're asking a question that gets answered based on entity trust, factual accuracy, and semantic density. If your content no longer reflects what AI considers current and authoritative, you're not part of that conversation anymore.

Quarterly AEO audits prevent this decay. They identify outdated facts, broken entity signals, and misaligned intent before your competitors exploit the gap. Because here's what most practices don't realize: the businesses that audit quarterly aren't just maintaining their position. They're actively widening the authority gap while everyone else assumes their "evergreen" content is still working.

Here's the breakdown: why authority decays, what semantic drift really is, and how audits become an offensive strategy — not a chore. If you paid for authority content and assumed it would work indefinitely, this is the reality check that prevents that investment from becoming invisible.

Last Updated: April 27, 2026

The Authority Content Trap: Why "Evergreen" Is a Liability Now

Here's the trap most chiropractors fall into: you paid for content. You published it. The work's done.

That assumption worked when Google's algorithm changed twice a year and "authoritative" stayed relatively stable. Write once, rank forever, collect leads while you sleep.

AI search broke that model entirely.

The content you published six months ago was optimized for an AI model that doesn't exist anymore. Entity signals? Outdated. Semantic density? Misaligned. The model moved. Your content didn't. And every month it sits untouched, the gap widens.

This isn't theoretical. Practices dominating AI recommendations nine months ago are invisible today. Not because their content was bad. Because they thought "evergreen" still meant "permanent."

It doesn't.

The Set-It-and-Forget-It Assumption

The belief killing most authority investments: "I paid for the content. I published it. The work is done."

Made sense in the traditional SEO era. Once you ranked on page one, you could ride that position for months or years. The algorithm rewarded established content. Age was an advantage.

AI search inverts that logic.

AI prioritizes recency signals. Factual accuracy verified against current institutional sources. Semantic alignment with constantly evolving models. "Established" means nothing if your content no longer reflects what the model considers current and trustworthy.

I've watched practices invest $15k in authority infrastructure, launch a content library, see strong initial AI visibility — and then assume the system would maintain itself indefinitely.

Twelve months later? They're calling because their competitor is the one being named in AI responses. And they have no idea why.

The why is simple: they stopped maintaining what they built.

Authority compounds when you feed it. It decays when you don't.

The businesses dominating AI recommendations aren't the ones with the best original content. They're the ones auditing quarterly and fixing drift before it becomes invisibility.

Why AI Models Change Faster Than Your Content

ChatGPT, Gemini, and Grok undergo continuous updates.

Knowledge cutoffs shift. Training data expands. The criteria these engines use to evaluate trust and authority evolve with every iteration.

What was authoritative six months ago may not meet the current model's standards. A statistic cutting-edge in January is outdated by June. A treatment protocol aligned with best practices last quarter doesn't match this quarter's institutional guidance.

Your content doesn't update itself.

The model moves. Your content stays static. And every day that gap widens, you're one step closer to being the practice AI used to recommend.

This isn't about major model overhauls once or twice a year. According to Search Engine Journal, the shift is continuous. The engines train on expanding datasets that include newer research, updated professional standards, current entity information. If your content reflects last year's data, AI sees it as less trustworthy than content reflecting this quarter's data.

You're not competing against your competitor's website.

You're competing against your competitor's most recent content update.

| Factor | Traditional SEO Model | AEO Model Reality |
|---|---|---|
| Content Lifespan Assumption | 2-3 years before refresh needed | 3-6 months before semantic drift begins |
| Update Frequency | Annual or as-needed | Quarterly minimum to prevent authority decay |
| Decay Trigger | Algorithm update or competitor outranking | Model evolution and semantic misalignment |
| Consequence of Neglect | Gradual ranking decline over months | Sudden citation loss — AI stops naming you |

The False Security of Page Views

Your Google Analytics might show steady traffic.

That doesn't mean AI is still citing you.

Traffic metrics create a dangerous illusion. You're seeing visitors from old backlinks, branded searches, residual Google rankings from months ago. None of that tells you whether ChatGPT is still recommending you when someone asks for the best chiropractor in your area.

By the time traffic drops, authority decay has already progressed for months.

You're diagnosing the problem after the competitor took your spot. The damage is done. The rebuild takes longer than the maintenance would have.

I tell practices this all the time: traffic is a lagging indicator of AI authority. You lose the citation first. Then you lose the traffic.

The practices that wait for Analytics to show a problem before acting are the ones calling six months later asking why they're invisible — and discovering they're now 18 months behind the competitor who audited quarterly the entire time.

What Semantic Drift Actually Means (And Why It's Accelerating)

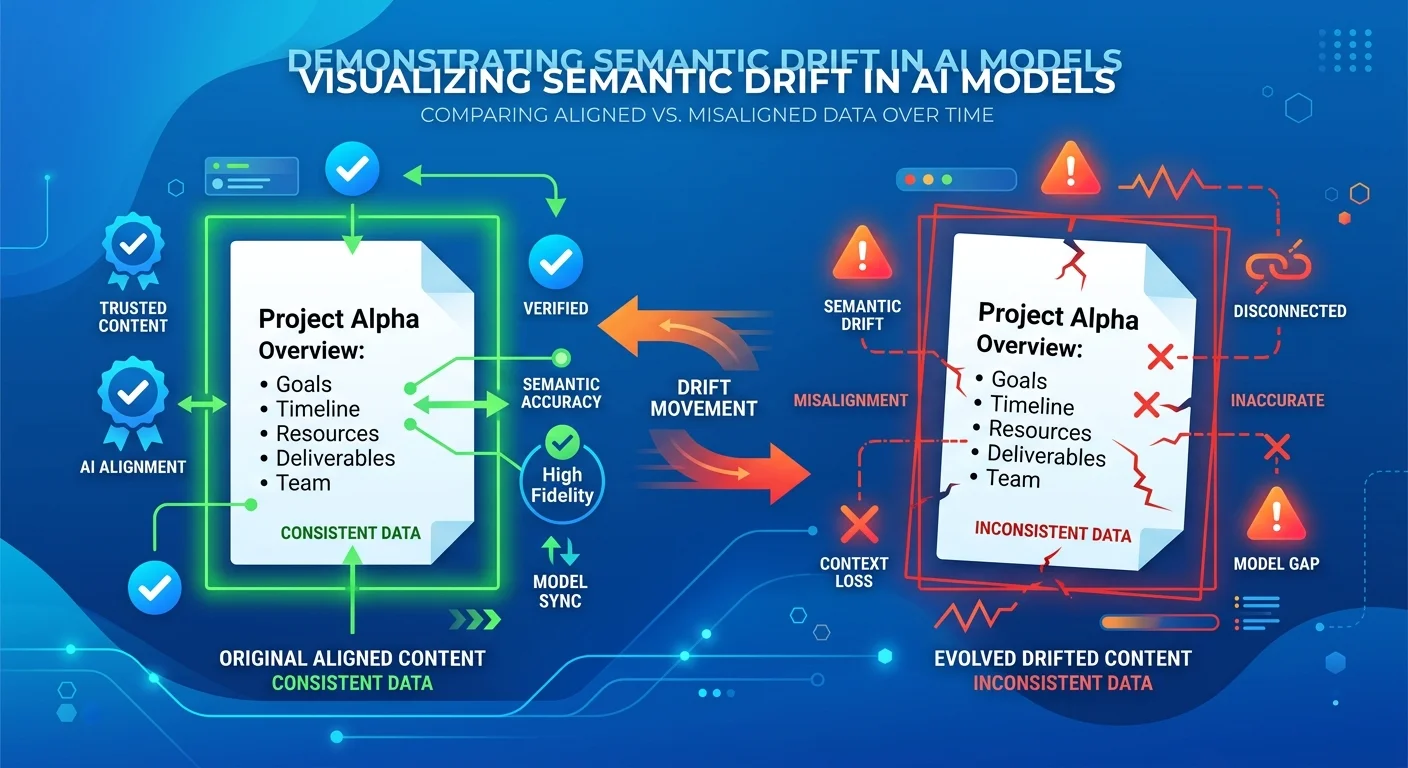

Semantic drift is the gap between what your content says and what AI engines currently consider trustworthy and accurate.

It's not about your content becoming factually wrong overnight.

It's about the meaning AI assigns to your topic shifting while your content stays static.

Here's the mechanism: AI models train on institutional datasets that evolve continuously. Medical guidelines get updated. Professional standards change. Research supersedes older studies. Regulatory language shifts. And when the model's understanding of "authoritative" evolves, content that doesn't reflect that evolution gets downgraded — even if it was perfect when published.

Your content doesn't decay because it's old.

It decays because the model's definition of "current and trustworthy" has moved past it.

And the practices that don't audit quarterly are building authority on a foundation that's eroding beneath them — while their competitors build on a foundation that's being actively maintained.

The Three Layers of Semantic Drift

Factual Drift is the obvious one.

Statistics change. Treatment protocols evolve. Regulations update. Professional certifications get revised.

If your content cites a 2022 study and a 2024 study has superseded it, AI sees your content as less authoritative — even if the core conclusion hasn't changed. The model prioritizes recency. Outdated citations signal that your content hasn't been maintained.

Entity Signal Drift happens when your business information changes but your content doesn't reflect those updates.

You added a new location. Updated certifications. Expanded service offerings. Hired a new associate. None of that reflected in your schema markup, entity descriptions, or content structure. AI sees inconsistency between what your content claims and what your entity signals confirm — and downgrades trust.
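One way to catch this kind of conflict is to compare every platform's copy of your name, address, and phone number against a single canonical record. This is a minimal sketch under stated assumptions: the `Listing` structure, the source labels, and the normalization rules (US-style 10-digit phone numbers, case and punctuation ignored) are hypothetical choices for illustration, not a standard tool.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    """One platform's copy of the practice's details (hypothetical structure)."""
    source: str
    name: str
    address: str
    phone: str


def normalize(field: str, value: str) -> str:
    """Strip cosmetic differences so only real conflicts remain."""
    if field == "phone":
        digits = re.sub(r"\D", "", value)
        # Assumes US numbers: drop a leading country code "1" from 11-digit values.
        return digits[-10:] if len(digits) == 11 and digits.startswith("1") else digits
    # Lowercase, remove punctuation, collapse runs of whitespace.
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


def find_conflicts(canonical: Listing, others: list[Listing]) -> list[tuple[str, str, str]]:
    """Return (source, field, value) for every field that disagrees with the canonical record."""
    conflicts = []
    for listing in others:
        for field in ("name", "address", "phone"):
            theirs, ours = getattr(listing, field), getattr(canonical, field)
            if normalize(field, theirs) != normalize(field, ours):
                conflicts.append((listing.source, field, theirs))
    return conflicts


if __name__ == "__main__":
    canonical = Listing("website", "Example Chiropractic", "123 Main St, Springfield", "(555) 010-0199")
    others = [
        Listing("maps", "Example Chiropractic", "123 Main St., Springfield", "+1 555-010-0199"),
        Listing("directory", "Example Chiropractic", "45 Oak Ave, Springfield", "555-010-0199"),
    ]
    print(find_conflicts(canonical, others))  # [('directory', 'address', '45 Oak Ave, Springfield')]
```

Normalizing first keeps cosmetic differences like "St." versus "St" from hiding the real conflict, a wrong street address on one directory. It does not treat "St" and "Street" as equal, so a real audit would need a fuller address normalizer.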

Intent Drift is the subtlest and most damaging.

The questions people ask evolve. The way AI interprets those questions evolves. And if your content was written to answer last year's version of the question, it no longer aligns with what AI considers the "correct" answer today.

This is why traditional content audits fail entirely.

They check whether your facts are wrong. They don't check whether your facts, entity signals, and intent alignment still match what AI trusts.

Semantic drift accelerates because, as Microsoft Research has documented, information has a half-life. Digital content loses relevance over time without intervention — and that decay is faster now than it's ever been.
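Intent drift is hard to measure directly, but a crude lexical proxy can show when the question your H1 answers has wandered away from how people phrase it today. This is a hypothetical sketch, assuming you have collected current question phrasings yourself (for example, from consultations or search query reports). Word overlap (Jaccard similarity) is a stand-in chosen for simplicity, not how AI engines interpret intent, and the 0.3 threshold and filler-word list are arbitrary.

```python
import re

# A few filler words ignored when comparing phrasings (arbitrary, hypothetical list).
STOP_WORDS = {"a", "an", "the", "is", "for", "to", "of", "in", "my", "do", "i", "can"}


def tokens(text: str) -> set[str]:
    """Distinct lowercase words in `text`, minus filler words."""
    return {w for w in re.findall(r"[a-z0-9']+", text.lower()) if w not in STOP_WORDS}


def jaccard(a: str, b: str) -> float:
    """Word overlap of two phrasings: shared words / all distinct words (0.0 to 1.0)."""
    ta, tb = tokens(a), tokens(b)
    if not ta and not tb:
        return 1.0
    return len(ta & tb) / len(ta | tb)


def intent_gap(h1: str, current_phrasings: list[str], threshold: float = 0.3) -> list[str]:
    """Current phrasings whose overlap with the H1 falls below `threshold`."""
    return [q for q in current_phrasings if jaccard(h1, q) < threshold]


if __name__ == "__main__":
    h1 = "Is chiropractic care safe for lower back pain?"
    phrasings = [
        "Is chiropractic safe for lower back pain",
        "Should I see a chiropractor or physical therapist after a sports injury",
    ]
    print(round(jaccard(h1, phrasings[0]), 2))  # 0.83
    print(intent_gap(h1, phrasings))
```

Because there is no stemming, "chiropractic" and "chiropractor" count as different words. Treat a low score as a prompt to reread the phrasing, not as a verdict.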

Why Traditional Content Audits Miss This Entirely

Traditional SEO content audits are built for an algorithm that doesn't exist anymore.

They check for broken links. Verify keyword density. Make sure your meta descriptions are optimized. Scan for duplicate content and confirm your images have alt text.

All surface-level stuff that assumes the ranking algorithm is still the mechanism determining visibility.

That entire framework is obsolete.

AEO audits don't check whether your content is optimized for Google's algorithm. They check whether AI engines trust your entity signals enough to cite you as the primary answer.

That requires reverse-engineering how ChatGPT, Gemini, and Grok establish confidence.

It means validating factual accuracy against the institutional sources AI models use for training. It means checking whether your semantic density still aligns with what Gemini validates as authoritative. It means testing whether your content answers the current version of the user's question — not the version from six months ago.

Traditional audits are checklists.

As Semrush notes in their content audit guide, AEO audits are diagnostics.

The first optimizes for rankings. The second optimizes for trust.

And the practices running traditional audits are measuring the wrong things entirely — which is why they don't see authority decay until it's already cost them months of visibility.

| Audit Focus Area | Traditional SEO Audit | AEO Audit | Why It Matters for AI |
|---|---|---|---|
| Factual Accuracy Verification | Rarely checked — assumed accurate at publish | Every claim cross-referenced against current institutional sources | AI downgrades content citing outdated or unverifiable data |
| Entity Signal Validation | Not included in most audits | Schema, business info, credentials checked for consistency | Entity trust erodes when signals conflict across platforms |
| Semantic Alignment | Not measured | Content tested against AI model responses to confirm alignment | Misalignment causes AI to cite competitors instead |
| Intent Mapping | Keyword-focused only | Validated against current user questions AI interprets | Intent drift makes content irrelevant to AI's answer logic |
| Citation Structure | Checks for broken external links | Validates source authority and recency against AI training standards | Weak or outdated sources reduce content authority by association |
| Source Trust Verification | Not prioritized | Institutional tier verification required | AI engines evaluate trust of sources — not just presence of citations |

The Acceleration Problem

Semantic drift used to be gradual.

In the traditional SEO era, you could audit content annually and stay ahead of decay.

Now? Quarterly is the new minimum.

AI model updates are accelerating. According to HubSpot's State of Marketing research, the volume of competing content is exploding. And the engines prioritize recency signals more aggressively than they did even six months ago.

Every practice that publishes AEO content is now in an active maintenance race.

The ones that audit quarterly compound their authority advantage. The ones that don't are standing still while the gap widens — and by the time they notice, they're 12 months behind.

This isn't a future trend.

It's the present reality.

The practices dominating AI recommendations right now are the ones that treat authority maintenance as a system — not a one-time project they can forget about.

The Quarterly Audit Framework: What Gets Checked and Why

A quarterly AEO audit is not a checklist.

It's a diagnostic system that reverse-engineers whether AI engines still consider your content trustworthy enough to cite.

Here's what that looks like in practice.

This is where the AI Authority Engine model separates from template-based content strategies. Authority isn't claimed once and maintained passively. It's built in layers — and those layers require active reinforcement every quarter to prevent semantic drift from eroding what you've already established.

The audit framework has four core components. Each one addresses a specific decay vector that causes AI engines to stop citing you as the primary answer.

Factual Accuracy Verification

Every statistic, study, treatment protocol, regulation, or professional standard cited in your content is checked against current authoritative sources.

If the data has been updated or superseded, the content is revised.

This isn't optional. AI engines cross-reference claims. Outdated facts don't just weaken individual articles — they erode entity trust across your entire content library.

- Statistics and research findings — Verified against institutional sources (NIH, CDC, peer-reviewed journals). If a newer study exists, the citation is updated.

- Professional standards and protocols — Checked against current licensing board guidelines, accreditation body updates, and regulatory changes.

- Treatment efficacy claims — Cross-referenced with the most recent clinical evidence. Even if the core claim remains valid, the supporting evidence must reflect current research.

- Business-specific data — Practice size claims, patient volume references, years in operation — all confirmed accurate as of the audit date.
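The re-verification step can be tracked with a simple claim log. This sketch assumes each claim records the date it was last confirmed against a current source (a hypothetical `Claim` record) and uses 12 months as the staleness cutoff, matching the red flag listed for factual accuracy later in this article. It flags stale claims and reports what share of an article they represent.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Claim:
    """One factual claim in an article (hypothetical audit record)."""
    text: str
    last_verified: date  # when it was last confirmed against a current source


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from `earlier` to `later`."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not complete yet
    return months


def stale_claims(claims: list[Claim], audit_date: date, max_age_months: int = 12) -> list[Claim]:
    """Claims not re-verified within `max_age_months` of the audit date."""
    return [c for c in claims if months_between(c.last_verified, audit_date) > max_age_months]


def stale_share(claims: list[Claim], audit_date: date, max_age_months: int = 12) -> float:
    """Fraction of an article's claims that are stale (0.0 for an empty article)."""
    if not claims:
        return 0.0
    return len(stale_claims(claims, audit_date, max_age_months)) / len(claims)


if __name__ == "__main__":
    audit = date(2026, 4, 27)
    claims = [
        Claim("Visit volume statistic", date(2026, 1, 15)),
        Claim("Guideline recommendation", date(2024, 11, 2)),
        Claim("Years in practice", date(2025, 4, 27)),
    ]
    print([c.text for c in stale_claims(claims, audit)])  # ['Guideline recommendation']
    print(f"{stale_share(claims, audit):.0%}")  # 33%
```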

The goal isn't to rewrite content from scratch every quarter.

It's to identify the 5-10% of claims per article that have drifted out of alignment with what AI currently validates as trustworthy — and fix them before the model downgrades your authority.

Entity Signal Validation

Your business information, credentials, service descriptions, and schema markup are validated for accuracy and completeness.

Entity signals decay when business details change but content doesn't reflect those updates. AI sees inconsistency between what your schema claims and what your content confirms — and downgrades trust.

- Schema markup accuracy — LocalBusiness, MedicalBusiness, and Service schema checked for current addresses, phone numbers, hours, and service descriptions.

- Credential and certification updates — New licenses, certifications, or professional memberships added to author bios and entity descriptions.

- Service offering alignment — If you've added or removed services, all content referencing your service menu is updated to maintain consistency.

- Staff and location changes — New providers, relocated offices, expanded facilities — all reflected in schema and content to prevent entity signal conflicts.

This is where the work of building entity trust either compounds or decays.

Consistent signals across every touchpoint strengthen AI confidence. Conflicting signals — even minor discrepancies — weaken it.
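One way to keep schema from drifting away from your content is to generate the JSON-LD from the same canonical record your site copy uses, so a new address or changed hours updates both at once. A minimal sketch, assuming a hypothetical `practice` dict whose keys are this sketch's own naming. The schema.org `MedicalBusiness` and `PostalAddress` types and the `openingHours` text format are real; the values are placeholders.

```python
import json


def medical_business_jsonld(practice: dict) -> str:
    """Render schema.org MedicalBusiness JSON-LD from one canonical practice record."""
    data = {
        "@context": "https://schema.org",
        "@type": "MedicalBusiness",
        "name": practice["name"],
        "url": practice["url"],
        "telephone": practice["phone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": practice["street"],
            "addressLocality": practice["city"],
            "addressRegion": practice["region"],
            "postalCode": practice["postal_code"],
        },
        # openingHours uses schema.org's text format, e.g. "Mo-Fr 08:00-18:00".
        "openingHours": practice["hours"],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder values; the dict keys are hypothetical, not a standard.
    practice = {
        "name": "Example Chiropractic",
        "url": "https://www.example.com",
        "phone": "+1-555-010-0199",
        "street": "123 Main St",
        "city": "Springfield",
        "region": "IL",
        "postal_code": "62701",
        "hours": ["Mo-Fr 08:00-18:00", "Sa 09:00-12:00"],
    }
    print(medical_business_jsonld(practice))
```

The returned string would go inside a `<script type="application/ld+json">` tag on the page. Checking the output in a validator such as the Schema Markup Validator before publishing is still worthwhile.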

Semantic Alignment Check

This is where AEO audits diverge entirely from traditional audits.

Semantic alignment measures whether your content still reflects the meaning AI assigns to your topic. It's validated by running the content through AI engines themselves and checking whether the response aligns with your positioning.

Here's the test: ask ChatGPT, Gemini, and Grok the question your article is supposed to answer.

If your business isn't named in the response — or if the answer provided conflicts with your content's framing — you've drifted out of semantic alignment.

- Question-answer validation — Your article's H1 question is tested against live AI responses. If the engines are now answering that question differently than your content does, intent has drifted.

- Terminology and language evolution — Professional language shifts. Terms fall out of favor. New phrasing becomes standard. If your content uses outdated terminology, AI sees it as less current.

- Topical relevance scoring — AI engines assign relevance scores based on how well content matches their training data. If your Semantic Density no longer aligns with what the model expects for your topic, you're being deprioritized.

Semantic alignment isn't something you can check with a keyword tool or a content grader.

It requires testing your content against the AI engines themselves — and adjusting based on how those engines currently interpret authority in your topic space.
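If you save the answers each engine gives to your article questions, tallying how often your practice is named turns the test into a number you can track. This sketch deliberately calls no AI API: it assumes you have pasted answers into a dictionary keyed by (engine, question), and the engine labels and business aliases are hypothetical. A question counts as a citation if any alias appears in the answer as a whole phrase.

```python
import re


def names_business(response: str, aliases: list[str]) -> bool:
    """True if any alias appears in the response as a whole phrase (case-insensitive)."""
    text = response.lower()
    return any(re.search(r"\b" + re.escape(a.lower()) + r"\b", text) for a in aliases)


def citation_report(responses: dict[tuple[str, str], str], aliases: list[str]) -> dict[str, float]:
    """Per-engine share of saved answers that name the business.

    `responses` maps (engine, question) to the answer text copied from that engine.
    """
    hits: dict[str, list[bool]] = {}
    for (engine, _question), answer in responses.items():
        hits.setdefault(engine, []).append(names_business(answer, aliases))
    return {engine: sum(flags) / len(flags) for engine, flags in hits.items()}


if __name__ == "__main__":
    aliases = ["Example Chiropractic", "Dr. Jane Doe"]
    responses = {
        ("chatgpt", "best chiropractor near me"): "Top picks include Example Chiropractic and Main Street Spine.",
        ("chatgpt", "chiropractor for sciatica"): "Consider Main Street Spine.",
        ("gemini", "best chiropractor near me"): "Dr. Jane Doe at a local clinic is well reviewed.",
    }
    print(citation_report(responses, aliases))  # {'chatgpt': 0.5, 'gemini': 1.0}
```

AI answers can vary from one run to the next, so asking each question several times and averaging gives a steadier rate than a single sample.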

Citation Structure and Source Trust

External sources cited in your content are checked for continued authority.

If a source has been updated, retracted, or replaced by more authoritative research, the citation is updated. AI engines evaluate the trust of your sources — if you're citing outdated or weak sources, your content's authority suffers by association.

- Source recency validation — Citations checked against publication dates. If newer research exists on the same topic, the citation is upgraded.

- Institutional trust verification — Every external link validated against AI's source tier hierarchy (.gov, .edu, peer-reviewed journals prioritized over industry blogs and tools).

- Retraction and correction scanning — Studies cited in content checked for retractions, corrections, or contradictory follow-up research.

- Citation density balance — Too few citations signal weak authority. Too many signal keyword stuffing. The ratio is recalibrated quarterly to match AI's current expectations.
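The recency and tier checks can be sketched in a few lines. This is an illustration under stated assumptions: the three-tier ranking, the short list of journal hosts, and the 18-month cutoff (taken from the red flags listed for citation trust later in this article) are hypothetical choices, not a published ranking that AI engines use. Retraction scanning would need an external retraction database and is left out.

```python
from datetime import date
from urllib.parse import urlparse

# Hypothetical tier-2 hosts; extend with the journals you actually cite.
JOURNAL_HOSTS = {"jamanetwork.com", "thelancet.com", "bmj.com"}


def source_tier(url: str) -> int:
    """Rank a citation's domain: 1 = .gov/.edu, 2 = listed journal host, 3 = everything else."""
    host = (urlparse(url).hostname or "").lower()
    if host.endswith((".gov", ".edu")):
        return 1
    if any(host == j or host.endswith("." + j) for j in JOURNAL_HOSTS):
        return 2
    return 3


def months_old(published: date, audit_date: date) -> int:
    """Approximate age in calendar months (day of month ignored)."""
    return (audit_date.year - published.year) * 12 + (audit_date.month - published.month)


def audit_citation(url: str, published: date, audit_date: date, max_age_months: int = 18) -> list[str]:
    """Return red flags for one citation; an empty list means it passes both checks."""
    flags = []
    if source_tier(url) == 3:
        flags.append("weak-tier domain")
    if months_old(published, audit_date) > max_age_months:
        flags.append(f"older than {max_age_months} months")
    return flags


if __name__ == "__main__":
    audit = date(2026, 4, 27)
    print(audit_citation("https://example-chiro-blog.com/post", date(2024, 1, 10), audit))
    # ['weak-tier domain', 'older than 18 months']
```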

The practices that dominate AI recommendations aren't just citing sources.

They're citing the right sources — the ones AI engines trust most — and updating those citations quarterly to maintain authority.

As BrightEdge defines it, content decay isn't just about your content aging. It's about the sources your content relies on aging — and AI engines noticing that lag.

| Audit Component | What Gets Checked | Red Flag Indicators |
|---|---|---|
| Factual Accuracy | Statistics, studies, protocols, regulations verified against current institutional sources | Data cited is more than 12 months old with no recent confirmation; studies cited have been superseded |
| Entity Signals | Schema markup, credentials, service descriptions, business info consistency across platforms | Conflicting addresses, outdated certifications, service menu mismatches between schema and content |
| Semantic Alignment | Content tested against live AI responses to confirm positioning matches current model interpretation | Business not named in AI responses; article framing conflicts with how AI currently answers the question |
| Citation Trust | External sources validated for recency, institutional authority, and absence of retractions | Sources older than 18 months; citations from weak-tier domains; studies retracted or contradicted |
| Intent Mapping | H1 question validated against current user search patterns and AI interpretation of intent | Question phrasing no longer matches how users ask; AI interprets intent differently than article addresses |
| Schema Validation | LocalBusiness, MedicalBusiness, Service schema checked for completeness and accuracy | Missing required fields; outdated values; conflicts between schema types across pages |

Authority Decay vs. Content Decay: They're Not the Same Thing

Most businesses conflate these two concepts.

That confusion costs them visibility.

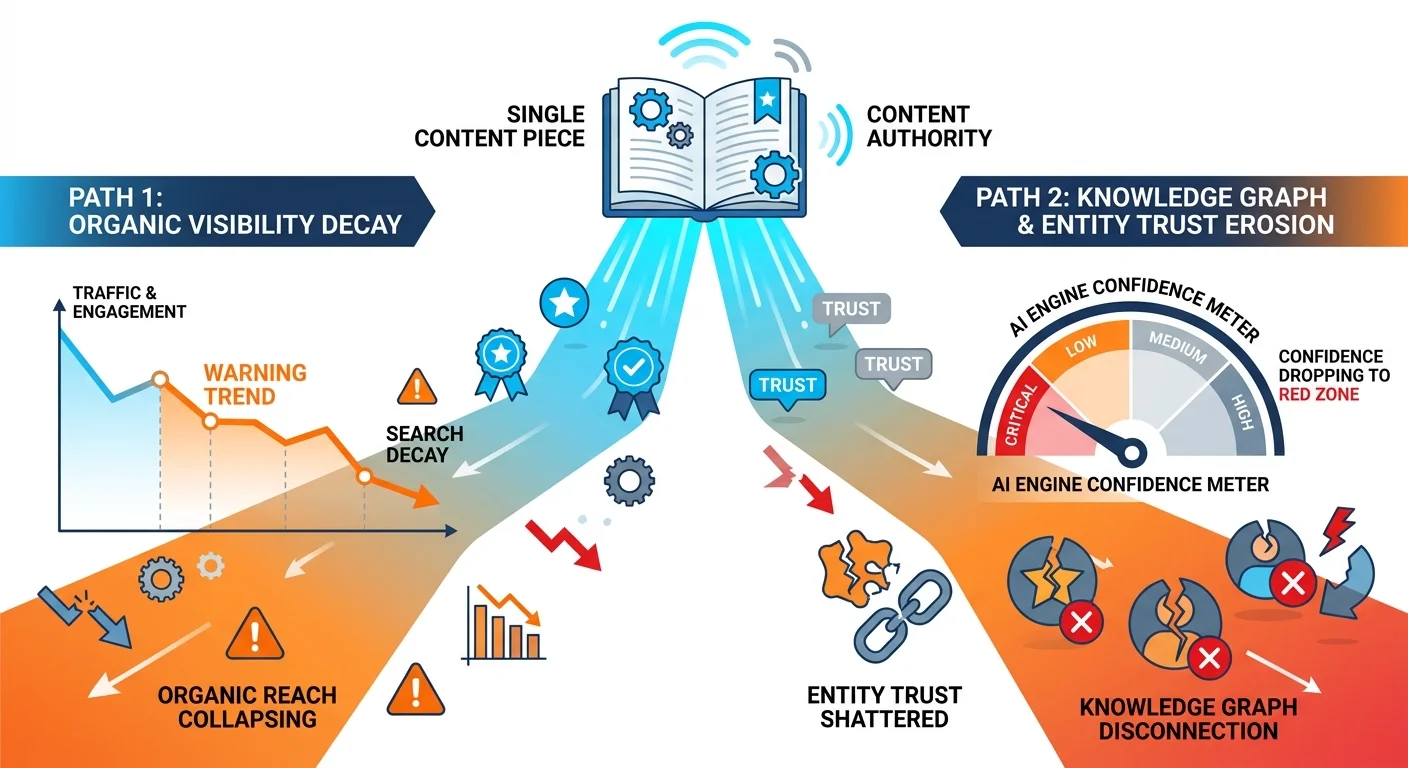

Content decay is what happens when traffic drops. Authority decay is what happens when AI stops citing you.

The second precedes the first by months.

By the time you see content decay in Google Analytics, authority decay has already progressed to the point where AI engines have replaced you with a competitor in their recommendations.

You're diagnosing the symptom after the disease has run its course.

The practices that dominate AI recommendations understand this distinction. They don't wait for traffic to drop before acting. They audit quarterly to catch authority decay before it becomes content decay — because by the time you see the traffic impact, you've already lost 6-12 months of compounding authority to whoever kept auditing while you stood still.

Content Decay: The Symptom

Content decay is measurable through traditional metrics.

Traffic declines. Rankings drop. Engagement falls. Conversion rates weaken. All the signals you'd see in Google Analytics or Search Console that tell you something is wrong.

It's visible. It's quantifiable.

And it's too late.

Content decay is what happens after authority decay has already caused AI engines to stop recommending you. The traffic drop is a lagging indicator of a trust problem that started months earlier.

- Traffic from AI search declines — AI answers stop sending referral visitors because they no longer name you.

- Branded search volume weakens — Fewer people search for your business name because they're not hearing it from AI engines.

- Conversion rates fall — The visitors you do get are less qualified because they're not coming from AI recommendations that pre-sold your authority.

- Competitor mentions increase — Patients start asking about competitors by name during consultations — because that's who ChatGPT told them to see.

Content decay is real.

But it's a symptom, not a cause. Treating it reactively is like taking aspirin for appendicitis. You're addressing pain while ignoring the underlying problem.

Authority Decay: The Cause

Authority decay is invisible to traditional analytics.

It's the erosion of entity trust. The weakening of semantic alignment. The breakdown of citation structure. The drift between what your content claims and what AI currently validates as authoritative.

AI engines stop recommending you before your traffic drops.

The authority decay timeline runs 6-12 months ahead of the content decay timeline. By the time Analytics shows you a problem, competitors have already taken your spot — and rebuilding takes longer than maintenance would have.

- Entity trust signals weaken — Outdated credentials, inconsistent business information, and conflicting schema markup reduce AI confidence in your authority.

- Semantic alignment breaks — Your content no longer reflects how AI currently interprets the questions you're trying to answer.

- Citation trust erodes — The sources you cited six months ago are now outdated or have been replaced by stronger institutional research.

- Intent mapping drifts — The way users phrase questions evolves, and your content no longer matches the current version of those questions.

Authority decay doesn't show up in traffic reports.

It shows up when you ask ChatGPT who the best chiropractor in your area is — and it names your competitor instead of you.

That's the diagnostic that matters. And by the time you run it reactively, the damage is done.

The audit catches authority decay before it becomes content decay.

That's the entire point. Building Entity Trust isn't a one-time project. It's a system that requires quarterly reinforcement — or it erodes while you're not looking.

Why Waiting for Traffic Drops Guarantees Invisibility

Here's what happens when you wait for traffic to drop before acting.

Your content is published. Authority signals are strong. AI engines cite you frequently. Traffic grows. Everything looks good.

Six months pass. You don't audit. Semantic drift begins. Facts become outdated. Entity signals weaken. Intent alignment breaks. AI confidence in your content drops — but you don't see it yet because traffic is still steady from residual backlinks and branded searches.

Nine months pass. AI engines stop citing you in new recommendations. Competitors who audited quarterly have overtaken you. But traffic is still holding because of lag. Analytics looks fine. You assume everything is working.

Twelve months pass. Traffic finally drops.

You investigate. You discover AI is now naming your competitor in every response where it used to name you. You realize the problem started six months ago — but you're only seeing it now.

You call for an audit. The damage is quantified. Outdated facts, broken entity signals, semantic misalignment across your entire content library. The fix takes three months and costs more than quarterly audits would have cost for the entire year.

And during those three months, your competitor — who never stopped auditing — widens the gap even further.

That's not a hypothetical timeline.

That's the pattern I've watched play out with practices that assumed "evergreen" meant permanent.

If you're waiting for traffic to drop before you act, you're not being cautious. You're choosing to let authority decay while competitors who audit quarterly exploit that vulnerability.

Quick pause. If you think authority is a one-time build, you're in the wrong place. This is ongoing maintenance, quarterly and non-negotiable, because semantic drift keeps eroding what you've built between audits.

If that doesn't fit your decision framework, no hard feelings.

But if you're tired of watching competitors take spots you used to own — and you're willing to treat authority as a system instead of a one-time project — you're in the right place.

The practices that dominate AI recommendations aren't hoping their content stays relevant.

They're auditing quarterly to make sure it does.

| Quarter | Authority Signals | AI Citation Behavior | Traffic Impact |
|---|---|---|---|
| Quarter 1 | Content published, entity signals strong, semantic alignment validated | High citation rate — business named in most relevant AI responses | Traffic growing, conversion rates strong |
| Quarter 2 | No audit performed, semantic drift begins, facts aging, entity signals weakening | Citation rate declining — AI starting to prefer competitors with fresher content | Traffic plateaus, still looks stable in Analytics |
| Quarter 3 | Drift accelerating, outdated data conflicts with AI training, intent misalignment | AI rarely cites business — competitors dominate recommendations | Traffic beginning to decline, slower conversion |
| Quarter 4+ | Authority fully decayed, content seen as outdated, entity trust eroded | Zero citations — AI exclusively recommends competitors | Traffic dropped 40-60%, branded searches declining |

Frequently Asked Questions

What's the difference between a traditional SEO content audit and an AEO audit?

Traditional SEO audits focus on keyword rankings, backlink health, and on-page optimization. They check whether your title tags are correct, your meta descriptions are compelling, and your internal linking structure is sound. All surface-level signals designed to help you rank on Google's search results page.

AEO audits focus on entity trust, factual accuracy verified against institutional sources, semantic alignment with AI model expectations, and citation structure that AI engines use to validate authority.

The former optimizes for an algorithm that ranks pages. The latter optimizes for machines that think — and decide whose name to say when someone asks a question.

An SEO audit tells you if you're ranking.

An AEO audit tells you if AI trusts you enough to recommend you.

Those aren't the same thing.

And in a world where zero-click searches are replacing traditional search results, optimizing for rankings is optimizing for a mechanism that's being replaced.

How often do AI models like Gemini and ChatGPT actually update?

Major model updates occur periodically — GPT-4 to GPT-4.5, Gemini 1.0 to Gemini 1.5. Those are the ones that get announced and covered in the press.

But the underlying training data and evaluation criteria evolve continuously.

Knowledge cutoffs shift. The way these engines assess trust changes subtly but constantly. The models are learning from an expanding dataset that includes newer research, updated professional standards, and current entity information.

This creates semantic drift.

Your content stays static while the model's definition of "authoritative" moves. What was perfectly aligned in January may be subtly misaligned by June — and significantly misaligned by December.

Quarterly audits track this drift before it becomes authority decay. By the time a major model update is announced, the practices auditing quarterly have already adjusted their content to match the direction the training data was heading.

They're ahead of the shift — not reacting to it.

Can I perform an AI content audit myself?

You can check basic facts with a checklist. You can update statistics and fix broken links. You can verify your business hours are correct in your schema markup.

You cannot reverse-engineer entity trust validation or semantic alignment without specialized tools and an understanding of how AI models establish confidence.

This isn't "reading through your blog posts and updating old statistics."

It's a diagnostic process that measures signals invisible to traditional analytics.

- Entity trust scoring — Requires testing how AI engines interpret your business information across multiple platforms and identifying conflicts.

- Semantic alignment validation — Requires running your content through AI models and analyzing whether their responses align with your positioning.

- Citation trust verification — Requires evaluating whether your sources meet the institutional tier standards AI prioritizes in training data.

The DIY approach catches surface-level decay.

It misses the structural decay that causes AI engines to stop citing you.

The practices that audit quarterly aren't doing it themselves. They're using AEO Content Writing Services that include quarterly maintenance as part of the model — because authority doesn't compound when it's only checked once. The businesses dominating AI recommendations are measuring answer dominance quarterly to track their citation rates.

What is "authority decay"?

Authority decay is the erosion of trust signals that causes AI engines to stop citing your content as the primary answer.

It's caused by outdated facts that conflict with what AI validates as current, weakened entity signals that reduce confidence in your business information, and semantic misalignment where your content no longer matches how AI interprets the questions you're answering.

Unlike traffic drops, authority decay is invisible until it's too late.

You don't see it in Google Analytics. You see it when you run the AI Visibility Check and discover your competitor is the one being named — not you.

Audits catch authority decay early, before it progresses to the point where AI has replaced you entirely in recommendations.

Is there such a thing as "evergreen" content in the age of AI?

The concept of "evergreen" has fundamentally changed.

Foundational topics remain relevant — "What is chiropractic care?" will always be a valid question. But the specific facts, entity signals, and semantic alignment within that content must be actively maintained.

"Evergreen" now means "continuously updated," not "write once and forget."

The content that stays authoritative in the AI era isn't content that was perfect when published. It's content that's been audited quarterly to prevent semantic drift from eroding the trust signals AI uses to validate authority.

The practices treating "evergreen" as permanent are the ones losing visibility to competitors who understand that AI doesn't "set it and forget it." It validates continuously.

How do I know if my content is experiencing authority decay?

Run the AI Visibility Check.

Ask ChatGPT, Gemini, and Grok the questions your content is supposed to answer. Ask them to recommend the best chiropractor in your area. Ask them to explain the treatment approach you specialize in.

If your business isn't named in the response, authority decay has already progressed.

The audit quantifies how far and identifies the specific signals causing the erosion.

If Analytics still shows steady traffic but AI engines aren't citing you, you're 6-9 months away from watching that traffic collapse. Authority decay runs ahead of traffic decline: by the time you notice the drop, you've already lost the authority position.

The diagnostic isn't "how's my traffic?"

The diagnostic is "what does AI say when someone asks my question?"

Answer that question quarterly — not when traffic drops.
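If you want to put a number on that question each quarter, a rough self-tally helps. The sketch below is a hypothetical illustration, not the AI Visibility Check itself: it assumes you've pasted the responses from ChatGPT, Gemini, and Grok into a list by hand, and it simply counts how many of them name your practice. The function name `citation_rate`, the practice names, and the response text are made-up placeholders.

```python
import re


def citation_rate(responses: list[str], business_name: str) -> float:
    """Return the share of AI responses that mention business_name.

    Matching is case-insensitive and requires the whole name as a phrase,
    so "Oak Street Chiropractic" won't match a response that only says "Oak Street".
    """
    if not responses:
        return 0.0
    # Word boundaries keep the name from matching inside a longer word.
    pattern = re.compile(r"\b" + re.escape(business_name) + r"\b", re.IGNORECASE)
    named = sum(1 for text in responses if pattern.search(text))
    return named / len(responses)


# Hypothetical example: responses copied by hand from ChatGPT, Gemini, and Grok
# for the same question, one quarter apart. Names and wording are placeholders.
last_quarter = [
    "For back pain in Springfield, many people recommend Oak Street Chiropractic.",
    "Top options include Oak Street Chiropractic and Riverside Spine Center.",
    "Riverside Spine Center is frequently mentioned for sports injuries.",
]
this_quarter = [
    "Riverside Spine Center is a popular choice.",
    "Consider Riverside Spine Center or a local physical therapist.",
    "Oak Street Chiropractic offers adjustments and massage.",
]

practice = "Oak Street Chiropractic"  # hypothetical practice name
before = citation_rate(last_quarter, practice)
after = citation_rate(this_quarter, practice)
print(f"Last quarter: named in {before:.0%} of responses")
print(f"This quarter: named in {after:.0%} of responses")
if after < before:
    print("Citation rate dropped: worth auditing the pages that answer this question.")
```

Asking the same questions every quarter keeps the comparison fair. AI answers vary from run to run, so a handful of responses is a rough signal, not a precise measurement.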

What happens if I skip audits for a year?

Your competitors widen the gap.

Every quarter you don't audit, your authority signals weaken while competitors who do audit strengthen theirs. AI engines don't wait for you to catch up. They recommend whoever has the strongest current signals.

The Authority Sibling Rule applies here: isolated content that isn't maintained loses to maintained content clusters. The competitor auditing quarterly isn't just keeping pace. They're compounding their advantage while you're standing still.

By the time you notice the traffic drop — usually 9-12 months after you stopped auditing — you're not just behind.

You're 12-18 months behind, because the rebuild takes longer than the maintenance would have.

Authority isn't neutral. It either compounds or decays.

There's no standing still.

Can't I just update my content when I notice a problem?

By the time you notice, authority decay has already caused AI engines to stop citing you.

Reactive updates are too late.

The practices that dominate AI recommendations audit proactively — before the problem becomes visible. They're measuring answer dominance quarterly to track whether they're still being cited at the rate they were three months ago.

You can fix outdated content reactively.

You can't fix the 6-12 months of compounding authority your competitor gained while you waited to notice the problem.

The Cost of Inaction

Authority maintenance is not optional in the AEO era.

The practices that audit quarterly are actively exploiting the vulnerability created by competitors who assume their content is evergreen. Authority compounds for those who maintain it. It erodes for those who don't.

The gap widens every quarter you wait.

You already invested in the Authority Engine. You already paid for the content. Not auditing is choosing to let that investment decay into invisibility.

The question isn't whether you can afford quarterly audits.

The question is whether you can afford to lose the authority you already built — and watch a competitor take the spot you used to own while you wait for traffic to drop before acting.

There's no neutral position here.

Either you're maintaining authority and widening the gap, or you're standing still while competitors take your spot. Every quarter you don't audit, your signals weaken while your competitor's strengthen.

I've seen practices lose 12 months of authority positioning because they assumed "evergreen" meant permanent.

By the time they called for an audit, the competitor had compounded so far ahead that the rebuild took twice as long as maintenance would have.

Run the check. See where you stand.

If semantic drift has already set in, you'll know exactly what needs to be fixed. If your signals are still strong, you'll know what to protect.

But waiting for traffic to drop before you act guarantees you're diagnosing the problem 6-9 months after it started — and every month of that delay is a month your competitor spent widening the gap.

Want to know whether your content is still being cited — or if semantic drift has already caused AI to replace you with a competitor?

The AI Visibility Check takes 15 minutes and shows you exactly what ChatGPT, Gemini, and Grok say when someone asks who to trust in your market.

If your business isn't named in those responses, you're not just losing traffic. You're losing authority — and every quarter you wait to fix it is a quarter your competitor spends compounding theirs.

See what AI says about your practice right now.

The practices that audit quarterly aren't reacting to problems. They're preventing them. The ones that don't are the ones calling 12 months later asking why they're invisible.