Authority Signals vs. Vanity Metrics: How to Measure What Fills a Chiropractic Waiting Room

In the AI-first patient discovery landscape of 2026, traditional metrics — website traffic, keyword rankings, page impressions — are no longer valid predictors of clinic growth. Search has shifted from "a ranked list of links" to "a conversational verdict." Visibility is now binary: you're either the cited recommendation or you're invisible.

Success must now be measured through Authority Signals — the machine-readable data points AI engines like ChatGPT, Gemini, and Grok use to verify entity trust before they'll stake their reputation on naming a practice.

Three signals carry the most weight:

- Entity Consensus: NAP (Name, Address, Phone) data that matches exactly across 15 or more authoritative directories, including Google Business Profile, Healthgrades, Zocdoc, and Vitals. AI engines cross-reference these sources before recommending any practice. A single inconsistency (a name variation, a phone number mismatch, an outdated address) is enough to break the verification chain and remove the practice from consideration.

- Schema Markup Depth: Technical JSON-LD code that maps clinical specialties, service areas, and conditions treated into a machine-readable format. Without it, the website's content is written only for humans and stays opaque to the reasoning engine trying to determine what the practice actually does.

- Citation Frequency: How often the practice is named as the primary answer when patients ask AI engines condition-specific questions like "best chiropractor for sciatica near me."

According to Gartner, traditional search engine volume is projected to drop 25% by 2026 as patients shift to AI chatbots for recommendations. In that environment, ClickVision research confirms that more than 80% of searches already end without a click to any external website. Patients get the answer — and a specific practice name — inside the AI response. They act on it. They never visit your site.

A practice can rank #1 on Google and receive zero AI recommendations. Google ranks pages based on relevance. AI recommends entities based on verified trust. These are fundamentally different systems with different criteria, and only one of them is filling waiting rooms right now.

This article breaks down what vanity metrics are actually measuring, what Authority Signals are and why AI engines care about them, and how to determine which side of that divide your practice currently sits on.

Last Updated: April 10, 2026

- The Report Says Green. The Waiting Room Says Empty.

- What Authority Signals Are — And Why AI Engines Care

- The Economic Autopsy of a Green Report

- How iTech Valet Measures What Actually Fills Waiting Rooms

- Frequently Asked Questions

  - Why is website traffic considered a vanity metric in 2026?

  - What are the most important Authority Signals for a chiropractor?

  - Can I rank number one on Google and still have zero AI recommendations?

  - How does iTech Valet measure success if not through traffic?

  - What is the 1.2% Rule and how does it affect my clinic?

  - What is the zero-click problem and how does it affect chiropractic practices?

  - How long does it take for Authority Signals to influence AI recommendations?

- The Verdict Is the Only Metric That Matters Now

The Report Says Green. The Waiting Room Says Empty.

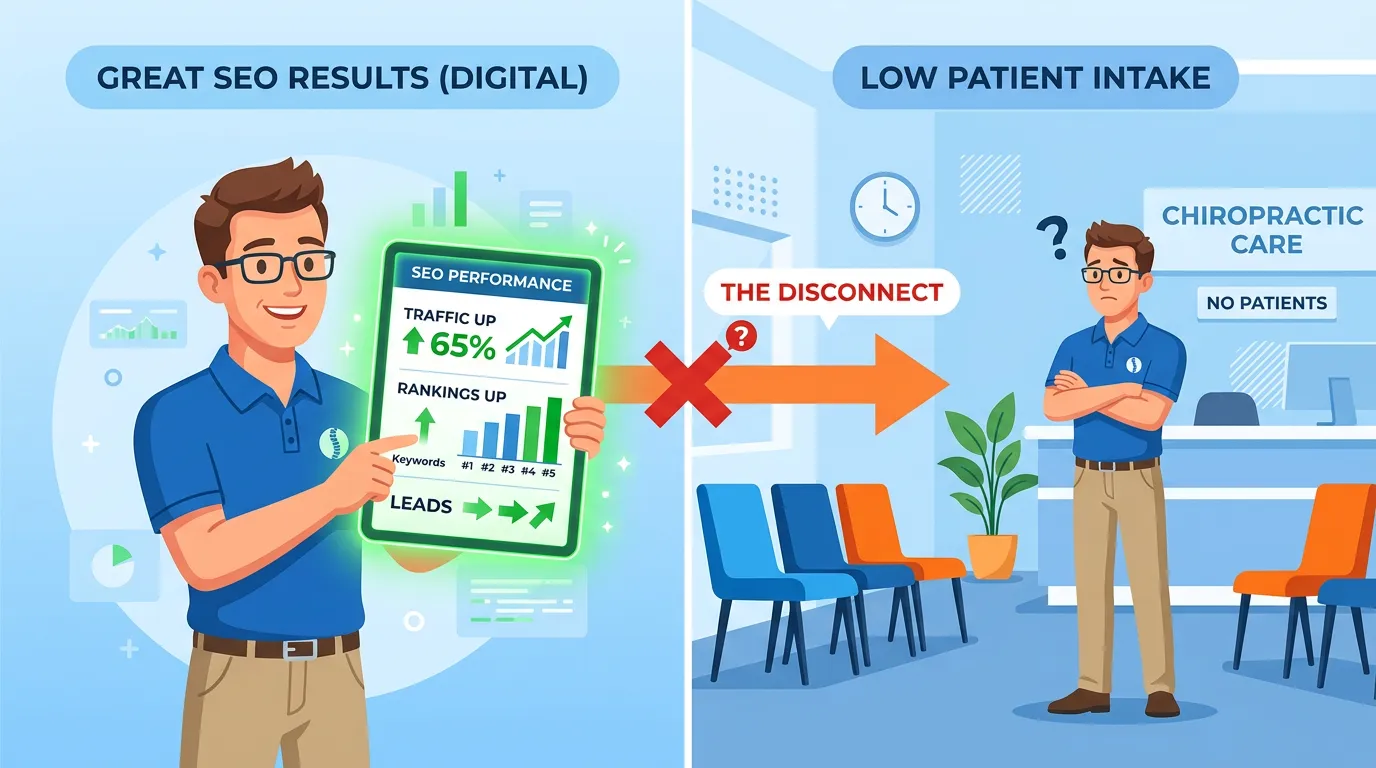

Picture it. Tuesday afternoon. The agency report hits the inbox — green across the board. Rankings up. Traffic up. Fourteen KPIs pointing the right direction. And there are four empty chairs in the waiting room.

That gap doesn't appear anywhere in the report.

The dashboard is showing you the score of a game your patients already stopped watching.

Why Traditional SEO Reports Can't See What AI Sees

The root of the problem is the Digital Brochure Fallacy. A website built to rank on Google is not the same as infrastructure built to be trusted by a reasoning engine. Most agencies don't know the difference, and they have no incentive to point it out. The discovery channel moved. The AI authority agency space exists because the metrics traditional agencies were selling never moved with it.

Traditional SEO does one thing.

It moves a page up a list.

Keywords, backlinks, technical audits, domain authority scores — all of it designed for a world where Google shows ten results and a human clicks one. AI doesn't produce a list. AI produces a verdict. Those aren't variations of the same thing. A list gives the patient options. A verdict gives the patient a name. One of those fills your waiting room. The other gets a click that leads somewhere, eventually, maybe.

I've watched this wreck practices that had everything going for them. Solid reputation. Great reviews. An SEO vendor billing faithfully for three years — always a green report, never an explanation for why new patients weren't matching the traffic numbers. And then a patient stops at the front desk: "My friend asked ChatGPT and it gave me your name." That's nice when it happens. It's a disaster when it doesn't — and for most practices right now, it doesn't.

Gartner projects a 25% drop in traditional search engine volume by 2026 as AI chatbots take over how patients find providers. Ranking-chasing is a legacy tactic. The channel is shrinking. Every dollar you spend on it this month is a dollar not going toward the infrastructure AI actually uses to pick a name.

The Metrics That Look Right and Mean Nothing

Let's run the autopsy on what's actually in that report.

- Keyword Rankings — Your page is moving up a list. ClickVision research confirms that more than 80% of searches now end without any click at all. The list exists. Fewer patients are using it. The ranking is real. The relevance is declining.

- Organic Traffic — Counts people who clicked through to the website. Doesn't count patients who got a name from ChatGPT and called directly. That discovery event is invisible to your analytics — because it happened before any click occurred.

- Impressions — How many times a page showed up in results. Has zero relationship to AI recommendation frequency. Zero.

- Backlinks / Domain Authority — Legacy signals from an older version of how trust worked on the web. AI engines don't verify entity trust through backlink profiles. They verify through structured data, directory consistency, and citation depth. These are different systems.

- Bounce Rate / Session Duration — Website engagement metrics for people who actually showed up. Completely irrelevant to patients who acted on an AI recommendation before they ever visited the website.

None of these are lies. They're just answering the wrong question. And they'll keep answering it every month you pay for them.

What Authority Signals Are — And Why AI Engines Care

AI engines have a reputational problem.

They're asked to name specific businesses to real people making real healthcare decisions. Recommend a practice that's closed, wrong, or unverifiable — and the trust hit goes to the AI engine, not the practice. That's a problem worth solving aggressively.

So they got selective. Brutally selective. The machine isn't trying to rank a website. It's deciding whether an entity is safe to recommend. Those are completely different operations — and understanding how AI reads every layer of patient intent makes that clear. The signals AI checks have nothing to do with keyword rankings, backlinks, or page speed.

Entity Consensus: The Machine Needs Certainty

Before AI will recommend a practice, it needs to confirm the practice exists.

Sounds obvious. It isn't simple.

I've seen practices with great reputations get skipped entirely. Not because their clinical outcomes were weak. Because the machine couldn't confirm they existed with certainty.

That's the Entity Consensus problem. AI cross-references your Name, Address, and Phone number across 15-plus authoritative directories before it'll stake a recommendation on you — Google Business Profile, Healthgrades, Zocdoc, Vitals, and more. If the data matches across all of them, you're verifiable. If it doesn't, you're a question mark. AI doesn't recommend question marks.

The drift happens slowly and quietly. A phone number updates on the website but sits wrong in eight directories. An old address stays live on three platforms. The practice name appears four different ways because different people handled different listings at different times.

To a human, minor inconsistency. To an AI engine running entity verification — a practice it can't confirm. And a practice it can't confirm doesn't get named.
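The verification logic inside AI engines isn't public, but the consistency problem itself is easy to illustrate. The minimal Python sketch below is a stand-in under stated assumptions, not a reproduction of any engine's method: it normalizes each directory's Name, Address, and Phone record (lowercasing, stripping punctuation, collapsing a few assumed street abbreviations, keeping the last 10 phone digits) and flags listings that disagree with the most common version. The listings, placeholder phone numbers, and the helper names `normalize_nap` and `find_mismatches` are hypothetical.

```python
import re
from collections import Counter

# Hypothetical abbreviation map; real address normalization needs far more rules.
ABBREVIATIONS = {"street": "st", "suite": "ste", "avenue": "ave", "road": "rd"}

def normalize_nap(record: dict) -> tuple:
    """Reduce a Name/Address/Phone record to a comparable (name, address, phone) tuple."""
    name = " ".join(re.sub(r"[^a-z0-9 ]", "", record["name"].lower()).split())
    address_words = re.sub(r"[^a-z0-9 ]", "", record["address"].lower()).split()
    address = " ".join(ABBREVIATIONS.get(w, w) for w in address_words)
    # Keep only digits and compare the last 10 (assumes US-style phone numbers).
    phone = re.sub(r"\D", "", record["phone"])[-10:]
    return (name, address, phone)

def find_mismatches(listings: dict) -> list:
    """Return the directories whose normalized NAP differs from the most common version."""
    normalized = {source: normalize_nap(rec) for source, rec in listings.items()}
    consensus, _count = Counter(normalized.values()).most_common(1)[0]
    return sorted(src for src, nap in normalized.items() if nap != consensus)

# Hypothetical listings for one practice, using placeholder phone numbers.
listings = {
    "Google Business Profile": {"name": "Coastal Chiropractic", "address": "120 Main Street, Suite 4", "phone": "(555) 555-0142"},
    "Healthgrades": {"name": "Coastal Chiropractic", "address": "120 Main St., Ste 4", "phone": "555-555-0142"},
    "Zocdoc": {"name": "Coastal Chiropractic", "address": "120 Main St Ste 4", "phone": "+1 555 555 0142"},
    "Vitals": {"name": "Coastal Chiropractic Center", "address": "120 Main St Ste 4", "phone": "555-555-0199"},
}

print(find_mismatches(listings))  # ['Vitals']
```

Formatting noise like "Street" versus "St." washes out after normalization, while the kind of drift described above (a renamed listing, a stale phone number) still surfaces.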

Schema Markup Depth: Speaking AI's Language

A website without schema markup is a medical chart written in a language the AI can't read.

Your content is all there. The machine can't use it. It can't identify your specialties, your service area, or the conditions you treat — because that information is written for humans, not structured for machines.

Schema fixes it. It's code that lives in the background of your site and maps your clinical services, specialties, and service area into something a reasoning engine can actually verify. Deep schema gives AI the structured data it needs to name you with confidence. No schema, and the machine skips you. It'll find someone with structured data and name them instead.

The website can look beautiful. If the machine can't read it, it doesn't count.
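For readers who haven't seen schema markup, the sketch below builds a small JSON-LD object in Python and wraps it in the `<script type="application/ld+json">` tag that pages typically embed. The schema.org types and properties used (`MedicalClinic`, `PostalAddress`, `City`, `areaServed`, `availableService`, `MedicalTherapy`, `knowsAbout`, `MedicalCondition`) are real vocabulary, but choosing them for a chiropractic practice is an assumption here, the helper names are hypothetical, and all practice details are placeholders. Any real markup should be checked against the schema.org documentation and a structured-data validator before publishing.

```python
import json

def build_clinic_jsonld(name, phone, street, city, region, postal_code, areas, therapies, conditions):
    """Build a schema.org JSON-LD dict for a clinic (assumed property choices, placeholder data)."""
    return {
        "@context": "https://schema.org",
        "@type": "MedicalClinic",
        "name": name,
        "telephone": phone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
        # Service area: one City entry per locality served.
        "areaServed": [{"@type": "City", "name": area} for area in areas],
        # Services offered, expressed as MedicalTherapy entries.
        "availableService": [{"@type": "MedicalTherapy", "name": t} for t in therapies],
        # Conditions the practice treats, expressed as MedicalCondition entries.
        "knowsAbout": [{"@type": "MedicalCondition", "name": c} for c in conditions],
    }

def to_script_tag(data: dict) -> str:
    """Serialize the JSON-LD into the script tag embedded in a page's HTML."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

clinic = build_clinic_jsonld(
    name="Coastal Chiropractic",
    phone="+1-555-555-0142",
    street="120 Main St Ste 4",
    city="Springfield",
    region="IL",
    postal_code="62701",
    areas=["Springfield", "Chatham"],
    therapies=["Spinal manipulation"],
    conditions=["Sciatica", "Lower back pain", "Herniated disc"],
)
print(to_script_tag(clinic))
```

The name, address, and phone in the markup should match the directory listings exactly; schema that contradicts the directories undercuts the same consistency Entity Consensus depends on.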

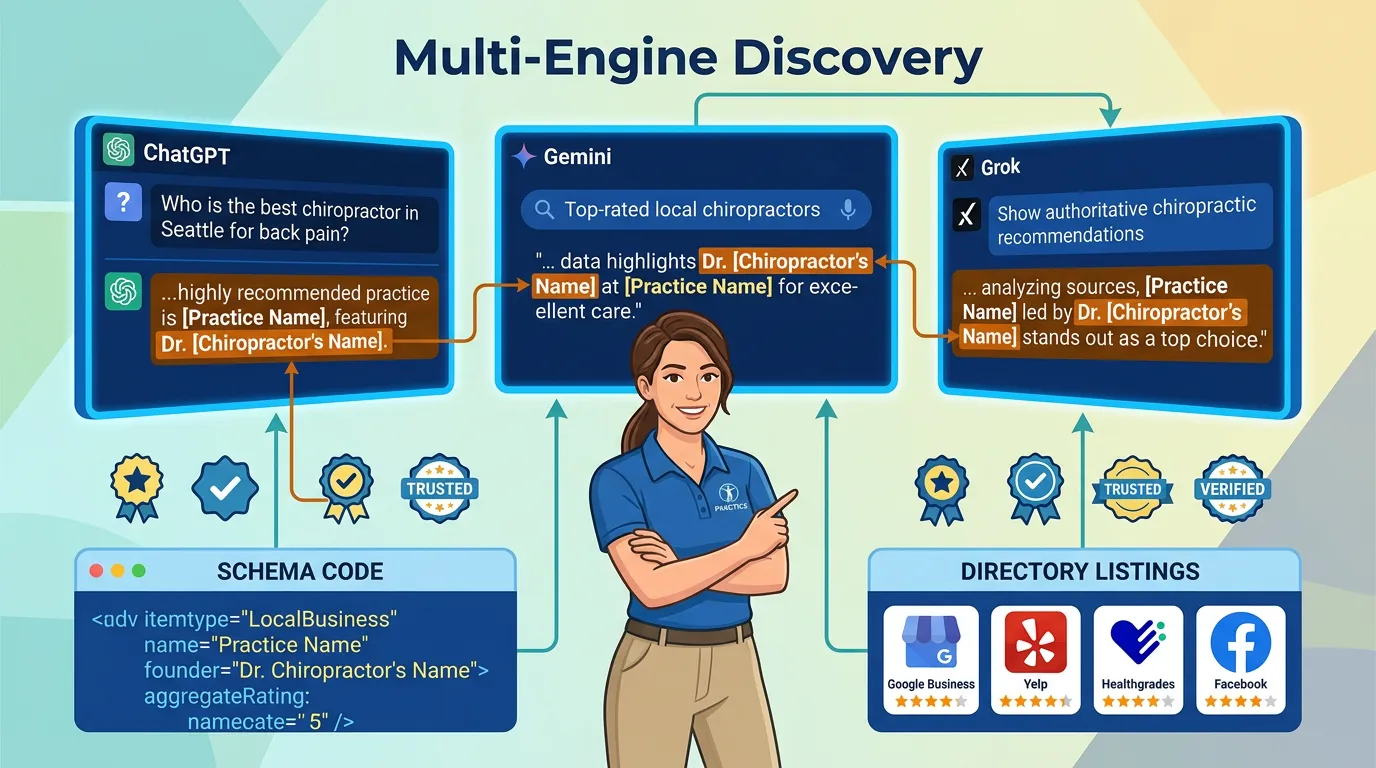

Citation Frequency: The Verdict Signal

Citation Frequency is the closest real measurement to "is AI actually recommending this practice."

It asks the question directly: when patients query ChatGPT, Gemini, or Grok with condition-specific language — "chiropractor for lower back pain near [city]," "who should I see for a herniated disc in [neighborhood]" — does this practice's name appear?

Research from SOCi confirms that AI is nearly 30 times more selective than Google's local 3-Pack. Only 1.2% of business locations receive regular AI recommendations. That's the 1.2% Rule. For every 100 practices in a market, fewer than two are getting named consistently.

Here's the uncomfortable part. You can't track Citation Frequency in any dashboard on the market. Not Google Search Console, not Semrush, not any AI visibility tool currently for sale. The only reliable method is systematic multi-engine discovery — running actual patient-language queries and documenting whose name comes up. If Entity Consensus and Schema Depth aren't established, Citation Frequency stays at zero. The green dashboard will never surface that.
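Because no off-the-shelf dashboard reports this number, audit results have to be recorded by hand as the queries are run. The sketch below assumes a simple log format (one row per engine, query, and the practice names that response recommended) and computes the share of recorded responses that named a given practice, overall and per engine. The log entries, the competitor name "Riverside Spine Center", and the `AuditResult`/`citation_frequency` names are hypothetical; the code only does the bookkeeping and does not query any AI engine.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class AuditResult:
    engine: str        # e.g. "ChatGPT", "Gemini", "Grok"
    query: str         # the patient-language prompt that was run
    names: list[str]   # practice names the response recommended

def citation_frequency(results, practice):
    """Share of recorded responses that name `practice`, overall and per engine."""
    per_engine = defaultdict(lambda: [0, 0])  # engine -> [times named, responses]
    for r in results:
        named = practice.lower() in (n.lower() for n in r.names)
        per_engine[r.engine][0] += int(named)
        per_engine[r.engine][1] += 1
    total_named = sum(hits for hits, _ in per_engine.values())
    total = sum(count for _, count in per_engine.values())
    overall = total_named / total if total else 0.0
    return overall, {engine: hits / count for engine, (hits, count) in per_engine.items()}

# Hypothetical audit log for one market.
log = [
    AuditResult("ChatGPT", "best chiropractor for sciatica near Springfield", ["Coastal Chiropractic"]),
    AuditResult("ChatGPT", "chiropractor for lower back pain near Springfield", ["Riverside Spine Center"]),
    AuditResult("Gemini", "best chiropractor for sciatica near Springfield", ["Riverside Spine Center"]),
    AuditResult("Grok", "best chiropractor for sciatica near Springfield", []),
]
overall, by_engine = citation_frequency(log, "Coastal Chiropractic")
print(overall)    # 0.25
print(by_engine)  # {'ChatGPT': 0.5, 'Gemini': 0.0, 'Grok': 0.0}
```

AI answers can vary from one run to the next, so logging several runs of each query tends to give a steadier share than a single pass.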

| Signal Type | Metric | What It Measures | What It Doesn't Tell You | Who Uses It |
|---|---|---|---|---|
| Vanity | Keyword Rankings | Page position in Google | Whether AI engines can identify the practice | Legacy SEO dashboards |
| Vanity | Organic Traffic | Website visits from search | Whether patients found the practice via AI | Agency monthly reports |
| Vanity | Impressions | Times a page appeared in results | Whether any patient acted on an AI recommendation | Google Search Console |
| Vanity | Domain Authority | Third-party backlink score | Entity trust as seen by AI engines | Moz, Ahrefs |
| Authority | Entity Consensus Score | NAP consistency across 15+ directories | n/a | AI reasoning engines |
| Authority | Schema Markup Depth | Structured clinical data parseable by AI | n/a | AI reasoning engines |
| Authority | Citation Frequency | How often AI names the practice | n/a | The practice getting recommended |

The Economic Autopsy of a Green Report

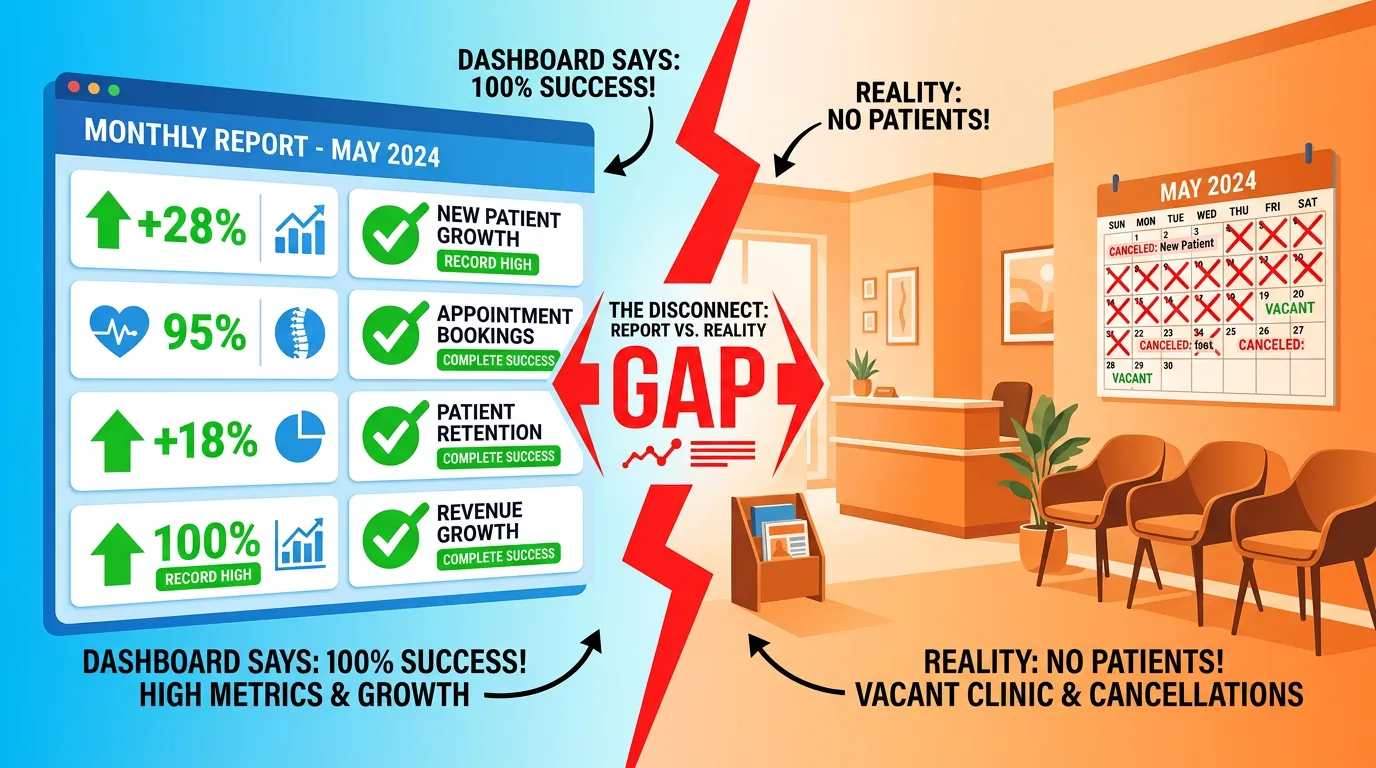

I call it an Economic Autopsy.

You take the green report and trace each metric back to its actual relationship with patient acquisition. Not in theory. In practice.

The results are almost always the same: the metrics look fine, the discovery channel is broken, and nobody in the relationship has any reason to say so — because the agency's business model is built around the metrics that look fine. That's the Hopium Cycle. The doc feels like progress is happening. The patients who asked ChatGPT for a chiropractor this week are sitting in someone else's office.

Performing the Autopsy: What the Numbers Actually Mean

Here's how the math actually looks.

The practice is ranking #1 for "chiropractor [city name]." That means they're at the top of a Google list. ClickVision research shows that more than 80% of searches end without any click — which means most of the patients making this query never scroll a list at all. They asked an AI chatbot. They got a name. They called. The ranking had nothing to do with it.

Same practice: 4,200 monthly website visitors. HubSpot research shows generic informational blog traffic fell 70-80% for domains that failed to establish unique topical authority. Visitors who arrive through generic informational content are mostly looking up information, not making the high-intent new patient queries the practice assumes those 4,200 visits represent. And none of them came from AI discovery.

200 backlinks. Domain authority 42. Completely irrelevant to whether ChatGPT knows this practice exists, can verify its NAP data, and trusts its schema enough to name it in a response.

The green report is answering "how is this website performing in traditional search?" It can't answer "is AI recommending this practice when a patient asks?" Those are different questions. The report you're paying for can only answer one of them.

The Hopium Cycle in Practice

The Hopium Cycle runs in four stages. I've watched practices cycle through all of them.

- Stage 1: The green report arrives. The metrics trend upward. The practice owner exhales. The agency keeps the retainer. Everyone feels good.

- Stage 2: New patient flow flatlines or drops. The explanation is always external — algorithm changes, more competition, seasonal variation. The metrics still look fine, so the agency isn't the culprit. Everyone looks elsewhere.

- Stage 3: The practice doubles down. More budget into the same tactics. More content that generates traffic but builds zero entity trust. The gap between the dashboard and the waiting room gets wider, not smaller.

- Stage 4: The practice walks. From that agency. From the tactic. Sometimes from digital marketing entirely. Then they find the next vendor selling the same system with a different name on the deck.

I've talked to docs who've been through this loop two or three times. The sunk cost is real. The frustration is real. And in every month they spent chasing rankings, the practices building entity authority were compounding a lead.

| Agency Report Says | What AI Engine Actually Sees | The Impact |
|---|---|---|
| #1 Keyword Ranking | A page. Not a verified entity. | Zero AI recommendations regardless of rank. |
| 4,200 Monthly Visitors | Irrelevant in the zero-click era | No patient discovery benefit from AI channel. |
| 200 Backlinks / DA 42 | Legacy signals; no entity verification | Structurally invisible to AI reasoning engines. |
| 4.8-Star Google Rating | Partial signal; needs directory consensus | Incomplete authority: possible but not probable recommendation. |
| "Green" Overall SEO Score | Missing schema, inconsistent NAP, no AI citations | The Invisibility Cloak is still fully active. |

How iTech Valet Measures What Actually Fills Waiting Rooms

Short version: we don't measure traffic. We measure whose name AI says.

That's it. That's the metric with a direct relationship to new patient discovery right now. Everything else flows from that.

A Word to the Budget-First Buyer

Before I explain how this works — let me be direct about who it's actually for.

If your first question is "how does this compare to my $500/month SEO retainer" — we're not your fit. That's not a judgment. It's just that those aren't competing versions of the same service. One optimizes for a ranking list. The other builds the entity trust infrastructure that controls AI recommendations. Comparing price points between them is like comparing the cost of a sign on the door to the cost of building the door.

The Budget-First Buyer measures spend on a spreadsheet and decides from there. That's the same measurement error this entire article is about. We build for docs who understand that the cost of AI invisibility isn't a line item — it's the patients leaving the parking lot to go call the practice AI just named.

If you're still price-comparing, keep looking. Plenty of vendors will sell you a green dashboard.

If you're asking "how do I actually know if AI is recommending me" — keep reading.

The AI Recommendation Frequency Audit

Measuring AI recommendation frequency means running real queries.

Not branded queries. Not "Coastal Chiropractic." Actual patient language — the way someone types into ChatGPT when their back goes out on a Sunday and they need someone tomorrow. "Best chiropractor for lower back pain near [city]." "Who do I see for a herniated disc in [neighborhood]." "Chiropractor that takes [insurance] in [area]."

Those are the queries that produce names. We run them across ChatGPT, Gemini, and Grok. We document who appears, how consistently, and whether what AI says about that practice is even accurate.

That measurement has a direct relationship to new patient flow. A #1 ranking doesn't have that relationship anymore. Traffic volume doesn't. This does.
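One way to keep the audit systematic is to generate the full grid of prompts up front, so every engine gets exactly the same patient-language queries each cycle. The sketch below crosses two templates modeled on the example queries above with hypothetical conditions and a hypothetical location; the engine list mirrors the three named in this article. The function name `build_audit_plan` is illustrative, and how each prompt is actually submitted and recorded is left out, since that depends on the auditor's tools.

```python
from itertools import product

ENGINES = ["ChatGPT", "Gemini", "Grok"]

# Patient-language templates modeled on the example queries in this section.
TEMPLATES = [
    "Best chiropractor for {condition} near {location}",
    "Who do I see for {condition} in {location}",
]

def build_audit_plan(conditions, locations, engines=ENGINES, templates=TEMPLATES):
    """Return (engine, prompt) pairs covering every template/condition/location combination."""
    prompts = [
        template.format(condition=condition, location=location)
        for template, condition, location in product(templates, conditions, locations)
    ]
    # Every engine receives the identical prompt list, so results stay comparable.
    return list(product(engines, prompts))

plan = build_audit_plan(["lower back pain", "a herniated disc"], ["Springfield"])
print(len(plan))  # 12 (3 engines x 2 templates x 2 conditions x 1 location)
```

Fixing the grid in advance means a change in results between cycles reflects a change in what the engines say, not a change in which questions were asked.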

Building Authority vs. Tracking Shadows

Alright, quick reality check on the most common counter-argument.

"Can't I just track how many times AI has mentioned my practice?"

No. Not reliably.

Seer Interactive's analysis confirms that tracking generic AI visibility in prompts is subjective and inconsistent — the only citations that matter are the ones that actually turn into patients walking through the door. Any vendor selling a dashboard that tracks AI citation counts with keyword-ranking precision is selling data that doesn't exist in a verified format.

That's the Measurement Lie. It's the Hopium Cycle in a new interface. The number looks like signal. It's noise.

High-volume traffic is not the primary driver of clinic growth in 2026. Treating it as one made sense years ago. Consensus trust from AI engines matters more than click volume now. The whole premise of building authority that compounds over time is that entity trust doesn't expire. It builds. Traffic spikes don't compound. They just cost more every month to sustain.

The free AI Visibility Check is the fastest way to see where a practice actually stands across all three Authority Signals — before spending another dollar on a system that's measuring the wrong game.

| Category | Vanity Metric | Authority Signal | Relationship to AI Recommendations |
|---|---|---|---|
| Visibility | Keyword Ranking (Google) | Citation Frequency (Multi-Engine) | Keyword rank has no measurable relationship to AI citation |
| Trust | Domain Authority (backlinks) | Entity Consensus (directory confirmation) | DA is not an AI trust factor; directory consensus is |
| Data Readability | Website Content (for humans) | Schema Markup (for machines) | AI cannot extract clinical data from unstructured content |
| Discovery Channel | Click-through Traffic | AI Recommendation Frequency | AI discovery bypasses click-based traffic entirely |
| Measurement | Dashboard Score | Systematic Multi-Engine Audit | No dashboard tracks real AI citation frequency reliably |

Frequently Asked Questions

Why is website traffic considered a vanity metric in 2026?

Clicks are decoupled from patient acquisition. When a patient asks ChatGPT for a chiropractor recommendation and gets a specific name in the response, they never visit your website.

They got everything they needed inside the AI response. A practice name. Sometimes a phone number. Enough to act. Your website traffic metric for that patient reads zero — because no click happened. Meanwhile, a competitor who hasn't touched their website in six months just got a new patient because their entity data was clean and their schema was in place.

Traffic measures activity on a channel. It doesn't tell you whether that channel is the one your patients are using to find a provider anymore.

What are the most important Authority Signals for a chiropractor?

The three highest-weight signals are Entity Consensus (identical NAP data across 15 or more authoritative directories), Schema Markup Depth (technical JSON-LD mapping of clinical specialties and service areas), and Citation Frequency (how often the practice appears as the named recommendation in AI-generated responses for specific condition queries like "sciatica help near me").

Entity Consensus is typically the most broken one — because directory data drifts over time and nobody's watching it. A phone number changes. Three directories get updated. Eight don't. Six months later, the machine can't confirm you're the same entity across all sources.

Can I rank number one on Google and still have zero AI recommendations?

Yes. Google ranks pages based on relevance signals. AI engines recommend entities based on verified trust. These are entirely different systems with different criteria. A practice with strong on-page SEO but missing schema, inconsistent directory listings, and no structured entity data will be invisible to AI reasoning engines regardless of its Google ranking.

I've run this check with practices that were convinced they were in great shape. The ranking was real. The AI visibility was zero. Both things were true at the same time — because they were being evaluated by two entirely different systems looking for two entirely different things.

How does iTech Valet measure success if not through traffic?

We measure AI recommendation frequency — running systematic multi-engine discovery across ChatGPT, Gemini, and Grok using condition-specific and location-specific queries to determine which practices are actually being named. We also audit entity consensus scores, schema depth, and citation patterns across structured data platforms. Traffic is not on the scorecard.

If you want to know where your practice stands right now across all three Authority Signals, the AI Visibility Check gives you that answer — not as a ranking, but as a structured audit of what AI engines can actually find, verify, and trust about your practice.

What is the 1.2% Rule and how does it affect my clinic?

Research from SOCi shows that AI engines are nearly 30 times more selective than Google's 3-Pack, recommending only 1.2% of verified business locations when responding to discovery queries. In practical terms, this means that for every 100 chiropractic practices in a market, fewer than two will regularly receive AI recommendations. If your practice lacks verified entity authority, you are statistically invisible in the AI discovery channel.

This isn't a number to feel bad about. It's a number to act on. The practices in that 1.2% didn't get there by accident. They got there because their entity data is clean, their schema is deep, and their content is structured for machine reasoning — not just for human readers. Scaling from local visibility to recognized regional authority starts with exactly these signals, applied consistently.
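The 1.2% figure translates into expected counts with simple arithmetic. This sketch applies the SOCi rate quoted above to a few hypothetical market sizes; it assumes the rate holds uniformly in every market, which the research may not claim, and the names `AI_RECOMMENDATION_RATE` and `expected_recommended` are illustrative.

```python
AI_RECOMMENDATION_RATE = 0.012  # 1.2% of locations, per the SOCi figure cited above

def expected_recommended(practices_in_market: int, rate: float = AI_RECOMMENDATION_RATE) -> float:
    """Expected number of practices receiving regular AI recommendations (uniform-rate assumption)."""
    return practices_in_market * rate

for market_size in (25, 100, 250):
    print(market_size, round(expected_recommended(market_size), 2))
# 25 0.3
# 100 1.2
# 250 3.0
```

Under that assumption, a market of 25 practices would expect less than one regularly recommended practice, and even a market of 250 would expect only about three.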

What is the zero-click problem and how does it affect chiropractic practices?

Over 80% of searches now end without a click to an external website. Patients receive a conversational answer — including a specific practice recommendation — inside the AI response and act on it directly. This makes website traffic an increasingly unreliable proxy for patient discovery. If your practice is not being named in that AI response, the search happened and you were not a factor.

The zero-click reality means the entire premise of traffic-based marketing is deteriorating. Impressions, CTR, visitor volume — all of these measure behavior on a channel that patients are using less for provider discovery. The discovery event happens inside the AI response. The practice either appears in that response or it doesn't.

How long does it take for Authority Signals to influence AI recommendations?

Authority infrastructure compounds over time. Entity consensus, schema architecture, and content depth each need time to be indexed and validated by AI engines. There is no reliable timeline to guarantee when recommendations will appear — anyone offering specific timelines is selling a promise they cannot verify. What is consistent is that the gap between verified authorities and invisible practices widens every month.

This is why waiting costs more than it appears. Every month a competitor spends building entity authority is a month of compounding advantage. The practices that move now are building a lead that doesn't erode, because verified trust accumulates the way compound interest does: quietly, consistently, until the gap is too wide to close quickly.

The Verdict Is the Only Metric That Matters Now

Here's what I keep coming back to when I talk to docs about this.

The question isn't "where do I rank." The question is: when a patient opens ChatGPT and asks for a chiropractor for their specific condition in their specific area — is your name the answer?

That's binary. You're in the answer or you're not. There's no page two. No third option. No "close enough."

The gap between the practices that get named and the ones that don't is compounding right now. Every month a practice spends optimizing for a ranked list, the practices building entity authority pull further ahead. AI trust doesn't reset. It accumulates. The lead the early movers build is not easy to close.

The green dashboard has no ability to fix AI invisibility. Zero. That requires a completely different infrastructure: entity verification, schema depth, structured content built for machine reasoning. Traditional search still moves some patients, but optimizing for it instead of building authority infrastructure is trading a growing channel for a shrinking one.

Stop optimizing for the list. The patients most worth reaching aren't using one anymore. They're asking a machine. The machine is saying someone's name.

Make sure it's yours.

Your SEO report is answering the wrong question.

The metrics it tracks — rankings, traffic, impressions, domain authority — measure performance inside a system patients are leaving. None of them can tell you whether ChatGPT, Gemini, or Grok named your practice this week when a patient asked for a chiropractor.

That answer lives somewhere else entirely.

The AI Visibility Check is the structured audit built specifically for this gap. It evaluates Entity Consensus across your directory footprint, Schema Markup Depth across your website, and Citation Frequency across the AI engines your patients are actually using. Not as rankings. As verified authority signals.

Find out whether AI is naming you — or someone else — before your next competitor does.

The practices closing this gap now are building a compounding lead. The ones waiting are watching it get wider. That math only runs one direction.