Clinical Transparency: Why AI Recommends Chiropractors Who Publish Outcomes

AI doesn't care how good your marketing sounds. It cares what it can verify.

When someone asks ChatGPT or Gemini who the best chiropractor near them is, the engine scans for proof. Documented outcomes. Published case studies. Measurable results in machine-readable formats. If you're not publishing verifiable clinical data, you're not in the conversation — no matter how good your care actually is.

Here's the thing: your patient outcomes are already there. You're tracking pain scores. You're measuring range of motion. You're seeing functional improvements every single week. The problem isn't that you're not producing results. The problem is AI can't verify them.

A website full of marketing copy and generic testimonials doesn't register as proof to an answer engine. A repository of anonymized case studies with tracked outcome metrics does. Schema markup on those case studies. Third-party validation across platforms. Semantic depth that proves you understand what you're doing, not just what you're selling.

The gap between invisible and recommended isn't clinical skill. It's documentation infrastructure.

The practices AI trusts are the ones publishing receipts.

Last Updated: April 24, 2026

Why AI Trusts What It Can Verify

The old patient acquisition model worked like this: convince someone you're good through marketing copy. Get them on the phone. Close the appointment.

That model assumed humans were doing the filtering.

They're not anymore.

When someone asks an AI engine who the best chiropractor is, the machine scans for verifiable proof before it even looks at your messaging. No schema markup? AI can't read your case studies. No structured outcome data? AI has nothing to validate. No documented treatment protocols? AI sees no repeatable process.

Marketing copy isn't proof to a machine. Data is.

The Federal Precedent for Healthcare Transparency

The federal government mandates transparency in healthcare pricing through CMS's Hospital Price Transparency rule. Not a suggestion. Policy.

Patients expect access to clinical and financial data before making decisions. AI expects the same thing. If hospitals are legally required to publish pricing and you're publishing zero clinical outcome data, you're structurally misaligned with where the industry is moving.

Transparency isn't a marketing edge anymore. It's baseline.

Patient-Reported Outcomes as Verifiable Data

The National Cancer Institute defines Patient-Reported Outcomes (PROs) as measurements of a patient's health status coming directly from the patient, without clinical interpretation.

That's verifiable data.

When a patient tells you their pain dropped from an 8 to a 2 after six adjustments — that's not marketing. That's a documented clinical outcome. Publish it anonymized, structured, schema-marked — and AI reads it as evidence.

Most chiropractic websites talk about helping patients. AI-recommended chiropractors publish proof that they helped, in formats machines validate.

| Trust Signal | Traditional Ranking Value | AI Answer Engine Value | Why AI Weights It Differently |
|---|---|---|---|
| Keyword density ("best chiropractor") | High: triggers ranking algorithm | Low: keyword stuffing ignored | AI prioritizes semantic meaning over keyword frequency |
| Generic testimonials | Medium: signals social proof | Very Low: anecdotal, unverifiable | No structure, no data, no validation layer |
| Published case studies with outcome metrics | Low: seen as "content" | Very High: verifiable clinical proof | Structured data AI can cross-reference |
| Schema markup on clinical data | Low: invisible to users | Critical: required for machine reading | Without schema, AI can't parse what the data represents |

Why "Trust Me" Doesn't Work on Machines

AI doesn't care how compelling your copy sounds.

The marketing industry built entire business models on persuasion. Emotional triggers. Resonant stories. All of that worked when humans read your website first.

AI reads it first now. It's not looking for a story. It's looking for receipts.

"I've helped hundreds of patients" means nothing to ChatGPT. "I've published 47 anonymized case studies showing an average 73% reduction in reported low back pain after 8 visits using Cox Flexion-Distraction technique" means everything.

First statement: claim. Second statement: verifiable data.

AI trusts the second. Ignores the first.

Most chiropractors are still writing for humans when the first filter deciding visibility is a machine that doesn't care about tone, personality, or brand voice. It cares about structure, verification, proof.

You're not competing against better marketers. You're competing against better documenters.

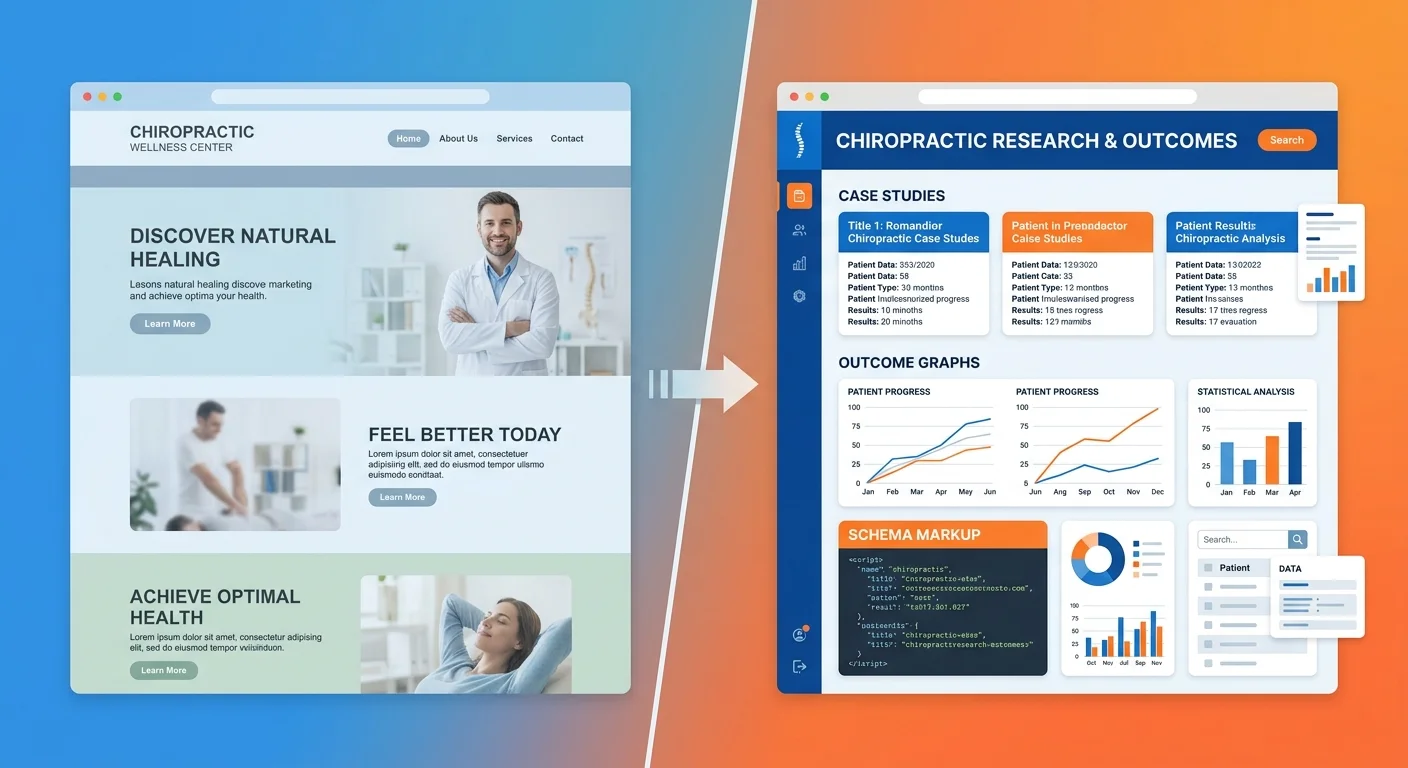

The Transparency Gap: What Most Chiropractic Websites Lack

Most chiropractic websites are digital brochures.

They describe what you do. Explain your philosophy. List conditions treated. Maybe there's a blog about posture.

None of that is verifiable proof.

AI scans your website the same way it scans a medical journal — looking for data it can validate. If your site doesn't contain structured, machine-readable evidence of clinical outcomes, AI moves on. It doesn't rank you lower. It excludes you entirely.

The gap isn't that your website looks bad. The gap is it contains nothing AI can verify.

What AI Sees When It Scans Your Website

When ChatGPT or Gemini crawls your site, it's looking for three things:

- Schema markup — data structured in formats machines parse

- Third-party corroboration — reviews, citations, directory listings that validate claims

- Semantic density — depth proving genuine expertise versus surface marketing

If all three are missing, your website is functionally invisible.

You might have 50 pages. Beautiful design. Five-star Google rating. But if the content isn't structured to tell AI what it's looking at, AI can't use it.

This is why practices with objectively better outcomes still lose to competitors in AI recommendations. The competitor's outcomes are machine-readable. Yours aren't.

To build the authority infrastructure AI trusts, you need more than content. You need structure.

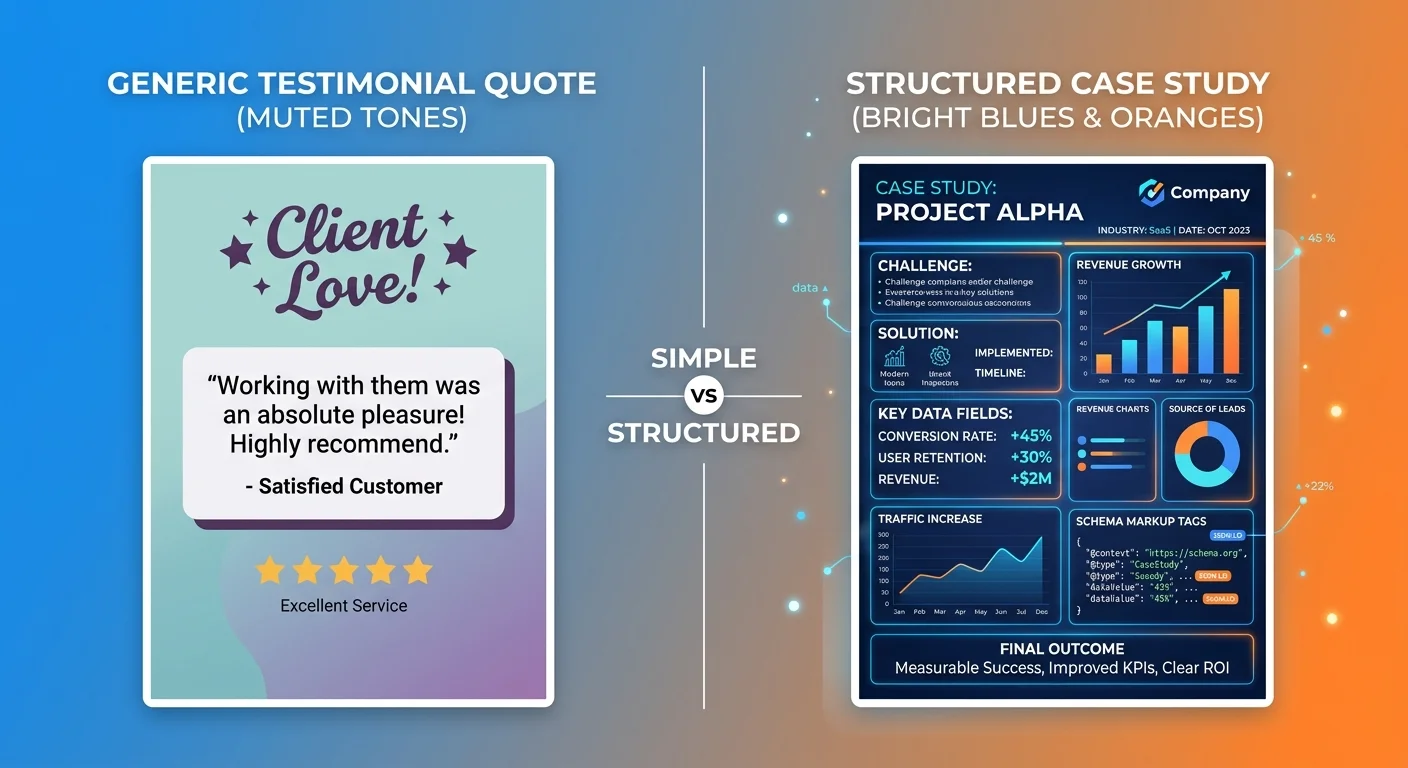

The Cost of Generic Testimonials

Testimonials feel like social proof. To AI, they're noise.

"Dr. Smith changed my life! I couldn't walk without pain for years, and after just a few visits, I'm back to running again. Highly recommend!"

Nice story. Completely unverifiable.

- No documented baseline measurement

- No treatment protocol described

- No outcome metric quantified

- No schema markup telling AI what this represents

AI can't validate it. So AI ignores it.

Compare that to a structured case study:

Patient Presentation: 42-year-old male, chronic low back pain (8/10 VAS), limited flexion ROM (40 degrees), Oswestry Disability Index 62%.

Treatment Protocol: Cox Flexion-Distraction 3x/week for 4 weeks, then 2x/week for 4 weeks.

Outcome: Pain reduced to 2/10 VAS, flexion ROM improved to 78 degrees, ODI dropped to 18%. Patient discharged after 20 visits.

That's verifiable. AI can compare that protocol and outcome to published data. It can validate methodology. It can cite it as proof.

The chiropractor with 200 testimonials and zero case studies loses to the one with 20 case studies and schema markup. Every time.

| Website Element | AI Can Validate? | Why or Why Not |
|---|---|---|
| Marketing headline ("Huntington Beach's #1 Chiropractor") | No | Subjective claim with no third-party verification |
| Generic testimonial quote | No | Anecdotal, no structure, no measurable data |
| Case study with VAS pain scores and ROM measurements | Yes | Structured outcome data AI can cross-reference |
| Schema markup on service pages | Yes | Machine-readable format telling AI what content represents |
| Blog post with external citations to peer-reviewed sources | Partial | Semantic depth signals expertise, but only if schema present |

What Clinical Transparency Actually Means for Chiropractors

Clinical transparency doesn't mean publishing everything.

It means publishing specific, structured, anonymized outcome data in formats machines can read.

You're not writing a journal article. You're documenting what you already do — patient presentations you see, protocols you use, measurable outcomes you achieve — and structuring it so AI can verify.

That's it. That's the mechanism.

Most chiropractors think transparency means exposing themselves to liability or giving away trade secrets. It doesn't. It means proving your clinical process is consistent, repeatable, effective by publishing receipts.

Anonymization ≠ Weakness

HIPAA requires that clinical data published without the patient's authorization be de-identified, meaning every personal identifier is removed.

That doesn't weaken proof. It protects the patient.

An anonymized case study showing a 45-year-old construction worker with chronic neck pain improving from 7/10 VAS to 1/10 after 12 visits using Gonstead technique is just as verifiable as one with the patient's name attached.

Clinical data intact. Treatment protocol documented. Outcome measurable. Patient identity protected.

AI doesn't need to know who the patient is. It needs to know the outcome was real, the protocol documented, the process repeatable.

Anonymization is how you stay compliant while building machine trust.

The Three Layers of Clinical Transparency

Clinical transparency for AI visibility breaks into three layers:

Layer 1: Published Treatment Protocols

Document what you do. Specific technique. Frequency. Duration. Adjunctive therapies. This tells AI you have a process, not just a philosophy.

Layer 2: Aggregated Outcome Statistics

Over 100 low back pain cases treated in the last 12 months, average pain reduction from 7.2/10 VAS to 2.1/10, average visits to result: 14. This proves consistency.

Layer 3: Detailed Case Studies

Individual patient presentations with baseline metrics, treatment protocols, documented outcomes. This provides the depth AI uses to validate the first two layers.

You don't need all three on day one. But to be the answer AI recommends, you build toward all three.

Research shows transparency about data and methodology increases public trust in experts. AI engines operate on the same principle — they trust what they can verify.

How Answer Engines Validate Clinical Data

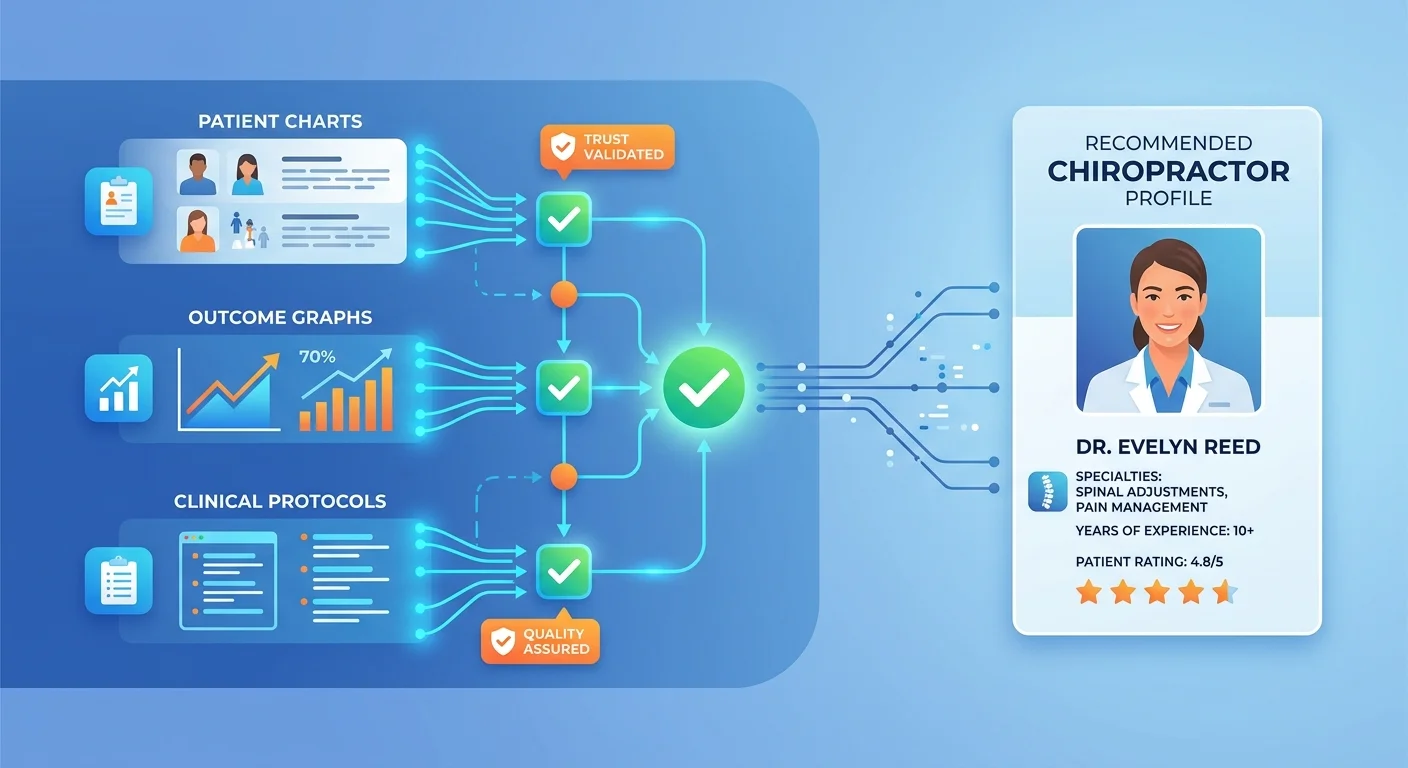

AI doesn't just read your case studies and take your word for it.

It runs a validation stack.

Four trust signals have to align before AI cites you as trusted:

- Schema markup (tells AI what the data is)

- Third-party corroboration (confirms claims match external sources)

- Semantic density (proves depth of expertise)

- Entity coherence (validates consistent identity across platforms)

If any signal is missing, trust erodes. If all four are present, trust compounds.

This is why some chiropractors with objectively weaker outcomes get recommended over practices with better results. The weaker practice documented outcomes in a format AI could validate. The stronger practice didn't.

Schema Markup: The Machine-Readable Layer

Schema markup is structured data that tells search engines and AI what your content represents.

Without schema, AI reads your case study as unstructured text. It might extract meaning through natural language processing, but it's guessing.

With schema, you're explicitly telling AI: "This is a case study. This section is patient presentation. This section is treatment protocol. This section is measurable outcome."

AI doesn't have to guess. It knows.

Schema doesn't make bad content rank. But it makes good content verifiable. And in the age of answer engines, verifiable is the only thing that matters.
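As a rough sketch of what that explicit labeling could look like, the Python below builds a JSON-LD object for one case study page. `MedicalWebPage`, `MedicalCondition`, `MedicalTherapy`, `about`, `possibleTreatment`, and `lastReviewed` are existing schema.org terms. The helper name `build_case_study_jsonld`, the sample values, and the decision to carry the outcome numbers in `description` are assumptions made for illustration, since schema.org does not appear to define a dedicated pain-score property.

```python
import json


def build_case_study_jsonld(headline, condition, technique, outcome_summary, reviewed):
    """Return a JSON-LD dict describing one anonymized case study page."""
    return {
        "@context": "https://schema.org",
        "@type": "MedicalWebPage",
        "headline": headline,
        "lastReviewed": reviewed,  # ISO 8601 date string
        # Assumption: outcome metrics go in free text, not a typed property.
        "description": outcome_summary,
        "about": {
            "@type": "MedicalCondition",
            "name": condition,
            "possibleTreatment": {
                "@type": "MedicalTherapy",
                "name": technique,
            },
        },
    }


# Hypothetical sample values, loosely based on the case study shown earlier.
markup = build_case_study_jsonld(
    headline="Chronic low back pain case study",
    condition="Chronic low back pain",
    technique="Cox Flexion-Distraction",
    outcome_summary="Pain 8/10 to 2/10 VAS, ODI 62% to 18%, 20 visits.",
    reviewed="2026-04-24",
)

# The printed JSON is what would sit inside a
# <script type="application/ld+json"> tag on the case study page.
print(json.dumps(markup, indent=2))
```

Whether a given answer engine actually consumes this markup is outside the scope of the sketch. It only shows how the three sections of a case study can be labeled instead of left as free text.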

Third-Party Corroboration

AI cross-references your claims against external sources.

If your website says you specialize in sports injury rehab and your Google Business Profile says the same thing — that's corroboration. If your case studies show consistent outcomes and your Healthgrades reviews mention the same conditions — that's corroboration.

If your website claims one thing and every external source says something different, AI flags you as inconsistent. Inconsistent entities don't get recommended.

This is why cleaning up directory listings, reviews, third-party profiles matters. Not because patients see them — because AI uses them to validate claims.

When your published outcomes align with third-party data, verifiable trust compounds.
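One way to audit your own listings for this kind of consistency is a small script like the one below. It is a sketch under stated assumptions: the `listings` data is typed in by hand (no directory API is called), the field names are hypothetical, and the comparison is a simple normalized string match. It says nothing about how any AI engine performs its own cross-referencing.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(text.lower().split())


def find_mismatches(listings, fields):
    """Return {field: {source: value}} for fields whose values differ across sources."""
    mismatches = {}
    for field in fields:
        values = {
            source: normalize(data.get(field, ""))
            for source, data in listings.items()
        }
        if len(set(values.values())) > 1:
            mismatches[field] = values
    return mismatches


# Hypothetical, hand-entered copies of what each profile currently says.
listings = {
    "website": {"name": "Example Chiropractic", "specialty": "Sports injury rehab"},
    "gbp": {"name": "Example Chiropractic", "specialty": "sports injury  rehab"},
    "healthgrades": {"name": "Example Chiro Clinic", "specialty": "Sports injury rehab"},
}

result = find_mismatches(listings, ["name", "specialty"])
for field, values in result.items():
    print(field, values)
```

In this sample only the practice name is flagged, because the specialty differs across sources only in case and spacing.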

Semantic Density and Methodology Explanation

AI rewards depth. Surface content gets ignored.

If you publish a case study that says "patient had back pain, got adjusted, felt better," AI has nothing to validate. No methodology. No measurable outcome. No proof of expertise.

If you explain why you chose Cox Flexion-Distraction over manipulation, how you measured ROM improvement, what functional outcome scale you used to track progress — that's semantic density.

Depth signals genuine expertise. Generic claims signal marketing copy.

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) prioritizes content demonstrating first-hand experience and proof. AI answer engines use the same logic.

You're not writing for SEO anymore. You're writing to prove to a machine you know what you're doing.

| Trust Signal | What It Proves | How AI Verifies It | How to Provide It |
|---|---|---|---|
| Schema markup on case studies | This is structured clinical data, not marketing copy | Parses schema.org markup to identify data types | Add MedicalWebPage and MedicalCondition schema to case study pages |
| Third-party corroboration | Claims are consistent across platforms | Cross-references website with GBP, Healthgrades, Zocdoc | Ensure service descriptions, specialties, protocols match across all listings |
| Semantic density in methodology | Chiropractor has genuine expertise, not surface knowledge | Analyzes depth of clinical reasoning and process documentation | Explain why you chose a technique, how you measured outcomes, what protocols you follow |
| Aggregated outcome statistics | Clinical success is consistent, not anecdotal | Compares individual case studies to aggregated data for pattern validation | Publish quarterly or annual outcome reports showing averages across patient cohorts |

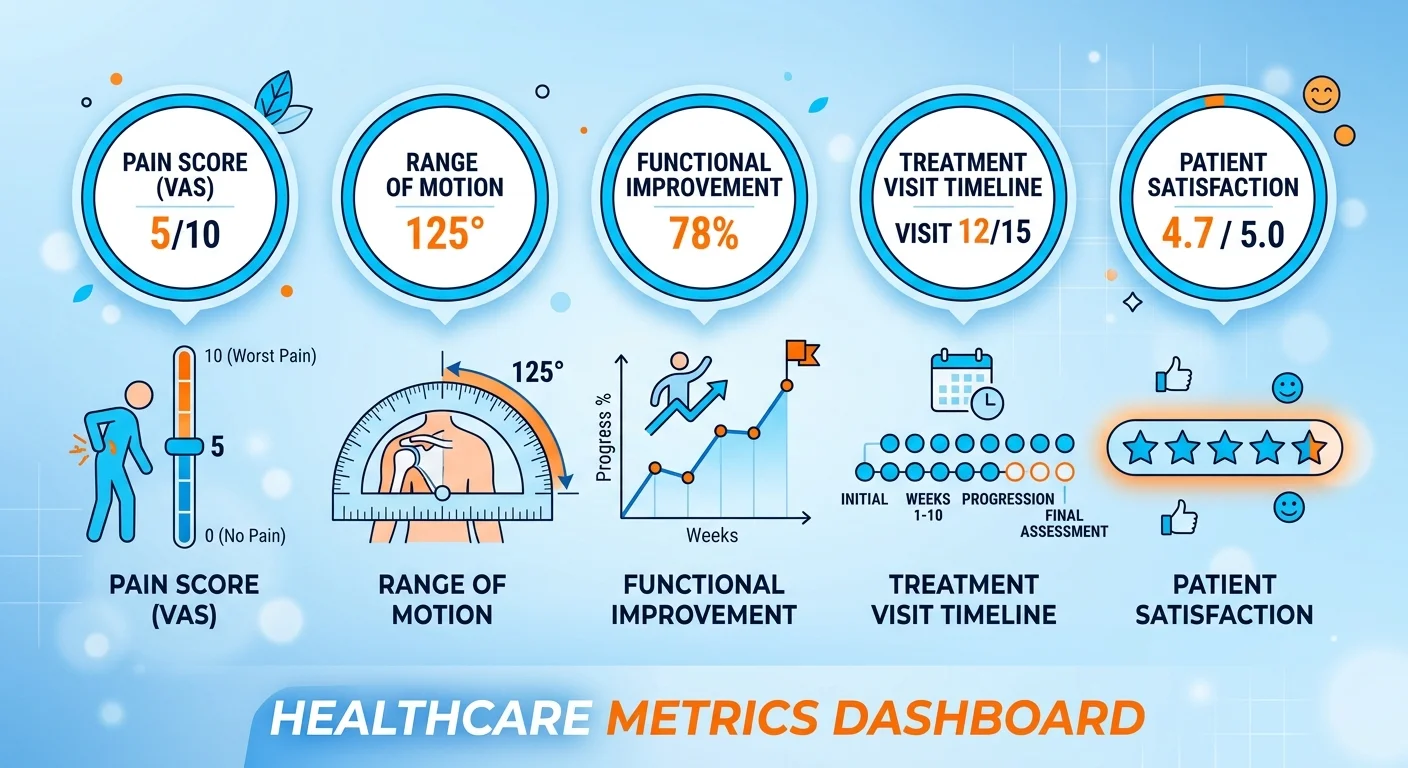

The Five Outcome Metrics AI Can Read

Not all metrics are created equal in the eyes of AI.

Subjective claims like "most patients feel better" don't register. Standardized, measurable, repeatable metrics do.

These five outcome measures are structured, verifiable, machine-readable. If you track and publish any of them, AI can validate your clinical success.

Pain Score Reduction (VAS/NRS)

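The Visual Analog Scale (VAS) and the Numeric Rating Scale (NRS) are the most widely used tools for pain measurement.

On the NRS, the patient rates pain from 0 to 10. The VAS is a marked line that is commonly reported on the same 0-10 scale. You document baseline at intake. Track it at each visit. Publish the pre/post comparison.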

AI trusts this because it's standardized. A 7/10 pain score means the same thing across every practice using the scale. That makes it comparable. Comparable makes it verifiable.

Saying "we reduce pain" is a claim. Publishing "average pain reduction from 7.3/10 to 2.1/10 across 150 low back pain cases" is data.
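The arithmetic behind a statement like that is simple enough to script. The sketch below assumes each case is stored as a (baseline, discharge) pair on a 0-10 scale; the function name `pain_summary` and the four sample cases are made up for illustration.

```python
from statistics import mean


def pain_summary(cases):
    """Summarize (baseline, discharge) pain scores recorded on a 0-10 scale.

    Returns (average baseline, average discharge, percent reduction).
    Assumes the average baseline is greater than zero.
    """
    baseline = mean(b for b, _ in cases)
    discharge = mean(d for _, d in cases)
    percent = (baseline - discharge) / baseline * 100
    return round(baseline, 1), round(discharge, 1), round(percent, 1)


# Hypothetical sample data: four closed low back pain cases.
sample_cases = [(8, 2), (7, 3), (6, 1), (7, 2)]

avg_baseline, avg_discharge, pct = pain_summary(sample_cases)
print(f"Average pain reduction from {avg_baseline}/10 to {avg_discharge}/10 ({pct}%)")
```

Note that the percentage here is computed from the two averages, which is not the same as averaging each patient's individual percentage. Whichever method you pick, state it in the published report.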

Range of Motion Improvement

ROM is objective. You measure it in degrees. Document it at baseline and discharge.

Cervical rotation improved from 45 degrees to 78 degrees. Lumbar flexion improved from 40 degrees to 85 degrees.

AI reads that as verifiable clinical improvement because it's measurable, repeatable, standardized.

You don't need expensive equipment. A goniometer works. Consistent documentation works better.

Functional Improvement Scores

Functional outcome measures like the Oswestry Disability Index (ODI) for low back pain or Neck Disability Index (NDI) for neck pain are validated, peer-reviewed tools.

These aren't subjective. They're scored questionnaires quantifying how much pain impacts daily function.

Patient comes in with ODI 68% (the "crippled" band, 61-80%, one step above severe disability on the standard ODI scale). After 16 visits, scores 14% (minimal disability).

That's verifiable functional improvement AI can validate against published clinical norms.
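For reference, ODI percentages like the 68% and 14% above come from a fixed calculation: ten sections scored 0 to 5, summed, divided by the maximum possible for the sections answered, and multiplied by 100. A minimal sketch, with a hypothetical function name and made-up questionnaire answers:

```python
def odi_percent(section_scores):
    """Score the Oswestry Disability Index as a percentage.

    section_scores: one entry per section, 0-5 each; use None for a skipped section.
    """
    answered = [s for s in section_scores if s is not None]
    if not answered:
        raise ValueError("at least one section must be answered")
    if any(not 0 <= s <= 5 for s in answered):
        raise ValueError("section scores must be between 0 and 5")
    # Maximum possible score is 5 points per answered section.
    return round(sum(answered) / (5 * len(answered)) * 100)


# Hypothetical questionnaire answers for one patient.
intake = [4, 3, 4, 3, 4, 3, 4, 3, 3, 3]      # sums to 34
discharge = [1, 1, 0, 1, 1, 0, 1, 1, 0, 1]   # sums to 7

print(odi_percent(intake), odi_percent(discharge))  # prints: 68 14
```

Skipped sections shrink the denominator rather than counting as zero, which keeps an incomplete questionnaire from looking better than it is.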

Number of Visits to Achieve Result

Treatment efficiency is a trust signal.

If you document achieving specific outcomes in a predictable number of visits, that proves you have a consistent process.

"Average visits to achieve 50% pain reduction in acute low back pain cases: 8 visits over 4 weeks."

That's not marketing. That's clinical process documentation. AI reads it as proof you're not running patients through endless care plans with no endpoint.
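If pain is recorded at every visit, the "visits to 50% reduction" figure can be derived directly from the visit log. The sketch below assumes each case is a list whose first entry is the intake score and whose later entries are per-visit scores; the function name and sample logs are hypothetical.

```python
from statistics import mean


def visits_to_target(scores, target_fraction=0.5):
    """Return the first visit number at which pain has dropped by at least
    target_fraction of the intake score, or None if it never does.

    scores[0] is the intake score; scores[n] is the score recorded at visit n.
    """
    baseline = scores[0]
    if baseline <= 0:
        return None
    for visit, score in enumerate(scores[1:], start=1):
        if (baseline - score) / baseline >= target_fraction:
            return visit
    return None


# Hypothetical per-visit pain logs for three cases.
cases = [
    [8, 7, 7, 6, 5, 4, 4, 3],
    [6, 6, 5, 4, 3, 3],
    [7, 7, 6, 6, 6, 5],  # never reaches a 50% reduction
]

reached = [v for v in (visits_to_target(c) for c in cases) if v is not None]
print("average visits to 50% reduction:", mean(reached))
print("cases that did not reach the target:", len(cases) - len(reached))
```

Reporting the count of cases that never hit the target alongside the average keeps the efficiency number honest.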

Patient-Reported Outcome Measures (PROMs)

NIH-funded initiatives such as PROMIS treat PROMs as direct measures of treatment effectiveness from the patient's perspective.

This isn't a testimonial. It's a structured questionnaire the patient completes at intake and discharge. You publish the data — anonymized, structured, schema-marked.

AI trusts PROMs because they're standardized tools, not anecdotal feedback.

To document chiropractic outcomes AI can validate, you don't need all five metrics. You need one or two tracked consistently and published transparently.

Case Studies vs. Testimonials: The Machine-Readable Difference

Testimonials are stories. Case studies are data.

AI can't verify stories. It can verify data.

This is the single biggest gap between practices AI recommends and practices AI ignores. Invisible practices lean on testimonials because they're easy to collect. Recommended practices publish case studies because they're verifiable.

One sounds nice. The other proves clinical competence.

What Makes a Case Study Machine-Readable

A machine-readable case study has three components:

1. Structured Patient Presentation

Age range, sex, presenting complaint, baseline pain score (VAS/NRS), baseline functional limitation (ROM or ODI/NDI). This is the "before" data.

2. Documented Treatment Protocol

Specific technique used. Frequency of visits. Duration of care. Adjunctive therapies. This proves you have a repeatable process.

3. Measurable Outcome

Post-treatment pain score. Post-treatment ROM or functional score. Number of visits to achieve result. This is the "after" data.

All three sections tagged with schema markup so AI knows what each represents.

That's a machine-readable case study. Verifiable. Comparable. Proof.
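The three components map naturally onto a small data structure. The sketch below is one possible way to hold a case study before it is published; every class and field name is an assumption made for illustration, and the sample values reuse the low back pain case from earlier with the age generalized.

```python
from dataclasses import dataclass, asdict


@dataclass
class Presentation:
    age_range: str
    sex: str
    complaint: str
    baseline_pain: int       # 0-10 VAS/NRS
    baseline_function: str   # e.g. ROM in degrees or ODI/NDI percent


@dataclass
class Protocol:
    technique: str
    schedule: str
    adjunctive: list[str]


@dataclass
class Outcome:
    final_pain: int
    final_function: str
    visits: int


@dataclass
class CaseStudy:
    presentation: Presentation
    protocol: Protocol
    outcome: Outcome


case = CaseStudy(
    presentation=Presentation("40s", "male", "chronic low back pain", 8, "ODI 62%"),
    protocol=Protocol(
        "Cox Flexion-Distraction",
        "3x/week for 4 weeks, then 2x/week for 4 weeks",
        [],
    ),
    outcome=Outcome(2, "ODI 18%", 20),
)

# asdict() gives a nested dict that is easy to render as a page or as structured data.
print(asdict(case))
```

Forcing every case into the same fields is the point: a record with a missing baseline or a missing visit count fails to construct instead of being published half-documented.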

Why Aggregated Statistics Matter More Than Individual Stories

One case study is interesting. Fifty case studies showing the same protocol achieving consistent outcomes is a verifiable pattern.

AI doesn't trust outliers. It trusts patterns.

If you publish one dramatic success story, AI reads it as anecdotal. If you publish quarterly outcome reports showing "treated 120 cervicogenic headache cases over 12 months, average pain reduction from 6.8/10 to 1.9/10, average treatment duration 10 visits," AI reads it as systematic clinical proof.
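A quarterly report of that kind is an aggregation over closed cases, grouped by condition. The following sketch assumes each closed case is a dict with `condition`, `baseline`, `final`, and `visits` keys; those key names, the function name, and the sample records are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def outcome_report(records):
    """Group closed cases by condition and average their outcome metrics."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["condition"]].append(record)

    report = {}
    for condition, rows in grouped.items():
        report[condition] = {
            "cases": len(rows),
            "avg_baseline": round(mean(r["baseline"] for r in rows), 1),
            "avg_final": round(mean(r["final"] for r in rows), 1),
            "avg_visits": round(mean(r["visits"] for r in rows), 1),
        }
    return report


# Hypothetical closed-case records.
records = [
    {"condition": "cervicogenic headache", "baseline": 7, "final": 2, "visits": 10},
    {"condition": "cervicogenic headache", "baseline": 6, "final": 2, "visits": 9},
    {"condition": "cervicogenic headache", "baseline": 7, "final": 1, "visits": 11},
    {"condition": "low back pain", "baseline": 8, "final": 2, "visits": 20},
]

report = outcome_report(records)
for condition, stats in report.items():
    print(condition, stats)
```

Publishing the case count next to each average lets a reader judge how much weight a number deserves; an average over three cases is not the same claim as an average over 120.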

The practices that win this shift are the ones building repositories of verifiable outcomes, not individual hero stories.

Why Testimonial Pages Fail

Traditional practice websites lean on testimonials because they're easy. Collect quotes, slap them on a page, call it social proof.

AI doesn't read testimonials as proof. It reads them as unverifiable anecdotes.

No structure. No data. No corroboration.

The chiropractor who publishes 30 structured case studies with tracked outcome metrics will always outrank the one with 300 testimonials — because AI trusts what it can validate, not what sounds nice.

You can keep the testimonials page. Patients still read it. But if you think testimonials are your authority signal, you're optimizing for the wrong filter.

The filter that decides whether you're visible isn't human anymore. It's a machine. And machines don't trust vibes.

| Element | Testimonial Format | Case Study Format | AI Trust Value |
|---|---|---|---|
| Patient identity | Name or initials, sometimes photo | Fully anonymized (age range, sex, general occupation) | Case study: anonymization doesn't reduce trust |
| Presenting complaint | Vague ("had back pain for years") | Specific (chronic low back pain, 7/10 VAS, limited flexion ROM) | Case study: specificity allows validation |
| Treatment documented | Not mentioned or generic ("got adjusted") | Detailed protocol (Cox Flexion-Distraction 3x/week for 4 weeks) | Case study: proves repeatable process |
| Outcome | Subjective ("feel so much better!") | Measurable (pain reduced to 2/10, ROM improved to 78 degrees) | Case study: objective data AI can verify |
| Schema markup | None | MedicalWebPage, MedicalCondition, Patient schema | Case study: required for machine reading |
| Verification mechanism | None: reader either believes it or doesn't | Cross-referenceable against aggregated data and third-party sources | Case study: AI can validate claims |

Privacy and HIPAA: Publishing Outcomes Without Breaking the Law

Privacy concerns are valid. HIPAA compliance is mandatory.

Anonymization solves both.

You can publish clinical outcomes without violating patient privacy. The key is removing all personally identifiable information while keeping the clinical value intact.

That's not a workaround. That's how peer-reviewed medical journals operate. How clinical research gets published. How healthcare transparency functions at scale.

Your case studies follow the same model.

What HIPAA Actually Requires

Here's the rule: HIPAA's Safe Harbor method lists 18 categories of patient identifiers. Remove all of them, with no actual knowledge that what remains could identify the patient, and the data is no longer PHI.

You can't publish:

- Patient name

- Exact date of birth (ages under 90 may be stated, but an age range such as "mid-40s" is the more cautious choice)

- Dates of service more specific than the year (use the year plus a generalized timeline: "treated over 8 weeks in 2025")

- Address more specific than state

- Phone numbers, email addresses, social security numbers

- Medical record numbers

- Any photos showing the patient's face

What you can publish:

- Age range and sex

- Presenting complaint and baseline metrics

- Treatment protocol

- Outcome metrics

- Geographic region at the state level (HIPAA's Safe Harbor method treats city and street address as identifiers)

Clinical data ≠ patient identity. The outcome data you need to prove expertise doesn't require identifying the patient.

How to Anonymize Case Studies Correctly

A practical check on top of removing the listed identifiers: if someone who knows the patient personally couldn't identify them from the case study, it's anonymized correctly.

Instead of: "John Smith, 46-year-old electrician from Huntington Beach, treated for low back pain starting January 15, 2025."

Use: "Male in mid-40s, electrician, treated for chronic low back pain over 8 weeks in 2025."

Clinical value identical. Patient unidentifiable.

Generalize the occupation if it's too specific. "Construction worker" instead of "crane operator." "Office worker" instead of "VP of Marketing at [Company Name]."

Remove any detail that makes the case uniquely identifiable. Keep every detail that makes the outcome verifiable.
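The generalization steps above can be applied mechanically before a human does the final review. The sketch below is not a compliance tool and does not cover all 18 identifier categories. The field names, the `DROP_FIELDS` set, and the age-band wording are assumptions made for illustration.

```python
def age_band(age):
    """Map an exact age to a coarse band; ages 90 and over are pooled."""
    if age >= 90:
        return "90 or older"
    if age < 10:
        return "under 10"
    decade = age // 10 * 10
    last_digit = age % 10
    position = "early" if last_digit <= 3 else "mid" if last_digit <= 6 else "late"
    return f"{position}-{decade}s"


# Hypothetical field names for direct identifiers that are dropped outright.
DROP_FIELDS = {"name", "date_of_birth", "address", "city", "phone", "email", "mrn"}


def anonymize(record):
    """Return a copy with direct identifiers dropped and age/date generalized."""
    clean = {k: v for k, v in record.items() if k not in DROP_FIELDS}
    clean["age"] = age_band(record["age"])
    clean["start_date"] = record["start_date"][:4]  # keep the year only
    return clean


raw = {
    "name": "John Smith",
    "age": 46,
    "occupation": "electrician",
    "city": "Huntington Beach",
    "start_date": "2025-01-15",
    "complaint": "chronic low back pain",
}

print(anonymize(raw))
```

The script handles the predictable fields. Free-text notes and unusually specific occupations still need a person to read them before anything is published.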

This Is Not for Practices Unwilling to Do the Work

If you're looking for a shortcut that gets you AI visibility without documenting clinical success — this isn't it.

Building a repository of verifiable outcomes takes discipline. You have to track metrics. Anonymize data correctly. Structure it so machines can read it.

If that sounds like too much work, you're not the chiropractor AI is going to recommend.

The ones who win this shift are the ones willing to publish receipts, not vibes. The ones who understand authority isn't claimed — it's documented, structured, verified.

If you want to keep running your practice the way you always have and hope AI figures out you're good — walk away now. This model isn't for you.

But if you're already producing clinical outcomes worth documenting and you're just not structuring them in a way AI can see — you're closer than you think.

Why Publishing "Negative" Outcomes Can Build More Trust

Not every case is a miracle.

Transparency about complexity signals authenticity.

AI values honesty over perfection.

Most chiropractors think publishing anything other than ideal outcomes will hurt their reputation. The opposite is true — especially when competing for AI recommendations.

A practice that only publishes simple success stories signals selective reporting. A practice that transparently documents complex cases, suboptimal outcomes, clinical reasoning in difficult situations signals depth.

AI reads depth as expertise. It reads perfection as marketing.

When a Suboptimal Outcome Demonstrates Expertise

Managing a complex case with co-morbidities, partial improvement, realistic patient expectations shows more clinical competence than pretending every case resolves in three visits.

Example: 58-year-old male with chronic low back pain, diabetes, prior lumbar fusion. Baseline pain 8/10 VAS. After 20 visits over 12 weeks using multimodal care, pain reduced to 5/10. Patient discharged with home exercise protocol and realistic expectation of pain management, not elimination.

That's not failure. That's honest clinical documentation.

A patient reading that case study who has a similar presentation is more likely to trust you — because you're not promising miracles. You're documenting real-world outcomes in complex cases.

AI reads it the same way. It validates your expertise in managing difficult cases. It doesn't penalize you for not achieving perfection.

The Trust Signal of Methodology Over Marketing

Explaining your clinical reasoning process in a difficult case proves you have one.

Marketing hides complexity. Authority documents it.

When you publish a case study explaining why you chose manual therapy over manipulation for a patient with osteoporosis, how you modified the treatment protocol when initial progress plateaued, what functional improvements you achieved despite pain scores remaining elevated — that's expertise.

Surface marketing would bury that case. Authority-level transparency publishes it with full methodology explained.

AI doesn't care if every outcome is perfect. It cares if your process is documented, your reasoning sound, your outcomes verifiable.

Consumer research shows personalization and relevance drive trust. In healthcare, that translates to patients (and AI) wanting proof you can handle their specific problem — not just the easy cases.

Publishing transparent outcomes, even when they're not perfect, proves you understand real-world clinical complexity.

Building Your Outcome Documentation System

The system isn't complex. It's intentional.

You don't need custom software. You don't need a research team. You don't need to become a data scientist.

You need one metric, one case study, one documented protocol — and a commitment to doing it consistently.

Start small. Build the habit. Let the repository compound.

Step 1: Choose Your Primary Outcome Metric

Pick one measurable outcome metric you can track consistently across every patient.

- Pain reduction — VAS or NRS scale (0-10)

- Range of motion improvement — measured in degrees

- Functional improvement — Oswestry (low back), NDI (neck), or other validated tool

You don't need all three. Pick the one most relevant to your patient population and track it at intake and discharge for every case.

That's your baseline verifiable data.

Step 2: Document Treatment Protocols

Write down what you do. Not the philosophy. The process.

- What technique do you use for specific presentations?

- What's your visit frequency for acute vs. chronic cases?

- How do you determine when to discharge?

- What adjunctive therapies do you include and why?

This doesn't need to be a textbook. It needs to be a consistent, documented process proving you're not winging it.

AI reads documented protocols as proof of systematic expertise. Generic descriptions of "holistic care" don't register.

Step 3: Anonymize and Structure Case Studies

Once a month, take 2-3 closed cases and document them as anonymized case studies.

- Patient presentation (age range, sex, baseline metrics)

- Treatment protocol (what you did, how often, for how long)

- Outcome (post-treatment metrics, number of visits to result)

Remove all PII. Add schema markup. Publish on your website.

Repeat monthly.

In 12 months, you'll have 24-36 verifiable case studies. That's more structured clinical proof than 95% of chiropractic practices will ever publish.

To compete in the age of Answer Engine Optimization, you don't need to be the best chiropractor. You need to be the best documenter.

The practices that build entity trust are the ones publishing verifiable proof of what they already do.

| Phase | Action | Output | AI Trust Impact |
|---|---|---|---|
| Phase 1: Foundation (Month 1-2) | Select one outcome metric (VAS, ROM, or functional scale). Document baseline intake process. | Standardized tracking system for every new patient. | Low initially: system being built, not yet published. |
| Phase 2: Protocol Documentation (Month 2-3) | Write treatment protocols for top 3-5 presenting complaints. Include technique, frequency, discharge criteria. | 3-5 documented protocols published on website with schema markup. | Medium: proves systematic approach, not yet verified with outcomes. |
| Phase 3: First Case Studies (Month 3-6) | Publish 2-3 anonymized case studies per month. Track baseline → protocol → outcome. | 6-12 case studies with measurable outcomes. | High: AI can now validate claims with structured proof. |
| Phase 4: Aggregated Data (Month 6-12) | Compile outcome statistics across patient cohorts. Average pain reduction, average visits to result, common co-morbidities. | Quarterly or annual outcome report published. | Very High: pattern validation across multiple cases. AI cites aggregated data as authoritative. |

Frequently Asked Questions

Does clinical transparency violate patient privacy or HIPAA?

No, when done correctly.

HIPAA compliance is achieved through de-identification. Under the Safe Harbor method you remove all 18 categories of identifiers, including name, dates more specific than the year, geographic detail below the state level, contact info, medical record numbers, and photos showing faces.

What remains is clinical data that's completely unidentifiable.

A case study showing "male in mid-40s, construction worker, chronic low back pain reduced from 7/10 VAS to 2/10 after 12 visits using Cox Flexion-Distraction" contains zero PHI. The patient cannot be identified. The clinical outcome is intact.

HIPAA protects patient identity, not clinical methodology or anonymized outcome data. Transparency and privacy coexist when you structure the data correctly.

What kind of chiropractic outcomes can be tracked and published?

The five most verifiable metrics:

- Pain score reduction — VAS or NRS scale (0-10 rating)

- Range of motion improvement — measured in degrees

- Functional improvement scores — Oswestry for low back, NDI for neck, validated tools

- Number of visits to achieve result — treatment efficiency metric

- Patient-Reported Outcome Measures — standardized questionnaires completed at intake/discharge

You don't need all five. Pick one or two most relevant to your patient population and track them consistently.

All of these are measurable, verifiable, machine-readable. AI can validate them. Generic claims like "most patients feel better" can't be validated.

How does AI even know if my clinical data is real?

Four-layer validation stack:

1. Schema markup — You tag the case study data with structured schema so AI knows what each section represents (patient presentation, treatment protocol, outcome).

2. Third-party corroboration — AI cross-references your published outcomes with external sources like Google reviews, Healthgrades ratings, directory listings. If your case studies say you treat sports injuries and your reviews mention the same thing, trust compounds.

3. Semantic density — AI analyzes the depth of your methodology explanation. Surface claims get ignored. Detailed clinical reasoning signals genuine expertise.

4. Entity coherence — AI checks if your published data is consistent across all platforms. If your website, GBP, and third-party profiles all say the same thing, you're validated. If they conflict, you're flagged as inconsistent.

All four have to align. That's how AI distinguishes verifiable clinical proof from marketing copy.
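The schema markup layer is the most mechanical of the four, so it is the easiest to sketch. The example below builds a JSON-LD block for one anonymized case study. The choice of the schema.org types `MedicalScholarlyArticle` and `MedicalCondition` is an assumption made for illustration, as are the `case_study_jsonld` and `to_script_tag` helpers, the clinic name, and the date. The article does not specify which types to use.

```python
import json


def case_study_jsonld(headline, condition, summary, date_published, clinic_name):
    """Build a JSON-LD dict describing one anonymized case study.

    The schema.org types chosen here are one plausible option,
    not the only valid way to mark up a case study.
    """
    return {
        "@context": "https://schema.org",
        "@type": "MedicalScholarlyArticle",
        "publicationType": "Case Reports",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Organization", "name": clinic_name},
        "about": {"@type": "MedicalCondition", "name": condition},
        "articleBody": summary,
    }


def to_script_tag(data: dict) -> str:
    """Wrap the JSON-LD in the script tag that goes into the page HTML."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values; swap in your own anonymized case details.
markup = case_study_jsonld(
    headline="Chronic low back pain: 7/10 to 2/10 in 12 visits",
    condition="Chronic low back pain",
    summary=(
        "Male in mid-40s, construction worker. Pain reduced from 7/10 "
        "to 2/10 after 12 visits using Cox Flexion-Distraction."
    ),
    date_published="2026-01-15",
    clinic_name="Example Chiropractic Clinic",
)
snippet = to_script_tag(markup)
```

The resulting `snippet` is what gets pasted into the case study page. The visible text stays the same. The markup just labels each piece so a machine doesn't have to guess.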

Isn't just having a testimonials page enough for transparency?

No.

Testimonials are anecdotal. AI gives weight to structured, data-driven proof: aggregated outcome statistics and detailed case studies that show a consistent, verifiable process.

A chiropractor with 200 testimonials and zero structured case studies loses to one with 20 case studies and schema markup. The difference isn't volume. It's verification.

Testimonials sound nice. Case studies prove clinical competence.

AI doesn't trust vibes. It trusts data.

You can keep your testimonials page — patients still read it. But if you think that's your authority signal for AI visibility, you're optimizing for the wrong filter.

Will publishing a negative outcome hurt my practice?

No.

Transparently discussing complex cases with suboptimal outcomes builds more trust than pretending every case is simple.

Take a 60-year-old patient with chronic low back pain, diabetes, and prior surgery whose realistic goal is pain management, not elimination. A 50% pain reduction for that patient is a clinical success, even if the outcome isn't perfect.

Documenting that case with full methodology, explaining why you modified the protocol, and showing realistic functional improvement demonstrates expertise in managing difficult situations.

AI values honesty over perfection. It reads transparent complexity as depth. It reads selective reporting of only perfect cases as marketing.

The practices AI recommends are the ones publishing real-world outcomes in real-world patient populations, not cherry-picked hero stories.

Is this something I can set up once and forget?

No.

Authority decays without ongoing execution.

You need monthly case study publication, quarterly outcome reporting, continuous schema validation. This is infrastructure maintenance, not a one-time project.
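"Continuous schema validation" can start as a script that runs against every published case study page. The sketch below uses only Python's standard library to pull JSON-LD blocks out of a page and flag ones that are broken or incomplete. The `REQUIRED_KEYS` checklist and the `check_page` function are assumptions for illustration, not an official standard.

```python
import json
from html.parser import HTMLParser

# Assumed minimum checklist; adjust to whatever your markup should contain.
REQUIRED_KEYS = {"@context", "@type", "headline", "datePublished"}


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every JSON-LD script block in a page."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def check_page(html: str) -> list:
    """Return a list of human-readable problems found in a page's markup."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    problems = []
    if not parser.blocks:
        problems.append("no JSON-LD block found")
    for index, raw in enumerate(parser.blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            problems.append(f"block {index}: invalid JSON")
            continue
        if not isinstance(data, dict):
            problems.append(f"block {index}: expected a JSON object")
            continue
        missing = REQUIRED_KEYS - data.keys()
        if missing:
            problems.append(f"block {index}: missing {sorted(missing)}")
    return problems
```

An empty list means the page passed this particular checklist. It says nothing about whether an answer engine actually uses the markup, only that the markup is present and well-formed.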

The practices that stay visible are the ones that keep publishing. The ones that stop publishing lose ground to competitors who keep executing.

This isn't a 90-day tactic. It's a compounding authority asset that requires consistent monthly work.

How long does it take to see results from clinical transparency?

Authority compounds over time.

The earliest visibility signals typically appear 3-6 months after you start publishing structured case studies consistently. That's when AI engines begin citing your content as a trusted source.

Full recommendation authority — where your name is the first one ChatGPT or Gemini says when someone asks — typically takes 9-12 months of monthly execution.

This is not a 90-day miracle. It's a long-term infrastructure build.

The practices that win are the ones that commit to the timeline and keep executing while competitors quit.

Do I need to hire someone to document outcomes?

You need a system.

A white-glove service like the Local AI Authority Engine handles documentation, anonymization, schema implementation, and monthly case study publishing. You treat patients. The system builds the authority infrastructure.

You don't need to learn schema markup. You don't need to become a technical writer. You don't need to spend hours every week structuring data.

You need someone who understands how AI validates clinical proof and can structure your existing outcomes in machine-readable formats.

If you're invisible to AI right now, it's not because you're a bad chiropractor. It's because your outcomes aren't structured in a way AI can verify.

The verifiable case studies AI recommends don't write themselves. But the infrastructure to create them can be built for you.

Conclusion

The chiropractors AI recommends six months from now are publishing verifiable proof today.

Not marketing copy. Not testimonials. Structured clinical data. Anonymized case studies. Tracked outcome metrics. Documented treatment protocols. The infrastructure that proves to answer engines you are the answer.

This isn't about being a better marketer. It's about being a better documenter. Your clinical success already exists — the question is whether AI can verify it.

Most practices are invisible because they never structured their proof in formats machines can read. The gap between invisible and recommended is clinical transparency.

You don't need to become a different chiropractor. You need to publish receipts for the outcomes you're already producing. That's the authority infrastructure AI trusts.

Every month you wait, a competitor in your market is building the documentation repository AI will cite when patients ask who to trust. That gap doesn't close by accident. It widens.

If you're invisible to AI right now, it's not your clinical skill — it's your infrastructure.

The AI Visibility Check runs your practice through ChatGPT, Gemini, and Grok and shows you exactly what answer engines see when patients ask who to trust. Fifteen minutes. Real data. If the results don't prove the gap exists, walk away. But if they do — you'll know exactly what needs fixing.