What is Consensus Trust? How AI Validates Your Clinic's Reputation

Last Updated: May 11, 2026

- How AI Cross-References Your Reputation
- What Sources AI Uses to Validate Your Identity
- The Entity Resolution Process
- Why Geographic Consistency Matters for Local Practices
- Why Consensus Trust is Not "Just NAP Consistency"
- NAP Was Built for Humans, Consensus Trust is Built for Machines
- The Schema Layer AI Actually Reads
- The Knowledge Graph and Your Clinic's Identity
- How Generalist Positioning Weakens Your Entity Signals
- E-E-A-T and Entity Authority
- Common Consensus Trust Failures (And How to Spot Them)
- Old Addresses and Disconnected Phone Numbers
- Missing or Conflicting Specialty Information
- Inconsistent Business Names Across Platforms
- Why You Can't See These Problems Without an External Audit
- Frequently Asked Questions
- How is consensus trust different from just having good patient reviews?
- What are the most common sources AI uses to build consensus trust?
- Can having an old address on one directory really hurt my AI visibility?
- Is this something I can fix myself over a weekend?
- But doesn't traffic still matter more than consensus trust?
- What's the first step to improving my clinic's consensus trust?
- Conclusion

How AI Cross-References Your Reputation

Your website isn't what AI reads first.

It reads what everyone else says about you first.

That's the part most chiropractors miss. You built a beautiful site. Fast. Modern. Clear messaging. Great photography.

None of it matters if the data surrounding your practice tells a conflicting story.

Or no story at all.

Because AI doesn't trust your website by default. It validates your website against external sources.

That validation process? That's consensus trust.

And if those external sources disagree with each other — or worse, don't exist — AI moves on. It finds a competitor whose signals align. Your beautiful website becomes invisible. Not because of what it says. Because of what the rest of the web doesn't confirm.

What Sources AI Uses to Validate Your Identity

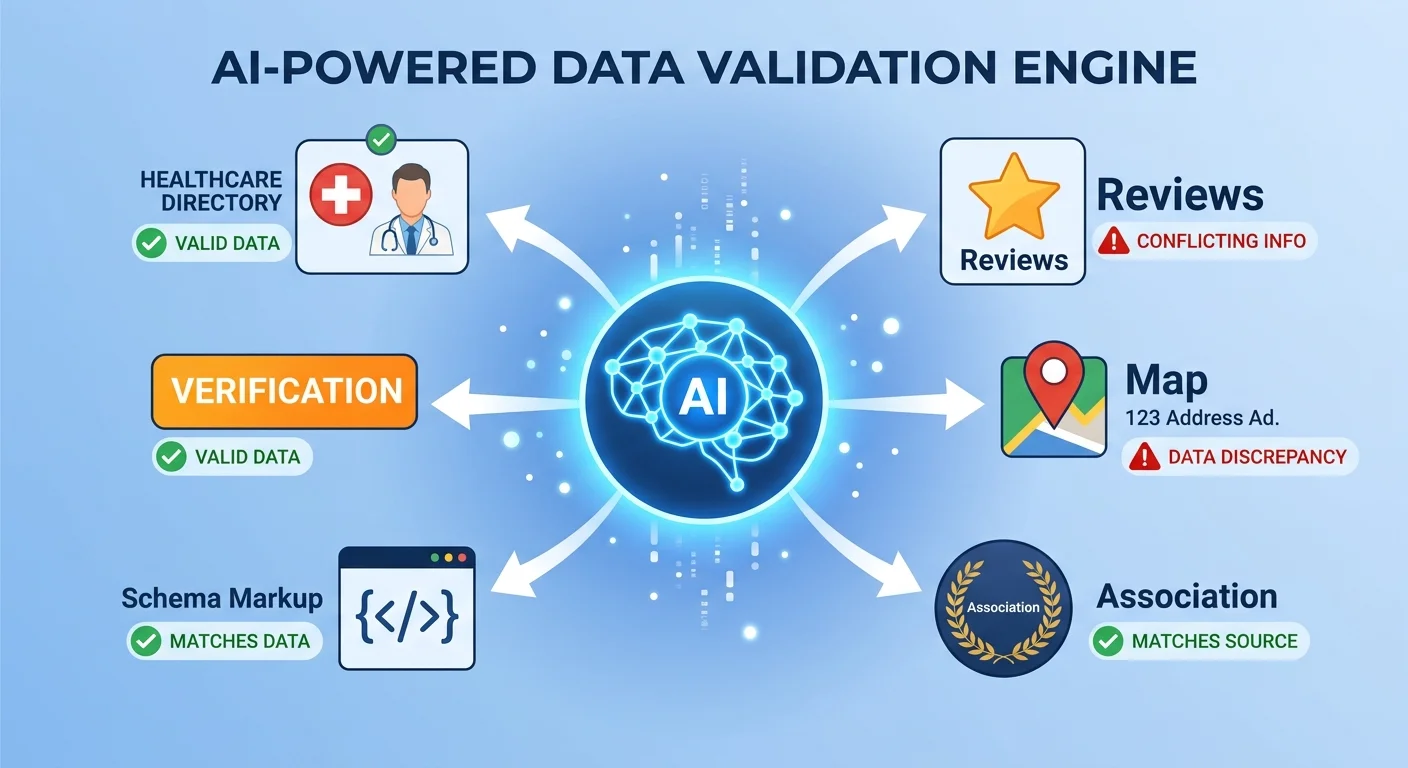

AI doesn't weight all sources equally. There's a hierarchy.

Tier 1 sources carry the most authority. For healthcare practices, that's professional directories like Healthgrades, Zocdoc, and Vitals. Your Google Business Profile. Official member directories from professional associations. These are platforms AI engines already know are authoritative.

When these sources agree on your core entity data — name, address, phone number, services, credentials — AI gains confidence.

Tier 2 sources add depth. Review platforms. Local business directories. Healthcare-specific databases. These validate secondary signals like patient satisfaction, geographic service area, specialty focus.

AI cross-references them against Tier 1 data to build a richer picture of your authority.

Tier 3 sources are your website's structured data. Schema markup tells AI what services you provide, who you are, where you're located — in a format machines can actually read.

But here's the catch.

Your schema only reinforces consensus trust if it matches what Tier 1 and Tier 2 sources already say. If your website claims one specialty and your Healthgrades profile claims another? That's not reinforcement. That's a trust break.

Schema doesn't override external validation. It either confirms it or contradicts it.

Google's Knowledge Graph works this way. Google doesn't just read your website and believe it. It builds a knowledge graph by understanding facts about people, places, and things — and the relationships between them.

Those facts come from multiple independent sources. The more sources that agree, the stronger the graph connection becomes.

| Source Type | Examples | Trust Weight | What AI Verifies From This Source |
|---|---|---|---|
| Tier 1: Professional Directories | Healthgrades, Zocdoc, Vitals, Google Business Profile | High | Core entity data (name, address, phone, credentials, specialty) |
| Tier 2: Review & Local Platforms | Yelp, Facebook, Thumbtack, healthcare-specific review sites | Medium | Patient satisfaction, service area, secondary authority signals |
| Tier 3: Website Structured Data | Schema.org markup on your site | Medium | Services offered, contact info, operating hours (validated against Tier 1/2) |
| Tier 4: Unverified or Low-Authority Sources | Aggregator sites, scraper databases, outdated listings | Low | Often ignored or treated as noise if data conflicts with higher tiers |

If your data is consistent across Tier 1, reinforced by Tier 2, and confirmed by your Tier 3 schema — consensus trust is high.

If any tier contradicts another? Trust drops.

If Tier 1 sources are missing or incomplete? There's no foundation for AI to build on.

That's not theory. That's how entity resolution works.
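
To make the tier logic concrete, here is a minimal sketch of how weighted agreement could be scored for a single attribute such as specialty. The numeric weights and the `consensus_score` function are illustrative assumptions. No AI engine publishes its actual weighting.

```python
from collections import defaultdict

# Hypothetical weights mirroring the High / Medium / Medium / Low column above.
TIER_WEIGHTS = {1: 3.0, 2: 2.0, 3: 2.0, 4: 0.5}


def consensus_score(claims):
    """Return (best_value, share of total weight backing it) for one attribute.

    `claims` is a list of (tier, value) pairs, one pair per source.
    """
    if not claims:
        return None, 0.0  # no sources at all: nothing to build on
    weight_by_value = defaultdict(float)
    for tier, value in claims:
        weight_by_value[value] += TIER_WEIGHTS[tier]
    total = sum(weight_by_value.values())
    best_value = max(weight_by_value, key=weight_by_value.get)
    return best_value, weight_by_value[best_value] / total


# Every source agrees on the specialty.
aligned = [(1, "sports injury"), (1, "sports injury"),
           (2, "sports injury"), (3, "sports injury")]

# One Tier 1 source and the website schema disagree with the rest.
conflicted = [(1, "sports injury"), (1, "family wellness"),
              (2, "sports injury"), (3, "chronic pain")]

print(consensus_score(aligned))
print(consensus_score(conflicted))
```

In this toy model the aligned practice has all of its weight behind one specialty, while the conflicted practice has only half. Same clinic. Very different confidence.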

The Entity Resolution Process

Entity resolution is the computer science concept that powers consensus trust.

It's how machines match records for the same entity across different data sources — even when formatting varies.

Let's say your practice is listed as "Smith Chiropractic Clinic" on Google Business Profile. On Healthgrades, it's "Smith Chiropractic." On Zocdoc, it's "Dr. John Smith, DC."

Those aren't identical strings. But if your address, phone number, and services align across all three, AI can resolve that these are the same entity.

Stanford's research on entity resolution shows this matching process is probabilistic. Not certain. The algorithm assigns confidence scores based on how many data points agree.
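
A rough sketch of what probabilistic matching can look like, using only Python's standard library. The field weights (0.3 / 0.4 / 0.3), the suite number, and the sample listings are assumptions for illustration, not the scoring any particular engine uses.

```python
from difflib import SequenceMatcher


def digits_only(phone):
    """Strip formatting so (555) 123-4567 and 555-123-4567 compare equal."""
    return "".join(ch for ch in phone if ch.isdigit())


def match_confidence(a, b):
    """Score two listings from 0.0 to 1.0. The weights are assumptions."""
    name_sim = SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()
    same_address = float(a["address"].lower() == b["address"].lower())
    same_phone = float(digits_only(a["phone"]) == digits_only(b["phone"]))
    return 0.3 * name_sim + 0.4 * same_address + 0.3 * same_phone


# Hypothetical listings for the same practice.
google = {"name": "Smith Chiropractic Clinic",
          "address": "123 Main Street, Suite 200",
          "phone": "(555) 123-4567"}
zocdoc = {"name": "Dr. John Smith, DC",
          "address": "123 Main Street, Suite 200",
          "phone": "555-123-4567"}
stale = {"name": "Smith Chiropractic",
         "address": "456 Oak Avenue",
         "phone": "555-987-6543"}

print(round(match_confidence(google, zocdoc), 2))  # different name, same address and phone
print(round(match_confidence(google, stale), 2))   # similar name, nothing else agrees
```

The Zocdoc listing scores well despite the different name string, because address and phone carry the match. The stale listing has the closer name and still scores poorly.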

Here's where it breaks.

Let's say Google Business Profile has your current address. Healthgrades has an old address from three years ago. Zocdoc has the right street but the wrong suite number.

AI now has three conflicting location signals. It can't resolve which one is correct.

Confidence score drops. The entity match becomes uncertain.

Now add a disconnected phone number on one platform. A missing specialty designation on another. A website schema that lists services you no longer offer.

AI doesn't see three minor inconsistencies. It sees three pieces of evidence that this entity is unreliable.

And when AI can't validate your identity with confidence, it doesn't cite you. It moves to the next practice. One whose signals align.

This is why an AI Authority Agency focuses on authority infrastructure first. Before you publish content, before you optimize keywords, before you run ads — you fix the foundational data AI uses to determine whether you exist.

If that foundation is shaky, everything built on top of it fails.

Why Geographic Consistency Matters for Local Practices

AI recommendations for chiropractic care are local, not global. That's not a preference. It's a structural requirement: a recommendation is only useful if the patient can actually get to the practice.

When a patient asks ChatGPT or Gemini for a chiropractor recommendation, the AI needs to know two things: where the patient is, and which practices serve that area.

If your address data is inconsistent across platforms, AI can't confidently place you in the right geographic service area. You might be ten minutes from the patient. But if AI sees conflicting location signals, it doesn't take the risk.

It recommends someone else. Someone whose service area is unambiguous.

This is brutal for practices that moved locations and didn't systematically update every listing. The old address lingers. Maybe it's on a healthcare directory you set up five years ago and forgot about. Maybe it's in an aggregator database that scraped outdated data.

Doesn't matter. AI sees both addresses. It doesn't know which one is current.

Geographic consensus breaks. Citation likelihood drops to near zero.

Local consensus trust requires every single platform — Tier 1, Tier 2, Tier 3 — to agree on your current physical location. Not "mostly agree." Fully agree.

One conflicting address is enough to kill the match.
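
Here is a small sketch of an address agreement check. It normalizes formatting first, so "Street" vs. "St." doesn't count as a conflict, then groups sources by what's left. The abbreviation map and the sample addresses are assumptions, and a production normalizer would be far more thorough.

```python
import re

# Small illustrative abbreviation map; real address standards cover many more cases.
ABBREVIATIONS = {"street": "st", "avenue": "ave", "suite": "ste", "road": "rd"}


def normalize_address(raw):
    """Lowercase, drop punctuation, and abbreviate common street words."""
    words = re.sub(r"[^\w\s]", " ", raw.lower()).split()
    return " ".join(ABBREVIATIONS.get(word, word) for word in words)


def group_by_address(listings):
    """Map each normalized address to the sources that report it."""
    groups = {}
    for source, raw in listings.items():
        groups.setdefault(normalize_address(raw), []).append(source)
    return groups


# Hypothetical data: current address, old address, wrong suite number.
listings = {
    "Google Business Profile": "123 Main Street, Suite 200",
    "Healthgrades": "456 Oak Avenue",
    "Zocdoc": "123 Main St., Ste 210",
}

for address, sources in group_by_address(listings).items():
    print(address, "<-", sources)
```

More than one group means the platforms disagree on where you are. Here there are three.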

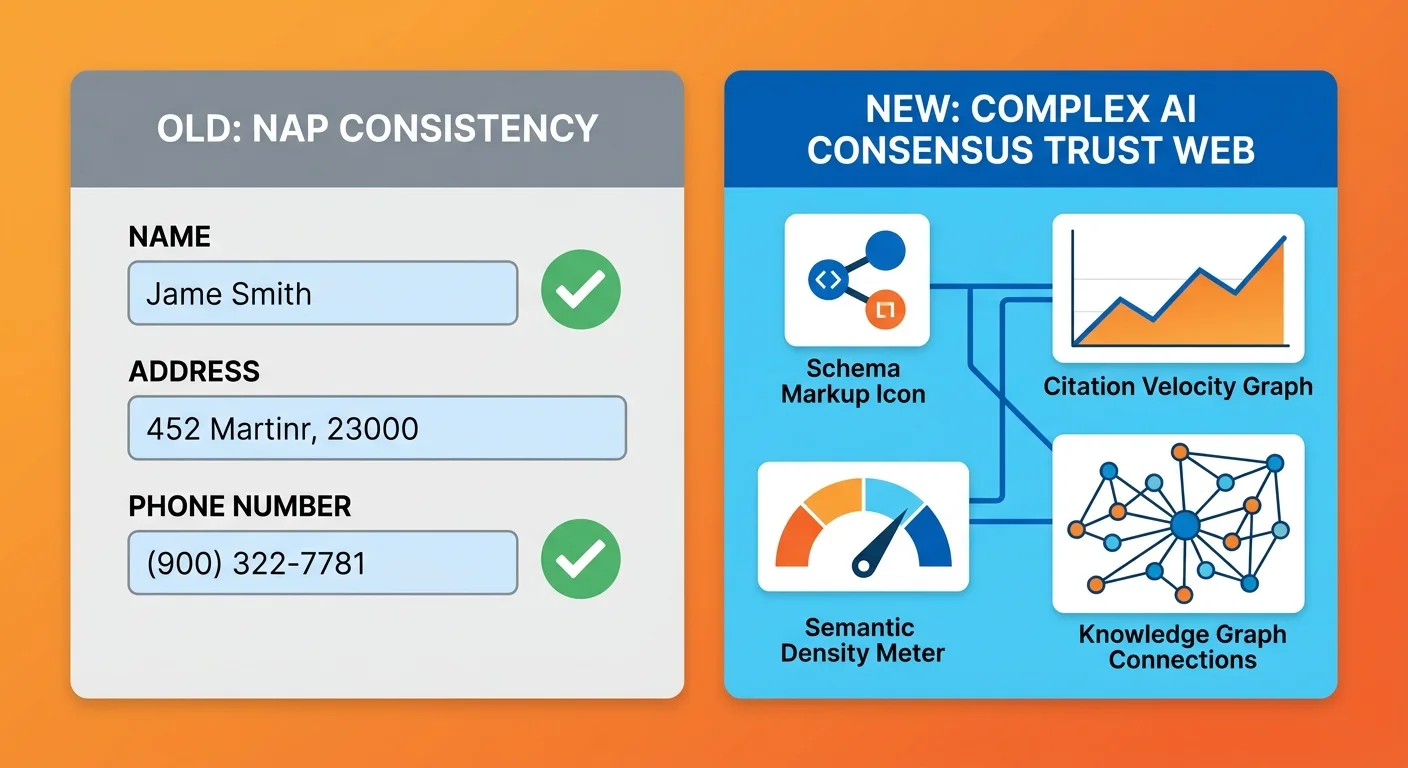

Why Consensus Trust is Not "Just NAP Consistency"

Most chiropractors hear "consistency across directories" and think: yeah, I updated my Google listing. I'm good.

You're not.

NAP consistency — name, address, phone number — was a local SEO ranking factor built for humans reading business directories. It told the algorithm that your business existed and where it was located.

That was enough when the goal was to rank on page one of a search results list. Patients would click through, evaluate options, make a choice.

AI doesn't work that way. It doesn't return a list. It returns a verdict.

One name. One recommendation.

And to make that recommendation confidently, it needs far more than NAP data.

Consensus trust includes NAP. But it also requires schema markup, citation velocity across multiple platforms, semantic clarity about what services you provide and who you serve, and validation of your credentials and specialty from authoritative healthcare sources.

NAP was a checkbox. Consensus trust is an interconnected web of signals AI cross-references before it says your name.

Updating your Google Business Profile and calling it done is like painting the front door of a house with no foundation. It might look better. It doesn't fix the structural problem.

NAP Was Built for Humans, Consensus Trust is Built for Machines

NAP worked because humans could fill in gaps.

If your phone number was missing on one directory but your address matched, a person could still figure out you were the right business.

AI can't fill in gaps. It flags them as data quality issues.

NAP also worked because the algorithm weighted the listing platforms themselves — Yelp, Yellow Pages, local directories. If your NAP appeared consistently on those platforms, the algorithm inferred your business was legitimate.

But AI engines today don't just check that your data exists. They validate what your data says about your authority.

Moz's explanation of local citations was written for the old model. Citations built trust with the ranking algorithm.

Consensus trust builds trust with AI's recommendation algorithm. Different problem. Different solution.

The old NAP model assumed your website was the source of truth and listings reinforced it. Consensus trust assumes listings are the source of truth and your website either confirms or contradicts them.

If your website schema says "sports injury chiropractic" and your Healthgrades profile says "family wellness," AI doesn't know which one to believe. It downgrades both.

The Schema Layer AI Actually Reads

Your website's structured data is a Tier 3 validation source.

Schema.org markup tells AI what services you offer, your credentials, your location, your specialty — in a format machines can parse without ambiguity.

But schema doesn't exist in a vacuum.

Structured data is only valuable if it aligns with what Tier 1 and Tier 2 sources already say. If your schema markup says one thing and your Google Business Profile says another, that's not validation. That's a conflict.

And conflicts erode consensus trust.

Most practices either have no schema markup at all, or they have template-level schema that's too generic to be useful. "LocalBusiness" schema with a name, address, and phone number.

That's not enough.

AI needs MedicalBusiness schema with specialty designation, services offered, and credentials verified against external sources.

And here's the part most people miss: your schema has to stay current.

If you add a new service to your practice but don't update your schema — and that new service appears on your Healthgrades profile but not your website — AI sees a mismatch. Your own website becomes a source of conflicting data.

This is why building AI-readable infrastructure isn't a one-time project. It's an ongoing process of keeping every signal aligned as your practice evolves.
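
As a sketch of what more specific markup might look like, the snippet below builds a `MedicalBusiness` JSON-LD block in Python. Every practice detail is a placeholder. `knowsAbout` and `sameAs` are real schema.org properties, but using them for specialty focus and directory profile links is one reasonable choice, not a documented requirement of any AI engine.

```python
import json

# Placeholder practice data: not a real clinic, and the URLs are examples only.
practice = {
    "@context": "https://schema.org",
    "@type": "MedicalBusiness",
    "name": "Smith Chiropractic Clinic",
    "telephone": "+1-555-123-4567",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Main Street",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
    },
    # Specialty focus, worded the same way as on the external profiles.
    "knowsAbout": ["Sports injury rehabilitation"],
    # Links to the external profiles this markup should agree with.
    "sameAs": [
        "https://example.com/healthgrades-profile",
        "https://example.com/zocdoc-profile",
    ],
}


def to_json_ld_script(data):
    """Wrap a dict in the script tag that holds JSON-LD on a web page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


print(to_json_ld_script(practice))
```

Keeping the data in one place and generating the markup from it makes it harder for the website to drift away from what the directories say.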

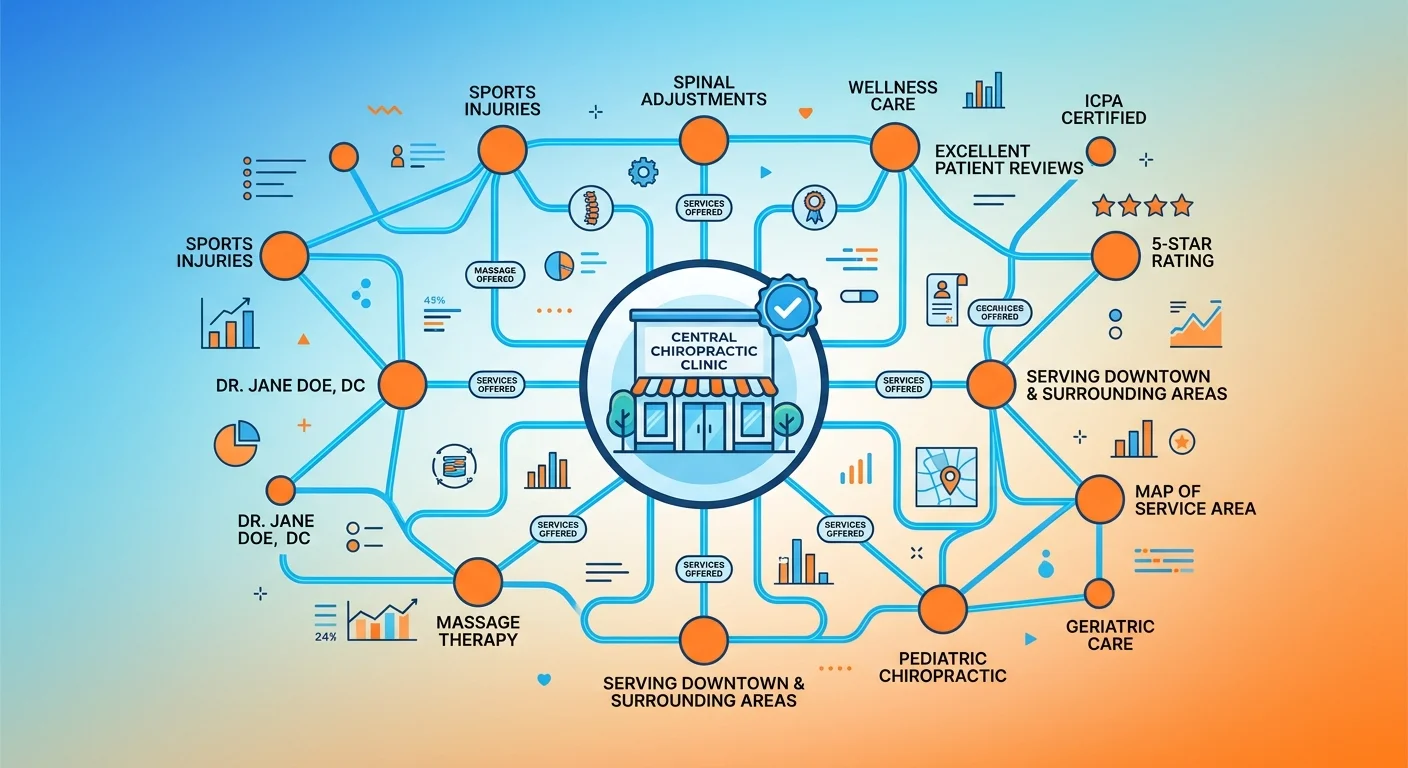

The Knowledge Graph and Your Clinic's Identity

Google's Knowledge Graph is a structured database of entities and their relationships.

Your clinic is an entity node. The graph maps your relationships to services, credentials, locations, specialties, and other entities in your market.

AI doesn't see you as a collection of web pages. It sees you as a node with defined attributes.

When those attributes are clear, consistent, and validated by multiple authoritative sources, your node strengthens. When they're vague, conflicting, or missing — your node weakens.

And weak nodes don't get cited.

This is where most practices fail without realizing it.

How Generalist Positioning Weakens Your Entity Signals

Here's the thing about trying to be everything to everyone: AI can't figure out what you're the authority for.

If your Google Business Profile says "family chiropractic," your website says "sports injury specialist," and your Healthgrades profile says "wellness and pain management," AI doesn't know which specialty to associate with your entity.

Three conflicting signals. No consensus. No citation.

The "Everything to Everyone" Practitioner thinks casting a wide net increases patient volume. It does the opposite. It dilutes authority.

AI is brutally selective. It recommends specialists, not generalists.

A patient asking for a sports injury chiropractor doesn't want someone who "also does sports injuries." They want someone whose entire practice is built around sports injuries. And AI wants to see that focus validated across every platform.

Generalist positioning creates weak, diffuse entity signals. Your knowledge graph node has too many relationships in too many directions.

None of them are strong enough to make AI confident you're the answer for any specific query.

Specialization isn't about turning away patients. It's about making your authority legible to the machines that decide whether to say your name.

E-E-A-T and Entity Authority

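E-E-A-T (Experience, Expertise, Authoritativeness, and Trust) comes from Google's search quality rater guidelines. It was never a single ranking factor, but SEOs long treated it as a ranking concern.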

Now it's a citation factor.

AI validates E-E-A-T by looking for consistent, verifiable signals of expertise across authoritative sources. Not just on your website. Across the web.

Your credentials listed on Healthgrades. Your specialty designation on Zocdoc. Your professional association membership. Your patient reviews on Google. Your published content on external platforms.

If all those sources agree you're an expert in a specific area — sports injury rehabilitation, prenatal chiropractic care, chronic pain management — your E-E-A-T score is high.

If those sources contradict each other or stay silent, your E-E-A-T score is low. And low E-E-A-T means low citation likelihood.

| E-E-A-T Component | How AI Validates It | What Breaks Consensus Trust |
|---|---|---|
| Experience | Patient reviews, case volume indicators, years in practice listed consistently across platforms | Conflicting "years in practice" data, missing review presence, no verifiable patient interaction signals |
| Expertise | Credentials verified on professional directories, specialty focus consistent across all platforms, published content in your field | Generic "chiropractor" designation with no specialty, credentials missing or mismatched, conflicting service claims |
| Authoritativeness | Citations from other authoritative healthcare sources, professional association memberships, content published on high-authority platforms | No external citations, missing association affiliations, no third-party validation of authority |
| Trust | Consensus across Tier 1/2/3 sources, clean entity resolution, no conflicting data | Inconsistent NAP, conflicting specialty claims, missing schema, old/disconnected listings |

E-E-A-T isn't a score you claim. It's a score AI calculates by cross-referencing what everyone else says about you.

If those signals don't align, the score drops. And with it, your visibility.

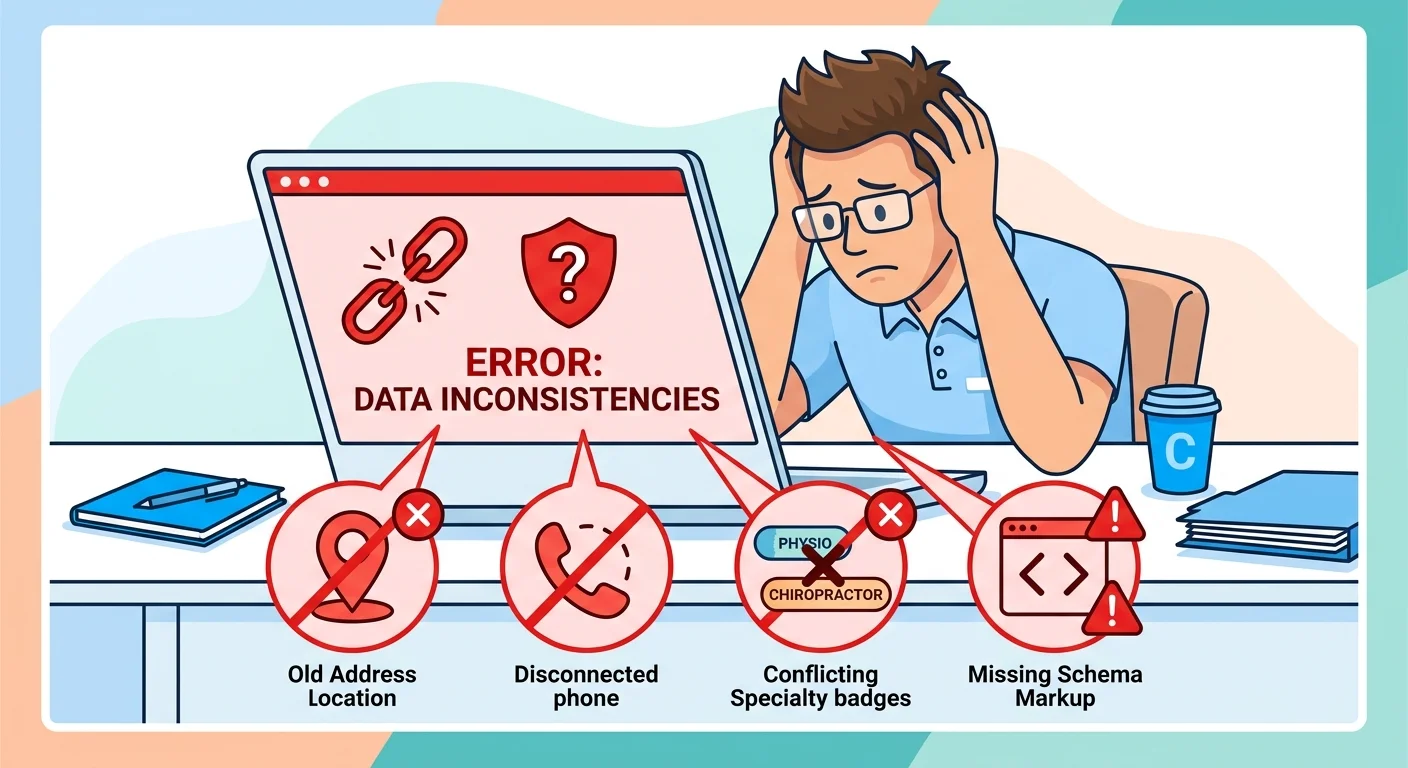

Common Consensus Trust Failures (And How to Spot Them)

Most practices assume their data is clean because they "set it up once."

They claimed their Google listing. Filled out a Healthgrades profile. Built a website. Done.

Then they run an audit and discover their entity signals are a mess.

This section is a diagnostic mirror. These are the problems most chiropractors have right now, and don't notice until they see what AI actually says when someone asks for a recommendation.

Old Addresses and Disconnected Phone Numbers

You moved locations three years ago. Updated your website. Updated Google. Maybe updated Healthgrades.

But Zocdoc still has the old address. So does an aggregator database you didn't even know existed. So does a Yelp listing you created in 2015 and forgot about.

AI cross-references all of them. It doesn't know which one is current. It sees conflicting location data.

Entity resolution fails. Your practice gets flagged as unreliable. Citation likelihood drops.

Same problem with phone numbers. Maybe you switched to a new office line. Updated your website. Forgot to update Vitals. Or Thumbtack. Or an old directory listing someone else published on your behalf years ago.

One outdated listing creates a conflict that breaks entity resolution. AI can't tell which data point is correct. So it loses confidence in all of them.
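
A quick sketch of how phone data can be compared across listings: strip the formatting first, then look for the source that disagrees. The `normalize_phone` and `phone_outliers` helpers and the sample numbers are assumptions for illustration. Note that the majority value isn't guaranteed to be the current one. It only shows where the disagreement is.

```python
from collections import Counter


def normalize_phone(raw, default_country="1"):
    """Reduce a US-style number to +<country><digits>, ignoring punctuation."""
    digits = "".join(ch for ch in raw if ch.isdigit())
    if len(digits) == 10:  # no country code given
        digits = default_country + digits
    return "+" + digits


def phone_outliers(listings):
    """Return the sources whose number differs from the most common one."""
    normalized = {source: normalize_phone(raw) for source, raw in listings.items()}
    majority, _ = Counter(normalized.values()).most_common(1)[0]
    return sorted(source for source, number in normalized.items() if number != majority)


# Hypothetical listings: two formats of the current line, one old number.
phones = {
    "Website": "(555) 123-4567",
    "Google Business Profile": "555-123-4567",
    "Vitals": "555.987.6543",
}

print(phone_outliers(phones))
```

The two differently formatted copies of the current number normalize to the same value. The old line on Vitals is the one that stands out.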

Missing or Conflicting Specialty Information

This is where generalist positioning kills you.

Your Google listing says "chiropractic care." Your Healthgrades profile says "sports injury and wellness." Your website says "chronic pain management." Your Zocdoc bio says "family chiropractic."

AI reads those and tries to figure out what you're an authority for. It can't.

Four different platforms, four different specialty claims. No consensus.

And when there's no consensus, AI defaults to silence. It doesn't cite you for any of those specialties. Because it's not confident you're the clear authority for any of them.

Vague specialty claims are just as bad. "Chiropractic care" tells AI nothing. Every chiropractor provides chiropractic care.

What's your differentiation? What makes you the answer instead of the practice down the street?

Specialty clarity requires specificity. And that specificity must appear consistently across every platform AI cross-references.

Inconsistent Business Names Across Platforms

Let's say your legal business name is "Smith Chiropractic Clinic, Inc."

Your Google Business Profile says "Smith Chiropractic Clinic." Your website header says "Smith Family Wellness." Your Healthgrades listing says "Dr. John Smith, DC."

To a human, those all clearly refer to the same practice. To AI, they look like four different entities. Especially if other data points also vary slightly.

Entity resolution works by matching multiple signals. Name is one of them.

If your name formatting is inconsistent, AI has to rely more heavily on other signals like address and phone number to confirm the match. If those signals also vary, the match confidence drops below the citation threshold.

Consistent business name formatting across all platforms isn't pedantic. It's structural.

AI needs the same name string to appear everywhere. Or at least close enough that the algorithm can resolve the variations with high confidence.
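
To see why "close enough" has limits, here is a sketch that strips legal suffixes and then measures string similarity with Python's `difflib`. The suffix list and the `name_similarity` helper are assumptions, and real entity resolution systems use richer methods than a single string ratio.

```python
import re
from difflib import SequenceMatcher

# Illustrative list of legal suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"inc", "llc", "pllc", "pc", "ltd"}


def normalize_name(raw):
    """Lowercase, drop punctuation, and remove legal suffixes."""
    words = re.sub(r"[^\w\s]", " ", raw.lower()).split()
    return " ".join(word for word in words if word not in LEGAL_SUFFIXES)


def name_similarity(a, b):
    """Similarity of two business names from 0.0 to 1.0 after normalization."""
    return SequenceMatcher(None, normalize_name(a), normalize_name(b)).ratio()


legal_name = "Smith Chiropractic Clinic, Inc."
for listed_name in ["Smith Chiropractic Clinic",
                    "Smith Family Wellness",
                    "Dr. John Smith, DC"]:
    print(listed_name, round(name_similarity(legal_name, listed_name), 2))
```

Dropping "Inc." is an easy variation to resolve. "Smith Family Wellness" is not, so the match has to lean on address and phone instead.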

Why You Can't See These Problems Without an External Audit

Here's the brutal part: you can't audit this yourself by clicking through your own listings.

Because you already know you're looking at your own practice. Your brain fills in the gaps.

You see "Smith Chiropractic" on one site and "Smith Family Wellness" on another and you know they're the same business. AI doesn't know that. It treats them as separate data points that have to be matched algorithmically.

You also can't see the platforms you forgot about. The aggregator databases that scraped your old address. The directory someone else created on your behalf in 2012. The review site you signed up for once and never updated.

Entity signals decay over time. Platforms change. Data drifts.

What was accurate two years ago might be wildly inconsistent today. And you'd never know unless you systematically audit every platform AI cross-references.

That's what an external diagnostic such as an AI Visibility Check does. It gives you a clear view of what ChatGPT, Gemini, and Perplexity actually see when they try to validate your entity.

Not what you assume they see. What they actually see.

| Data Point to Check | Where to Verify | Red Flag Indicators | Impact on AI Trust |
|---|---|---|---|
| Business Name | Google Business Profile, Healthgrades, Zocdoc, Vitals, website header, schema markup | Variations like "Smith Chiropractic" vs. "Smith Family Wellness," inconsistent use of legal entity name | Weak entity match, AI can't confidently resolve that all listings refer to same practice |
| Address | All Tier 1/2 directories, Google Maps, website footer, schema markup | Old address on any platform, suite number inconsistencies, conflicting city/ZIP data | Geographic trust breaks, AI can't place practice in correct service area |
| Phone Number | All listings, website contact page, schema markup | Disconnected numbers, inconsistent formatting (e.g., (555) 123-4567 vs. 555-123-4567), old landlines | Contact validation fails, AI flags entity as outdated or unreliable |
| Specialty Focus | Google Business Profile categories, Healthgrades specialty tags, Zocdoc filters, website meta descriptions | Generic "chiropractic care" vs. specific "sports injury rehabilitation," conflicting specialty claims across platforms | AI can't determine what you're an authority for, no citations for any specialty |
| Credentials | Healthgrades provider profiles, state licensing board listings, professional association directories | Missing credentials, unlisted board certifications, no professional affiliations visible | E-E-A-T score drops, AI questions expertise and authoritativeness |
| Schema Markup | Website source code (view page source, search for "application/ld+json") | Missing schema, generic LocalBusiness instead of MedicalBusiness, data in schema contradicts external listings | Tier 3 validation fails, AI sees website as unreliable or non-authoritative |

If any row in that table shows red flags — your consensus trust is broken.

And if you can't even answer "where to verify" for most of those rows, you need an audit before you do anything else.
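
The schema row can be checked without reading page source by hand. This sketch uses Python's built-in `html.parser` to pull every `application/ld+json` block out of a page and report its `@type`. The `JsonLdExtractor` class and the sample page are assumptions, and the sketch only handles JSON-LD blocks that contain a single object.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of every application/ld+json script tag."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self._buffer = []
        self.items = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_json_ld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_json_ld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_json_ld:
            self._in_json_ld = False
            try:
                self.items.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # unparseable markup is skipped here, though it is a red flag


def extract_json_ld(html):
    """Return a list of parsed JSON-LD objects found in an HTML string."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    return parser.items


# Hypothetical page with template-level schema.
page = """<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "LocalBusiness",
 "name": "Smith Chiropractic Clinic"}
</script>
</head><body></body></html>"""

types = [item.get("@type") for item in extract_json_ld(page) if isinstance(item, dict)]
print(types or "no schema found")
```

An empty result means no schema at all. A bare `LocalBusiness` is the generic case described in the table.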

Frequently Asked Questions

How is consensus trust different from just having good patient reviews?

Good reviews contribute to reputation signals, but consensus trust is about the AI's confidence in your clinic's factual identity — who you are, where you are, what you do.

AI must verify your entity first before it can weight what patients say about you.

Think of it this way: if AI can't resolve your entity with confidence, it doesn't trust the reviews either. Are those reviews for "Smith Chiropractic Clinic" or "Smith Family Wellness"?

If your entity signals are conflicting, AI doesn't know. So it can't reliably associate the reviews with your practice.

Strong reviews on top of weak entity signals still result in low citation likelihood. The reviews don't fix the foundation. They sit on top of a broken structure and AI ignores both.

What are the most common sources AI uses to build consensus trust?

AI prioritizes high-authority healthcare directories like Healthgrades, Zocdoc, and Vitals. These are Tier 1 sources. Business listings like Google Business Profile also carry high weight.

Tier 2 sources include review platforms like Yelp and Facebook, local business directories, and healthcare-specific databases that validate secondary signals like patient satisfaction and service area.

Tier 3 is your website's structured data — schema markup that tells AI what services you offer, your credentials, your location. But schema only reinforces trust if it matches what Tier 1 and 2 sources already say.

The more of these sources that agree on your core entity data, the stronger your consensus trust becomes.

One source saying something doesn't build trust. Ten sources saying the same thing? AI takes that seriously.

Can having an old address on one directory really hurt my AI visibility?

Yep. Because data inconsistency erodes trust.

An old address is a conflicting signal that forces AI to question which data point is correct. AI doesn't ignore outliers. It treats them as evidence that the entity data is unreliable.

When AI encounters conflicting information, it downgrades confidence in all related data.

Think about how entity resolution works. AI matches records probabilistically.

If nine sources say 123 Main Street and one source says 456 Oak Avenue, AI has to decide: is that one source outdated, or did the practice move and the other nine sources haven't updated yet?

It can't know. So it flags the entire entity as uncertain.

Lower confidence score means lower citation likelihood. One outdated listing — even one you forgot existed — can be the difference between getting cited and getting ignored.

Is this something I can fix myself over a weekend?

You can correct individual listings. That's not hard.

But achieving true consensus trust requires a systematic audit and rebuild of your authority infrastructure. That includes website schema markup, citation velocity across multiple platforms, semantic clarity in your positioning, and ongoing monitoring to catch data drift before it breaks your entity signals.

This is structural work, not a checklist task.

You're not just updating a few fields. You're engineering a coherent, machine-readable identity that AI can validate with confidence across dozens of sources.

Most practices that try to DIY this miss platforms they didn't know existed. Or they update schema incorrectly. Or they fix their Google listing but leave Healthgrades and Zocdoc inconsistent.

The result is partial consensus. Which AI reads as weak consensus.

Partial consensus is often worse than no presence at all, because it signals to AI that your data quality is poor.

But doesn't traffic still matter more than consensus trust?

Traffic mattered when patients evaluated options from a list of search results.

In a zero-click search world, the list doesn't exist. AI gives the answer. One name. One recommendation.

If you're that name, you get the patient. If you're not, the traffic from that query goes to zero.

High-volume traffic isn't useless. But it's becoming less predictive of growth.

Because every month, more patient searches shift from "show me a list" to "tell me who to call." And when AI answers that question, traffic metrics don't matter.

Citation likelihood matters.

Being the cited answer in a zero-click AI response is more valuable than being one of ten links on a search results page that fewer patients are even seeing.

The shift is already happening. Waiting until it's obvious means you're competing for citation spots that early movers already claimed.

Consensus trust isn't future-proofing. It's present-day infrastructure.

What's the first step to improving my clinic's consensus trust?

The first step is a diagnostic to see where your entity signals are inconsistent, missing, or contradictory across the platforms AI cross-references.

An AI Visibility Check audits your presence across ChatGPT, Gemini, and Perplexity. You get a clear baseline.

What do these engines say when someone asks who to trust in your market? Do they name you? Do they name a competitor? Do they return generic answers because they can't resolve any local entity with confidence?

The diagnostic creates a gap map. You see exactly which data points are breaking consensus trust.

Old address on Healthgrades. Missing specialty designation on Zocdoc. Conflicting schema on your website. Disconnected phone number on a directory you forgot about.

Once you know where the breaks are, you can fix them systematically. But trying to fix consensus trust without a diagnostic is like trying to repair a foundation you can't see.

You're guessing. And guessing doesn't build authority.

Conclusion

Consensus trust isn't a new name for an old tactic.

It's a foundational requirement of how AI validates identity in a zero-click search world.

The practices that understand this — and act on it — are building authority assets that compound over time. Every month of clean, consistent entity signals strengthens the AI's confidence.

Every platform that agrees on your specialty, your credentials, your service area adds another validation layer.

That trust doesn't reset when the AI updates its model or a new engine launches. It persists. It grows.

The practices that don't understand this are letting competitors claim the citation spots that used to be neutral ground. Because AI doesn't split recommendations.

It names one practice.

And if your entity signals are weak, conflicting, or missing — that one practice is never you.

This isn't marketing. It's infrastructure.

Your real-world reputation already exists. Patients trust you. Referrals come in. Your practice works.

But the machines that now act as gatekeepers for new patients can't see any of that. Because the data they use to validate your identity is a mess.

Making your reputation legible to AI isn't about gaming an algorithm. It's about making sure the truth about your practice is told consistently, clearly, and authoritatively across every platform AI cross-references.

That's consensus trust.

And in a world where AI gives one answer instead of ten options, it's the only trust that matters.

Doing nothing is a choice. It's a choice to let practices with cleaner signals take your patients.

Every month you wait, the gap widens. The practices building consensus trust today are the ones AI will cite six months from now.

The question isn't whether this shift is happening. It's whether you'll be the answer when it does.

Before you can build consensus trust, you need to know where your entity signals are breaking.

Most practices assume their data is clean because they "set it up once." But platforms change. Old listings persist.

And the machines cross-referencing your reputation don't care about your assumptions. They care about what the data actually says.

Fifteen minutes for an AI Visibility Check. Real data from ChatGPT, Gemini, and Perplexity.

You'll see exactly what AI engines say when someone asks who to trust in your market. If the results show clean, consistent signals — great.

If they show gaps, conflicts, or silence — you'll know exactly what needs to be fixed.

No guesswork. No assumptions. Just a clear baseline.