The AI Hallucination Problem: Why AI Invented a Founder for My Business

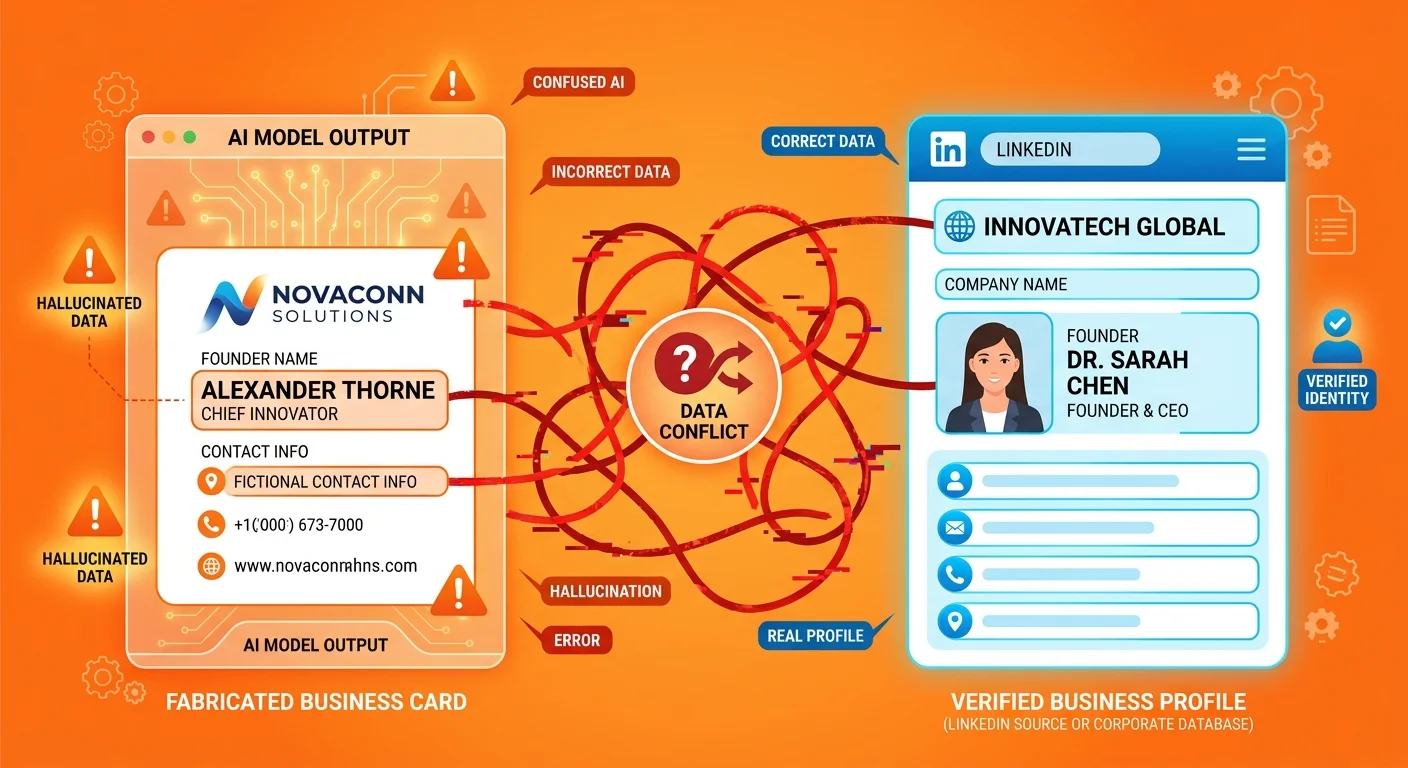

An AI hallucination occurs when a large language model generates information that is factually incorrect but presents it with complete confidence. These errors happen because AI models construct responses by identifying patterns in their training data rather than consulting verified databases of truth. When a business lacks clear, consistent, and authoritative digital signals across the web, AI engines are forced to infer or synthesize information from fragmented or contradictory sources. This creates significant risk for businesses whose online presence consists of scattered data points rather than structured, machine-readable authority infrastructure.

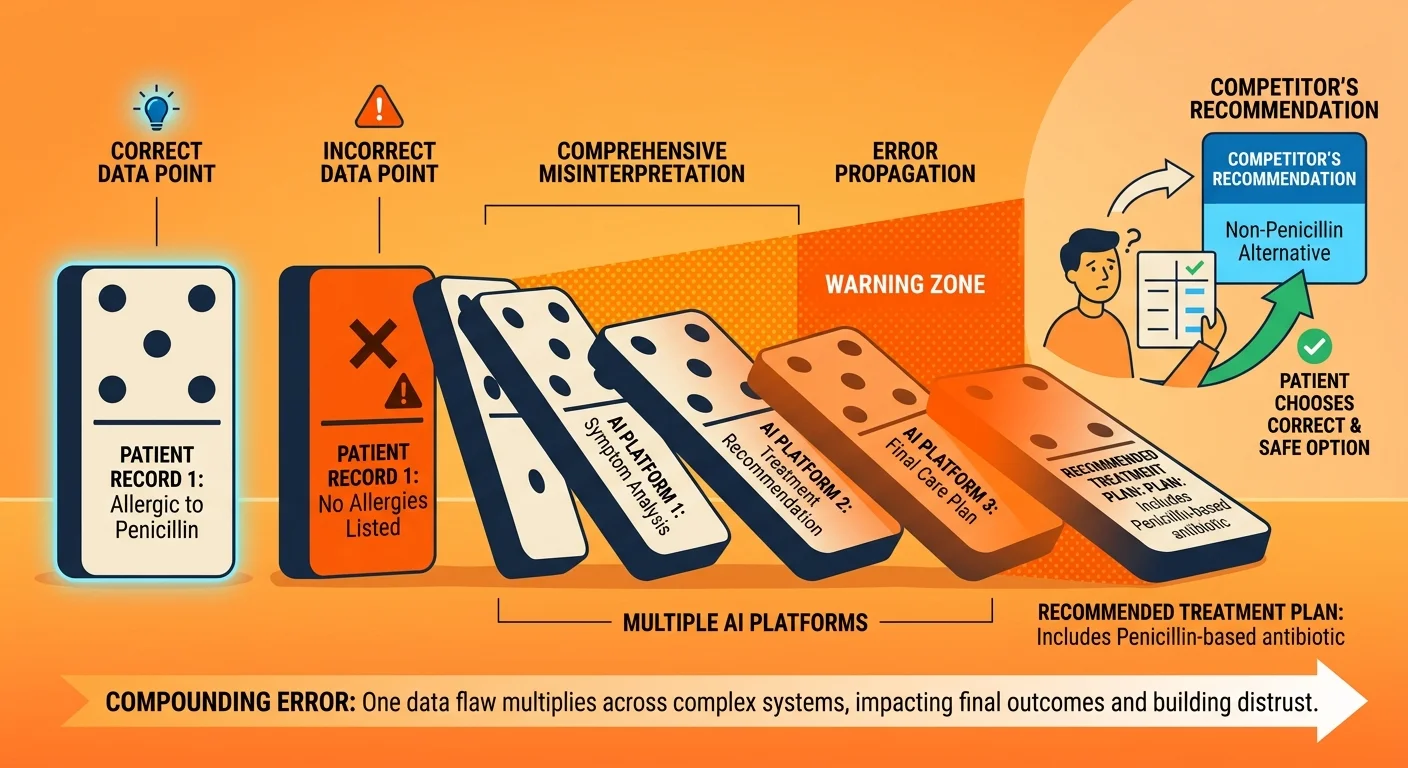

A common form of business-related AI hallucination involves basic entity information such as founder names, addresses, service offerings, or credentials. AI models pull from multiple sources simultaneously and may conflate information from similar businesses, outdated directories, or unreliable third-party sites. When your digital footprint lacks the schema markup, citation consistency, and entity validation signals that AI engines use to determine trustworthiness, the model fills gaps with plausible-sounding fabrications. These hallucinations can compound over time: as newer AI models train on web content that includes outputs from earlier models, incorrect information can become more deeply embedded in what the AI "knows."

The solution requires rebuilding your business's authority infrastructure using structured data formats, consistent citations across verified platforms, and content hierarchies that establish clear entity relationships. This is not a website redesign project or a traditional SEO campaign focused on keyword optimization. Authority infrastructure engineering addresses the machine-readable signals that determine whether AI engines trust your entity data enough to cite it accurately. Without this foundation, every query about your business becomes an opportunity for the AI to generate incorrect information that patients will accept as fact because the source appears authoritative.

Last Updated: May 11, 2026

- Why AI Invented a Founder Named "Michael Walen" for Our Business
- What AI Hallucinations Actually Are and Why They Happen
- The Three Entity Signals AI Uses to Determine What's True
- Why a Pretty Website Doesn't Prevent AI from Making Things Up
- The Cascading Business Consequences of Entity Drift
- How to Check If AI Is Hallucinating About Your Practice
- FAQ
  - What is an AI hallucination in simple terms?
  - How can I find out if AI is saying wrong things about my business?
  - Why does AI trust some business information more than other information?
  - Can I just email Google or ChatGPT to fix incorrect information about my practice?
  - Isn't this just a rare technical glitch that doesn't really affect patient acquisition?
  - What is the very first step to fixing my business's AI identity?
- Conclusion

Why AI Invented a Founder Named "Michael Walen" for Our Business

Here's what nobody tells you about beautiful websites: if AI can't read them, they're expensive business cards.

They'll look professional. They'll convert the people who already know your name. They won't do a damn thing when a patient asks ChatGPT who the best chiropractor in their area is.

I know this because it happened to us.

At least one major AI engine decided the founder of iTech Valet was someone named Michael Walen.

Not me. Not Gerek Allen. Michael Walen.

We caught it during routine testing, the kind of AI Visibility Check most practices have never run. When asked directly who founded iTech Valet, the AI answered with complete confidence and cited a name that appears nowhere in our business filings, on our website, or in our verified profiles.

No one named Michael Walen has ever been connected to iTech Valet. And yet an AI engine trusted that fabricated identity enough to present it as verified fact.

The AI Didn't "Glitch" — It Filled a Gap

This wasn't random. It was a systematic inference failure.

AI models don't guess randomly when they hit gaps in data. According to "On the Dangers of Stochastic Parrots," a 2021 paper presented at ACM's FAccT conference, large language models stitch together patterns from their training data without any reference to meaning or factual reality. When the AI encountered weak entity signals for iTech Valet (scattered data, missing schema, conflicting directory listings), it generated a plausible name to complete the pattern.

That's how pattern-matching systems behave when your infrastructure is weak.

The model found fragments. It recognized the structure: business name, founder field, industry context. It filled the blank with "Michael Walen," most likely because that name fit the statistical pattern of founder names in similar contexts.

Plausible. Confident. Completely made up.

What This Means for Your Chiropractic Practice

If this happened to an AI Authority Agency whose entire business model is engineering AI visibility, you're far more vulnerable.

You're not monitoring AI citations. You're not testing what ChatGPT says when patients ask for recommendations. You're assuming your professional-looking website means AI understands who you are.

It doesn't.

The founder hallucination proves that "looking professional online" prevents nothing. Your site can look perfect to human eyes while being structurally invisible to AI. The infrastructure problem stays hidden until AI cites you incorrectly — or worse, doesn't cite you at all.

What AI Hallucinations Actually Are and Why They Happen

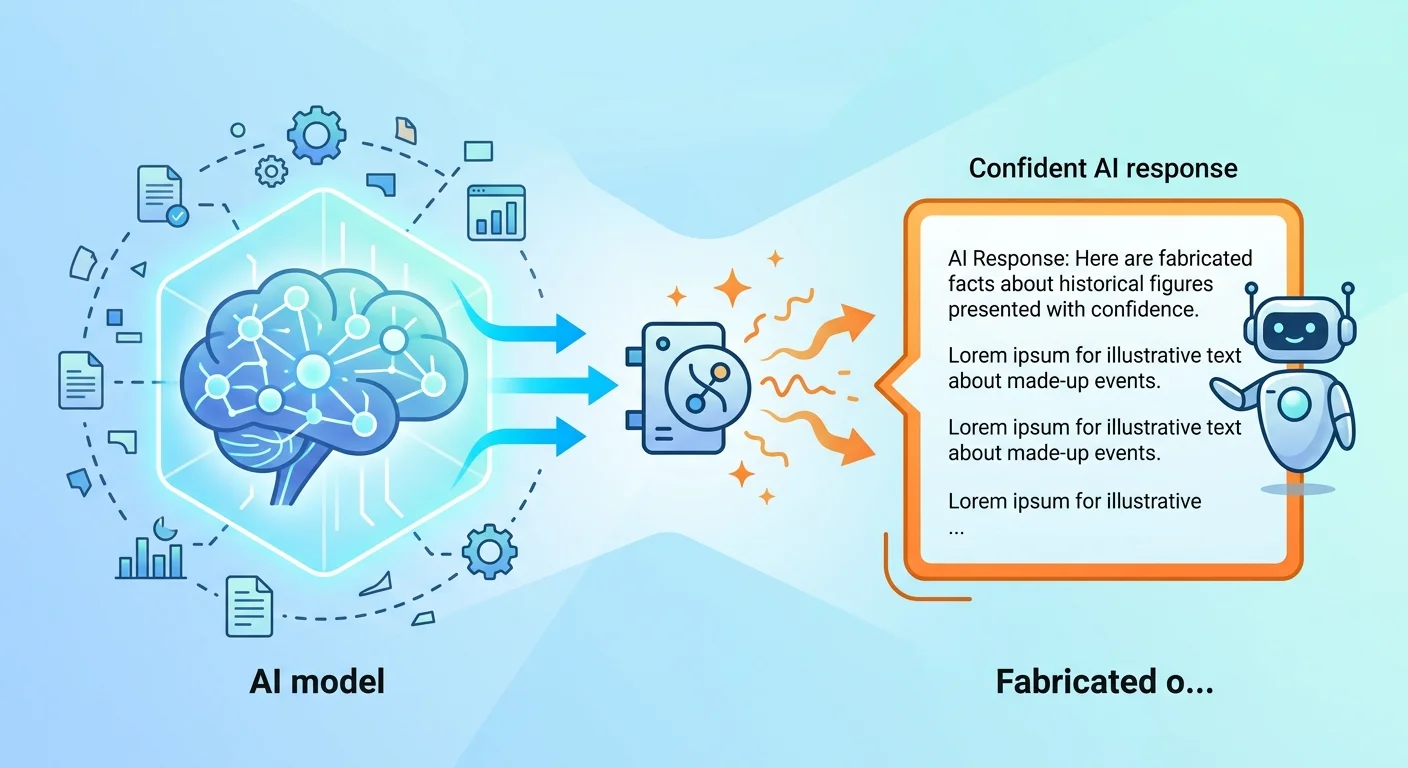

AI hallucinations aren't glitches. They're not rare bugs that get patched.

They're a fundamental behavior that follows directly from how these systems work.

Large language models construct answers by predicting the next most likely word based on patterns in their training data. On their own, they don't consult fact databases or verify claims against authoritative sources in real time. They generate text that statistically resembles correct information, based on the billions of examples they've seen before. Engines that add live web retrieval still blend what they retrieve with these learned patterns, so weak or conflicting sources still produce unreliable answers.

According to Gartner's analysis of AI hallucination risks, these fabrications are a top concern for enterprise AI adoption. When a patient asks ChatGPT about chiropractors and receives incorrect information about your credentials or services — that patient treats it as fact.

The source looks authoritative. The response sounds confident. The fabrication becomes truth in their decision-making.

Why AI Engines Don't "Know" Facts the Way Humans Do

LLMs are pattern-matching systems. They identify statistical relationships between words based on frequency and proximity in training data.

When asked a question, the model generates the most probable continuation of that sentence based on examples it's seen. If your entity data is weak, contradictory, or missing, the AI fills gaps with plausible-sounding fabrications that match the pattern.
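To make "the most probable continuation" concrete, here is a deliberately tiny sketch in Python: a bigram model that only counts which word follows which in a handful of invented sentences. Real LLMs are vastly larger neural networks working on tokens, so treat this as an analogy, not a description of any AI engine. The corpus and every name in it are hypothetical. Notice that the model has never seen "iTech Valet," yet it still fills the founder slot with a confident, specific name.

```python
from collections import Counter, defaultdict

# Hypothetical "training data": founder sentences for other, unrelated businesses.
CORPUS = [
    "acme dental was founded by david miller",
    "bright spine was founded by david miller",
    "metro physio was founded by david chen",
    "peak wellness was founded by sarah lee",
]


def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def complete(counts, prompt, max_words=2):
    """Greedily append the most frequent next word; no fact is ever checked."""
    words = prompt.lower().split()
    for _ in range(max_words):
        options = counts.get(words[-1])
        if not options:
            break  # nothing learned after this word, so stop
        words.append(options.most_common(1)[0][0])
    return " ".join(words)


model = train_bigrams(CORPUS)
print(complete(model, "iTech Valet was founded by"))
```

The output is "itech valet was founded by david miller": a fluent answer assembled entirely from other businesses' patterns, which is the same gap-filling behavior described above.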

Your website says you specialize in sports injuries. An outdated directory lists you as a general practitioner. Your Google Business Profile has incomplete service listings. The AI sees three versions of your identity and picks the one that appears most frequently — or invents a fourth that statistically fits.

| Information Type | Human Process | AI Model Process |
|---|---|---|
| Founder Name | Reads About page, verifies business filings | Identifies pattern: [Business Name] + [Founder Field] → generates statistically likely name from training data |
| Business Address | Checks Contact page, cross-references Google Maps | Pulls from multiple sources, selects version that appears most authoritative or frequent |
| Service Offerings | Reviews Services page, calls to confirm | Synthesizes list based on industry patterns and incomplete directory data |
| Credentials | Checks state licensing boards | Infers credentials from job titles and industry context without verification |

The Three Conditions That Trigger Hallucinations About Your Business

AI hallucinations about your practice aren't inevitable. They're the result of three specific structural failures:

Sparse entity signals: Not enough authoritative data points across the web. Your practice exists in AI's training data as scattered fragments rather than a complete entity profile.

Conflicting entity signals: Directory A lists your address as 123 Main Street. Directory B has 123 Main St. Your website footer says 123 Main Street, Suite 100. Instead of one clean record, the AI sees three competing versions of your location.

Weak authority signals: No schema markup labeling your content. No verified citations from institutional sources. No structured data telling AI which version to trust.

When these three conditions exist simultaneously, AI has no reliable framework for understanding your business.

It fills the gaps with statistically plausible fabrications.

The solution isn't better marketing. It's stronger Entity Trust.
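The three conditions can be turned into a rough self-audit. The sketch below is hypothetical: the listing records are invented, the three-source threshold for "sparse" is an arbitrary assumption, and `has_schema` stands in for whatever check you use to confirm your site publishes structured data. It simply flags each of the three conditions described above.

```python
def diagnose(listings, has_schema, min_sources=3):
    """Flag sparse, conflicting, and weak entity signals (illustrative thresholds)."""
    findings = []
    # Sparse: too few independent listings describe the practice.
    if len(listings) < min_sources:
        findings.append("sparse")
    # Conflicting: the same field has more than one distinct value across listings.
    for field in ("name", "address", "phone"):
        values = {record.get(field) for record in listings}
        if len(values) > 1:
            findings.append(f"conflicting:{field}")
    # Weak: no structured data telling machines which version is authoritative.
    if not has_schema:
        findings.append("weak")
    return findings


# Hypothetical listings for a practice with only two citations.
listings = [
    {"name": "Main Street Chiropractic", "address": "123 Main Street", "phone": "555-010-0100"},
    {"name": "Main Street Chiropractic", "address": "123 Main St", "phone": "555-010-0100"},
]
print(diagnose(listings, has_schema=False))
```

This example reports all three conditions at once: too few sources, an address that differs between listings, and no schema.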

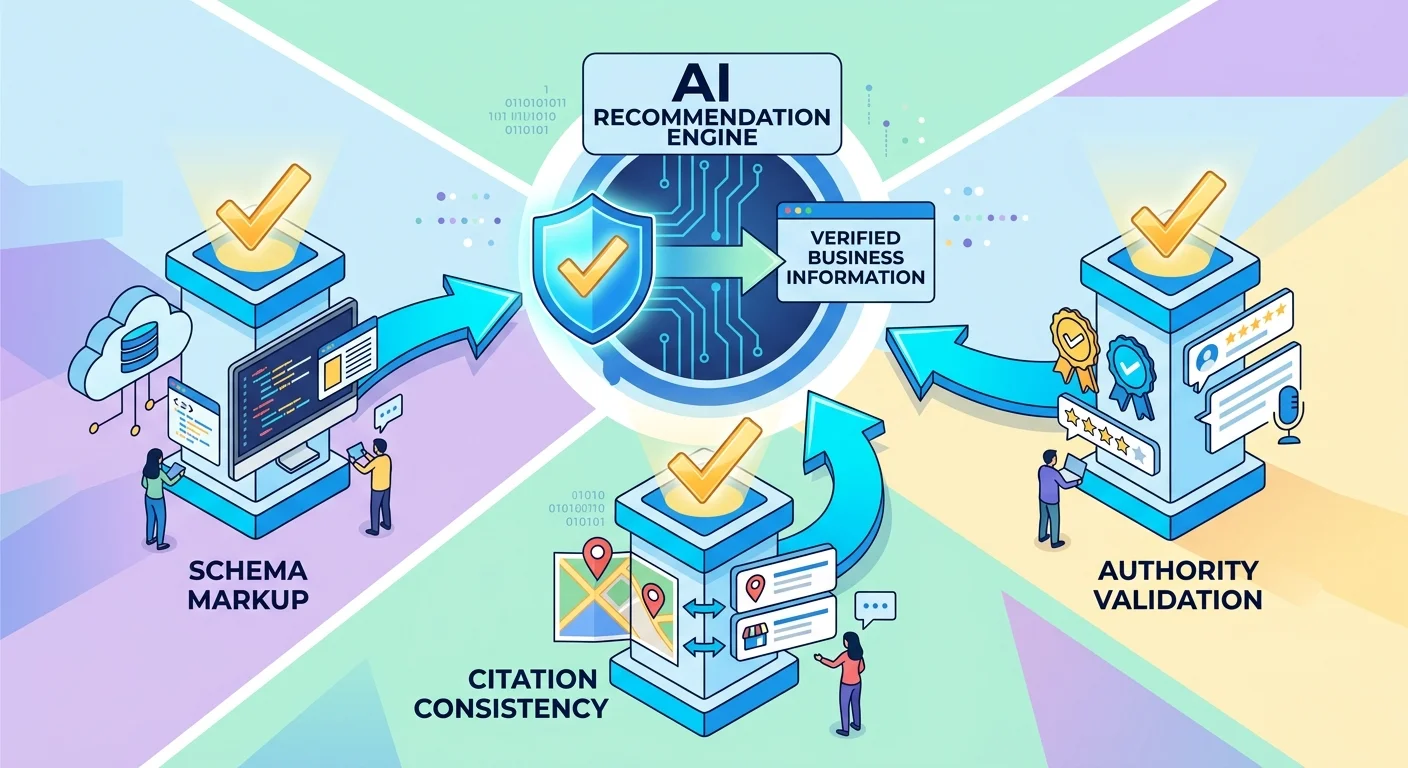

The Three Entity Signals AI Uses to Determine What's True

AI doesn't guess randomly when sources conflict. It applies a hierarchy of trust signals to determine which data to believe.

Understanding these three signals is the difference between being cited accurately and having AI invent your founder.

Schema Markup: The Language AI Actually Reads

Schema markup is machine-readable code, usually written as JSON-LD using the Schema.org vocabulary, that explicitly labels entity data on your website. It tells AI engines "this is the founder's name" or "this is the business address," not "this is text on an About page that might be marketing copy."

Without schema, your beautifully written About page is unstructured text. AI treats it the same way it treats blog posts, testimonials, and service descriptions: contextual language that might or might not contain factual claims.

With schema, your founder's name becomes an explicitly labeled data point: a "Person" marked as the "founder" of the Organization "iTech Valet." The AI no longer has to infer the relationship between the two entities; the markup states it.
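Here is a minimal sketch of what that labeling can look like, written in Python to emit JSON-LD, the structured data format Google recommends. The `Organization`, `Person`, `founder`, `name`, and `url` terms are real Schema.org vocabulary; the helper function is hypothetical and the URL is a placeholder. Only the business and founder names come from this article.

```python
import json


def organization_jsonld(name, url, founder_name):
    """Build Schema.org JSON-LD that ties a founder (Person) to an Organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "founder": {"@type": "Person", "name": founder_name},
    }


# https://www.example.com is a placeholder; use the site's real canonical URL.
markup = organization_jsonld("iTech Valet", "https://www.example.com", "Gerek Allen")

# Wrap the JSON in the script tag that goes in the page's HTML.
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Schema.org also defines a `foundingDate` property; it is left out here rather than guessed, and can be added once the real date is confirmed.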

Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, and Trustworthiness), described in its Search Quality Rater Guidelines, shapes how Google judges content quality, and AI engines appear to weigh similar signals when deciding which entity data to trust. Schema markup is a technical way to make those signals explicit. It transforms your claims into structured assertions that AI can check against other sources.

Most web designers don't build schema. They build websites that look professional to humans.

Those aren't the same thing.

Citation Consistency: Why One Wrong Directory Breaks Everything

If your practice name is spelled three different ways across Healthgrades, Zocdoc, and your Google Business Profile, the AI may treat them as three separate entities.

You can't count on it to merge them intelligently or to assume they're the same business. It tends to pick the version that appears most frequently or comes from the most authoritative source, and if that source is wrong, the hallucination becomes accepted truth.

This is why Zero-Click Search is so dangerous for practices with weak citation profiles. AI presents one answer. Not a list of ten options. If your entity data is inconsistent, you're not in the running.

Citation consistency means:

- Identical business name spelling across all platforms

- Identical address formatting (including suite numbers, abbreviations, zip codes)

- Identical phone number format

- Consistent service descriptions using the same terminology

One outdated Yelp listing with the wrong address can become the seed for a hallucination that spreads across multiple AI models.
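One way to find these mismatches is to compare your listings field by field, once as written and once after normalizing harmless formatting differences. The sketch below assumes US-style addresses and 10-digit phone numbers; the listing data, source names, and abbreviation table are hypothetical. "Raw variants" counts how many versions the web actually displays, while "substantive variants" separates pure formatting drift from genuinely different data, such as a missing suite number.

```python
import re

# Small, illustrative abbreviation table; extend it for your own listings.
ABBREVIATIONS = {"st": "street", "ave": "avenue", "rd": "road", "ste": "suite", "blvd": "boulevard"}


def normalize_address(address):
    """Lowercase, drop punctuation, and expand common abbreviations."""
    tokens = re.findall(r"[a-z0-9]+", address.lower())
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


def normalize_phone(phone):
    """Keep digits only; assumes US numbers, so the last 10 digits are kept."""
    return re.sub(r"\D", "", phone)[-10:]


def audit(listings, field, normalizer):
    """Count raw versus normalized variants of one field across all sources."""
    raw = {source: record[field] for source, record in listings.items()}
    normalized = {normalizer(value) for value in raw.values()}
    return {"raw_variants": len(set(raw.values())), "substantive_variants": len(normalized)}


# Hypothetical listings for one practice.
LISTINGS = {
    "website": {"address": "123 Main Street, Suite 100", "phone": "(555) 010-0100"},
    "google_business_profile": {"address": "123 Main Street", "phone": "555-010-0100"},
    "directory_a": {"address": "123 Main St", "phone": "555.010.0100"},
}

print(audit(LISTINGS, "address", normalize_address))
print(audit(LISTINGS, "phone", normalize_phone))
```

Here all three phone listings are the same number in three formats, while the addresses genuinely disagree on the suite number. Both are worth fixing at the source, because the goal is identical text everywhere, not clever matching after the fact.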

Authority Validation: Why AI Trusts .edu and .gov More Than Your Website

AI engines appear to inherit source-authority hierarchies from search engine ranking systems. A citation from a .gov health department directory carries far more weight than your website's self-published claims.

This is why professional directories like Healthgrades and Zocdoc matter for AI visibility. They function as institutional validators. When AI sees your practice cited on these platforms with consistent entity data, it treats that information as verified truth.

If those institutional sources are outdated, incomplete, or missing — your website loses the validation battle. AI defaults to the most authoritative source it can find, even if that source is wrong.

| Source Type | Trust Level | Example |
|---|---|---|
| Government Sites | High | State medical board licensing databases, CDC provider directories |
| Educational Institutions | High | University hospital directories, medical school faculty listings |
| Healthcare Directories | Medium-High | Healthgrades, Zocdoc, Vitals, WebMD physician finder |
| Business Website | Medium | Your practice's self-published content and schema markup |
| Social Media | Low | Facebook, Instagram, LinkedIn profiles without institutional validation |
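The sketch below illustrates why an outdated directory can beat your own website. It is a toy model: the numeric trust tiers loosely mirror the table above but are assumptions, not weights any AI engine publishes, and the claims reuse the earlier sports-injury example. It compares the two selection rules this article describes: picking the most frequent claim versus picking the claim from the most authoritative source.

```python
from collections import Counter

# Illustrative trust tiers (higher = more trusted); the numbers are assumptions.
TRUST = {
    "government": 4,
    "education": 3,
    "healthcare_directory": 2,
    "business_website": 1,
    "social_media": 0,
}


def pick_by_frequency(claims):
    """Return the value asserted by the most sources."""
    return Counter(value for _, value in claims).most_common(1)[0][0]


def pick_by_authority(claims):
    """Return the value from the highest-trust source, right or wrong."""
    return max(claims, key=lambda claim: TRUST[claim[0]])[1]


# Hypothetical claims: (source type, stated specialty).
claims = [
    ("business_website", "Sports injury chiropractic"),
    ("social_media", "Sports injury chiropractic"),
    ("healthcare_directory", "General chiropractic"),  # outdated listing
]

print(pick_by_frequency(claims))  # Sports injury chiropractic
print(pick_by_authority(claims))  # General chiropractic
```

Under the authority rule, a single outdated directory listing outranks two self-published sources, which is why correcting institutional listings comes before polishing your own copy.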

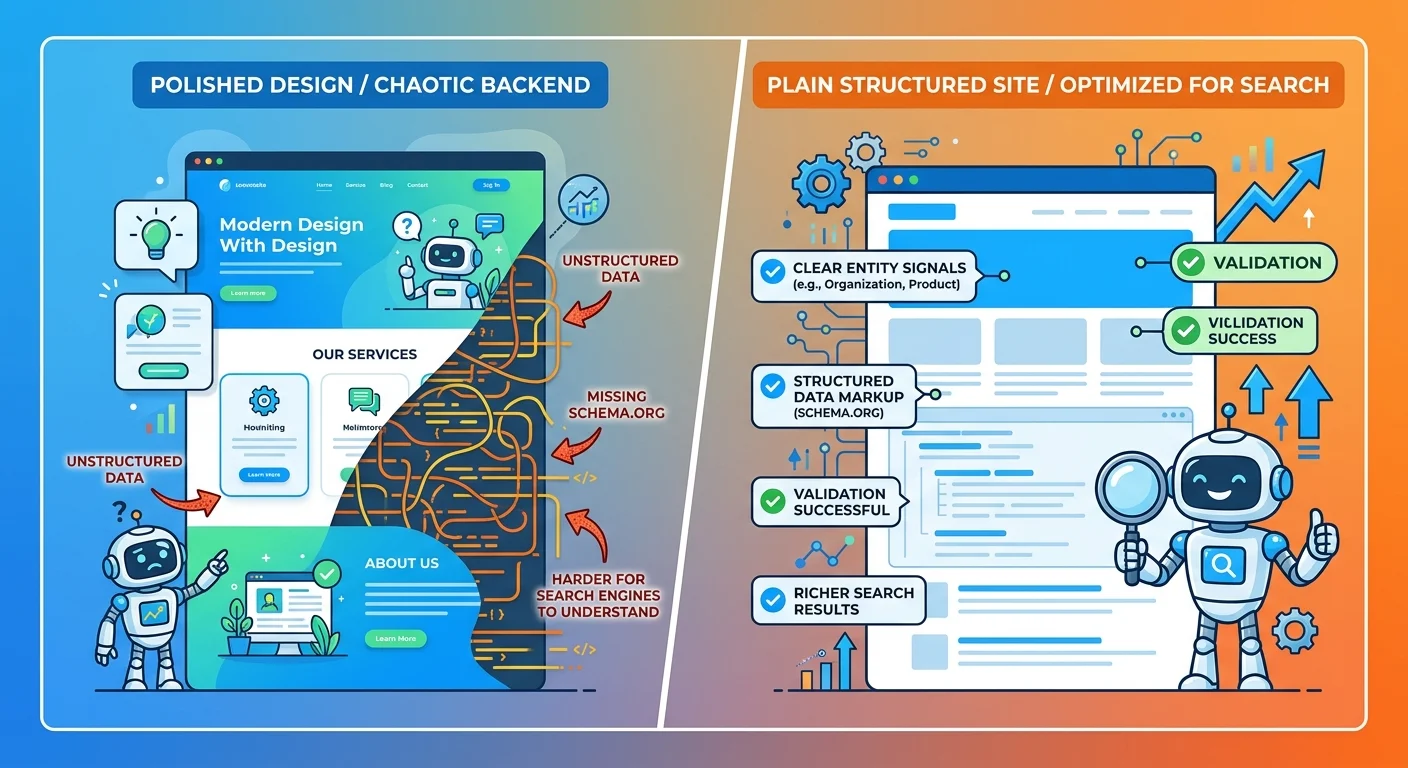

Why a Pretty Website Doesn't Prevent AI from Making Things Up

The Digital Brochure Fallacy

Most web design firms build for human eyeballs. Color schemes. Hero images. Testimonial sliders. Contact forms that feel inviting.

None of that prevents the Michael Walen problem.

A beautiful website that AI can't read is an expensive business card. It might convert visitors who already found you through word of mouth. It does nothing to make AI recommend you when patients ask for suggestions.

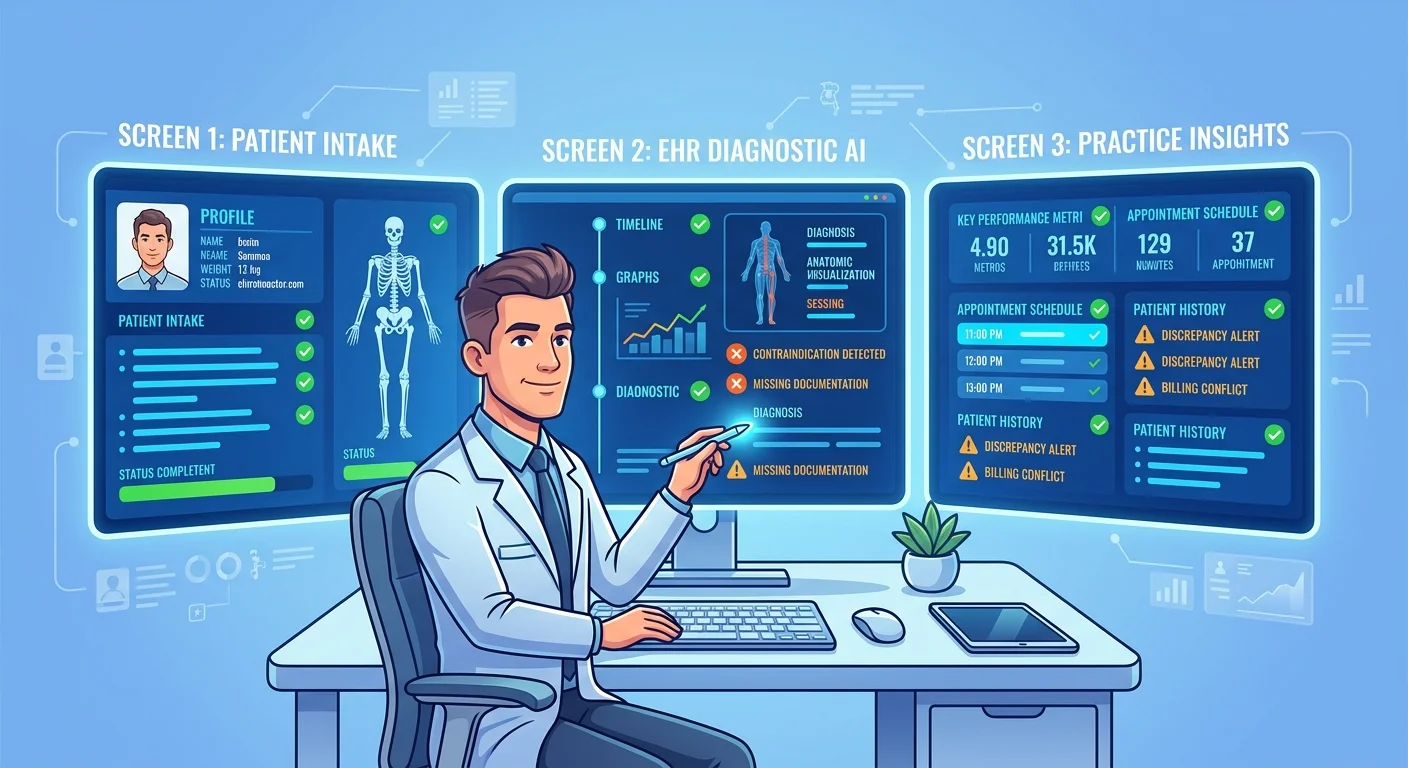

AI reads code. Schema markup. Structured data hierarchies. Internal linking patterns that establish content relationships and entity context.

If those layers are absent, your site is decorative noise. The AI sees marketing language with no machine-readable structure to validate your identity, credentials, or authority.

That's why we exist as an AI Authority Agency instead of a web design firm. The work we do isn't visible to humans.

It's infrastructure.

What "AI-Readable Infrastructure" Actually Means

Infrastructure is the invisible code layer that labels your content for machine comprehension.

It's schema markup that identifies the founder by name and role — wrapped in structured data that says "this person founded this organization on this date."

It's internal linking architecture that establishes content hierarchy — telling AI which pages are foundational authority content and which are supporting detail.

It's citation profiles across professional directories that validate your entity with consistent data — so AI sees one authoritative version of your practice instead of scattered fragments.

This isn't a feature you buy from a web designer. It's a complete rebuild of how your digital presence is structured for machine interpretation.

| Website Element | Designed for Humans | Designed for AI |
|---|---|---|
| Hero Image | Visual appeal, brand identity, emotional connection | Alt text with entity labels, image schema linking to business profile |
| Testimonials Section | Social proof, trust-building through patient stories | Review schema with structured rating data, author entity markup |
| About Page | Founder story, mission statement, practice philosophy | Person schema for founder, Organization schema for practice, founding date, credentials |
| Contact Form | User experience, mobile-friendly input fields | ContactPoint schema with verified phone/email, address markup with geographic coordinates |
| Blog Post | Educational content, conversational tone, readability | Article schema with author entity, publication date, topical authority signals |
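To make the Contact Form row of that table concrete, here is a hedged sketch that generates `MedicalBusiness` JSON-LD with `PostalAddress`, `GeoCoordinates`, and `ContactPoint` (all real Schema.org types) from one canonical record. Every value in the record is a hypothetical placeholder. The design choice worth copying is that the markup is built from the same single record you would use to update directories, so your website and your citations are less likely to drift apart.

```python
import json

# Hypothetical canonical record: the single source of truth for NAP data.
CANONICAL = {
    "name": "Example Chiropractic",
    "telephone": "+1-555-010-0100",
    "street": "123 Main Street, Suite 100",
    "city": "Springfield",
    "region": "IL",
    "postal_code": "62701",
    "latitude": 39.7817,
    "longitude": -89.6501,
}


def local_business_jsonld(record):
    """Map the canonical record onto Schema.org MedicalBusiness markup."""
    return {
        "@context": "https://schema.org",
        "@type": "MedicalBusiness",
        "name": record["name"],
        "telephone": record["telephone"],
        "address": {
            "@type": "PostalAddress",
            "streetAddress": record["street"],
            "addressLocality": record["city"],
            "addressRegion": record["region"],
            "postalCode": record["postal_code"],
        },
        "geo": {
            "@type": "GeoCoordinates",
            "latitude": record["latitude"],
            "longitude": record["longitude"],
        },
        "contactPoint": {
            "@type": "ContactPoint",
            "telephone": record["telephone"],
            "contactType": "customer service",
        },
    }


print(json.dumps(local_business_jsonld(CANONICAL), indent=2))
```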

The Cascading Business Consequences of Entity Drift

Entity drift is the slow accumulation of inconsistencies in the data AI engines use to build their understanding of your business.

One wrong citation becomes the seed for a hallucination. That hallucination gets cited by another AI. Patients accept it as fact. You never know it happened until someone asks why your website says one thing and ChatGPT says another.

By then, the damage compounds.

Why Correcting One AI Engine Doesn't Fix the Problem

AI models don't share a central database. ChatGPT, Gemini, and Grok each build their understanding from different training sets and real-time retrieval sources.

Fixing one engine's error does nothing to address the weak infrastructure that caused the hallucination in the first place.

The Michael Walen fabrication didn't appear in all AI engines simultaneously. It showed up in one. If we'd only tested that one engine and submitted a correction request, we would've missed the underlying problem: iTech Valet's entity signals were weak enough that pattern-matching inference filled gaps with plausible fabrications.

The problem will resurface elsewhere. Different engines. Different fabrications. Same root cause.

The only fix is rebuilding the authority infrastructure so AI engines have no gaps to fill. That's how chiropractors win in a zero-click world.

The Authority Gap Compounds Every Month

While you're unaware of the drift, your competitor with stronger entity signals gets cited correctly. AI recommends them. Not you.

The gap widens.

This isn't a one-time fix. Authority infrastructure requires ongoing maintenance because new platforms launch, directories get updated, and AI models retrain on new data.

Every month you operate with weak entity signals, the citation advantages your competitors are building become harder to overcome. They're not just getting recommended today. They're establishing the authority patterns that AI will use to make recommendations six months from now.

Quick pause before we go further.

If your practice refuses to specialize or clearly define what you do, AI has no framework for understanding your entity.

"General chiropractic care" isn't an identity. It's a category that includes thousands of practices.

When you dilute your authority by trying to serve everyone with every technique for every condition, AI can't distinguish you from competitors. The model sees generic service descriptions that match hundreds of other practices in your market. It has no specific signals to anchor your identity.

The hallucination risk multiplies.

This is the "Everything to Everyone" Practitioner. It's not a marketing preference. It's a structural vulnerability that makes accurate AI citation unlikely.

If you want AI to recommend your practice, you must be specific enough that the model can differentiate you from the generic category.

How to Check If AI Is Hallucinating About Your Practice

The only way to know if AI is inventing information about your practice is to systematically test what multiple AI engines say when asked direct questions.

You can't guess. You can't assume everything's fine because your website looks professional.

You must query ChatGPT, Gemini, and Grok with the exact questions patients are asking — and document what comes back.

That's what the AI Visibility Check does. It's a diagnostic, not a sales pitch. We run the test queries. You see what AI says. The gaps become self-evident.

The Questions You Must Ask Every AI Engine

Test these queries across all three major AI platforms:

- Who is the owner of [Your Practice Name]?

- What services does [Your Practice] offer?

- What are the hours for [Your Practice]?

- Where is [Your Practice] located?

- What credentials does the chiropractor at [Your Practice] hold?

Document every answer. Compare them to your verified data.

Any conflict is a hallucination. Any missing information is a gap the AI will eventually fill with a fabrication.
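A small script can keep this test repeatable. The sketch below is hypothetical: it builds the five questions above for any practice name, then sorts pasted-in answers into "match," "conflict," or "gap." It calls no AI API (you paste each engine's responses in yourself), and its case-insensitive substring check is deliberately crude, so a person should still review every "conflict."

```python
QUESTIONS = {
    "owner": "Who is the owner of {practice}?",
    "services": "What services does {practice} offer?",
    "hours": "What are the hours for {practice}?",
    "location": "Where is {practice} located?",
    "credentials": "What credentials does the chiropractor at {practice} hold?",
}


def build_queries(practice):
    """Fill the practice name into each test question."""
    return {field: template.format(practice=practice) for field, template in QUESTIONS.items()}


def classify(verified, answers):
    """Compare each AI answer with verified data: match, conflict, or gap."""
    report = {}
    for field, truth in verified.items():
        answer = answers.get(field, "")
        if not answer.strip():
            report[field] = "gap"  # nothing said yet: a gap the AI may later fill
        elif truth.lower() in answer.lower():
            report[field] = "match"
        else:
            report[field] = "conflict"  # treat as a likely hallucination
    return report


# Hypothetical verified data and pasted answers from one engine.
verified = {"owner": "Jane Doe", "hours": "Mon-Fri 8am-6pm", "location": "123 Main Street"}
answers = {
    "owner": "The practice was founded by John Smith.",
    "location": "It is located at 123 Main Street, Suite 100.",
}

print(build_queries("Example Chiropractic")["owner"])
print(classify(verified, answers))
```

Running the same script for each engine, and again after each round of fixes, gives you a record you can compare over time instead of a one-off impression.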

Why Manual Checking Isn't Scalable

You can run these queries yourself. But the diagnostic is incomplete without understanding which structural weaknesses caused the errors.

Schema gaps? Citation conflicts? Weak directory profiles? Missing authority validation?

The AI Visibility Check reveals both the hallucinations and the infrastructure failures that produced them. You get a prioritized list of what needs fixing and why it matters for AI recommendations.

Guessing doesn't fix entity drift. Data does.

FAQ

What is an AI hallucination in simple terms?

It's when an AI model confidently states something that's factually incorrect or nonsensical, presenting it as if it were verified truth.

Think of it like the predictive text on your phone's keyboard. The AI predicts the next most likely word based on patterns it's seen before. Sometimes that prediction is completely wrong but sounds plausible enough that the model outputs it with full confidence.

The difference is that predictive-text mistakes are obvious. AI hallucinations about your business sound authoritative because the source (ChatGPT, Gemini, Grok) appears trustworthy.

When a patient asks "Who is the best chiropractor near me?" and the AI fabricates credentials or services for a competitor while citing outdated information about your practice, that patient treats the response as fact.

How can I find out if AI is saying wrong things about my business?

You must systematically query multiple AI engines with direct questions about your practice and document every response.

Ask ChatGPT: "Who is the owner of [Your Practice Name]?"

Ask Gemini: "What services does [Your Practice] offer?"

Ask Grok: "What are the hours for [Your Practice]?"

Compare every answer to your verified data. Any conflict is a hallucination. Any missing information is a gap the AI will eventually fill with a fabrication.

This isn't something you do once and forget. AI models retrain on new data. Directories get updated. New platforms launch. The diagnostic needs to be ongoing.

Why does AI trust some business information more than other information?

AI uses a hierarchy of source authority borrowed from search engine ranking systems.

Government sites (.gov) carry the highest trust weight. Educational institutions (.edu) are next. Professional healthcare directories like Healthgrades and Zocdoc rank above self-published website content. Social media profiles carry the lowest authority.

When multiple sources conflict, AI defaults to the most authoritative source — even if that source is outdated or wrong.

This is why citation consistency across institutional platforms matters more than what your website says. Search engines build knowledge graphs of real-world entities by consolidating data from multiple sources — and when those sources conflict, AI inherits the same confusion. If a state medical board directory lists incorrect credentials and your website claims different credentials, AI will trust the .gov source.

Your website becomes supporting evidence, not primary truth.

Can I just email Google or ChatGPT to fix incorrect information about my practice?

No.

No customer service channel exists that directly edits what an AI model "knows."

OpenAI, Google, and other AI developers let you rate individual answers, but they don't maintain manual override systems where you can submit correction requests for individual businesses. These models train on massive datasets scraped from the web. Flagging one bad output doesn't change the underlying training data or source infrastructure.

The only reliable method is fixing the source data itself — rebuilding your authority infrastructure so AI engines have consistent, structured, validated entity signals to trust.

That means correcting directory citations. Adding schema markup. Building institutional backlinks. Establishing content hierarchies that label your entity relationships for machine comprehension.

The work happens at the infrastructure level, not by contacting AI companies directly.

Isn't this just a rare technical glitch that doesn't really affect patient acquisition?

This isn't a rare glitch. It's systematic behavior of AI models operating without strong entity signals.

Every week you remain unaware of these hallucinations, patients are receiving incorrect information about your practice and choosing competitors whose data AI trusts more.

The question isn't whether this affects patient acquisition. The question is how many patients you've already lost to recommendations you never knew were happening.

Patients don't call to tell you ChatGPT recommended someone else. They don't leave reviews saying "Gemini cited a competitor instead of you." The visibility gap is silent.

You discover it when revenue plateaus and you can't figure out why marketing isn't working. By then, the authority gap has compounded for months.

What is the very first step to fixing my business's AI identity?

Run a comprehensive diagnostic to establish a baseline of what AI engines currently say about your practice.

You can't fix entity drift without knowing what conflicts exist, which sources AI is trusting, and where your infrastructure gaps are causing hallucinations.

That's what the AI Visibility Check does. We query ChatGPT, Gemini, and Grok with patient-intent questions. We document every response. We map the structural failures causing incorrect citations.

You get a prioritized action plan showing which infrastructure fixes will have the most immediate impact on AI recommendations.

The alternative is guessing. Most practices guess their way through this for six months before they realize Answer Engine Optimization (AEO) isn't optional anymore.

Conclusion

AI invented a founder for our business. Not because the technology is broken. Because our entity infrastructure had gaps the AI filled with plausible fabrications.

If this happened to an AI Authority Agency whose entire model is engineering AI visibility, your chiropractic practice is far more vulnerable.

The hallucinations aren't coming. They're already here.

Patients are already receiving incorrect information about your services, credentials, and identity. The only question is whether you're gonna discover this before or after they've chosen the competitor AI recommended instead of you.

There's no version of this where waiting makes sense.

Every month you operate with weak entity signals, the authority gap compounds. The practices building machine-readable infrastructure now are locking in the citation advantages that determine whose name AI says when patients ask for recommendations.

You can run the diagnostic and see exactly what AI currently believes about your practice — or you can assume everything's fine and discover the Michael Walen problem six months too late.

Want to know if AI is hallucinating about your practice — or recommending your competitor because your entity signals are too weak to trust?

The AI Visibility Check takes 15 minutes. We query ChatGPT, Gemini, and Grok with the exact questions patients are asking. You see what they see.

If the answers match your verified data, you're in good shape. If they don't — you'll know exactly which infrastructure gaps are causing the hallucinations and what it's costing you in lost patient recommendations.

No guessing. No assumptions. Just the data.