The Invisible Practice: Why AI Ignores 98% of Chiropractic Websites

AI engines like ChatGPT, Gemini, and Perplexity ignore approximately 98% of chiropractic websites because most sites suffer from what we call the Digital Brochure Fallacy. The site looks professional to a human visitor. But to a machine reasoning engine, it's unreadable — there's no underlying authority infrastructure to verify, trust, or recommend.

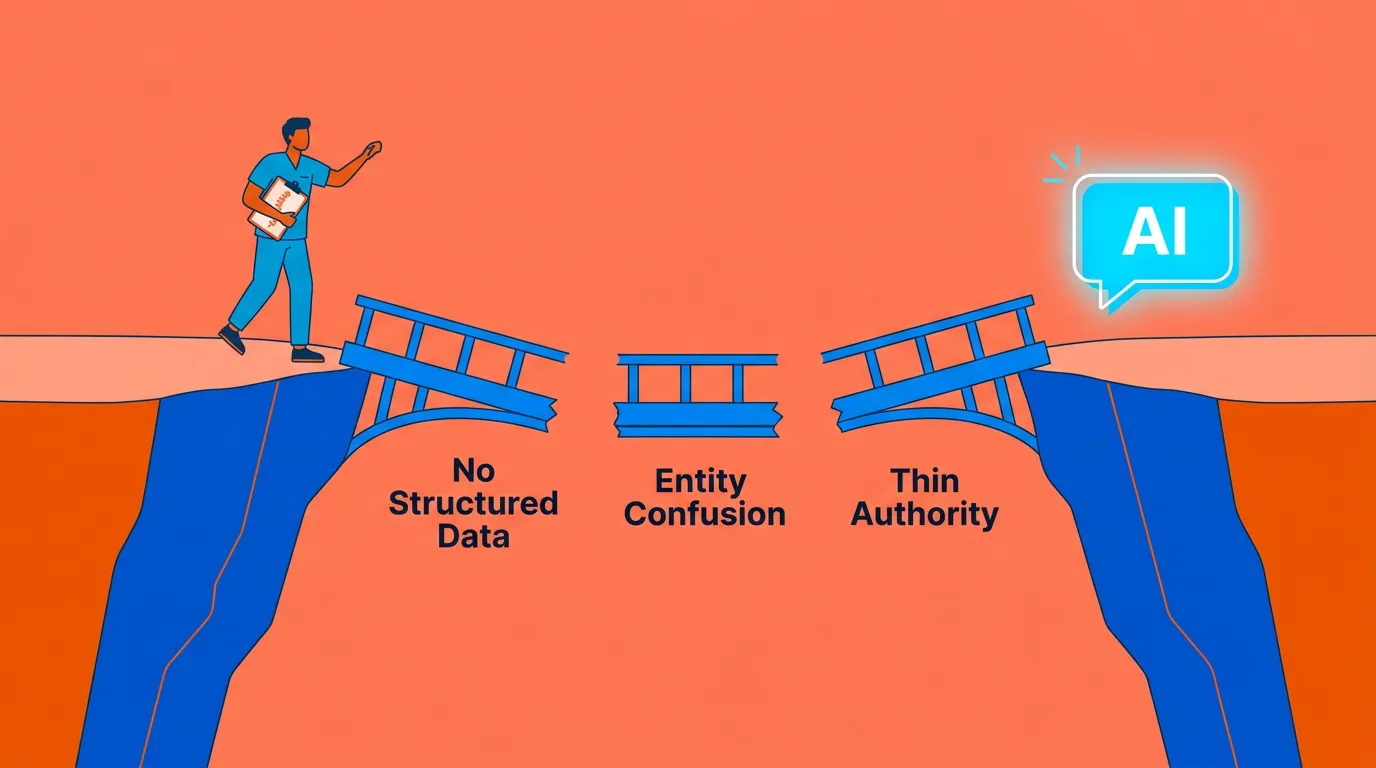

Three specific gaps cause this invisibility.

First, most chiropractic websites have no structured data. Without schema markup — LocalBusiness, MedicalBusiness, FAQPage — AI cannot parse your services, location, or credentials with any certainty. It won't guess. It'll skip you entirely.

Second, entity confusion. If your name, address, phone number, and credentials are inconsistent across Healthgrades, Zocdoc, and Google Business Profile, AI treats your practice as an unverified entity. Not flagged as wrong — just absent.

Third, thin topical authority. Traditional blog content written around keyword density fails the machine trust threshold. AI engines don't rank — they recommend. And they only recommend practices that have demonstrated deep, consistent, structured expertise across a topic cluster.

This article breaks down all three gaps in detail, explains what AI actually looks for when deciding who to recommend, and shows why a website that scores a ten out of ten for human aesthetics can score a zero for machine readability.

If you've ever wondered why your new patient numbers are flat despite a "good" digital presence — this is likely the reason.

Last Updated: April 10, 2026

You Have a Great Website. AI Has Never Heard of You.

Picture a Tuesday afternoon. A new patient (low back pain, two weeks of it, finally fed up) pulls out her phone and asks ChatGPT for the best chiropractor near her. The AI responds in seconds. It names a practice, gives two specific reasons why, and she books. That practice has fewer reviews than yours. Its website is older than yours. But it had the one thing AI actually requires: a verifiable identity it could read. Your name wasn't mentioned. Your 20 years of experience weren't mentioned. Your 4.9-star average wasn't mentioned.

The AI didn't skip you because you're not good enough. It skipped you because it couldn't find you.

Most chiropractic websites were built to impress humans. And for a long time, that made sense — someone found you on Google, scanned your site, called the office. The funnel worked.

It doesn't work that way anymore.

That patient didn't Google you. She didn't scan ten blue links. She asked a question and expected one answer. And ChatGPT doesn't pick the nicest-looking site — it verifies. It cross-references. It looks for an entity with enough consistent, structured signal that it can stake a recommendation on your name.

As an AI authority agency, we call what most practices have built the Digital Brochure Fallacy. Beautiful to look at. Functionally invisible to any machine reasoning engine that's now sitting between you and your next patient.

Why Traditional SEO Created This Problem in the First Place

Here's what I tell docs when they ask how we got here.

Your SEO agency didn't lie to you. They optimized you for the platform that existed. Keywords, rankings, impressions — all of it mattered, and they delivered. The problem is that "mattered" is past tense.

Gartner projected that traditional search engine volume would drop 25% by 2026 as users shift to AI chatbots and other virtual agents. That's not a warning about what's coming. That's a description of what's already happening. And AI-powered search saw double-digit monthly growth in 2025. Every single month, the gap between the old channel and the new one gets wider.

Your SEO agency is still timing your laps. The race moved to a different track.

Traditional SEO gets you onto a list. AI search produces one verdict. Those aren't two versions of the same thing — they are completely different games with completely different winners. And right now, if your agency is measuring keyword impressions while Perplexity is sending patients to your competitor, that monthly report isn't a progress update. It's a distraction.

The Three Technical Gaps Killing Your AI Visibility

I want to be clear about something before we get into the specifics.

This isn't a vague "AI doesn't like your site" problem. There are three exact reasons AI passes over a practice. Three specific gaps — each one fixable, each one measurable, each one sitting in your digital footprint right now either addressed or broken.

Gap #1: No Structured Data

AI doesn't read your website the way a patient does.

A patient scans your homepage, reads a few sentences, looks at your photos, and forms an impression. That whole process is human — emotional, contextual, impressionistic.

AI can't do any of that. AI reads structure. And if the structure isn't there, neither are you.

Schema markup is the code layer underneath your design — invisible to visitors, but the only thing AI can actually parse with confidence. It declares what type of business you are, what services you offer, where you're located, what credentials you hold. Not in copy. In machine-readable format.

Without it, AI would have to guess. And AI doesn't guess when it's recommending a healthcare provider to a real patient.

Properly implemented schema increases AI recommendation frequency by 3x to 5x. Sit with that for a second. Not 10% better. Not marginally improved. Three to five times more likely to get recommended — just because the infrastructure exists to confirm who you are.

Here's what that schema layer has to declare:

- Business type and classification — LocalBusiness and MedicalBusiness schema confirming you are a licensed healthcare provider, not an ambiguous service entity

- Physical location and service area — Address, geolocation coordinates, and service radius mapped consistently

- Credentials and practitioner identity — Degree, license, specialty, and practitioner name in structured format

- Services and conditions treated — Every service and condition mapped explicitly, not just described in copy

- FAQPage schema — Structured Q&A content AI can extract and cite directly in a conversation

Most template websites have none of this. The ones that do usually have a generic tag worth about as much as a sticky note on a server rack.
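To make that list concrete, here's a minimal sketch of what a fuller schema layer can look like as JSON-LD, the format schema.org markup is usually embedded in. Python is only a convenient way to build and print it. Every value (clinic name, address, phone, coordinates, practitioner, services, profile URLs) is a placeholder for a hypothetical practice, and the choice of properties is an illustration, not a complete or required set.

```python
import json

# All values are placeholders for a hypothetical practice.
practice = {
    "@context": "https://schema.org",
    "@type": "MedicalBusiness",  # schema.org subtype of LocalBusiness
    "name": "Example Chiropractic Clinic",
    "url": "https://www.example.com",
    "telephone": "+1-555-010-0000",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Example Street, Suite 100",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
        "addressCountry": "US",
    },
    "geo": {"@type": "GeoCoordinates", "latitude": 39.7817, "longitude": -89.6501},
    "areaServed": {"@type": "City", "name": "Springfield"},
    # Practitioner identity and credential in structured form.
    "employee": {
        "@type": "Person",
        "name": "Jane Example",
        "honorificSuffix": "DC",
        "hasCredential": {
            "@type": "EducationalOccupationalCredential",
            "credentialCategory": "license",
            "name": "Doctor of Chiropractic license (placeholder)",
        },
    },
    # Services mapped explicitly instead of only described in copy.
    "hasOfferCatalog": {
        "@type": "OfferCatalog",
        "name": "Chiropractic services",
        "itemListElement": [
            {"@type": "Offer", "itemOffered": {"@type": "Service", "name": "Spinal adjustment"}},
            {"@type": "Offer", "itemOffered": {"@type": "Service", "name": "Sciatica care"}},
        ],
    },
    # Placeholder profile URLs that should describe the same entity.
    "sameAs": [
        "https://directory-one.example/example-chiropractic-clinic",
        "https://directory-two.example/example-chiropractic-clinic",
    ],
}


def to_script_tag(data: dict) -> str:
    """Wrap a JSON-LD document in the script tag used to embed it in a page."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


print(to_script_tag(practice))
```

The printed tag is what would sit in the page's HTML, invisible to visitors. Whether a given AI engine reads every one of these properties is not something this sketch can confirm. It only shows the difference between a single generic tag and a layered declaration.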

| Gap | What's Missing | What It Costs You |
|---|---|---|
| No Structured Data | Schema markup (LocalBusiness, MedicalBusiness, FAQPage) | AI can't parse your services, location, or credentials |
| Entity Confusion | Consistent citations across Healthgrades, Zocdoc, Google Business Profile | AI treats your practice as unverified: not wrong, just absent |
| Thin Topical Authority | Intent-mapped content covering the full patient question journey | AI can't classify you as a trusted subject matter expert |

Gap #2: Entity Confusion

I've seen practices with spotless Google Business Profiles get completely ignored by AI.

Great reviews. Current photos. Accurate hours. Still invisible.

Here's what was happening. Their Healthgrades listing had an old phone number. Their Zocdoc profile used a slightly different version of the clinic name. Their NPI record had a different address format than their website schema.

To a human, those are just minor inconsistencies. Nothing serious.

To AI, that's a practice that can't confirm its own identity.

AI doesn't pick the "right" version of your information. It looks for consensus. When the signals conflict — even slightly — trust scores drop. When trust scores drop far enough, you stop being a candidate for recommendation entirely. Not penalized. Just gone.

Every one of these platforms needs to match:

- Google Business Profile — your most visible local identity signal

- Healthgrades, Zocdoc, Vitals, and WebMD — the medical directories AI treats as authority validators

- NPI record — your National Provider Identifier, cross-referenced against your website identity

- State licensing board listings — credential verification that gives AI confidence in your professional standing

- Your own website schema — the structured data on your own domain that ties all of it together

This is the work that entity clarity and machine trust are built around, and it's almost always where we find the biggest invisible gap when a new practice comes to us.
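Here's a rough sketch of how that kind of audit can be run by hand once you've copied your details out of each platform. It mirrors the scenario above: an old phone number on one directory, a slightly different clinic name on another, and an address written in a different format on the NPI record. The listings, the abbreviation table, and the function names are all hypothetical. This is not how any AI engine is known to compare records, only a way to spot conflicts yourself.

```python
import re

# Hypothetical listings for one practice; every value is a placeholder.
listings = {
    "website_schema": {"name": "Example Chiropractic Clinic",
                       "address": "123 Example Street, Suite 100",
                       "phone": "+1 (555) 010-0000"},
    "google_business_profile": {"name": "Example Chiropractic Clinic",
                                "address": "123 Example St., Ste 100",
                                "phone": "555-010-0000"},
    "healthgrades": {"name": "Example Chiropractic Clinic",
                     "address": "123 Example St Ste 100",
                     "phone": "555-010-9999"},  # old number
    "zocdoc": {"name": "Example Chiropractic & Wellness Clinic",  # name variant
               "address": "123 Example Street Suite 100",
               "phone": "(555) 010-0000"},
    "npi_record": {"name": "Example Chiropractic Clinic",
                   "address": "123 EXAMPLE ST STE 100",  # format differs only
                   "phone": "5550100000"},
}

# A deliberately tiny abbreviation table (an assumption, not a standard).
ADDRESS_ABBREVIATIONS = {"st": "street", "ste": "suite", "ave": "avenue", "rd": "road"}


def normalize_name(raw: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^\w\s]", "", raw.lower()).split())


def normalize_address(raw: str) -> str:
    """Normalize like a name, then expand common street abbreviations."""
    words = normalize_name(raw).split()
    return " ".join(ADDRESS_ABBREVIATIONS.get(word, word) for word in words)


def normalize_phone(raw: str) -> str:
    """Keep digits only and drop a leading US country code."""
    digits = re.sub(r"\D", "", raw)
    return digits[1:] if len(digits) == 11 and digits.startswith("1") else digits


NORMALIZERS = {"name": normalize_name, "address": normalize_address, "phone": normalize_phone}


def find_conflicts(records: dict, reference: str) -> dict:
    """Map each field to the sources whose value differs from the reference source."""
    expected = {field: norm(records[reference][field]) for field, norm in NORMALIZERS.items()}
    conflicts = {}
    for source, record in records.items():
        for field, norm in NORMALIZERS.items():
            if norm(record[field]) != expected[field]:
                conflicts.setdefault(field, []).append(source)
    return conflicts


print(find_conflicts(listings, reference="website_schema"))
# {'phone': ['healthgrades'], 'name': ['zocdoc']}
```

The address variations disappear after normalization, so only the real conflicts are reported. Treating the website schema as the reference is a design choice for this sketch. Any source you trust most could play that role.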

BCG's healthcare research confirms patients are increasingly using AI chatbots not just to answer health questions but to choose providers. The AI doing that recommending is cross-referencing your entity data right now, today. If anything conflicts, you're not in the conversation.

Gap #3: Thin Topical Authority

This one stings a little.

I talk to docs who have 40, 50 posts on their site. Neck pain, back pain, sciatica, posture, headaches. Published consistently for years. Good topics, decent content.

AI has never recommended any of it.

The post count doesn't matter. The format does.

Those posts were written to tell Google's keyword algorithm "this site covers these topics." That was a 2019 goal. AI doesn't need to be told you cover a topic — it needs to be able to extract a complete, verified, expert answer to the specific question a patient is asking right now. That's a different kind of content entirely.

Zero-click searches now make up roughly 60% of US Google searches. Sixty percent. Patients get the answer on the results page or inside the AI interface and never visit a website. If your content isn't built for extraction (structured, intent-mapped, and deep), it doesn't matter how much of it exists.
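One concrete form "built for extraction" can take is FAQPage structured data, where each patient question and its answer are declared as a pair instead of being buried in a paragraph. The sketch below builds that structure in Python. The questions and answers are placeholders, and `build_faq_page` is a hypothetical helper name, not part of any library.

```python
import json


def build_faq_page(pairs: list[tuple[str, str]]) -> dict:
    """Turn (question, answer) pairs into a schema.org FAQPage document."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content; a real page would use the practice's own answers.
faq = build_faq_page([
    ("What should I expect at a first visit?", "Placeholder answer written by the practice."),
    ("Do I need a referral?", "Placeholder answer written by the practice."),
])

print(json.dumps(faq, indent=2))
```

The structure only helps if the answers themselves are complete and match what's visible on the page. Markup doesn't add depth that the content lacks.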

What AI Engines Actually Need to Recommend You

I get this question constantly. "What does AI actually look for?"

Docs expect a complicated answer. It's not.

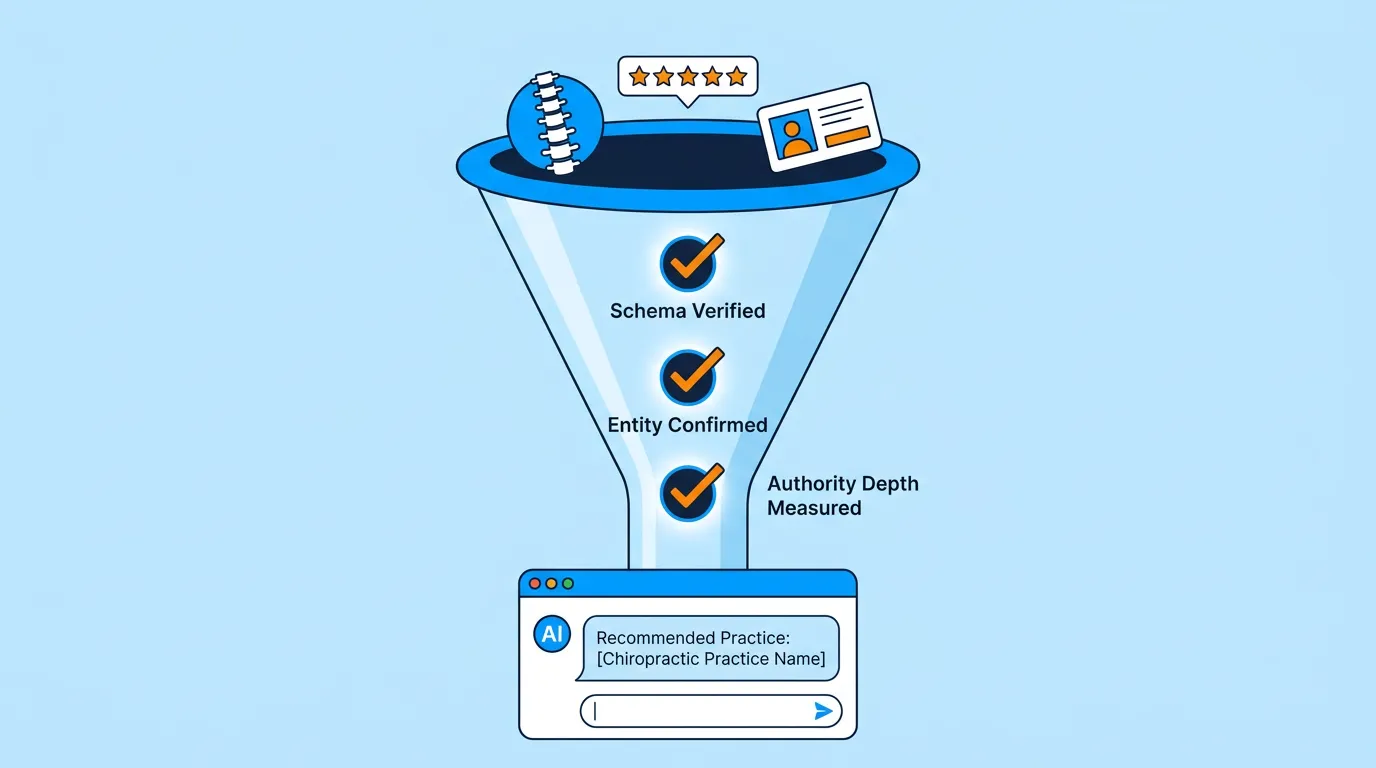

AI isn't looking for the best chiropractor. It can't evaluate that. No AI engine can review your clinical outcomes or assess how well you treat patients. What it can do is verify — and that's exactly what it's doing. Running a trust check against every signal it can find.

The practice that wins isn't the most talented. It's the most verifiable. Understanding what an AI Authority Engine actually does is really understanding that distinction — and most doctors haven't made it yet.

Machine Readability Is Not a Design Feature

Two audiences visit your site. One's a patient. One's a machine.

The patient reads your words, looks at your photos, and makes an emotional call. "I like this place. I trust this person." That's a valid process. It matters — eventually.

But the machine gets there first.

The AI evaluating your practice doesn't read your copy. It reads your code. It checks your schema. It cross-references your entity citations. It scans your content hierarchy to see if you've organized your expertise in a way it can parse and cite.

If none of that infrastructure exists, the result is the same regardless of how good the copy is. Zero. Not a low score. Zero. The practice doesn't enter the recommendation pool.

Building that infrastructure means four things:

- Declaring your business type — so AI can categorize your practice precisely

- Mapping your credentials — so AI can verify you are who you say you are

- Structuring your content into intent clusters — so AI can cite specific answers from your site with confidence

- Aligning your entity data across the web — so every signal AI finds points to the same verified practice

A website that looks like a 10/10 to a human visitor can score a zero with an AI engine. Not because the design is bad. Because the infrastructure machine reasoning requires doesn't exist underneath it.
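You can see the difference between the two audiences with a few lines of code. This sketch uses Python's standard-library HTML parser to pull out only the JSON-LD blocks from a page and list the types they declare, ignoring the visible copy entirely. Both sample pages are made up, and this is a simplified illustration, not a description of how any particular AI engine crawls a site.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.documents = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.documents.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed markup is skipped, not guessed at


def declared_types(html: str) -> list[str]:
    """Return every @type declared in the page's JSON-LD, in document order."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = []
    for doc in parser.documents:
        for item in (doc if isinstance(doc, list) else [doc]):
            if isinstance(item, dict) and "@type" in item:
                value = item["@type"]
                types.extend(value if isinstance(value, list) else [value])
    return types


# Two made-up pages with identical visible copy.
brochure_page = (
    "<html><body><h1>Welcome to Example Chiropractic</h1>"
    "<p>20 years of experience.</p></body></html>"
)
structured_page = brochure_page.replace(
    "</body>",
    '<script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "MedicalBusiness", '
    '"name": "Example Chiropractic"}</script></body>',
)

print(declared_types(brochure_page))    # []
print(declared_types(structured_page))  # ['MedicalBusiness']
```

Both pages look the same to a patient. Only one of them declares anything a parser can pick up.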

The Entity Consensus Standard

Here's a test I give docs.

Ask any AI right now — ChatGPT, Perplexity, Gemini, your choice — "Who is [your name] and where is their practice?"

If it can't answer confidently, or gets details wrong, or hedges — that's not a glitch. That's your entity consensus score, live.

AI doesn't trust one source. It looks for agreement. When your NPI record, your Google profile, your medical directories, and your website schema all say the same thing, AI can make a recommendation without second-guessing itself. When they conflict, it can't — and it won't.
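To put a number on "agreement" for your own audit, one simple approach is a majority vote per field: take the most common value across your sources and measure what share of sources match it. The sketch below does that on already-normalized placeholder records. The scoring rule is my own illustration. No AI engine publishes how it weighs these signals, so treat the output as a self-check, not a replica of anything.

```python
from collections import Counter


def field_consensus(values: list[str]) -> tuple[str, float]:
    """Return the most common value and the share of sources that agree with it."""
    value, votes = Counter(values).most_common(1)[0]
    return value, votes / len(values)


def consensus_report(records: dict) -> dict:
    """Compute per-field consensus across all sources that list the field."""
    fields = sorted({field for record in records.values() for field in record})
    report = {}
    for field in fields:
        values = [record[field] for record in records.values() if field in record]
        report[field] = field_consensus(values)
    return report


# Hypothetical, already-normalized records for one practice.
records = {
    "website_schema": {"name": "example chiropractic clinic",
                       "phone": "5550100000",
                       "address": "123 example street suite 100"},
    "google_business_profile": {"name": "example chiropractic clinic",
                                "phone": "5550100000",
                                "address": "123 example street suite 100"},
    "healthgrades": {"name": "example chiropractic clinic",
                     "phone": "5550109999",
                     "address": "123 example street"},
    "zocdoc": {"name": "example chiropractic clinic",
               "phone": "5550100000",
               "address": "125 example street suite 100"},
}

for field, (value, share) in consensus_report(records).items():
    print(f"{field}: {share:.0%} of sources agree on {value!r}")
```

In this made-up data the name is unanimous, the phone has one dissenter, and the address is split, which is the field you'd fix first.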

Simple standard. Most practices don't meet it.

| What AI Checks | Human-Optimized Website | AI-Optimized Infrastructure |
|---|---|---|
| Business Identity | Logo and colors | Schema declaring business type, address, credentials |
| Service Clarity | Copy describing services | MedicalBusiness schema mapping exact services and specialties |
| Credential Verification | Bio page mentioning degrees | Structured data cross-referenced with NPI and medical directories |
| Location Trustworthiness | Contact page with address | Consistent entity citations across 15+ medical and local directories |
| Topic Authority | Blog posts targeting keywords | Intent-mapped content clusters covering the full patient question journey |

The Hopium Cycle — Why Your Reports Look Green and Your Phone Still Doesn't Ring

I call it the Hopium Cycle.

You hire an agency. Monthly reports show up. Everything looks like progress — rankings improving, impressions up, traffic trending in the right direction. New patient calls: flat.

So you give it another quarter. Still flat. You switch agencies. New dashboard, new faces, same green graphs, same result.

The Hopium Cycle isn't about bad agencies. It's about the wrong channel.

Most marketing agencies aren't lying to you. They're measuring exactly what they promised to measure. The problem is the channel they're measuring is the one your patients are already leaving.

The Measurement Lie

Let me ask you something honest.

When was the last time you opened Google, browsed through ten websites, and called the one that looked best?

Because your patients aren't doing that either. They're asking ChatGPT. They're asking Perplexity. They're getting one answer and acting on it. The "browse ten blue links" behavior is disappearing — and it's disappearing faster than most agencies will admit, because admitting it means admitting their core service is losing relevance.

Your green report is an accurate measurement of a shrinking platform. That's the honest version.

One other thing worth naming directly: some providers are now selling dashboards that claim to track how often AI cites your practice in real time. That data doesn't exist in a reliable format. AI engines don't publish citation APIs. The whole premise is built on something that can't be verified. We don't publish vibes. We publish receipts. If someone's selling you a live citation count, ask them to explain the methodology. You won't get a clean answer.

This Isn't for Every Doctor

I want to save us both some time here.

If your first reaction after reading all of this is "okay, how does this compare to what I'm paying now?" — we're not the right fit. And I'd rather tell you that upfront than waste your time.

The Budget-First Buyer is the most common reason I watch authority infrastructure fail. Not because the system doesn't work. Because building real AI authority takes 12-18 months of consistent, compounding execution — not a sprint that shows results in next month's report. That kind of work costs real money. It's not comparable to a $500/month SEO retainer because it's not doing the same thing.

The two-AI validation system we use isn't a plugin. It's a proprietary methodology — Gemini researches, Claude writes, Gemini validates, Claude refines — built specifically to produce content that satisfies machine reasoning standards. That's not a commodity. It's not priced like one.

If you want to build the kind of AI authority that makes you the answer when a patient asks ChatGPT for the best chiropractor in your city — this works. I've watched it work.

If you want the cheapest option that does something digital, there are plenty of agencies ready to take that budget. We're not one of them.

| Marketing Approach | What It Measures | What It Misses |
|---|---|---|
| Traditional SEO | Keyword rankings, impressions, click-through rates | AI recommendation frequency, entity consensus score, machine readability |
| Paid Advertising | Cost per click, conversion rate, short-term lead volume | Compound authority, long-term patient trust, zero-click recommendation |
| AI Authority Infrastructure | Entity verification, schema compliance, intent cluster depth | Nothing: this is what AI actually reads |

Frequently Asked Questions

Why isn't my 5-star Google rating enough for AI to recommend me?

It's a strong signal. Just not the only one AI requires.

AI is building a trust profile — and that profile requires consensus from dozens of sources, not a 4.9 on a single platform. Your Google rating looks great. But if your Healthgrades listing has outdated information, your Zocdoc entry shows a different phone number, and your website schema doesn't confirm the same identity — AI has no way to know which version is real.

So it doesn't recommend anyone it can't verify across the full picture.

One great rating on one platform doesn't override a fractured identity everywhere else. The whole footprint has to hold.

What exactly is "Machine Readability"?

Machine readability is the technical infrastructure layer — schema markup, structured content hierarchy, entity citation consistency — that allows AI to identify your practice as a specific, verified entity rather than a generic collection of web pages.

Your website looks fine to a patient. To AI, it's a locked room. If the structured layer underneath the design doesn't exist, AI can't get inside. It can't verify who you are, what you offer, or why it should stake a recommendation on your name.

Pretty design doesn't open the door. Infrastructure does.

Do I have to rebuild my entire website to fix this?

Usually not the aesthetic. But the infrastructure underneath almost always needs to be restructured.

Think of it this way: you don't tear down the building to rewire the electrical. The walls stay. The exterior stays. But what's running inside the walls gets rebuilt to a standard the building never had.

That's what we do. We leave your design alone and build the engine that was never there to begin with.

How does AI decide which chiropractor is the "best" in my city?

It doesn't look for the "best." It looks for the most verifiable.

I want to be specific about what "verifiable" means here, because this is the part most docs haven't fully processed. AI can't evaluate your adjustment technique. It can't assess your patient outcomes. It has no way of knowing that you're genuinely better at treating sciatica than the practice three blocks over. None of that data exists in a format AI can read.

What it can evaluate is your entity footprint — how consistently and completely your practice identity appears across every platform AI uses to cross-reference local providers. That's what gets you recommended. Not talent. Not reviews alone. Verifiable infrastructure.

Is this just another version of SEO?

No. These are fundamentally different strategies with fundamentally different goals.

SEO asks: "How do I rank on this list?" AEO (answer engine optimization) asks: "How do I become the name AI says?" Those questions don't belong in the same conversation. The channel is different. The goal is different. The execution is different. The patient behavior driving both of them is completely different.

I tell docs: if your current agency is measuring keyword impressions, they're playing a different game than the one your patients are actually playing. Comparing what an AI Authority Engine actually does with an SEO package isn't comparing two types of the same service. It's comparing two different eras of patient discovery.

Can't I just add schema to my existing website myself?

You can add a basic schema tag. That doesn't create AI authority.

I've seen docs do this — add a LocalBusiness tag, run a schema validator, get a green checkmark, and wonder why nothing changed. Because a LocalBusiness tag is one layer of a much deeper structure. Service-level schema. Credential mapping. FAQPage layers. Cross-platform entity alignment. All of it has to work together.

A single tag in isolation is like buying a front door for a house with no walls. The door is technically there. That's about all you can say for it.
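A quick way to see how much a lone tag leaves out is to check it against the layers listed above. The sketch below does that for a JSON-LD document. The mapping from each layer to a schema.org property is my own assumption for illustration, not an official checklist, and FAQPage markup would be checked separately because it lives in its own document.

```python
# Hypothetical mapping from each layer to one schema.org property that could carry it.
LAYER_KEYS = {
    "business type": "@type",
    "location": "address",
    "services": "hasOfferCatalog",
    "credentials": "employee",
    "cross-platform links": "sameAs",
}


def missing_layers(jsonld: dict) -> list[str]:
    """List the layers whose property is absent or empty in the document."""
    return [layer for layer, key in LAYER_KEYS.items() if not jsonld.get(key)]


# The kind of basic tag that passes a validator but declares very little.
basic_tag = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Chiropractic Clinic",
}

print(missing_layers(basic_tag))
# ['location', 'services', 'credentials', 'cross-platform links']
```

A validator's green checkmark only says the tag is well-formed. A check like this one asks the separate question of whether the tag says enough.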

Why is my competitor getting recommended when I have better reviews?

Because AI isn't measuring reviews alone. It's measuring the totality of your entity signals.

This is the one that genuinely frustrates docs, and I get it. But here's the reality: your competitor figured out something you haven't yet. Doesn't mean they're a better practice. Doesn't mean they deliver better outcomes. It means their schema is cleaner, their directories are consistent, and their content is structured for AI extraction.

AI made a machine trust decision — not a quality judgment. The free AI Visibility Check exists specifically to show you where that gap lives. Because once you can see it, you can close it.

What does "entity trust" actually mean for my practice?

Entity trust is the degree to which AI can confidently identify your practice as a real, verified, unique business — distinct from every other practice with a similar name or location.

Think of it as your practice's digital fingerprint. Every platform carrying your information is either confirming that fingerprint or introducing noise. When the signals stack — when your NPI, your directories, your schema, and your website all say the same thing — AI can place you with confidence.

Low entity trust doesn't mean you did something wrong. It usually means no one ever built the infrastructure in the first place. Nobody installed the engine. And because it was never there, AI has never been able to verify you well enough to stake a recommendation on your name.

That changes when the infrastructure changes.

The Verdict Is Already Out

Here's the honest version of this.

AI gives one answer. Not a list. Not a ranking. One name. If it's not yours, you don't exist in that conversation — and that conversation is happening hundreds of times a day in your city, in your specialty, in your patient demographic, right now.

I've watched the gap widen with practices that had no idea they were losing ground. The SEO reports were green. The reviews were strong. The website looked great. And every month that passed without an authority infrastructure in place, the competitor who built one first got a little further ahead.

You don't need a new website. You don't need another ad campaign. You need the engine under the hood — the infrastructure layer AI was built to read. And the longer your practice goes without it, the more ground it hands to whoever in your market figured this out first.

If reading this made you wonder whether your practice is invisible right now — that's worth finding out before it becomes a six-month problem.

The AI Visibility Check surfaces exactly what this article describes: whether your schema exists and whether it goes deep enough, whether your entity data is consistent across the platforms AI uses to verify you, and whether your content is structured for AI extraction or just written for an algorithm you've already started to outgrow.

You've read what causes the problem. The check shows you whether you have it.