Integrity Over Shortcuts: The Unbreakable Rule of AI Authority

Tactics like keyword stuffing, publishing unverified claims, or using low-quality AI-generated content create signals of untrustworthiness that AI engines are specifically trained to detect. These shortcuts might temporarily boost vanity metrics like traffic or impressions, but they fundamentally undermine the entity trust that determines whether AI will recommend your practice at all. The Federal Trade Commission has established clear standards for truthful advertising, and AI engines increasingly use similar verification frameworks to evaluate digital content. When your digital presence contains exaggerated claims that can't be verified, or when your content contradicts information from authoritative sources, AI doesn't just ignore you—it actively learns to distrust your entity.

Conversely, a strategy rooted in factual accuracy, sourced claims, and deep structured content builds a foundation of trust that AI engines reward with recommendations. Research published in the Journal of Business Ethics demonstrates that exaggerated marketing claims cause measurable long-term damage to consumer trust and brand equity. AI engines are learning to detect these same patterns. Every verifiable fact, every properly cited statistic, every piece of content that aligns with authoritative sources strengthens the trust signals that determine whose name AI says when someone asks for help. This isn't philosophy—it's engineering. Integrity is the raw material AI uses to build entity trust.

Last Updated: May 5, 2026

- Why Marketing Agencies Sell Shortcuts
- How AI Engines Detect Integrity Violations
- The Technical Cost of Taking Shortcuts
- What Integrity-Based Authority Actually Looks Like
- The ROI of Building With Integrity
- Red Flags That Signal Shortcut-Based Agencies
- Frequently Asked Questions
  - What are some common "ROI shortcuts" that marketing agencies sell?
  - How can a long-term authority strategy deliver a better ROI?
  - How does AI even detect a lack of integrity in a website?
  - Is it possible to get foundational results and build with integrity?
  - What questions should I ask an agency to determine if they prioritize integrity?
  - Why does iTech Valet refuse to guarantee results?
  - How long does it take to repair a digital footprint damaged by shortcut tactics?
- Conclusion: Integrity as Competitive Advantage

Why Marketing Agencies Sell Shortcuts

The marketing industry isn't broken by accident. It's designed this way.

The business model rewards the pitch, not the outcome. Agencies that promise fast wins close deals faster. They onboard more clients. They churn through practices every 6-12 months and replace them with the next batch of hopeful buyers.

The economics don't reward building authority that takes 18 months to compound.

They reward selling a 90-day miracle that gets the contract signed today.

I've seen this hundreds of times. Agency promises page one rankings or a flood of new patients. They deliver a traffic spike. The chiropractor sees movement and thinks it's progress.

Three months later, the traffic hasn't converted.

Six months later, the contract ends.

The agency moves on. The practice is left with a contaminated digital footprint and deeper skepticism than they started with.

That's not incompetence. That's the hopium-selling agency model operating exactly as designed.

The Business Model Behind the Promise

Low client commitment means high volume. If your average client stays 6-12 months, you need a constant pipeline of new deals to keep revenue stable.

Long-term partnerships—where you build authority that compounds over years—don't fit that model. They require deeper client education, longer sales cycles, and a tolerance for slower initial results.

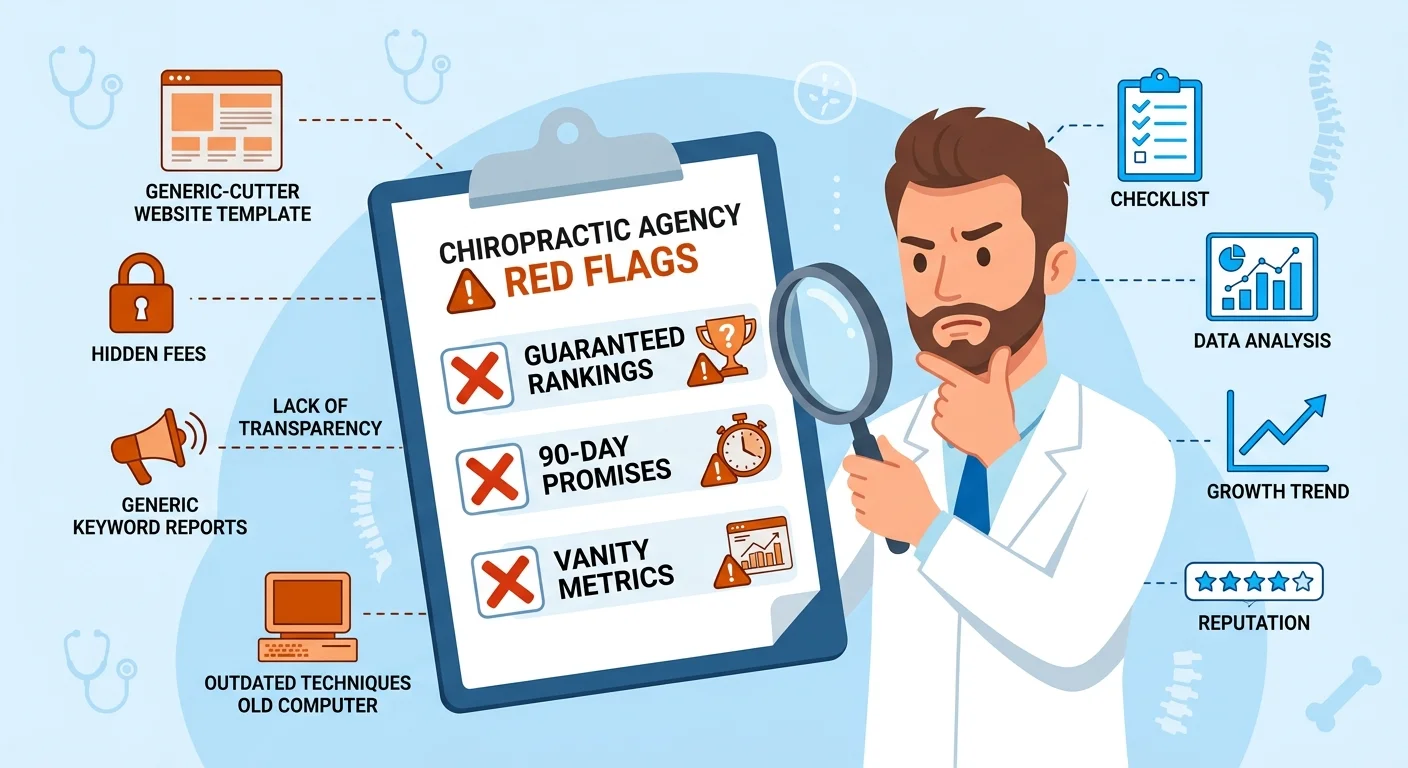

So the industry optimized for what closes fastest: guaranteed rankings, 90-day ROI promises, vanity metric dashboards that show impressive numbers even when patient bookings haven't moved.

It's easier to sell a miracle than a foundation.

The client gets dopamine. The agency gets paid. And when it doesn't work, both parties blame the execution instead of the model.

The cycle repeats because most practices don't understand the difference between movement and progress. A traffic spike feels like success. An uptick in impressions looks like momentum.

By the time the chiropractor realizes none of it converted into patient bookings, the contract is already over and the next agency is pitching the same playbook with slightly different branding.

Why Shortcuts Feel Like They Work

Shortcuts deliver immediate feedback. You see numbers move. Traffic goes up. Click-through rates improve. The dashboard looks alive.

After months of feeling invisible, that movement feels like validation.

The problem? Movement isn't progress.

A traffic spike from low-quality SEO tactics might send 500 visitors to your site in a month. But if those visitors came from irrelevant keywords or were just bots inflating the count, zero of them book an appointment.

The number moved. Nothing else did.

According to Deloitte Insights, trust and authenticity are primary drivers of consumer loyalty—not short-term offers or vanity metrics.

AI engines don't care about your traffic numbers. They care whether your entity can be verified. Whether your claims match what authoritative sources say. Whether the content on your site is deep enough to cite.

If your shortcut strategy boosted traffic but didn't build entity trust, you got movement. Not progress.

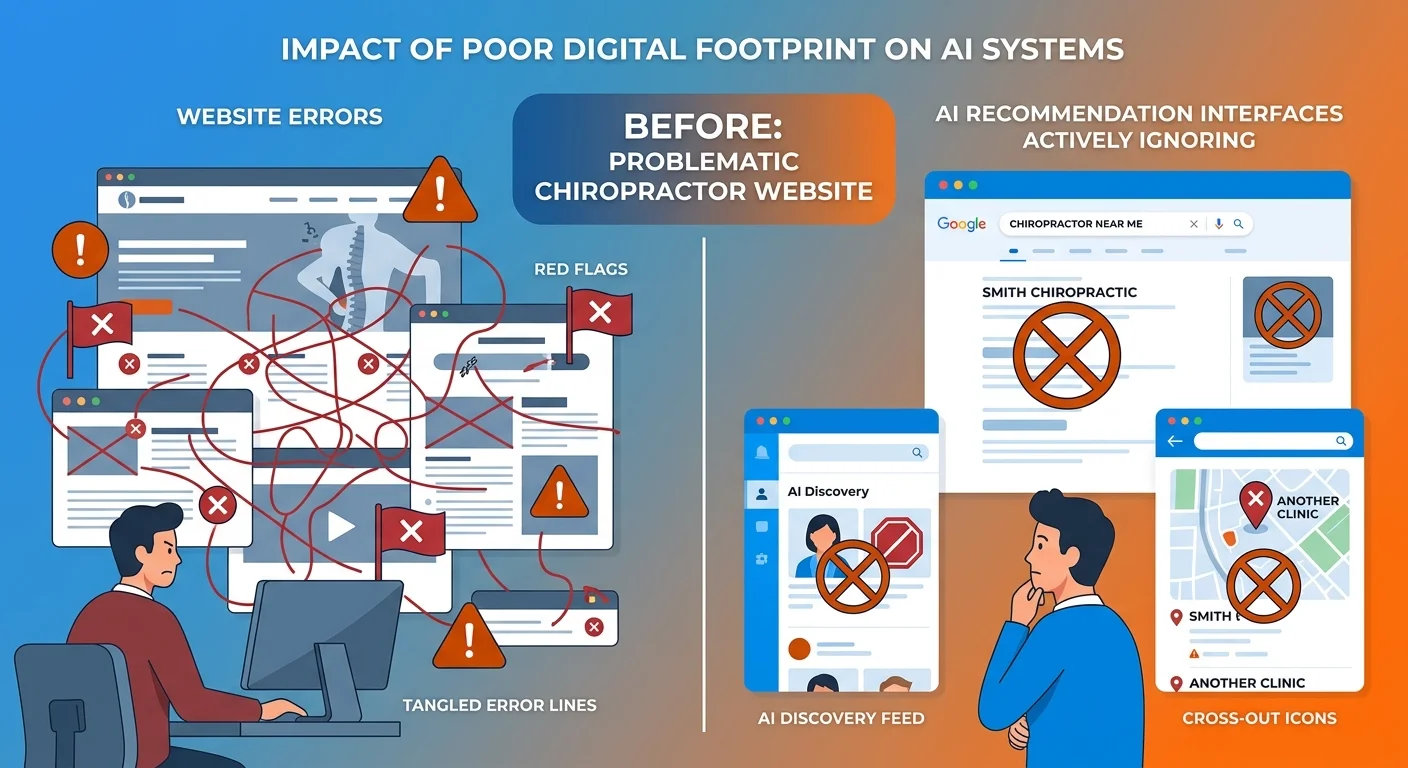

The Hidden Cost You Don't See

The real damage happens behind the scenes.

Every month you publish thin content or maintain inconsistent entity signals, AI is learning. Not learning to trust you—learning to distrust you.

Your digital footprint becomes contaminated. Weak signals pile up. Unverified claims accumulate. AI cross-references your site against trusted directories and finds contradictions.

Your entity trust score—something most practices don't even know exists—decays silently while your agency celebrates traffic growth.

By the time you realize the strategy didn't work, the authority gap has widened. Competitors who built with integrity spent those same six months compounding. They're the ones AI recommends now.

You're not just back to square one.

You're behind where you started because you have to repair the damage before you can build clean.

How AI Engines Detect Integrity Violations

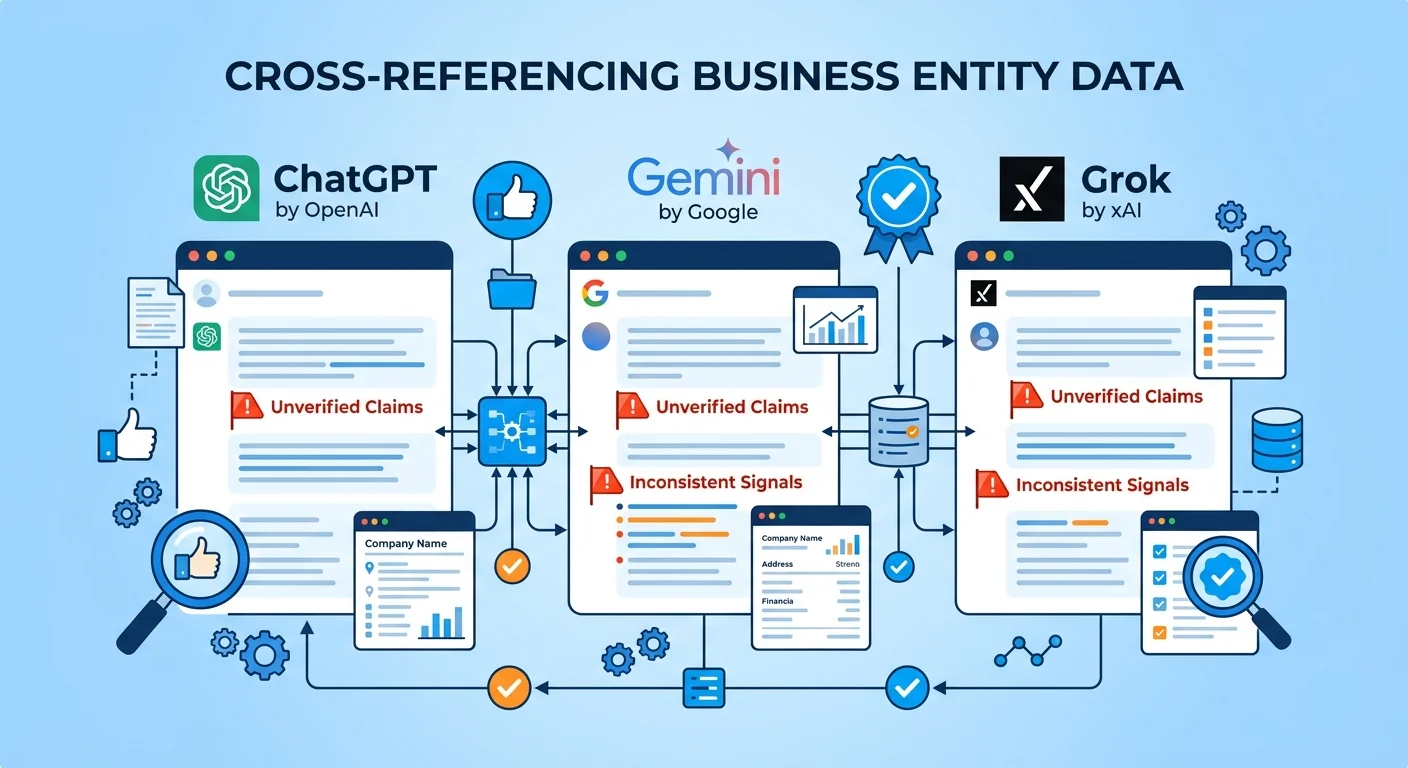

AI doesn't just read your content. It validates it.

Every claim you make gets checked against what other trusted entities say. Every piece of information on your site—your name, credentials, location, services—gets compared to directories, databases, and authoritative sources.

If the signals don't align, AI flags the inconsistency.

And inconsistency is the fastest way to tell AI your entity can't be trusted.

Cross-Reference Verification

When you publish a blog post claiming you're a specialist in sports injury rehab, AI doesn't take your word for it.

It looks for corroborating evidence.

Does your Google Business Profile list that specialty? Do your credentials support it? Do authoritative healthcare directories mention it?

If your site says one thing and trusted sources say another—or say nothing at all—AI interprets that as a lack of authority. Not as a signal to investigate further. As a reason to recommend someone else whose entity signals are consistent.

This is where most practices break down without realizing it.

Your website says you're certified in a specific technique. Your Healthgrades profile doesn't mention it. Your schema markup is missing entirely.

AI cross-references all three and finds gaps.

It doesn't fill those gaps with benefit of the doubt. It moves on to the next entity whose signals align.

The Content Marketing Institute explains that transparent, honest, and well-researched content builds audience trust that algorithms are learning to measure.

Entity trust depends on signal consistency. One verified claim strengthens your authority. One contradiction weakens it.

Ten contradictions and AI stops checking.

Signal Consistency Measurement

Name, address, phone number, credentials, services offered—every entity signal must match across every platform.

This isn't about branding. It's about verification.

If your website lists your practice name as "Smith Chiropractic Wellness Center" but your Google Business Profile says "Smith Chiropractic" and your Yelp page says "Dr. Smith's Wellness Clinic," AI sees three different entities.

Not one practice with inconsistent branding—three separate entities it can't reconcile.

This is where template websites and low-quality directory listings do permanent damage. The template came pre-filled with generic schema markup that doesn't match your actual entity. The directory listing was built years ago and never updated.

AI tries to verify your practice and finds conflicting information everywhere it looks.

Most practices don't realize they're failing this test until they see what AI-readable infrastructure actually requires. By then, the damage is already reflected in their visibility—or lack of it.
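To make the naming problem concrete, here is a minimal sketch of a consistency check you could run on your own listings. It is an illustration only, not how any AI engine actually reconciles entities. The function names, the platform keys, and the choice of the website as the canonical source are all assumptions made for this example.

```python
import re


def normalize_name(name: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so that
    trivial formatting differences do not count as mismatches."""
    cleaned = re.sub(r"[^a-z0-9\s]", "", name.lower())
    return " ".join(cleaned.split())


def find_name_mismatches(
    listings: dict[str, str], canonical_source: str = "website"
) -> dict[str, str]:
    """Return every platform whose practice name differs from the
    canonical one after normalization (hypothetical helper)."""
    canonical = normalize_name(listings[canonical_source])
    return {
        platform: name
        for platform, name in listings.items()
        if normalize_name(name) != canonical
    }


# The three names from the example above.
listings = {
    "website": "Smith Chiropractic Wellness Center",
    "google_business_profile": "Smith Chiropractic",
    "yelp": "Dr. Smith's Wellness Clinic",
}

mismatches = find_name_mismatches(listings)
for platform, name in mismatches.items():
    print(f"{platform}: '{name}' does not match the website name")
```

Run against the three names above, the check flags both the Google Business Profile and the Yelp listing. The same comparison extends to address, phone number, and credentials.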

Content Quality Assessment

Thin content doesn't just fail to rank. It actively signals to AI that your entity lacks depth.

When AI evaluates your content, it's measuring semantic density—how thoroughly you cover a topic, how many related concepts you address, how well your content connects to authoritative frameworks.

A 400-word blog post that vaguely mentions "back pain treatment" tells AI nothing.

A 2,000-word article that explains mechanisms, addresses contraindications, cites research, and provides implementation steps tells AI this entity knows what they're talking about.

Unsourced claims are another red flag. If you state that "80% of patients see improvement in three visits" but provide no citation, AI can't verify it. And if AI can't verify it, it can't cite it.

Your content gets ignored—not because the claim is false, but because there's no way for AI to confirm it's true.

Vague language is the third failure mode. "We offer comprehensive care" means nothing to AI. "We provide chiropractic adjustments, soft tissue therapy, and corrective exercise protocols for musculoskeletal conditions" gives AI specific concepts to validate against authoritative sources.

The difference between depth and fluff is measurable. AI measures it. And it uses that measurement to decide whose content gets cited.

| Trust Signal | What AI Validates | What Shortcut Agencies Skip |
|---|---|---|
| Practice Name | Exact match across website, Google Business Profile, directories, schema markup | Inconsistent naming, missing schema, outdated listings |
| Credentials & Certifications | Cross-referenced against licensing boards and professional associations | Vague claims with no verification pathway |
| Service Descriptions | Semantic alignment with authoritative healthcare terminology | Generic language ("comprehensive care," "holistic approach") |
| Published Claims | Sourced statistics, cited research, verifiable data points | Unsourced percentages, invented timelines, unverifiable outcomes |
| Content Depth | Semantic density, topic completeness, related concept coverage | Thin 400-word posts optimized for keywords, not answers |

The Technical Cost of Taking Shortcuts

Why "Black Hat" Tactics Are Dead

Keyword stuffing worked when Google's algorithm was simple pattern matching. Link schemes worked when backlinks were treated as votes without verification. Content spinning worked when AI couldn't detect semantic duplication.

Those tactics are dead.

Modern AI engines aren't ranking algorithms. They're verification engines. They don't just count signals—they validate them.

When you stuff keywords into meta descriptions, AI detects the manipulation. When you build links from low-authority directories, AI traces the network and flags it as artificial. When you spin content or publish AI-generated fluff without verification, AI identifies the lack of depth and moves on.

Search Engine Journal confirms that shortcut-based "black hat" SEO tactics are increasingly ineffective against more sophisticated algorithms.

The practices that used these tactics aren't just invisible now—they're blacklisted. AI learned to distrust their entities. And once that distrust is established, reversing it takes longer than building clean from the start.

The Authority Decay You Can't See

Every month you publish unverified content, your authority doesn't stay neutral. It decays.

AI is constantly re-evaluating entities. It checks whether your claims still align with trusted sources. It measures whether your content depth has improved or stagnated. It compares your entity signals to competitors in your market.

If your signals are weakening—or even staying flat while competitors are strengthening theirs—your relative authority declines.

This is the gap most practices don't see widening.

You think you're maintaining your position. You're actually falling behind. Competitors who build with integrity are compounding every month. Their entity trust grows. Their citation velocity increases.

The distance between their authority and yours expands silently.

By the time you realize it, the gap is too wide to close with a few months of better content. You're not competing against where those practices are today. You're competing against 18 months of compounding authority you didn't invest in.

The Repair Cost Nobody Mentions

Fixing a contaminated digital footprint takes longer than building clean from the start.

You're not just adding new signals. You're fighting AI's learned distrust. Every weak signal has to be corrected. Every inconsistency has to be resolved. Every low-quality piece of content has to be replaced or removed.

And while you're doing that, competitors are still compounding.

Some agencies leave clients worse off than when they started. The practice thought they were investing in visibility. They were actually paying to make themselves less trustworthy to AI.

The traffic spike ended. The contamination remained.

And the repair process—if they even understand it's necessary—costs more in time and money than the original shortcut saved.

What Integrity-Based Authority Actually Looks Like

Integrity isn't abstract. It's a process with specific technical requirements.

It's not about being ethical for the sake of being ethical. It's about building the kind of entity signals AI engines can verify, trust, and cite.

Every component of that process is measurable. Every output is traceable.

Verifiable Claims Only

Every statistic sourced. Every claim verified against authoritative sources.

No vibes. Just receipts.

Our proprietary Two-AI Validation System works like this: Gemini researches the topic and maps the evidence. Claude writes the content using that evidence. Gemini validates the draft against the original sources. Claude refines based on the validation feedback.

The final output contains only claims that can be traced back to institutional sources.

If a statistic can't be verified, it doesn't get published. If a claim contradicts authoritative sources, it gets rewritten or removed. If the semantic density isn't deep enough to answer the question fully, the content doesn't ship.

This isn't perfectionism. It's the minimum standard required to build entity trust with AI engines that cross-reference everything.
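The final rule in that process, "no source, no publish," can be sketched in a few lines. This is not the Two-AI Validation System itself. It is a simplified illustration of the last gate only. The `Claim` class and `split_publishable` function are hypothetical names, and the check only tests whether a citation is attached, not whether the citation actually supports the claim.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    source: Optional[str] = None  # citation for the claim; None means unverified


def split_publishable(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Separate claims that carry a citation from those that do not.
    Assumption: a non-empty source string stands in for real verification."""
    publishable = [c for c in claims if c.source]
    held_back = [c for c in claims if not c.source]
    return publishable, held_back


draft = [
    Claim("A claim backed by a cited study", source="Placeholder citation"),
    Claim("80% of patients see improvement in three visits"),  # no citation
]

publishable, held_back = split_publishable(draft)
for claim in held_back:
    print(f"Hold back until sourced: {claim.text}")
```

In a real workflow the held-back claims would go back for sourcing, rewriting, or removal rather than being silently dropped.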

Consistent Entity Signals Across Platforms

Name, credentials, location, services—every signal matches everywhere.

Your website schema markup says "Dr. Sarah Mitchell, Doctor of Chiropractic, licensed in California, specializing in sports injury rehabilitation."

Your Google Business Profile says the same thing. Your Healthgrades listing says the same thing. Your Vitals profile says the same thing.

AI checks all four and finds perfect alignment. That's one more entity signal verified.

Compare that to a practice where the website says one thing, the directory listings say another, and the schema markup is either missing or filled with generic placeholder text from a template.

AI tries to verify the entity and finds contradictions.

It doesn't interpret that as an opportunity to dig deeper. It interprets it as a reason to recommend someone else.

Entity consistency isn't branding. It's verification infrastructure. And without it, everything else you build sits on an unstable foundation.
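For reference, the schema markup described above might look something like the sketch below, which builds a JSON-LD snippet in Python. Treat the property choices as assumptions rather than a prescribed template: `Person`, `jobTitle`, `knowsAbout`, and `hasCredential` are schema.org terms, but which types and properties best fit a given practice should be checked against the schema.org documentation and a structured data validator.

```python
import json

# Illustrative entity data taken from the example above.
practitioner = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Dr. Sarah Mitchell",
    "jobTitle": "Doctor of Chiropractic",
    "knowsAbout": "Sports injury rehabilitation",
    "hasCredential": {
        "@type": "EducationalOccupationalCredential",
        "credentialCategory": "license",
        "name": "California chiropractic license",  # assumed wording
    },
}

# Serialize and wrap in the script tag that pages use to embed JSON-LD.
json_ld = json.dumps(practitioner, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```

Whatever the exact markup, the values in it should match the Google Business Profile and directory listings word for word.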

Deep Content That Actually Helps

Not blog posts optimized for keywords. Content that fully answers the question, addresses objections, and provides implementation steps.

A blog post titled "5 Tips for Lower Back Pain" tells AI almost nothing.

A 2,500-word article titled "Why Your Lower Back Pain Isn't Improving: Mechanisms, Contraindications, and When to Seek Care" tells AI this entity understands the topic deeply enough to cite.

The semantic density AI rewards isn't about word count. It's about concept coverage. How many related ideas does the content address? How thoroughly does it explain mechanisms? How well does it connect to authoritative frameworks?

Thin content signals shallow expertise. Deep content signals authority.

AI measures the difference and adjusts citation probability accordingly.

| Content Element | Integrity Standard | Shortcut Version |
|---|---|---|
| Claims & Statistics | Every claim sourced from Tier 1/Tier 2 institutional sources (CDC, NIH, peer-reviewed journals) | Vague percentages with no citation, invented timelines, "studies show" with no link |
| Content Depth | 2,000+ words covering mechanisms, contraindications, implementation, objections | 400-word listicle optimized for a keyword, no substance |
| Entity Signals | Exact name, credentials, location match across website, schema, Google Business Profile, directories | Inconsistent naming, missing schema, outdated listings never updated |
| Verification Process | Two-AI validation: research → write → validate → refine | One-pass AI generation, no fact-checking, publish and move on |
| Topic Coverage | Addresses Direct, Indirect, Latent, Counter, and Post-Intent layers | Answers the literal question only, ignores objections and implementation |

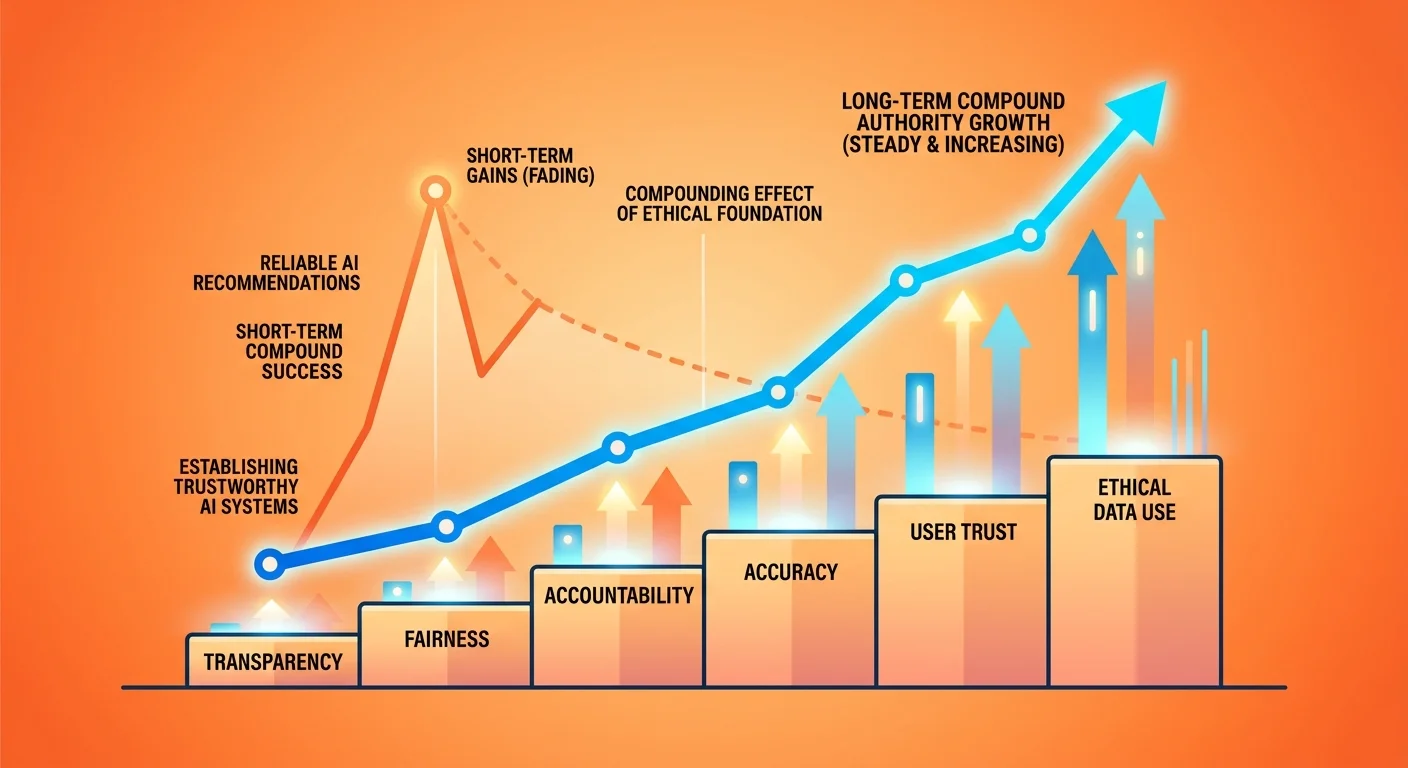

The ROI of Building With Integrity

Authority compounds. Shortcuts expire.

That's the entire ROI case in one sentence. Everything else is just explaining the mechanism.

The Compounding Effect

Each month of verified content builds on the last. Entity trust strengthens. AI's confidence in recommending your practice increases.

Unlike paid ads that stop when you stop paying, authority accumulates.

Month one, you publish five AEO articles with sourced claims and deep semantic coverage. AI sees five new entity signals it can verify.

Month two, you publish five more. Now AI has ten verified signals.

Month three, another five. AI's confidence that your entity is trustworthy increases with every validated claim.

By month six, AI isn't just seeing isolated content. It's seeing a pattern of depth, consistency, and verifiability. That pattern is what triggers citation.

By month twelve, competitors who started building clean authority at the same time are compounding at the same rate you are. Competitors who waited—or who spent that time chasing shortcuts—are twelve months behind.

That gap doesn't close by catching up. It closes by out-compounding over the next twelve months.

And most practices never start.
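The month-by-month arithmetic above is simple enough to sketch. The snippet below only counts published pieces at the stated pace of five articles per month. It says nothing about how an AI engine weighs them. The six-month horizon and the constant names are assumptions for illustration.

```python
from itertools import accumulate

ARTICLES_PER_MONTH = 5  # publishing pace from the example above
MONTHS = 6              # assumed horizon for this illustration

# Running total of verified articles at the end of each month.
monthly = [ARTICLES_PER_MONTH] * MONTHS
cumulative = list(accumulate(monthly))

for month, total in enumerate(cumulative, start=1):
    print(f"Month {month}: {total} verified articles published")
```

A practice that starts six months later begins at zero while this count is already at thirty, which is the gap the section describes.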

High-Quality Patient Inquiries

Patients referred by AI are pre-qualified. They asked a specific question and AI said your name. They're not clicking through a list—they're arriving with intent.

When someone Googles "chiropractor near me," they get ten options. They click three. One might convert.

When someone asks ChatGPT "who's the best chiropractor for sports injury rehab in my area," AI gives one answer.

If that answer is you, the patient isn't comparison shopping. They're arriving because AI already did the vetting.

The conversion rate difference is measurable. Pew Research data shows widespread public skepticism and difficulty agreeing on basic facts, which helps explain why many patients lean on an AI engine's vetting rather than relying only on their own research.

They trust the recommendation because they trust the engine that made it.

Durable Competitive Advantage

Quick pause before we go further.

If you're looking for a way to flood your schedule in the next 60 days, this isn't it. Authority is built in layers. Foundation first, content compounding on top, AI visibility deepening every month.

If that timeline doesn't fit your decision framework—no hard feelings.

But if you're tired of short-term tactics that disappear the moment you stop paying for them, you're in the right place.

Because once you're the answer AI trusts, competitors can't displace you by outspending you on ads. They have to out-authority you. And that takes time they haven't started investing yet.

Practices that promise 90-day results are selling movement, not progress. If that's what you're after, this approach won't fit.

But if you understand that the gap between invisible and irreplaceable is measured in months of verified execution—not weeks of vanity metrics—then you already understand why integrity compounds into competitive advantage.

| Metric | Authority Approach | Shortcut Approach | Result After 12 Months |
|---|---|---|---|
| Entity Trust | Verified claims, consistent signals, deep content builds trust each month | Unverified claims, inconsistent signals, thin content creates distrust flags | Authority: high AI citation probability, growing entity trust score. Shortcuts: low or declining trust; AI ignores or penalizes the entity. |
| Patient Inquiry Quality | AI-referred patients arrive pre-qualified with specific intent | Traffic-driven visitors comparison shop, low conversion rates | Authority: higher conversion, lower acquisition cost per patient. Shortcuts: more clicks, fewer bookings, higher cost per conversion. |
| Durability | Authority compounds: each month stronger than the last, no payments required to maintain | Results disappear when payments stop, no lasting asset created | Authority: durable asset, ongoing ROI without ongoing spend. Shortcuts: expense becomes recurring, zero residual value when contract ends. |
| Competitive Position | Competitors must out-authority you, which takes 12+ months they haven't started | Competitors can outspend you immediately, displacement is trivial | Authority: defensible position, widening gap over time. Shortcuts: vulnerable position, easily displaced by the next bidder. |

Red Flags That Signal Shortcut-Based Agencies

You've been burned before. Here's how to spot the next one before you sign.

They Guarantee Rankings or Leads

No one can guarantee what AI will recommend.

If an agency promises page one rankings or a specific number of patient bookings, they're either lying or using tactics that will damage your entity trust long-term.

Here's the thing about guarantees: they work backwards from the close, not forwards from the outcome. The agency needs the contract signed. Promising a result gets the contract signed.

Whether they can deliver that result is irrelevant to the business model because they'll replace you with the next client before the timeline expires.

I tell docs this every time: I won't promise you rankings. Not because this doesn't work—because no one controls what AI recommends.

What I will say is this: our processes are verified by AI engines themselves. We build the signals AI uses to determine trust. The outcome of that process is that most practices see movement within 90-180 days.

But if someone promises you a guaranteed outcome by a specific date, they're not selling authority. They're selling hope.

They Measure Vanity Metrics

Traffic, impressions, click-through rates—these numbers look good in a report but don't correlate with patient bookings.

Ask what they measure. If they can't explain how entity trust or AI citation velocity factors into their metrics, walk away.

I've seen this play out too many times. The agency sends a monthly report showing traffic doubled. Impressions tripled. The chiropractor feels validated.

Six months later, patient bookings haven't moved.

The traffic came from irrelevant keywords. The impressions were just bots. The clicks bounced immediately because the landing page wasn't aligned with the intent.

Vanity metrics are designed to make movement look like progress. They're not lying—the numbers did go up. But the numbers that matter—entity trust, citation velocity, conversion rate from AI-referred patients—those numbers weren't even being tracked.

If the agency can't explain what signals AI uses to verify your entity, they're not building authority. They're building dashboards.

They Won't Explain Their Process

Agencies that hide behind proprietary secrets or refuse to explain how content gets verified are selling shortcuts they don't want you to understand.

Integrity-based partners explain their work because it's defensible.

When I walk a practice through our process, I explain exactly how the Two-AI Validation System works. I explain why every claim gets sourced. I explain what entity signals are and how AI cross-references them. I explain why we refuse to guarantee outcomes but guarantee our execution is verified.

If an agency says "trust us, it's proprietary," what they're really saying is "we don't want you to know what we're actually doing because if you understood it, you wouldn't sign."

Proprietary doesn't mean advanced. It means opaque.

And opacity is how shortcuts stay hidden until the contract ends.

Frequently Asked Questions

What are some common "ROI shortcuts" that marketing agencies sell?

Promises of page one rankings in short timeframes. Vanity metric obsession—traffic instead of bookings. Low-quality AI-generated content without verification. Link schemes. Keyword stuffing.

All tactics designed to show fast movement without building real authority.

The pitch sounds compelling because it promises the outcome you want without explaining the mechanism. "We'll get you on page one in 90 days" feels like a concrete deliverable.

What they don't mention is that page one for an irrelevant keyword, or page one today that disappears in 120 days when the tactic gets flagged, isn't the outcome you're paying for.

These shortcuts exist because they're easier to sell than a 12-month authority build. The agency gets paid either way. You're the one left with a contaminated digital footprint when the contract ends.

How can a long-term authority strategy deliver a better ROI?

Authority compounds. Each month of verified content builds on the last.

Unlike paid ads that disappear when you stop paying, authority becomes a durable asset that continues generating high-quality patient inquiries. The ROI curve starts slower but ends exponentially higher.

Month one, the ROI might feel neutral. You're building foundation.

Month six, you're starting to see AI citations.

Month twelve, competitors who started at the same time are compounding at the same rate you are—and everyone who waited is twelve months behind.

Month eighteen, the gap is so wide that competitors can't close it by outspending you on ads. They'd have to out-authority you. And that takes time they haven't started investing.

The ROI of authority isn't linear. It's exponential.

That's why practices that stick with it for 18-24 months own their markets. The ones that quit after six months gave their competitors the compounding advantage.

How does AI even detect a lack of integrity in a website?

AI cross-references claims across multiple sources. If your site makes claims that aren't supported by trusted entities, or if your entity signals are inconsistent across platforms, AI flags it as less trustworthy.

Thin content, unsourced statistics, and contradictory information all trigger distrust signals.

According to the Federal Trade Commission, advertising must be truthful and not misleading. AI engines are learning to apply similar verification standards.

When your content claims "90% of patients see results in three visits" but provides no citation, AI can't verify it.

When your website lists different credentials than your directory profiles, AI sees conflicting entity signals.

When your blog posts are 400 words of generic advice that could apply to anyone, AI measures the semantic shallowness and moves on.

An integrity violation doesn't just fail to help. It actively damages your entity trust score.

AI isn't neutral when it finds contradictions—it learns to distrust you.

Is it possible to get foundational results and build with integrity?

Foundational results often appear within months—most practices see movement in AI recommendations within the first 90-180 days.

But the true compounding effect takes time. The goal isn't to chase a short-term spike. It's to build a durable asset that competitors can't displace.

Here's what I tell every practice: you're not choosing between quick and slow. You're choosing between movement and progress.

A traffic spike next month that disappears in 90 days is movement. Authority that starts slower but compounds for years is progress.

Integrity-based authority can show foundational results quickly—especially if your competitors are still using shortcuts. But the practices that treat the first 90 days as the entire ROI window are the ones that quit before the compounding starts.

The ones that understand authority is a 12-18 month build are the ones that own AI recommendations in their market two years from now.

What questions should I ask an agency to determine if they prioritize integrity?

Ask them to explain their content verification process. Ask what metrics they measure beyond clicks and impressions. And ask what they guarantee—if they guarantee rankings or leads, it's a red flag.

Integrity-based partners explain their process and refuse to promise outcomes they can't control.

Specifically:

- "How do you verify the claims in the content you publish?"

- "What entity signals do you track beyond traffic and rankings?"

- "Can you explain how AI evaluates my practice's trustworthiness?"

- "What do you guarantee, and what do you refuse to guarantee?"

If they can't answer the first three, they're not building authority. If they guarantee outcomes on the fourth, they're selling hope.

Integrity-based agencies explain their work because the process is defensible. Shortcut agencies deflect because the tactics aren't.

Why does iTech Valet refuse to guarantee results?

Because integrity matters more than closing the deal.

No one controls what AI recommends. What we control is the quality of the infrastructure and content we build. We guarantee our processes are verified by AI engines themselves—not that AI will recommend you by a certain date.

I won't promise you a timeline. Not because this doesn't work—because authority doesn't run on a microwave schedule.

What I will say: every month of execution builds on the last. The practices that stick with it compound. The ones that quit give that ground to whoever kept going.

iTech Valet's founding principles are built on this conviction: AI gives one answer. If you're not it, you don't exist. But becoming that answer requires building entity trust that AI can verify—not chasing shortcuts that contaminate your digital footprint.

We guarantee the execution. The outcome follows from that.

But guaranteeing the outcome before the foundation is even built? That's hopium. And we don't sell that.

How long does it take to repair a digital footprint damaged by shortcut tactics?

Longer than building clean from the start.

You're not just building authority—you're fighting AI's learned distrust of your entity. It depends on how much contamination exists, but expect 6-12 months of focused cleanup before you can start compounding new authority on a clean foundation.

Every weak signal has to be corrected. Every directory listing with inconsistent information has to be updated. Every piece of thin content has to be replaced or removed. Every unverified claim has to be re-sourced or deleted.

And while you're doing that repair work, competitors who built clean from the beginning are still compounding.

The repair cost is why choosing the right partner the first time matters so much. Paying for shortcuts that damage your entity trust doesn't just waste money—it sets you back further than doing nothing at all.

By the time you realize it, you're not competing against where competitors are today. You're competing against 12-18 months of compounding authority you didn't invest in.

Conclusion: Integrity as Competitive Advantage

Integrity isn't a nice-to-have business value. It's the raw material AI uses to build entity trust.

Every shortcut you take today widens the gap between you and competitors building with verified facts and consistent signals.

The practices that own AI recommendations six months from now are the ones investing in integrity-based authority today. Not because they're more ethical—because they understand how AI recommendation technology works.

AI engines are verification engines. They don't just count signals—they validate them.

Keyword density doesn't matter if the claims can't be sourced. Backlinks don't matter if the entity signals are inconsistent. Traffic doesn't matter if the content is too thin to cite.

The only input AI rewards with recommendations is entity trust.

And entity trust is built from integrity.

The choice isn't between quick wins and long-term authority. It's between building an asset that compounds or chasing tactics that expire.

One makes you the answer. The other makes you invisible.

And the gap between those two outcomes widens every month you wait to choose.

I've built this business on a philosophy grounded in experience, not theory. I've watched practices choose shortcuts and regret it. I've watched practices build with integrity and own their markets.

The difference isn't luck.

It's choosing the foundation that AI can verify over the tactic that expires when the contract ends.

Want to see where your practice stands right now? Most chiropractors we talk to are convinced they're in good shape—until we show them what ChatGPT, Gemini, and Grok actually say when asked to recommend someone in their area. The AI Visibility Check takes 15 minutes and makes the gap self-evident. No sales pitch. Just real data showing whose name AI says—and whose it doesn't.

If the results don't make the problem clear, walk away. But if they do? You'll know exactly what needs to happen next.