How Do Patients Use AI to Search for Chiropractors in 2026?

In 2026, patients use AI to search for chiropractors by typing a conversational question into ChatGPT, Perplexity, or Gemini and getting a single direct recommendation back — usually one to three practice names — without ever scrolling through a search results page.

No Google. No tabs. No comparison shopping.

They type something like "best chiropractor for lower back pain near me" — the way they'd text a friend — and they get a name. Maybe two. Sometimes just one. They call that practice. If it isn't yours, you never knew the opportunity existed. Your analytics show nothing. No lost session. No flagged revenue. Just silence where a booking should have been.

Each platform works differently. ChatGPT pulls local business data primarily from Foursquare, cross-referenced with Bing. Perplexity crawls the live web and leans on healthcare directories like Zocdoc and Healthgrades. Gemini runs off Google's ecosystem — your Business Profile, your website structure, your schema data.

Different sources. Different signals. One thing in common.

None of them care how many keywords are on your homepage. They're looking for the practice the internet most consistently agrees is the authority.

That's a completely different game from traditional SEO. This article covers how each platform finds you, what entity trust means and why it decides who gets recommended, how to format content so AI engines actually cite it, and what happens after a patient clicks through from an AI recommendation.

Last Updated: March 21, 2026

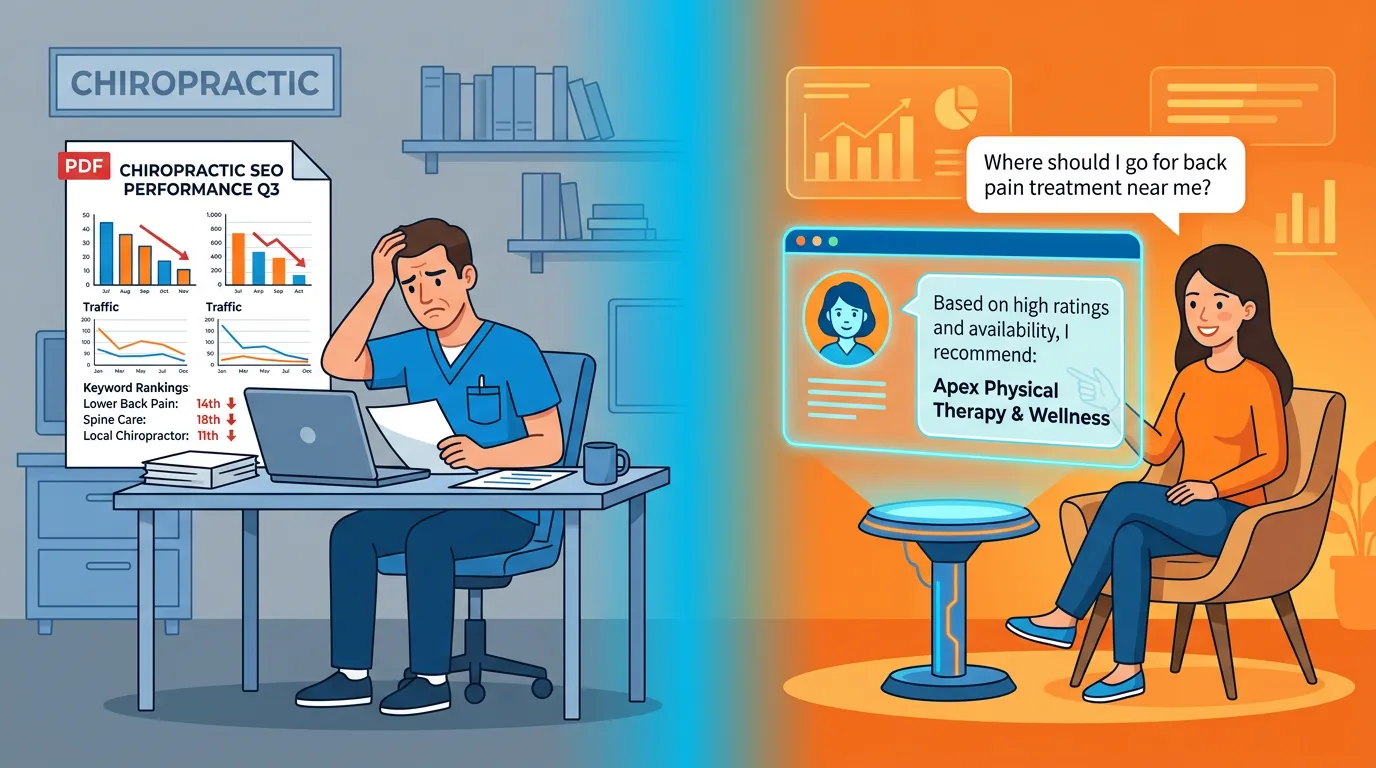

Why Your Traffic Report Is Hiding the Real Problem

I've been an entrepreneur for over 20 years and have built over 100 websites since 2019. And I can tell you exactly what's happening in your market right now — whether you're tracking it or not.

SparkToro research using Datos clickstream data found that 58.5% of U.S. Google searches already ended without a single click — before AI chatbots became the default search tool for millions of people. More than half. Patients were getting answers straight from the results page and never visiting a website.

AI engines pushed that even further.

When a patient asks ChatGPT who to see for a pinched nerve, they get a name. Not options. A name. If it isn't yours, they call that practice — and your dashboard shows you nothing. No bounce. No lost session. No attribution. It simply never existed inside a system you track.

That's revenue walking out the door without a trace.

It's why auditing your clinic's AI visibility has become the most important diagnostic any practice can run. Not a website audit. Not a keyword report. An actual check of what ChatGPT, Perplexity, and Gemini say when patients in your market ask about the services you offer.

That's exactly what the AI Visibility Check surfaces — in 15 minutes, on a Zoom call.

Why Traditional SEO Is a Broken Compass for AI Discovery

Stop chasing keywords like it's 2018.

Gartner forecast that traditional search engine volume will drop 25% by 2026 as users shift to AI chatbots and other virtual agents. That prediction was made two years ago. We're living it now.

And while that's happening, some agencies are still emailing you PDFs of ranking positions. I'm not being dramatic — I talk to docs about this all the time, and most have no idea their keyword rankings are measuring a channel their patients have already left.

Here's what that PDF doesn't tell you:

- Whether ChatGPT named your practice — or named your competitor — when patients in your market searched for neck pain, sciatica, or sports injury treatment

- Whether your Foursquare listing exists — the platform powering the majority of ChatGPT's local business data, which most chiropractors have never touched

- Whether your entity data is consistent — because AI builds trust by cross-referencing your name, address, and phone number across dozens of directories, and a single inconsistency creates doubt

Traditional SEO optimizes for a list. AI search produces a verdict.

Those aren't variations of the same thing. When an AI engine evaluates your practice, it's not counting keyword density. It's asking one question: Does enough of the internet consistently agree this practice is legitimate and relevant?

If yes, you get cited. If not, your competitor does.

| What Traditional SEO Optimizes For | What AEO Optimizes For |
|---|---|
| Keyword rankings in a results list | Being the single recommended answer |
| Monthly organic traffic | AI citation frequency |
| Backlink quantity | Entity trust across platforms |
| On-page keyword density | Structured content depth and intent |
| Click-through rate | Zero-click authority and direct discovery |
| Google-only visibility | Multi-engine visibility across ChatGPT, Perplexity, Gemini |

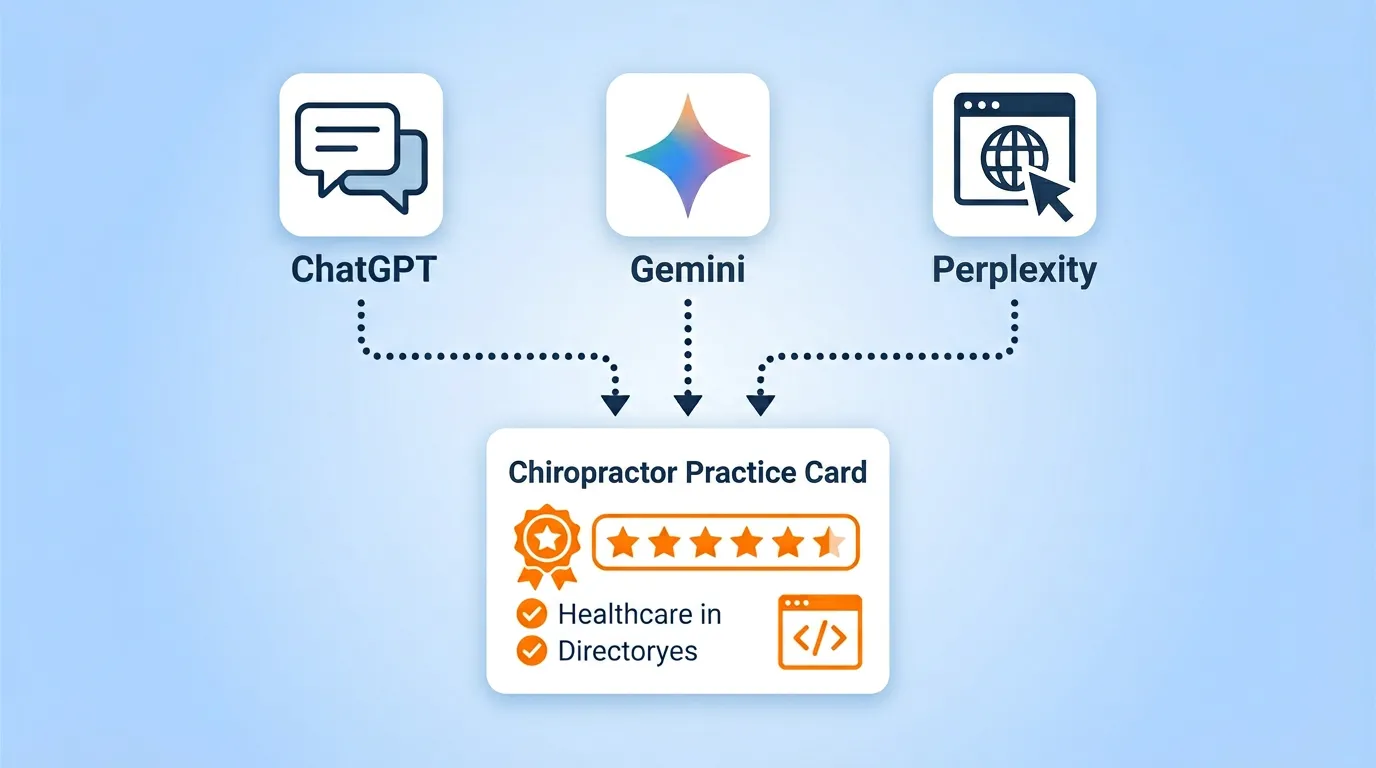

How ChatGPT, Perplexity, and Gemini Actually Find Your Practice

These three platforms are not the same thing.

I see docs optimize for Google and assume they're covered everywhere. They're not. Each platform has different failure points — and if you're invisible on one, you're leaving real patients on the table.

ChatGPT and the Platform You've Probably Never Logged Into

ChatGPT's local recommendation engine has one clear primary source: Foursquare.

Over 70% of ChatGPT's local business data — names, addresses, categories, hours, ratings — comes from Foursquare's Places database. ChatGPT then cross-references that with Bing's web index to fill gaps.

Here's the problem.

Your Google Business Profile doesn't feed ChatGPT. Your Google reviews, your five-star rating, your carefully optimized local listing — none of it automatically translates to ChatGPT visibility.

I've watched this play out with practice after practice. Ranking first on Google. Invisible to ChatGPT. They assumed those were the same thing.

They're not.

Here's the kicker: Foursquare retired its consumer City Guide app in December 2024, with the web version following in early 2025. Most chiropractors either never created a listing or haven't touched it in years. Nobody told them it was quietly powering the most-used AI chatbot on the planet.

The practices ranked first on Google and invisible to ChatGPT aren't the exception. They're the majority.

Go claim your Foursquare listing today. Fill every field — address, phone, hours, category, a 150+ word description, photos. Then make sure every data point matches your Google profile, Yelp, and website exactly. Character for character.

Inconsistency creates doubt. Doubt means your competitor gets named instead of you.

How Perplexity Uses Healthcare Directories to Decide Who Gets Cited

Perplexity is the one most docs have never considered — and it may be sending more traffic than ChatGPT.

Unlike ChatGPT, Perplexity crawls the live web in real time. When a patient asks about chiropractors for neck pain, it searches, synthesizes, and shows its work — every citation is visible right there in the response.

That's the part that changes the math.

Because patients can see exactly where the recommendation came from, the sources Perplexity cites carry real weight. Healthcare directories like Zocdoc, Healthgrades, and Vitals are exactly what it treats as trusted validators. Practices with accurate, complete profiles on those platforms are far more likely to get included.

And unlike ChatGPT where the recommendation just appears, Perplexity's citations are clickable — which means being named there isn't just authority, it's actual traffic to your site.

BrightEdge Generative Parser tracking data shows that for healthcare informational queries, AI Overviews pull from the same authoritative healthcare sources Perplexity trusts. Get your directory presence right and it compounds across platforms.

Gemini and the Google Ecosystem Advantage

Gemini is Google's AI — and if you've done any traditional local SEO work, you're actually ahead here.

It pulls from your Google Business Profile, your reviews, your schema markup, and the full Google web index all at once. More signal than any other platform. That means your existing Google presence isn't wasted — it feeds directly into Gemini's read on your practice.
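To make "schema markup" concrete, here's a minimal sketch of what structured data for a practice could look like, generated with a few lines of Python. Everything in it is an assumption for illustration: the practice name, address, phone, and URL are placeholders, and `MedicalBusiness` is just one schema.org type that could fit a chiropractic office. Your developer may choose a different type or add more fields.

```python
import json


def build_practice_jsonld(name, street, city, region, postal_code, phone, url):
    """Return a schema.org JSON-LD string describing a local practice."""
    data = {
        "@context": "https://schema.org",
        "@type": "MedicalBusiness",  # assumption: type chosen for illustration
        "name": name,
        "telephone": phone,
        "url": url,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    return json.dumps(data, indent=2)


# Placeholder values, not a real practice.
practice_jsonld = build_practice_jsonld(
    name="Example Chiropractic",
    street="123 Main St, Suite 4",
    city="Springfield",
    region="IL",
    postal_code="62701",
    phone="+1-555-555-0100",
    url="https://www.example.com",
)

# The JSON-LD goes inside a script tag in the page's HTML.
print(f'<script type="application/ld+json">\n{practice_jsonld}\n</script>')
```

The name, address, and phone in this markup should be the same strings you use on every directory listing, so the website agrees with the rest of your footprint.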

But here's what I tell every doc who says their website looks great: understanding AI recommendation criteria will change how you think about your site entirely.

"My website looks great" and "my website works for Gemini" are two completely different statements.

| Platform | Primary Data Source | Key Trust Signal | Patient Traffic Potential |
|---|---|---|---|
| ChatGPT | Foursquare + Bing | Directory completeness, NAP consistency | Lower (fewer visible citations) |
| Perplexity | Real-time web crawl | Healthcare directories, content authority | Higher (citation-forward interface) |
| Gemini | Google ecosystem | GBP completeness, schema, content depth | High (integrated into Google search) |
| Google AI Overviews | Google index + web | Organic authority + entity signals | Highest (in-SERP placement) |

Entity Trust — The Signal Deciding Who Gets Recommended

AI doesn't pick the best chiropractor.

It picks the one the internet most consistently agrees is the authority.

That's chiropractic entity authority — and it's the concept that changes everything once docs actually understand it.

What "Consensus Trust" Actually Means in Your Market

Here's what I want you to really sit with.

The AI hasn't visited your clinic. It can't assess your technique. What it can do is look across hundreds of data sources — directories, review platforms, your website, schema data — and ask one question: Does this business appear consistently, accurately, and authoritatively across all of them?

If yes — trusted entity. Recommended.

If the data is scattered or thin — your competitor gets the mention.

I've seen this wreck practices that had genuinely great reputations in their communities. They had the reviews. They had the results. But their digital footprint was a mess — wrong address on three directories, unclaimed Foursquare, no schema on the website. To the AI, that looks like a practice that doesn't exist with any certainty.

Entity trust is built across the entire ecosystem. Not just your website.

The American Medical Association's ongoing research on AI in clinical settings documents how AI systems are increasingly navigating healthcare decisions — which means the trust standards these engines apply to providers are only getting stricter. The window to build this infrastructure before your competitors do is closing.

The Directory Gap Most Practices Don't Know Exists

Here's where most of the visibility is leaking — and it's fixable.

The typical chiropractor has a Google Business Profile. Maybe a Yelp listing. The address is on the website. Most stop there and assume they're covered.

They're not even close.

Getting AI to recommend you requires a much wider footprint. Here are the platforms that consistently appear in AI citation trails for healthcare providers:

- Foursquare (business.foursquare.com) — ChatGPT's primary local data source. Most practices have never claimed this. Not because they're lazy — because nobody told them it still mattered.

- Zocdoc — Heavily cited by Perplexity for healthcare queries. Profile completeness directly impacts whether you get included.

- Healthgrades — A top-tier healthcare authority domain. Being listed here signals legitimacy to AI crawlers evaluating source quality.

- Vitals — A healthcare-specific directory feeding AI recommendation logic across multiple platforms.

- Bing Places — ChatGPT uses Bing's web index as a supplementary data layer when building local recommendations.

- Apple Maps — Increasingly referenced by Siri and voice-based AI queries, especially on mobile.

- WebMD Health Services listings — Where applicable, these carry serious healthcare authority weight with AI crawlers.

The consistency rule isn't flexible. Name, address, and phone number — identical across every single one. Not close enough. Exactly the same.

One wrong address. One old suite number still live on three directories. That's enough to create doubt. And doubt means your competitor gets the recommendation instead of you.
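If you want to check that consistency rule yourself, here's a rough sketch in Python. It assumes you've copied your name, address, and phone by hand from each listing into one place. The listings shown are made-up placeholders, and `find_nap_mismatches` is a hypothetical helper, not part of any directory's tooling.

```python
from collections import Counter

# Hypothetical listings, copied by hand from each directory.
listings = {
    "Website": ("Example Chiropractic", "123 Main St, Suite 4", "(555) 555-0100"),
    "Google Business Profile": ("Example Chiropractic", "123 Main St, Suite 4", "(555) 555-0100"),
    "Foursquare": ("Example Chiropractic", "123 Main Street, Ste 4", "(555) 555-0100"),
    "Yelp": ("Example Chiropractic", "123 Main St, Suite 4", "555-555-0100"),
}

FIELDS = ("name", "address", "phone")


def find_nap_mismatches(listings):
    """Compare each field, character for character, to its most common value."""
    mismatches = []
    for index, field in enumerate(FIELDS):
        values = Counter(record[index] for record in listings.values())
        reference, _ = values.most_common(1)[0]
        for source, record in listings.items():
            if record[index] != reference:
                mismatches.append((source, field, record[index], reference))
    return mismatches


nap_mismatches = find_nap_mismatches(listings)
for source, field, found, expected in nap_mismatches:
    print(f"{source}: {field} is {found!r}, expected {expected!r}")
```

The comparison is deliberately strict. "Street" versus "St" and a reformatted phone number both get flagged, because the rule here is exact match, not close enough.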

| Directory | Primary AI Engine Impact | Priority Level |
|---|---|---|
| Foursquare | ChatGPT (primary data source) | Critical |
| Google Business Profile | Gemini + Google AI Overviews | Critical |
| Yelp | ChatGPT + Perplexity | High |
| Zocdoc | Perplexity (healthcare citations) | High |
| Healthgrades | Perplexity + AI crawlers broadly | High |
| Bing Places | ChatGPT (supplementary index) | High |
| Apple Maps | Siri / voice AI queries | Medium |
| Vitals | Perplexity + AI crawlers broadly | Medium |
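One way to turn that table into a to-do list is a short Python sketch like the one below. The priority levels come straight from the table. The `claimed` set is a hypothetical starting point (the "typical" footprint of a Google Business Profile plus Yelp), and `unclaimed_directories` is an illustrative helper name.

```python
# Directory priorities as listed in the table above.
DIRECTORY_PRIORITY = {
    "Foursquare": "Critical",
    "Google Business Profile": "Critical",
    "Yelp": "High",
    "Zocdoc": "High",
    "Healthgrades": "High",
    "Bing Places": "High",
    "Apple Maps": "Medium",
    "Vitals": "Medium",
}
PRIORITY_ORDER = {"Critical": 0, "High": 1, "Medium": 2}


def unclaimed_directories(claimed):
    """Return directories not yet claimed, most urgent first."""
    missing = [name for name in DIRECTORY_PRIORITY if name not in claimed]
    return sorted(missing, key=lambda name: PRIORITY_ORDER[DIRECTORY_PRIORITY[name]])


# Hypothetical starting point: only the two listings most practices have.
claimed = {"Google Business Profile", "Yelp"}
todo = unclaimed_directories(claimed)
for name in todo:
    print(f"{DIRECTORY_PRIORITY[name]:<8} {name}")
```

Swap in the listings you've actually claimed and the output is your working order, with Critical items first.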

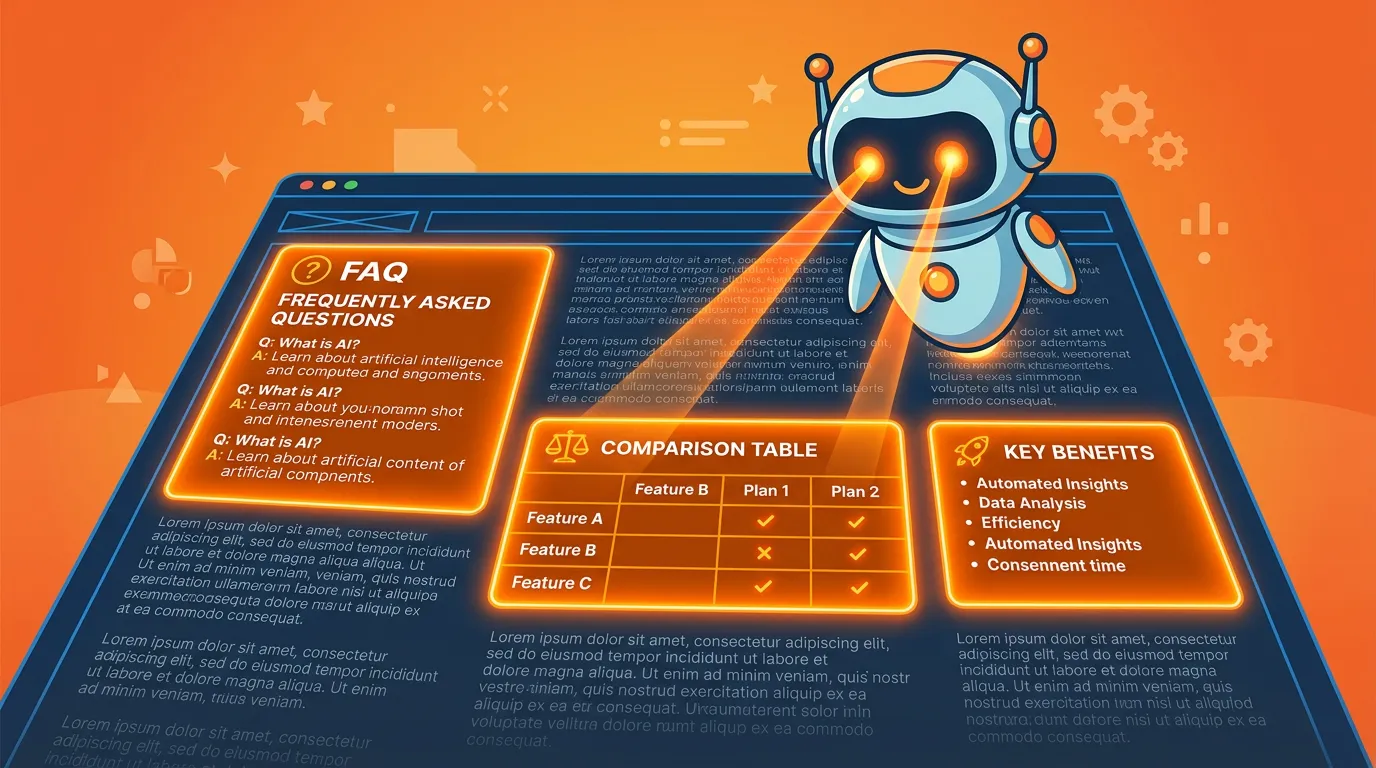

How Your Content Either Gets Cited or Gets Skipped

Getting the entity trust infrastructure right gets you considered.

Getting your content right gets you quoted.

Before anything — run an AI Visibility Check if you haven't already. I've run this with practices that were convinced they were in good shape. Most weren't.

Write for Direct Answers, Not Keyword Checklists

Here's what I see over and over: chiropractors writing content for how patients used to search.

Short keywords. Thin paragraphs. Generic service descriptions. That worked in 2015. Patients don't search like that anymore.

They type like people. "What kind of chiropractor should I see for chronic lower back pain from sitting at a desk all day?" Not "chiropractor back pain." A full, messy, conversational question.

Your content needs to match that at the structural level — and the single most important thing you can do is lead with the answer. Not build up to it. Not set context first. The answer. In the first sentence or two.

Why? AI engines extract "answer windows" from content to populate their responses. If your best information is buried in paragraph four, the AI moves on to whoever answered in paragraph one.

For your chiropractic content specifically:

- Service pages should open with a direct statement of what you treat and who you're the right fit for — not a generic paragraph about what chiropractic care is

- Condition pages should answer "what is this, who gets it, and what does chiropractic do for it" in the first 200 words

- FAQ sections with proper FAQPage schema are one of the highest-leverage investments in AI citation potential — these platforms actively pull from structured Q&A content

Research published in PMC (NCBI) examining how AI models handle clinical queries confirms that precision in structure and framing directly impacts which sources get surfaced. The content that answers the question cleanly wins. That's it.
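A quick way to sanity-check the "answer in the first 200 words" guideline is a small Python sketch like this one. It's a crude self-review aid under stated assumptions, not a model of how any AI engine extracts answers. It only checks whether the terms you care about show up in the opening words of a page. The page copy and helper names are placeholders.

```python
def first_words(text, limit=200):
    """Return the first `limit` whitespace-separated words of the text."""
    return " ".join(text.split()[:limit])


def missing_from_opening(text, required_terms, limit=200):
    """List the required terms that do not appear in the opening words."""
    opening = first_words(text, limit).lower()
    return [term for term in required_terms if term.lower() not in opening]


# Placeholder copy: 240 words of filler before the page names the condition.
buried_page = "Welcome to our practice. " * 60 + "We treat sciatica."
# Same copy with the answer moved to the front.
direct_page = "We treat sciatica. " + "Welcome to our practice. " * 60

buried_missing = missing_from_opening(buried_page, ["sciatica"])
direct_missing = missing_from_opening(direct_page, ["sciatica"])
print("Buried page is missing:", buried_missing)
print("Direct page is missing:", direct_missing)
```

If a condition page fails this check for its own condition name, the answer is probably sitting too far down the page.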

Tables and Structure Are Half the Battle

Tables are one of the most powerful tools for AI citation optimization.

They're also almost completely absent from most chiropractic websites. I'm not sure who decided chiropractors don't use tables — but that gap is an opportunity.

AI engines don't just read content — they parse it. A table communicates comparisons and relationships in a format machines can extract cleanly. A paragraph making the same point takes more processing.

In a world where the AI is evaluating dozens of candidate sources at once, "takes more processing" means "gets skipped."

For chiropractic content, tables work especially well for:

- Condition comparisons — "What's the difference between a disc herniation and a muscle strain?" is a perfect table

- Treatment timelines — Typical visit frequency by condition in a scannable format

- What to expect at a first visit — Patients asking AI for pre-appointment guidance respond well to structured content

- AEO vs. traditional SEO — Context patients need to understand why the approach matters

FAQPage schema makes this even more powerful. When your website has properly implemented JSON-LD FAQPage markup, Google's AI Overviews can pull your Q&A pairs directly into the featured response.
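For reference, here's a minimal sketch of FAQPage markup, built in Python so the structure is easy to see. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity` and `acceptedAnswer` properties are standard schema.org vocabulary. The helper name `build_faq_jsonld` is hypothetical, and the sample question is taken from this article's own FAQ.

```python
import json


def build_faq_jsonld(qa_pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


faq_jsonld = build_faq_jsonld([
    (
        "What is entity trust and why does it matter?",
        "Entity trust is the confidence an AI engine has that your practice "
        "is real, relevant, and authoritative enough to recommend.",
    ),
])
print(faq_jsonld)
```

The output belongs in a `<script type="application/ld+json">` tag on the same page where the questions and answers are visible to patients.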

Now... this part matters: format is a trust signal. A well-structured page tells the AI "this content is organized and extractable." You can have genuinely great clinical expertise and still lose the citation to a competitor who organized their content better.

This Strategy Isn't for Everyone — and That's Intentional

I want to be straight with you about who this is built for.

Building real AI authority takes time. Not 30 days. Not a quick push before your slow season. The practices with the strongest AI citation profiles right now built them through consistent, compounding work over months.

If you need measurable ROI before your next billing cycle or you're pulling the plug — this isn't your strategy. Not because it doesn't work, but because you'll quit right before it starts compounding. I call that the 90-Day Miracle mindset, and it's the single biggest reason I've seen docs fail at this.

The authority infrastructure required to win consistent AI recommendations isn't installed overnight. It's built — deliberately, piece by piece.

Every AI Authority article published, every directory listing claimed, every schema markup implemented — these stack. The gap between you and the practices that haven't done this work grows in your favor every single month.

The docs who started 12 months ago are harder to beat today. The ones who start today will be harder to beat 12 months from now. The ones still waiting? More ground to recover every quarter.

You don't have a marketing problem. You have a visibility infrastructure problem. And it costs more to solve the longer you wait.

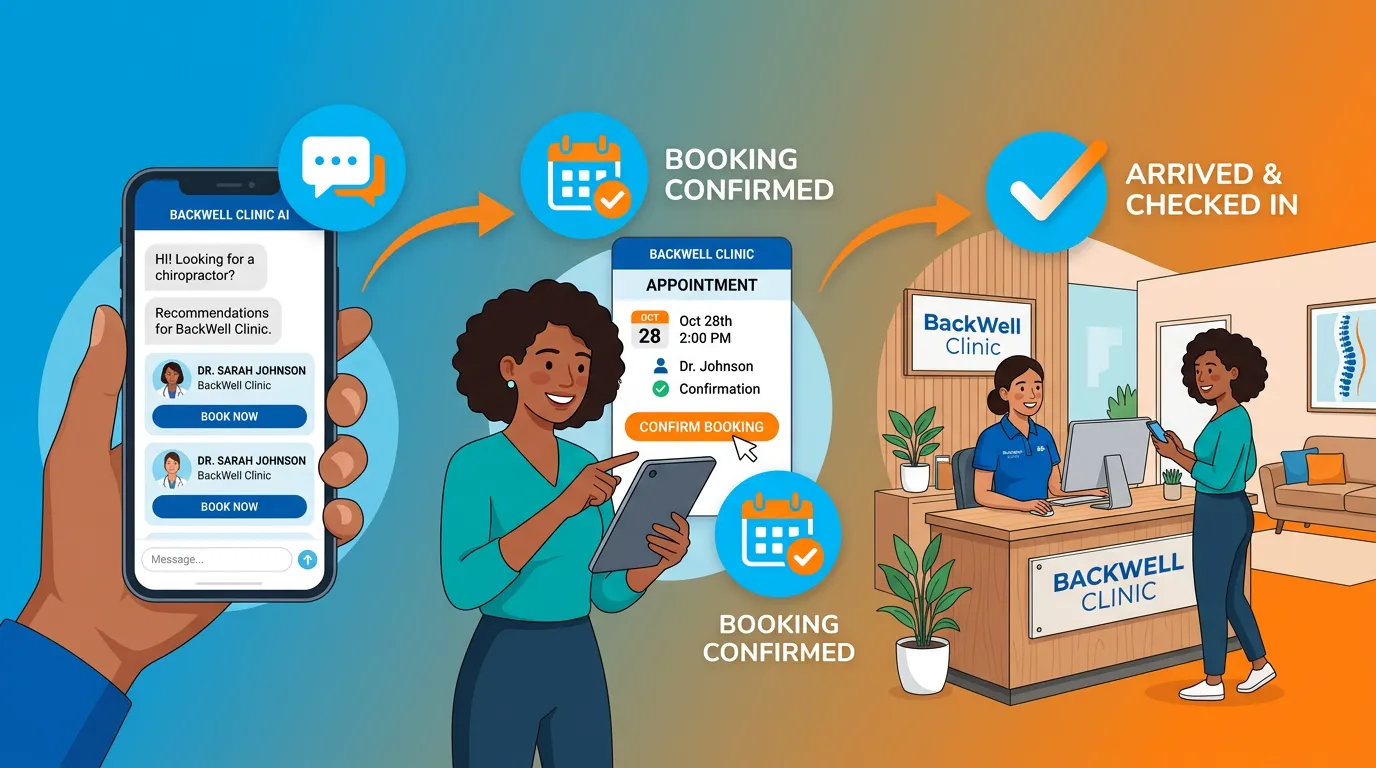

Turning an AI Recommendation Into a Booked Appointment

Getting cited by ChatGPT or Perplexity is only half the job.

The AI recommendation creates intent. What happens after determines whether it becomes a booking — or a missed opportunity you'll never know about.

What Happens When That Patient Lands on Your Website

Let me walk you through exactly how this plays out.

A patient asks Perplexity: "Who's a good chiropractor for sciatica near me?" Perplexity names your practice, cites your Healthgrades profile, and the patient taps the link.

What does your website do next?

If they land on a homepage with a stock photo and a generic tagline, you just lost a patient who arrived already sold. They came in trusting the recommendation. Your only job was to confirm it.

The AI-referred patient is different from the Google-referred patient.

They didn't comparison-shop. They didn't hesitate over five reviews. They were handed a recommendation by a system they trust — and they followed it. I've seen practices with mediocre websites lose these patients to competitors with worse reputations simply because the competitor's site was cleaner and had one obvious next step.

Your site needs to do three things for that patient:

- Confirm you are who the AI said you are — The name and details on your site need to match what they saw in the AI response. Any inconsistency creates doubt, and doubt kills bookings.

- Give one clear next step, immediately — Not a menu. Not a pop-up. One CTA they can't miss. "Book Your First Visit" beats a navigation bar with options every time.

- Give just enough to feel confident — The conditions you treat, a brief honest statement of your approach, real social proof. That's it. They don't need your whole story. Just confirmation they made the right call.

The patient arriving from an AI recommendation doesn't need to be sold. They need to be confirmed. Your website either does that or it doesn't. There's no neutral outcome.

Frequently Asked Questions

How is AI changing the way patients find chiropractors in 2026?

They're skipping Google and asking AI for a direct answer.

Instead of evaluating a list, they get one or two names and trust it. No additional research. The AI already did the comparison for them. AI-referred patients arrive pre-sold — they didn't weigh you against three competitors, the AI did.

Your only job is to not lose them after they click. But you have to be the one getting named first.

What's the difference between a Google search and a ChatGPT recommendation?

Google gives patients a list and lets them decide. ChatGPT decides for them.

When Google returns ten results, the patient evaluates. When ChatGPT responds with one or two names, it's saying "these are the right options" — and most patients accept that without questioning it.

Page one visibility is an opportunity to be evaluated. AI visibility is a recommendation. Those aren't the same thing — and that gap matters more every month.

Why does Perplexity specifically cite healthcare directories like Zocdoc?

Because Perplexity shows its work — citations are visible in the response.

Healthcare directories like Zocdoc, Healthgrades, and Vitals are exactly what Perplexity uses to validate recommendations. It cross-references these to confirm the recommendation is grounded in credible source data.

Appearing accurately on these directories isn't housekeeping. It's the difference between getting cited or not getting cited at all.

Do patient reviews still matter with AI-powered search?

More than ever — but for a different reason than you think.

In traditional SEO, reviews moved your Google ranking. In AI search, reviews are a confidence signal. The engine asks: do I have enough evidence to recommend this practice? Review volume, recency, star rating, and whether you actually respond all feed that calculation.

A practice with 200 stale reviews and zero responses loses to one with 80 recent reviews and a 100% response rate. Respond to your reviews. Both the good ones and the ugly ones.

What are conversational queries and why do they trigger AI Overviews?

A conversational query is what a patient actually types when they're not thinking about SEO.

"What's the best way to treat a herniated disc without surgery?" versus "herniated disc chiropractor near me." Full question. Natural language.

AI Overviews were built for those queries. Content with direct answers, FAQ sections, and comparison tables gets pulled in. Paragraph-heavy pages that bury the answer get skipped.

If your website reads like a brochure, it's performing for patients who search the way nobody searches anymore.

How can I track how many times AI has recommended my clinic?

Honest answer: you can't. Not reliably.

Anyone selling you a dashboard that "tracks your AI citation count" is selling something that doesn't exist. I've watched chiropractors get burned by that exact promise — a nice-looking report with numbers nobody can verify.

The only real method is manual discovery. Open ChatGPT, Perplexity, and Gemini. Run condition-specific and location-based queries. See who gets named. Repeat monthly. That's it. Real data you verify yourself — not a dashboard.
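If you want to keep that monthly check organized, here's a rough Python sketch. It doesn't call any AI platform. It only builds the list of queries to run by hand and tallies the names you write down. The conditions, city, and practice names are placeholders, and the query wording is one assumption about how a patient might phrase it.

```python
from collections import Counter
from itertools import product

PLATFORMS = ["ChatGPT", "Perplexity", "Gemini"]


def build_queries(conditions, city):
    """Combine conditions with a location into conversational prompts."""
    return [f"Who is the best chiropractor for {c} in {city}?" for c in conditions]


def monthly_checklist(conditions, city):
    """Every (platform, query) pair to run by hand this month."""
    return list(product(PLATFORMS, build_queries(conditions, city)))


def tally_mentions(results):
    """Count how often each practice was named across recorded results."""
    return Counter(name for names in results.values() for name in names)


# Hypothetical conditions and city.
checklist = monthly_checklist(["sciatica", "neck pain"], "Springfield")

# Filled in by hand after running each query; names are placeholders.
results = {
    checklist[0]: ["Practice A", "Practice B"],
    checklist[1]: ["Practice A"],
    checklist[2]: ["Practice B"],
}
mention_counts = tally_mentions(results)
print(mention_counts.most_common())
```

Save each month's results and you can see whether your name is showing up more or less often over time, using data you verified yourself.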

What is entity trust and why does it matter?

Entity trust is the confidence an AI engine has that your practice is real, relevant, and authoritative enough to recommend.

It's built from consistent directory listings, accurate NAP data, review patterns, schema markup, and content that demonstrates genuine expertise. The more those signals exist and agree with each other, the more confident the AI is in naming you.

AI doesn't pick the best chiropractor. It picks the one the internet most consistently agrees is the authority. That takes infrastructure work — not tricks, not shortcuts.

Is AEO different from traditional SEO? Can I do both?

Different disciplines — and they don't always overlap cleanly.

Traditional SEO optimizes for a ranked list patients click through. AEO (answer engine optimization) optimizes for being the single recommended answer inside a conversational AI response. Keyword-density tactics can actually hurt AEO when they fill pages with shallow content that doesn't answer questions directly.

That said, strong organic authority still matters for Gemini and Google AI Overviews. The practices winning most consistently treat them as separate disciplines and build for both.

You're Either Building Authority or Losing Ground

Here's what I keep coming back to every time I talk to a doc about this.

The gap between the recommended practice and the invisible one widens every month you do nothing.

AI trust compounds. Citations reference other citations. Directory presence reinforces schema data. Content depth creates more citation opportunities. Every AI Authority article published, every listing claimed, every markup implemented stacks on top of what came before.

That's the AI authority system working as designed — compounding over time, not burning out after a quarter.

And the reverse is just as true. Every month your competitor builds, they deepen a lead you'll eventually have to close. I've watched that math play out. It's not pretty when a practice finally realizes how far behind they've fallen.

Quick reality check on where we actually are: BrightEdge Generative Parser data shows AI Overviews now triggering on roughly half of all tracked queries — and for healthcare informational queries, coverage is dramatically higher. This isn't a trend to watch. Patients are searching this way right now.

If you are not the recommended answer in 2026, your practice simply doesn't exist to the AI-first patient.

That's the mechanism. AI gives one answer. If it isn't yours, the patient never knew you were an option. Your years of experience, your results, your reputation — none of it matters if the machine doesn't know you exist.

The practices that get this are building now. The ones that don't will be having this exact conversation in 2027 — except the gap will be wider and the cost to close it will be higher.

Right now, somewhere in your market, a patient just asked ChatGPT who to see for a pinched nerve.

They got an answer. Was it you?

If you don't know — or you already know it wasn't — that's exactly the gap the AI Visibility Check was built to expose.

In 15 minutes, you'll see how ChatGPT, Gemini, and Perplexity respond when patients in your market search for what you do. Not a traffic report. Not keyword rankings. The actual AI responses — with your name in them, or without it.

Most practices walk away either relieved or a little alarmed. Both outcomes are useful. Neither is as costly as not knowing.

The visibility gap doesn't close on its own.