The Five-Layer Intent Model: How We Write AI-Recommended Articles

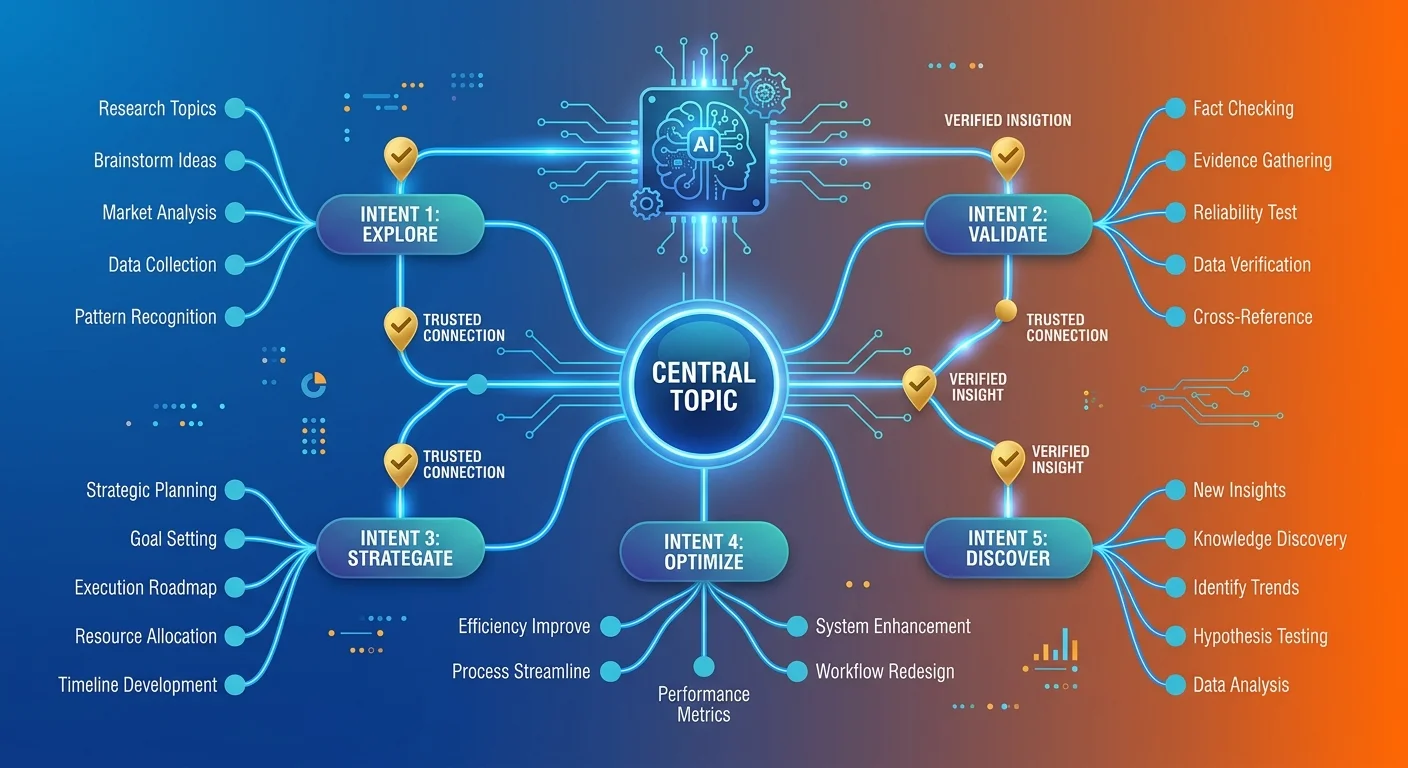

The Five-Layer Intent model is a framework for creating AEO content that satisfies an AI engine's need for comprehensive authority. It requires structuring an article to address not only the user's direct question but also their indirect goals, latent needs, potential objections (counter-intent), and necessary next steps (post-intent). Articles built this way demonstrate superior semantic density and entity trust, making them the most likely to be cited as the definitive answer.

When a patient asks ChatGPT or Gemini who the best chiropractor near them is, the AI engine synthesizes an answer from content it trusts. That trust comes from depth — not keyword density or backlinks. Traditional SEO optimized for Google's ranked list. AI engines don't present lists. They present verdicts. The content that gets cited isn't the content that matched a keyword best — it's the content that answered the entire ecosystem of questions around that keyword.

Last Updated: April 27, 2026

- Why Most Chiropractic Content Is Invisible to AI
- The Five-Layer Intent Model Explained
- How the Five Layers Build Semantic Density
- Why Generic AI Prompts Fail This Model
- The Traditional Intent Model Is Dead
- Frequently Asked Questions
  - How is the Five-Layer Intent model different from traditional keyword research?
  - Can I just apply this model to my existing blog posts?
  - What's the most common mistake practices make when trying to write for AI?
  - Is 'Latent Intent' just another name for 'long-tail keywords'?
  - After I understand this model, what's the first step to see where I stand?
- Conclusion

Why Most Chiropractic Content Is Invisible to AI

I've seen it a hundred times. A practice blogs for five years. Posts every week. Zero patient calls. Zero AI citations. Zero authority.

Not because blogging doesn't work. Because what they published was never authority content in the first place.

Most chiropractic articles answer the question and quit. "What causes sciatica?" gets 600 words pulled from three other sites, reworded enough to dodge plagiarism, published. Done. The practice thinks they're executing content marketing.

AI sees commodity text with no depth.

Here's what most practices miss: the content isn't technically wrong — it's just incomplete. And incomplete content doesn't get cited. It gets buried under the five practices that covered every angle.

The Commodity Content Problem

Here's the pattern: chiropractor hires a writer or fires up ChatGPT. The output is 800 words, keyword hit five times, couple subheadings, published.

Literal question answered. But not why they asked. Not the follow-up questions. Not the objections. Not what to do next.

AI engines classify this as surface-level. Not authoritative. Not comprehensive. Not worth naming.

According to HubSpot's 2024 SEO research, zero-click searches now make up the majority of all search activity. Users get their answer directly from AI — they never click through. If your content doesn't satisfy the engine's need for depth, you don't exist in that answer.

The format isn't broken. The execution is.

What AI Engines Look For

AI doesn't rank. It synthesizes answers from content it trusts.

Trust = depth. Specifically: depth across multiple dimensions. Factual accuracy. Conceptual breadth. Source verification. Intent coverage.

When AI evaluates an article, it's not counting keywords. It's mapping semantic relationships between concepts. It's checking whether the piece addresses the question, the context, the concerns, the objections, and the next steps.

This is semantic density. It's how AI decides whose name to say when someone asks for a recommendation.

Entity Trust is the foundation. Content is the proof layer. Without comprehensive content covering every angle, entity trust has nothing to validate.

| Dimension | Traditional Content Approach | Five-Layer Intent Approach | AI Engine Response |
|---|---|---|---|
| Question Coverage | Answers the literal question only | Addresses stated question, underlying goal, unstated needs, objections, and next steps | Cites as comprehensive authority |
| Depth Signal | 800 words, keyword-optimized | Concept mapping across multiple intent layers | Recognizes semantic density |
| Trust Validation | Generic claims, no source verification | Factual claims with institutional sources | Validates as trustworthy |
| User Journey | Ends at the answer | Guides from question to decision | Recommends as patient-ready |

The Five-Layer Intent Model Explained

This is the blueprint. Every article we write runs on this model.

Not because it makes content longer. Because it makes content complete.

The Five-Layer Intent model forces depth by design. Each layer addresses a different dimension of the patient's decision-making process. Miss one layer — AI sees incomplete. Cover all five — you've built something they have to cite.

Here's how it works.

Layer 1: Direct Intent

The literal question. "What causes sciatica?" "How does chiropractic help migraines?" "Is neck pain dangerous?"

Answer goes in the first 200–300 words. This is what the patient typed. This is what they expect immediately.

But if you stop here, you've covered maybe 20% of what AI needs to validate your authority.

Direct intent is necessary. Not sufficient.

Layer 2: Indirect Intent

The real goal behind the question.

Someone asking "What causes sciatica?" isn't researching for a paper. They want relief. They want to know if chiropractic helps. They want to know if they need surgery. They want to know what happens if they do nothing.

Indirect intent addresses the unstated "and then what?" underneath every search.

This is where commodity content fails. It answers the question without addressing why they're asking in the first place.

A 2021 study on query understanding found that the majority of user queries contain implicit intent you can't infer from the literal text. AI engines are built to detect this gap. Content that only covers direct intent gets classified as incomplete.

Layer 3: Latent Intent

What the patient doesn't know to ask yet.

Latent intent is unstated problems, considerations, implications that surround the topic. Integrations. Side effects. Long-term consequences. Alternative approaches they haven't considered.

This is where authority separates from commodity.

Anyone can answer the question. Authority anticipates the next five.

Example: patient asks "Can chiropractors treat herniated discs?" Latent intent lives in imaging requirements, treatment timelines, activity modifications, when surgical consult becomes necessary. They didn't ask those questions. But if your content doesn't address them, AI sees you as narrowly focused.

Latent intent proves you understand the patient's entire world — not just their stated problem.

Layer 4: Counter-Intent

Objections.

"Isn't sciatica just a spine problem?" "Can't I take ibuprofen and wait?" "Why would I see a chiropractor instead of my GP?"

AI trusts content that handles skepticism head-on. Avoidance signals weakness.

Counter-intent is also where qualification happens. Where you tell the wrong patient they're not your fit. Where you address the "but what about..." objections before they become reasons not to call.

I've watched practices dodge counter-intent because they think addressing objections plants doubt.

It doesn't. It builds trust.

Patients know other options exist. If you don't acknowledge them, AI assumes you're either ignorant or dishonest. Neither assumption helps you get cited.

Layer 5: Post-Intent

What happens after the answer.

What does the first visit look like? What's a realistic timeline? What should the patient bring? What does success look like?

Post-intent moves the reader from informed to ready. It transforms the article from educational content into a service-clarity document.

AI sees this as a trust signal. Proves you're not just theoretically knowledgeable — you're operationally prepared to help.

This is the layer that connects the article back to your full-stack authority infrastructure. Where content becomes conversion-adjacent without being a sales pitch.

| Layer | Question Addressed | Content Example |
|---|---|---|
| Direct Intent | What causes lower back pain? | Mechanical strain, disc degeneration, facet joint dysfunction, muscle spasm, with clinical definitions and anatomical context. |
| Indirect Intent | Can this be fixed without surgery? | Explanation of conservative care efficacy, when surgical referral is necessary, and what "fixed" realistically means. |
| Latent Intent | What else could this affect? | Impact on sleep, work capacity, exercise tolerance, and long-term mobility if untreated. |
| Counter-Intent | Why not just take painkillers? | Difference between symptom masking and addressing the underlying mechanical dysfunction. |
| Post-Intent | What does treatment look like? | First visit expectations, typical treatment frequency, activity modifications, and timeline to functional improvement. |
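If it helps to see the five layers as a checklist rather than a table, here is a minimal Python sketch. The layer names come from the model above. The `ArticleOutline` class, its fields, and `missing_layers` are hypothetical names made up for illustration, not part of any tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The five layer names come from the article; everything else is hypothetical.
LAYERS = ("direct", "indirect", "latent", "counter", "post")


@dataclass
class ArticleOutline:
    topic: str
    # Maps a layer name to the questions the draft answers for that layer.
    questions: Dict[str, List[str]] = field(default_factory=dict)

    def missing_layers(self) -> List[str]:
        """Return the layers that have no question assigned yet."""
        return [layer for layer in LAYERS if not self.questions.get(layer)]


outline = ArticleOutline(
    topic="lower back pain",
    questions={
        "direct": ["What causes lower back pain?"],
        "indirect": ["Can this be fixed without surgery?"],
        "counter": ["Why not just take painkillers?"],
    },
)

print(outline.missing_layers())  # ['latent', 'post']
```

An outline that returns an empty list has at least one question per layer. That says nothing about how well each question is answered. It only catches the layers that were skipped entirely.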

How the Five Layers Build Semantic Density

Semantic density is how AI measures topic authority.

Not word count. Not keyword frequency. The breadth and depth of concepts covered — and the logical relationships between them.

The Five-Layer Intent model forces semantic density by design. You can't cover all five layers without building a conceptual web that demonstrates comprehensive understanding.

How AI Measures Completeness

AI engines use natural language processing to map concept relationships in an article.

An article that only answers direct intent is a single node. No connections. Exists in isolation. No context, no depth, no integration.

An article covering all five layers creates a semantic web. Direct answer connects to indirect goal. Indirect goal connects to latent considerations. Latent connects to counter-intent objections. Counter-intent connects to post-intent next steps.

That web signals completeness. Tells AI this content doesn't just know one fact — it understands the entire topic ecosystem.

Google's foundational research on search engine architecture treated authority as a function of how other documents link to a page. AI engines appear to apply a similar principle, but instead of backlinks, they weigh semantic relationships.

The Five-Layer Intent model builds those relationships explicitly.
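To make the "single node versus web" picture concrete, here is a toy Python sketch. It is an illustration of the idea only, not a description of how any AI engine actually scores content. The concept names and the `connected_concepts` function are hypothetical.

```python
from collections import deque


def connected_concepts(graph, start):
    """Breadth-first search: return every concept reachable from `start`."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


# Hypothetical concept maps. The chain mirrors the layer-to-layer links above.
thin_article = {"sciatica causes": []}
five_layer_article = {
    "sciatica causes": ["relief without surgery"],          # direct -> indirect
    "relief without surgery": ["imaging and timelines"],    # indirect -> latent
    "imaging and timelines": ["ibuprofen and waiting"],     # latent -> counter
    "ibuprofen and waiting": ["first visit expectations"],  # counter -> post
    "first visit expectations": [],
}

print(len(connected_concepts(thin_article, "sciatica causes")))        # 1
print(len(connected_concepts(five_layer_article, "sciatica causes")))  # 5
```

The thin article never leaves its starting concept. The five-layer article reaches every layer from the direct answer, which is the connectedness the section describes.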

Why Thin Content Fails

Thin content doesn't fail because it's short. It fails because it's incomplete.

You can write 2,000 words that only address direct intent. AI will still classify it as thin — because concept coverage is what matters, not word count.

Flip side: a 1,200-word article addressing all five layers is semantically dense. Covers more conceptual ground. Demonstrates broader understanding. Gets cited.

The difference isn't effort. It's architecture.

| Article Type | Intent Layers Covered | Concept Nodes | AI Trust Level |
|---|---|---|---|
| Commodity blog post | Direct only | 5–8 isolated concepts | Low (incomplete) |
| Traditional SEO article | Direct + Indirect | 10–15 loosely connected concepts | Moderate (surface authority) |
| Five-Layer AEO article | All five layers | 25+ interconnected concepts | High (comprehensive authority) |

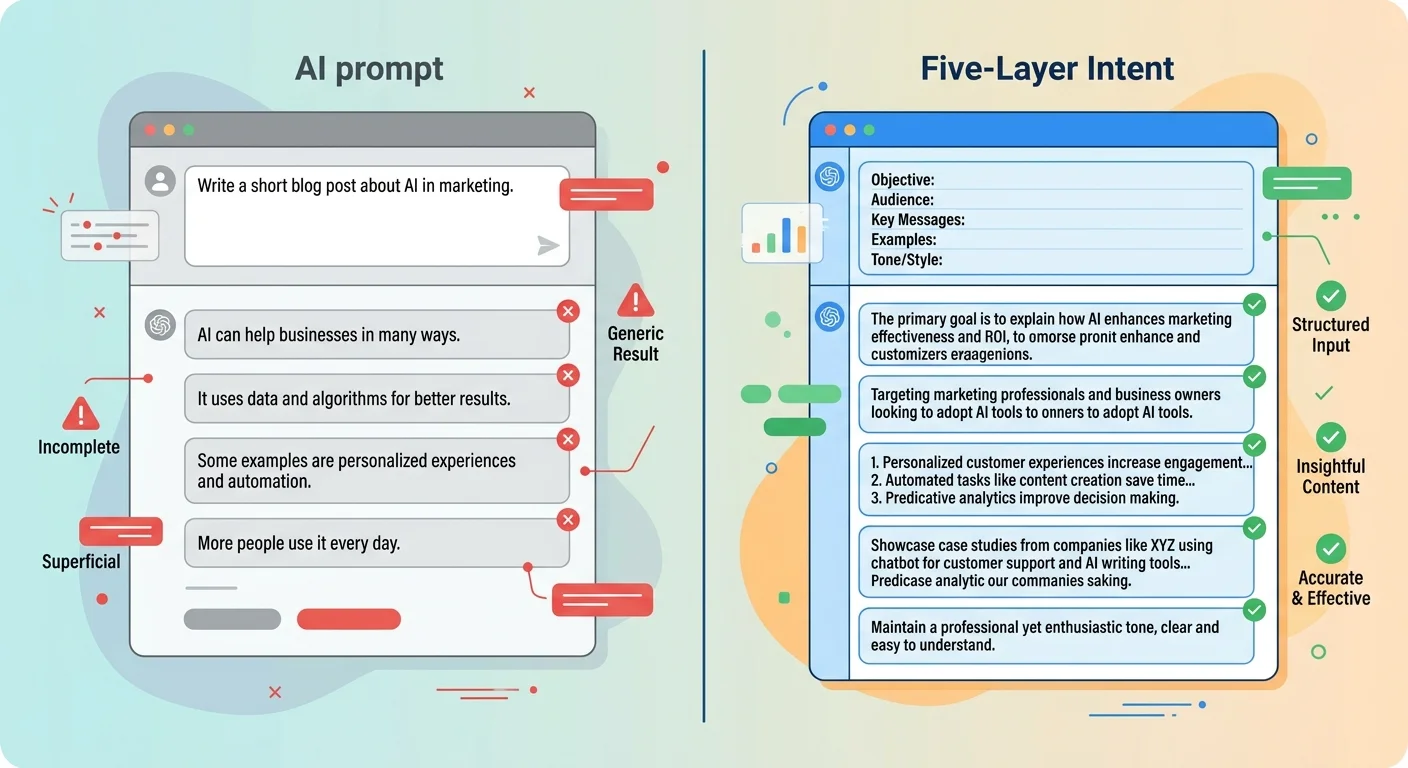

Why Generic AI Prompts Fail This Model

Most practices think they can type "write a blog post about sciatica" into ChatGPT and get authority content.

They can't.

Output matches input. Shallow prompt, shallow content.

Generic AI prompts produce articles that answer direct intent and stop. They don't cover indirect goals. They don't anticipate latent needs. They don't handle objections. And they don't guide the reader toward next steps.

Why? Because the prompt didn't ask for any of that.

The Depth Problem

Generic prompts produce surface-level answers because they don't specify depth requirements.

Tell ChatGPT "write a blog post about lower back pain" — it generates content that answers the literal question. What it is. What causes it. Maybe treatment options.

It has no way to know you needed latent intent coverage, counter-intent handling, or post-intent direction unless you explicitly structure the prompt to demand it.

Most practices don't. They use AI the same way they used a freelance writer: hand over a topic, expect a deliverable, publish, wonder why nothing happens.

The AI isn't the problem. The instruction set is.
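Here is a rough sketch of what a structured instruction set could look like, written as a small Python prompt builder. The layer names and the 200–300 word guideline come from this article. The wording of each instruction and the `build_prompt` function are hypothetical, and this is not our production prompt.

```python
# Layer names and the 200-300 word guideline come from the article.
# The wording of each instruction and the function name are hypothetical.
LAYER_INSTRUCTIONS = {
    "Direct intent": "Answer the literal question in the first 200-300 words.",
    "Indirect intent": "Address the real goal behind the question.",
    "Latent intent": "Cover related issues the reader does not know to ask about yet.",
    "Counter-intent": "Handle the likely objections head-on.",
    "Post-intent": "Explain what happens next, such as the first visit and a realistic timeline.",
}


def build_prompt(topic: str) -> str:
    """Assemble a prompt that names every layer instead of only the topic."""
    lines = [
        f"Write an article about {topic}.",
        "Cover each of these layers explicitly:",
    ]
    for number, (layer, instruction) in enumerate(LAYER_INSTRUCTIONS.items(), start=1):
        lines.append(f"{number}. {layer}: {instruction}")
    lines.append("Mark any statistic or clinical claim that still needs a source.")
    return "\n".join(lines)


prompt = build_prompt("lower back pain")
print(prompt)
```

Compare that output with "write a blog post about lower back pain". Same topic, but the structured version tells the model what depth means.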

The Factual Accuracy Problem

Generic prompts also produce hallucinations.

When an AI doesn't have verified data, it fills gaps with plausible-sounding fabrications. Statistics that don't exist. Studies never published. Treatment protocols that sound credible but aren't clinically accurate.

Without a validation layer, those hallucinations make it into published content. When AI engines cross-reference that content against institutional sources, they detect factual inconsistencies.

Trust drops. Citations disappear.

This is why we use the Two-AI Validation System. Gemini researches and verifies. Claude writes. Gemini validates. Claude refines.

Every claim sourced. Every statistic verified.

We don't publish vibes. We publish receipts.
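For readers who think in code, the four steps can be sketched as a simple pipeline. This is a minimal sketch under loud assumptions: every function below is a hypothetical stand-in, no real model API is called, and the refine step here simply drops unsupported claims, where a real refine pass might rewrite or source them instead.

```python
from typing import Callable, List

Draft = List[str]  # one string per claim, to keep the sketch simple


def run_validation(
    topic: str,
    research: Callable[[str], List[str]],
    write: Callable[[str, List[str]], Draft],
    validate: Callable[[Draft, List[str]], Draft],
    refine: Callable[[Draft, Draft], Draft],
) -> Draft:
    """Research, write, validate, refine: the four steps in order."""
    sources = research(topic)
    draft = write(topic, sources)
    unsupported = validate(draft, sources)
    return refine(draft, unsupported)


# Hypothetical stand-ins. In practice each step would call a model.
def research(topic):
    return ["Source A: placeholder finding about " + topic]


def write(topic, sources):
    return [topic + " claim backed by Source A", topic + " claim with no source"]


def validate(draft, sources):
    # A claim counts as supported only if it names one of the sources.
    names = [source.split(":")[0] for source in sources]
    return [claim for claim in draft if not any(name in claim for name in names)]


def refine(draft, unsupported):
    # Assumption: unsupported claims are removed rather than rewritten.
    return [claim for claim in draft if claim not in unsupported]


final = run_validation("sciatica", research, write, validate, refine)
print(final)  # ['sciatica claim backed by Source A']
```

The point of the structure is that writing and checking are separate steps with separate owners, so an unsourced claim has to survive a second pass before it gets published.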

| Dimension | Generic Prompt | Five-Layer Structured Prompt | AI Validation Result |
|---|---|---|---|
| Depth | Answers literal question only | Covers all five intent layers | Complete, citeable |
| Factual Accuracy | Prone to hallucinations | Cross-verified against institutional sources | Trustworthy, validated |
| Semantic Density | Low concept coverage | High concept coverage with explicit relationships | Authority signal detected |
| User Journey | Ends at the answer | Guides from question to decision | Patient-ready, actionable |

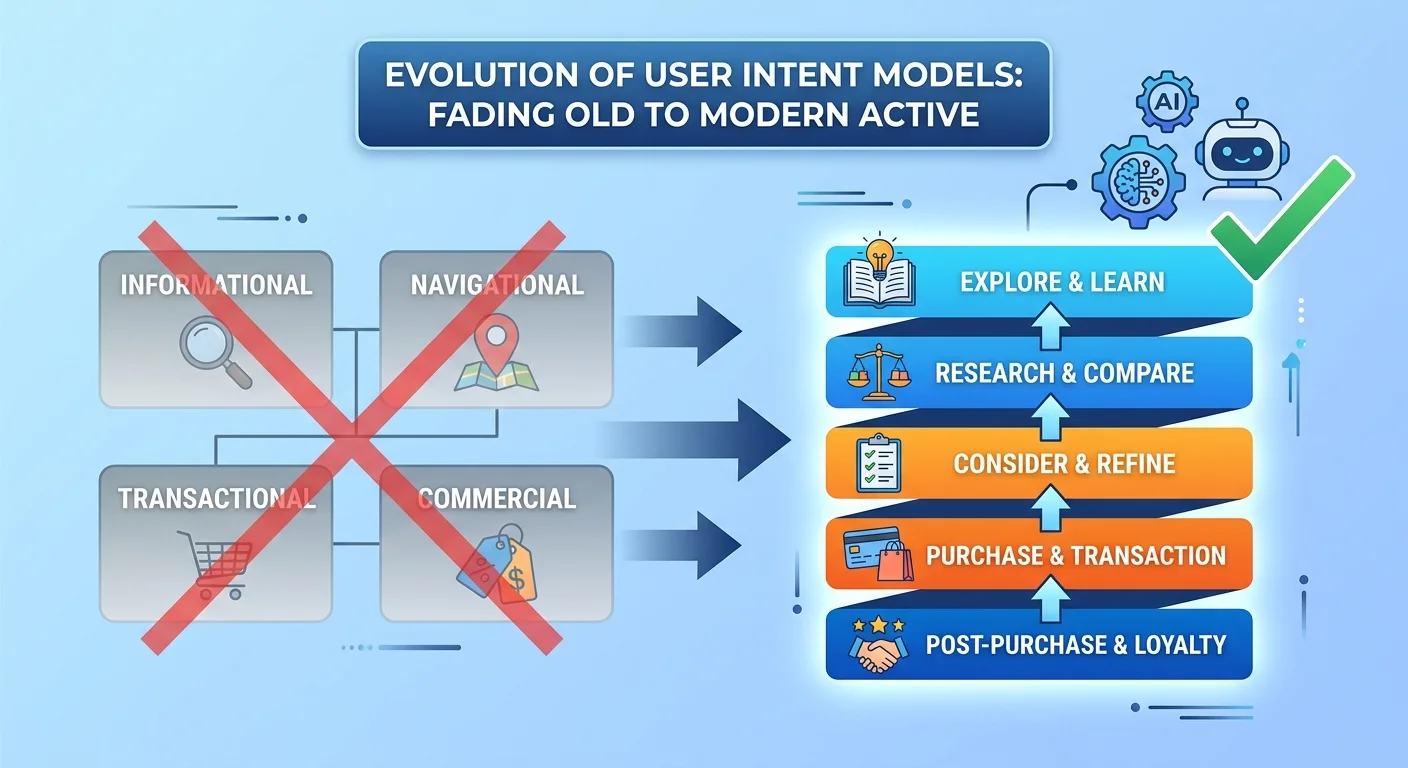

The Traditional Intent Model Is Dead

Quick pause before we go further.

If you're looking for a quick trick to paste into your content calendar, this isn't it.

The Five-Layer Intent model is an architectural requirement, not a content hack. You can't retrofit it onto existing blog posts and expect AI to suddenly cite you.

This is a rebuild. Takes understanding patient psychology, clinical context, decision architecture. Takes time. Takes experience. Takes willingness to reject the shortcuts that produced invisible content in the first place.

If that timeline doesn't fit your decision framework — no hard feelings.

But if you're tired of publishing content that disappears the moment it goes live, keep reading.

Why the Old Model Fails Now

The traditional intent model — Informational, Navigational, Transactional, Commercial — was built for Google's ranked list.

Helped you match content type to where someone was in the buying journey. Informational for top-of-funnel awareness. Transactional for bottom-of-funnel conversion.

That model worked when search engines returned ten blue links. Patients clicked through, read your page, evaluated your credibility, decided whether to book.

AI engines don't return lists. They synthesize single answers.

The old model can't account for that. It was designed for a user journey that no longer exists.

Traditional SEO intent models assume the user's stated question is the full picture. It's not. The stated question is the entry point. The real decision happens in the layers underneath.

What AI Needs That Google Didn't

AI needs context.

Needs to understand not just what the user asked, but what they meant, what they didn't ask, and what objections they might have.

Google's algorithm could rank an article based on keyword relevance and backlink authority. AI synthesizes answers based on comprehensiveness and trust.

That's a fundamentally different evaluation model.

The traditional intent framework never accounted for counter-intent or latent needs. Assumed the user's stated question was the full picture. When search was a list, that assumption worked. When search became conversational, it broke.

According to BrightLocal's voice search study (published in 2018), 58% of consumers had used voice search to find local business information in the previous 12 months. Voice queries are longer, more conversational, more context-dependent than typed searches.

AI engines processing those queries need content that addresses the full conversational context — not just the literal keyword phrase.

That's what the Five-Layer Intent model was designed to provide.

Frequently Asked Questions

How is the Five-Layer Intent model different from traditional keyword research?

Traditional keyword research focuses on matching a specific phrase. You identify a keyword, check search volume, evaluate competition, and optimize content around that phrase.

The Five-Layer Intent model focuses on building comprehensive answers to the entire ecosystem of questions — spoken and unspoken — around that phrase.

Keyword research asks: "What are people searching for?" Five-Layer Intent asks: "What does someone searching for this actually need to know — and what will they ask next if we answer this well?"

Keyword research isn't irrelevant. It's insufficient. Keyword research tells you where to start. Five-Layer Intent tells you how to finish.

Can I just apply this model to my existing blog posts?

You can try. It won't work as well as building fresh.

Here's why: most existing posts were written with a different intent framework. They answered direct, maybe touched indirect, stopped. Structure isn't designed for five-layer depth.

Retrofitting requires rewriting 60–80% of content anyway. At that point, you're better off starting fresh.

Exception: if you've got an article already ranking well or getting decent traffic, it's worth auditing against the five layers and filling gaps. But don't expect a quick revision to transform commodity into authority.

Structure is as important as the words.

What's the most common mistake practices make when trying to write for AI?

Using generic AI prompts that produce surface-level, factually thin content.

I've seen practices hand ChatGPT a list of 20 blog topics and expect 20 authority articles back. What they get is 20 variations of the same shallow answer — reworded just enough to look different, but with zero semantic density.

AI engines detect this immediately. No depth. No source verification. No integration with related concepts. Reads like commodity text because that's exactly what it is.

Second most common mistake: not validating factual claims. AI-generated content is prone to hallucinations. If you're not cross-checking every statistic and clinical claim against institutional sources, you're publishing misinformation. When AI engines detect that, trust collapses.

Is 'Latent Intent' just another name for 'long-tail keywords'?

No.

Long-tail keywords are specific, longer search phrases. "Best chiropractor for sciatica in Orange County" is a long-tail keyword. More specific than "chiropractor" but still a search phrase.

Latent intent is about underlying, unstated problems or needs that a reader has — which may not be captured in any single keyword.

Example: someone searching "chiropractic treatment for migraines" has latent intent around visit frequency, insurance coverage, whether they can continue meds during treatment, what to do if symptoms worsen. None of those are long-tail keywords. They're unstated concerns influencing the decision to book.

Latent intent addresses those concerns even if the patient didn't explicitly search for them.

That's the difference. Long-tail keywords are search behavior. Latent intent is decision psychology.

After I understand this model, what's the first step to see where I stand?

First practical step: run a diagnostic to see what AI engines currently say about your practice.

Establishes a baseline. You'll see whether AI is citing your content, recommending your practice, or ignoring you entirely.

Most practices assume they're in decent shape because they've blogged for years. Then they run the check and discover they're not showing up in any AI recommendations.

That gap is the problem.

Once you know where you stand, you can prioritize. If content is semantically thin, Five-Layer Intent becomes the rebuild blueprint. If content is solid but Semantic Anchoring is weak, different fix.

But you can't fix what you can't see. Diagnostic comes first.

Conclusion

The Five-Layer Intent model isn't optional. It's the baseline.

Content that doesn't cover all five layers is incomplete by definition — and AI treats incomplete content as untrustworthy. The gap between practices that get this and practices still using the old SEO playbook widens every month.

Authority compounds. So does invisibility.

Here's what I've watched happen over the last three years: practices that invested in comprehensive, five-layer content early are now the default AI recommendations in their markets. They didn't get there by publishing more. They got there by publishing deeper.

The practices still using commodity content — 800-word blog posts that answer the question and stop — are invisible. Not because they're doing something wrong. Because they're not doing enough.

This entire model is the blueprint for our AEO Content Writing services.

There's no neutral position here. Every month you publish content that only addresses one or two intent layers, a competitor is publishing content covering all five. That gap doesn't close. It accelerates.

If you're ready to stop wasting time on content that doesn't move the needle, the next step is simple: see what AI currently says when someone asks who the best chiropractor in your area is. The AI Visibility Check takes 15 minutes. It's free. And it'll show you exactly where you stand, not where you hope to be, and whether your current content strategy is building authority or wasting time.