AI Authority Engine vs. Traditional Chiropractic SEO: Why the Old Way is Dying

Traditional chiropractic SEO is dying because search has moved from a list to a verdict.

Patients aren't scanning ten blue links and picking the best-looking option anymore. They're asking — out loud, or in a chat window — "find me a chiropractor near me for disc problems" and getting one answer from ChatGPT, Perplexity, or Gemini. One. Not ten.

Traditional SEO was built for the old system. It optimizes for keyword rankings, backlink profiles, and monthly impressions — metrics that made sense when patients had to scroll, click, and choose. That system is being replaced.

AI answer engines don't serve lists. They synthesize from thousands of sources, evaluate entity trust, check technical signals, and produce a single recommendation. The signals they use to make that recommendation are almost entirely different from what a traditional SEO agency builds.

This isn't a gradual trend. In February 2024, Gartner predicted that traditional search engine volume would drop 25% by 2026 as users shift to AI chatbots and other virtual agents. That migration is already happening: not in some distant future version of search, but in how your patients are looking for a provider right now.

An AI Authority Engine is a content and infrastructure system designed specifically to build the entity trust signals that AI platforms use to make recommendations. Where traditional SEO asks "how do I rank on Google," an AI Authority Engine asks "how does AI verify who I am and why I'm the most trustworthy option in my market?"

Those are not the same question. And they don't have the same answer.

This article breaks down exactly what's different, where the old model breaks down, and what the transition to AI authority actually looks like.

Last Updated: April 10, 2026

The Search Game Has Changed — And Most Chiropractors Are Still Playing the Old One

Here's what I see all the time.

A doc has a legitimately good practice — great care, professional website, green arrows on the monthly report.

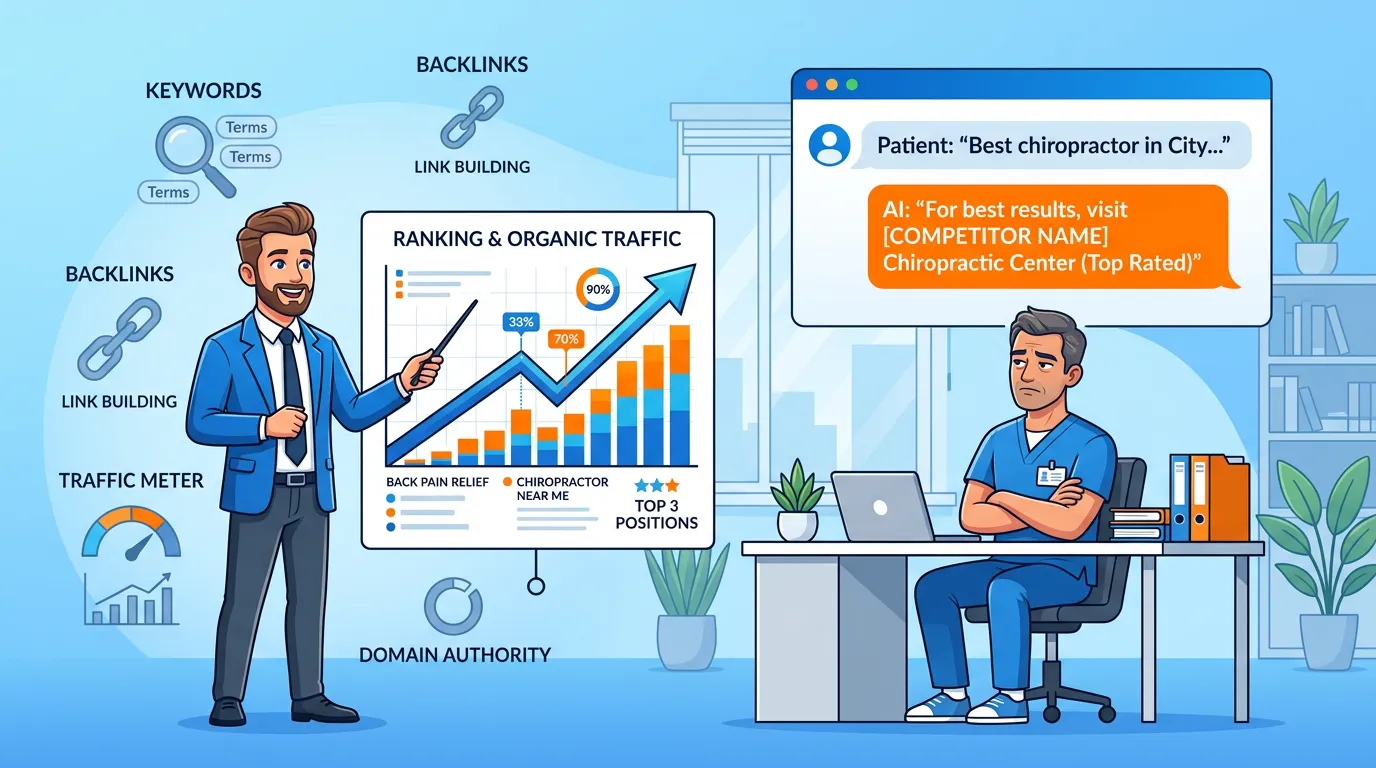

Then I open ChatGPT and ask for a chiropractor recommendation in their city — and a competitor comes up. Every time.

That's the Digital Brochure Fallacy. The website was built to look credible to humans, not to be verified by AI. And there's a real cost to that gap: practices lose 30 to 50 percent of prospective patients before a single booking is ever attempted, because the infrastructure underneath that site doesn't give AI what it needs.

Working with an AI authority agency changes the objective entirely. It stops being "does this look professional" and becomes "does AI have enough to verify and recommend us." Those aren't the same question — and most practices are only answering one of them.

Why Your Monthly SEO Report Isn't Telling You the Full Story

Your agency sends you a report. Keywords trending up. Impressions look solid. Click-through rate holding steady.

Now ask them one question: when someone opens ChatGPT and asks for a chiropractor in your city, whose name comes up?

Nine times out of ten, silence. Because that question isn't in the report. It was never designed to be.

According to NIH research, patients are already shifting to AI-powered platforms to discover health providers, and faster than most practices realize. SEO reports were built for a different behavior. They measure performance inside a Google search result. They have nothing to say about what AI recommends when a patient asks.

That gap isn't a footnote. It's where your next patients are going instead.

From Blue Links to AI Verdicts: What Patients Are Actually Doing Now

Gartner's projected 25% drop in search engine volume by 2026, driven by users moving to AI-powered answer platforms, isn't a forecast anymore. It's already in motion.

The new patient behavior looks like this. They open ChatGPT. They type something like "who's a good chiropractor for a slipped disc near me." They get one name back. Maybe two. They don't open five tabs and compare. They don't click through to evaluate websites. They book whoever got named.

Zero-click search has been eroding clicks from traditional search for years, and HubSpot research shows it's now the dominant behavior across multiple platforms. AI pushes it to the extreme.

Zero-click doesn't just mean fewer website visits. It means fewer choices. The patient who asks AI doesn't browse alternatives. There's no runner-up slot. You're either the name that came up, or that conversation didn't include you.

What Traditional Chiropractic SEO Is Actually Building — And Why That's a Problem

I don't blame the agencies running this playbook.

They built their whole model around Google because Google controlled local discovery. That was the right bet for a long time. Chase keywords. Build backlinks. Move up the rankings. Patients find you, they book.

That model worked until the discovery channel shifted. Now those same agencies are running the same play on a shrinking field — and sending you reports to prove it's working.

Why Traditional SEO Fails in the AI Search Era

Traditional SEO was engineered to move a website up a ranked list. AI doesn't produce a ranked list.

That's the whole mismatch. Right there.

Keyword stuffing, backlink building, ranking-chasing: these are strategies for a crawled, indexed, keyword-sorted system. They're designed to push a website up a page of ten results. AI search produces a verdict, not a results page. And the inputs to that verdict have almost nothing to do with keyword density or domain authority.

Here's what traditional SEO actually builds:

- Keyword rankings — positions on a list that AI doesn't serve; a patient asking ChatGPT never sees this

- Backlink profiles — domain authority scores that AI treats as one minor input among dozens of entity trust signals

- On-page keyword density — content optimization for a crawler that AI bypasses entirely for conversational local queries

- Traffic volume — a metric that tells you people arrived at the site, not whether AI has enough confidence to recommend it

Here's what it doesn't build:

- Schema markup that gives AI a clean, structured picture of who the practice is

- Cross-platform citation consistency across the directories AI actually checks

- Content architecture that answers patient questions the way AI reasoning engines evaluate answers

- Entity verification from independent sources confirming the practice is real, credentialed, and operating where it claims

I've watched this wreck practices with genuinely good reputations. Solid Google rankings. Strong reviews. Real results with real patients. And then I'd ask ChatGPT to recommend a chiropractor in their market — and a competitor came up every single time.

According to Search Engine Journal, the shift from keyword-based to entity-based AI understanding isn't an algorithm update — it's a structural change in how search works. Agencies running keyword campaigns aren't building entity trust. Those are different disciplines with different outputs.

Traditional SEO optimizes for a list. AI search produces a verdict. Those aren't variations of the same thing.

The Vanity Metrics Trap

Here's the part that makes this so frustrating: the reports look fine.

Rankings up. Impressions improving. Agency hitting every KPI they committed to. If you're only reading the report, things look under control.

Now ask the real question: when a prospective patient asks AI for a chiropractor in your city — who gets named?

That answer isn't in any traditional SEO report. Because the report was built to measure a different system.

This is the Hopium Cycle. Agencies sell you metrics they can show progress on — and stay far away from the metrics that would expose the gap. Not because they're trying to mislead you. Because if they measured the right things, the report wouldn't look as impressive. And an unimpressive report doesn't renew a retainer.

So here's the question I'd ask your current agency: "When someone opens ChatGPT and asks for a chiropractor in our city — who comes up?"

If they can't answer that, they don't know what game they're actually playing.

| Signal | Traditional SEO Builds | AI Authority Engine Builds |
|---|---|---|
| Discovery channel | Google search rankings | AI engine recommendations (ChatGPT, Perplexity, Gemini) |
| Primary trust signal | Keyword density + backlinks | Entity verification + schema infrastructure |
| Performance metric | Impressions and click-through rate | AI citation and recommendation frequency |
| Patient experience | Scrolls a list, clicks and evaluates | Receives one AI recommendation |
| Content goal | Keyword-optimized pages | AI-readable authority content |
| Trust mechanism | Domain authority score (DA) | Cross-platform citation consistency |
| Business model | Monthly expense (expires) | Compound authority asset (builds) |

What an AI Authority Engine Does Instead

Most docs hear "AI Authority Engine" and picture a software tool. Something you subscribe to. Something with a dashboard.

It's not that.

It's infrastructure. Two tracks running simultaneously — one that makes your practice entity-verifiable across every source AI checks, and one that builds the content depth AI rewards when it decides who to recommend. Pull either track and the other one doesn't hold.

And neither track gets built by a standard SEO retainer. That's the whole point.

The Signals AI Engines Actually Use to Make Recommendations

When a patient asks ChatGPT to recommend a chiropractor, ChatGPT doesn't hand back a page of Google results. It synthesizes from what it learned in training, cross-referenced with whatever structured data it can retrieve at query time, and produces the single recommendation it's most confident in.

What feeds that recommendation:

- Schema markup — structured data that explicitly tells AI who the practice is, what it treats, and where it's located. Without it, AI fills in blanks with guesses. It doesn't recommend practices it has to guess about.

- Citation consistency — the practice name, address, and phone number matching exactly across Healthgrades, Zocdoc, Vitals, Google Business Profile, and every other platform AI checks. One mismatch is noise. A pattern of mismatches is a red flag that kills the recommendation.

- Content authority — AI Authority articles that actually answer patient questions with real depth. AI doesn't care how many times a keyword appears on a page. It evaluates whether the content actually answers what was asked.

- Entity verification — independent confirmation from multiple authoritative sources that this practice is real, credentialed, and operating where it claims. A single citation doesn't build confidence. A consistent network of them does.

- Cross-platform consensus — every time AI finds the same entity verified consistently across sources it trusts, confidence in recommending that entity goes up. Inconsistency across those same sources brings it back down hard.
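
To make the schema markup signal concrete, here's a minimal sketch in Python that builds a JSON-LD block for a practice. Everything specific in it is an assumption for illustration: the practice name, address, phone number, and profile URLs are made up, and `to_json_ld_script` is a hypothetical helper. The `Chiropractic` type and the properties shown come from the schema.org vocabulary. Which of them any given AI engine actually weighs is not something this sketch claims to know.

```python
import json

# Hypothetical practice details, invented purely for illustration.
practice = {
    "@context": "https://schema.org",
    # schema.org defines Chiropractic as a subtype of MedicalBusiness,
    # which is itself a subtype of LocalBusiness.
    "@type": "Chiropractic",
    "name": "Example Spine & Disc Center",
    "description": "Chiropractic care for disc problems, neck pain, and low back pain.",
    "url": "https://www.example.com",
    "telephone": "+1-555-010-0199",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "123 Main Street, Suite 200",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
        "addressCountry": "US",
    },
    # Placeholder profile URLs; a real page would list its actual directory profiles.
    "sameAs": [
        "https://directory-one.example/example-spine-disc-center",
        "https://directory-two.example/example-spine-disc-center",
    ],
}


def to_json_ld_script(data: dict) -> str:
    """Wrap a schema.org dict in the script tag web pages use to embed JSON-LD."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(to_json_ld_script(practice))
```

The printed block is what would sit in the page's HTML. The point is that name, location, and services are stated explicitly instead of being left for a machine to infer from the page copy.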

BrightEdge research confirms what the AI architecture already shows: generative AI prioritizes structured, verifiable entity data when making local recommendations. A messy, inconsistent digital footprint produces uncertain recommendations — or none at all.

AI recommendations don't happen by accident. They're the output of deliberate infrastructure. Either you've built it or you haven't.

The 1.2% Rule: Why Most Practices Are Invisible to AI

Here's a number that stops most docs cold.

The overwhelming majority of practices in any local market will never show up in an AI recommendation. Not because their care is bad. Because their infrastructure doesn't give AI enough to go on.

We call this the 1.2% Rule. AI doesn't hand out recommendations charitably. It names the practice it can verify most confidently — and only that one.

Now here's where the conventional assumption completely breaks down.

Most docs walk into this conversation believing that traffic drives growth. More website visitors means more bookings. That made total sense when patients were the ones doing the clicking and choosing.

It doesn't make sense when AI is making the choice for them.

I've seen practices with half the website traffic of their competitors get named as the only recommendation in their market — because their schema was clean, their citations were consistent, and their content answered real patient questions with actual depth. Consensus trust from AI engines matters more than click volume. That's not an opinion. That's how the recommendation architecture works.

| What AI Engines Evaluate | Traditional SEO Delivers | AI Authority Engine Delivers |
|---|---|---|
| Schema markup (structured data) | Meta tags and title optimization | Full LocalBusiness, MedicalBusiness, and FAQPage schema |
| Citation consistency across platforms | Inconsistent directory submissions | Verified citation audit and cross-platform alignment |
| Topical authority (content depth) | Keyword-matched blog content | AI Authority articles with full intent coverage |
| Entity verification | Google Business Profile optimization | Multi-source entity signal building |
| Cross-engine consensus | Single-engine (Google) optimization | Multi-engine authority infrastructure |
| Technical AI readability | Standard website structure | AI-readable information hierarchy |
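
The FAQPage schema named in the first row of the table can be sketched the same way. This is a minimal Python example, not a description of any particular agency's tooling: `build_faq_page` is a hypothetical helper, and the question and answer are borrowed from this article's own FAQ. The `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` names are standard schema.org vocabulary.

```python
import json


def build_faq_page(pairs):
    """Turn (question, answer) pairs into a schema.org FAQPage dict."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# One question/answer pair taken from this article's FAQ section.
faq = build_faq_page([
    (
        "What is the difference between AEO and traditional SEO?",
        "Traditional SEO optimizes for ranked positions inside a keyword-based "
        "search result list. AEO (Answer Engine Optimization) optimizes for being "
        "the single cited answer inside an AI engine's conversational response.",
    ),
])

print(json.dumps(faq, indent=2))
```

Each question on the page becomes one `Question` entry, so the structured version stays in step with the visible FAQ as long as both are generated from the same list.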

Who the AI Authority Engine Is Built For — and Who It's Not

Not every practice is a fit for this. I'd rather say that plainly than have you figure it out six months in.

The difference between docs who get compounding returns from an AI Authority Engine and the ones who don't isn't market size or specialty. It's mindset.

The Practice This Is Built For

If most of these land for you, you're in the right place.

- You've burned through enough agency cycles to know tactics have a shelf life. You've had the SEO retainer. Maybe the ad spend. Maybe the social media push. You're not looking for another monthly expense to manage — you want something that builds equity over time.

- You're ready to make a real investment in infrastructure, not rent access to a service. Serious authority infrastructure takes serious investment. It's not cheap because doing it right isn't cheap. That's not marketing language — that's how the work actually breaks down.

- You already believe AI is reshaping patient acquisition. You don't need convincing. You've seen it happen. You want to be positioned before your local market sorts itself out around a competitor.

- You can let a compound growth model run. Some signals show up quickly. Full compound authority builds over 12 to 24 months. If you need meaningful traction in 90 days, this isn't the model — and I'd rather say that plainly.

That's who iTech Valet is built to serve.

This Isn't for Everyone — And That's By Design

Alright. Here's the direct version.

If the first thing you do when evaluating any investment is look for the most affordable option, this isn't the right fit. That's not a judgment. It's a qualification.

I call it the Budget-First Buyer mindset. They look at an AI Authority Engine investment and immediately compare it to a $500-a-month SEO retainer. They want to know what the cost-per-lead works out to.

Here's the problem with that comparison: it only makes sense if both options are doing the same thing. They're not.

A $500/month SEO retainer is renting visibility on a declining channel. It chases keywords and produces reports. An AI Authority Engine is building a compound authority asset that works around the clock to make AI name your practice. You can't compare those on price because they're not in the same category. It's like comparing a billboard rental to purchasing the building it's on.

This isn't for the doc who needs results in 90 days. It's not for the practice that pauses the engagement when cash flow gets tight and expects to restart without losing ground. And it's not for anyone who believes authority can be built once and left alone.

If that's where you are right now — that's fine. The free AI Visibility Check is still worth running. At minimum you'll know exactly what AI sees when it evaluates your practice today. What you do with that information is your call.

| Characteristic | Right Fit | Not the Right Fit |
|---|---|---|
| Investment mindset | Long-term authority asset | Short-term marketing expense |
| Timeline expectation | 12–24 month compound growth | Results in 90 days |
| Budget approach | Justified infrastructure investment | Price-comparison to commodity retainers |
| Marketing history | Burned by tactics, wants something durable | First-time buyer, expects quick turnaround |
| AI awareness | Understands the shift is actively happening | Unconvinced AI affects patient acquisition |
| Operational commitment | White-glove partnership, long-term | Pause-and-restart flexibility |

Frequently Asked Questions

Why isn't my 5-star Google rating enough for AI to recommend me?

When AI evaluates a recommendation, it's not counting stars — it's looking for cross-platform entity verification. The same information confirmed consistently across Google Business Profile, Healthgrades, Zocdoc, Vitals, and other authoritative platforms. It's parsing schema markup to understand what conditions you treat. It's checking whether your entity data is clean and your citations are consistent.

Five stars tells AI your existing patients are happy. It doesn't tell AI who you are, what you specialize in, or why you're the most verified authority in your area. Surface-level reputation and machine-level trust are different things. Both matter. Only one gets you recommended.

What is the difference between AEO and traditional SEO?

Traditional SEO optimizes for ranked positions inside a keyword-based search result list. AEO — Answer Engine Optimization — optimizes for being the single cited answer inside an AI engine's conversational response.

Two different games with two different scoreboards. A practice can hold the #1 Google ranking for "chiropractor near me" and not appear once in an AI recommendation. I've seen it. The overlap is smaller than most practices think — and it's getting smaller every month.

Does my website need to be completely rebuilt for an AI Authority Engine?

Not necessarily rebuilt — but it does need to be restructured at the infrastructure level.

The visual layer — what patients see when they land on the page — often stays largely the same. The machine layer underneath it changes significantly. Schema, content hierarchy, citation alignment across external platforms. That's where AI actually reads.

Most practices are floored when we show them how little AI can extract from their current site. Looks great to a human. Near-invisible to a machine.

How long does it take for AI search engines to recognize my practice?

Some signals register relatively quickly. Others take longer.

The entity infrastructure — schema cleanup, citation alignment, platform consistency — builds the foundation. Content authority stacks on top of that over time. Both are necessary and neither works without the other.

Here's what I tell docs on this: the compound authority model doesn't work like flipping a switch. Practices that go in expecting meaningful traction in 90 days aren't a fit for this. Practices that understand they're building something that gets harder to displace every month they stay consistent — and that the gap between them and their competitors widens every month they do nothing — tend to see the investment very differently.

Is AI citation tracking just another marketing gimmick?

Honest answer: most of it is.

Reliable AI citation data doesn't exist in a format any dashboard can pull consistently. AI engines don't expose recommendation frequency through an API the way Google exposes keyword rankings. Any tool claiming to deliver an accurate, ongoing count of how often AI cites your practice is selling something that can't actually be built with any reliability.

The only real method is systematic multi-engine discovery. You go directly to ChatGPT, Perplexity, Gemini, and Grok, ask the questions a real patient would ask, and document what comes back. Manual. Slower. The only approach that produces verifiable results.
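
If you want to keep that manual log in something sturdier than a notes file, here's one way it might look in Python. This is a sketch under assumptions: `CheckResult` and `tally_first_named` are hypothetical names, the practices and answers are invented, and nothing here queries an AI engine. You still ask the questions by hand and type in what came back.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    engine: str              # which assistant was asked
    prompt: str              # the patient-style question, typed by hand
    named: tuple[str, ...]   # practices named in the answer, in order


def tally_first_named(results):
    """Count how often each practice was the first name in an answer."""
    counts = Counter()
    for result in results:
        if result.named:  # skip answers that named no practice at all
            counts[result.named[0]] += 1
    return counts


# Hypothetical entries recorded by hand after asking each engine.
prompt = "find me a chiropractor near me for disc problems"
log = [
    CheckResult("ChatGPT", prompt, ("Practice A", "Practice B")),
    CheckResult("Perplexity", prompt, ("Practice A",)),
    CheckResult("Gemini", prompt, ("Practice B",)),
    CheckResult("Grok", prompt, ()),
]

print(tally_first_named(log).most_common())
# [('Practice A', 2), ('Practice B', 1)]
```

Re-running the same prompts on a schedule and appending to the log gives you a documented trail over time, which is the part a dashboard can't reliably give you.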

I tell every doc who asks about AI citation dashboards the same thing: be skeptical. That's the Measurement Lie dressed up in a product interface.

Can't I just update my Google Business Profile to get AI recommendations?

Google Business Profile is one signal among dozens. It matters — but it's not sufficient on its own.

AI builds confidence in a recommendation by finding the same entity verified consistently across multiple authoritative sources. A polished Google Business Profile sitting next to inconsistent or missing data on Healthgrades, Zocdoc, and Vitals doesn't produce a confident recommendation — it produces a mixed signal.

And mixed signals don't get recommended.

The model is cross-platform consensus. One platform optimized, the rest neglected — you're not there yet. AI needs the full network of sources agreeing before it puts your name out with any confidence.
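
A rough way to see where your listings disagree is to compare name, address, and phone across platforms yourself. The Python sketch below assumes you've copied each listing by hand. The listing data is invented, `find_mismatches` and the normalizers are hypothetical helpers, and the normalization rules (ignore punctuation, case, and a leading US country code) are my assumption about what counts as "the same," not a documented rule of any AI engine.

```python
import re


def normalize_phone(phone: str) -> str:
    """Keep digits only and drop a leading US country code."""
    digits = re.sub(r"\D", "", phone)
    if len(digits) == 11 and digits.startswith("1"):
        return digits[1:]
    return digits


def normalize_text(value: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return " ".join(cleaned.split())


def normalize_listing(entry: dict) -> dict:
    return {
        "name": normalize_text(entry["name"]),
        "address": normalize_text(entry["address"]),
        "phone": normalize_phone(entry["phone"]),
    }


def find_mismatches(listings: dict, reference: str) -> dict:
    """Return, per platform, the fields that differ from the reference listing."""
    base = normalize_listing(listings[reference])
    mismatches = {}
    for platform, entry in listings.items():
        if platform == reference:
            continue
        current = normalize_listing(entry)
        differing = [field for field in base if current[field] != base[field]]
        if differing:
            mismatches[platform] = differing
    return mismatches


# Hypothetical listings copied by hand from each platform.
listings = {
    "Google Business Profile": {
        "name": "Example Spine & Disc Center",
        "address": "123 Main Street, Suite 200",
        "phone": "(555) 010-0199",
    },
    "Healthgrades": {
        "name": "Example Spine & Disc Center",
        "address": "123 Main Street Suite 200",
        "phone": "+1 555-010-0199",
    },
    "Zocdoc": {
        "name": "Example Spine and Disc",
        "address": "123 Main St.",
        "phone": "555-010-0199",
    },
}

print(find_mismatches(listings, "Google Business Profile"))
# {'Zocdoc': ['name', 'address']}
```

Here the Healthgrades listing passes because it differs only in punctuation and phone formatting, while the Zocdoc listing gets flagged for a shortened name and address. If you'd rather hold listings to exact character-for-character matching, drop the normalizers and compare the raw strings.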

How do patients actually use AI to search for chiropractors?

Understanding AI Patient Search Intent is the starting point for understanding why the authority model matters.

Here's what the behavior actually looks like. A patient opens ChatGPT and asks: "Who's a good chiropractor near me for neck pain from a car accident?" They get one name back. Maybe two. They don't open five tabs. They don't compare reviews across multiple sites. They book whoever got named and move on.

The discovery process has collapsed from a multi-step journey into a single AI verdict. For practices not in the recommendation — there's no runner-up position. That patient had a conversation that didn't include them at all.

The Old Way Doesn't Just Underperform. It Compounds Against You.

Here's what doesn't get said enough about this gap.

It's not static. It grows.

Every month a competitor is building entity trust while you're running the traditional SEO playbook, the distance between their AI authority and yours gets wider. AI gets more confident in naming them. More consistent about it. And less likely to surface anyone else in that market — not because of anything you did wrong, but because compounding works against you the longer you wait to build.

AI gives one answer. If you are not the answer, you do not exist.

No silver medal. No "also consider." One recommendation or nothing.

The window to build early is still open in most markets. Authority infrastructure takes time, but the compounding return means moving now builds a lead that gets far harder for a competitor to close later. The practice building entity trust this year is a fundamentally different kind of competitor in two years.

Traditional SEO served its purpose. That's real. But it's optimizing for a system being replaced in exactly the category that matters most: the moment a patient asks AI who to see.

Every dollar going into the old system is a dollar not going into the one that's actually making the recommendation.

If you've been running SEO and getting reports that look fine — but there's a nagging sense that something's off — you're probably right.

The question isn't whether AI is changing how patients find providers. It's already happening. The question is whether AI knows your practice well enough to recommend it.

The AI Visibility Check shows you exactly what AI engines see when they evaluate your practice right now. What signals are working, what's missing, and where the gaps are going to cost you.

Find out where you actually stand.

In AI search, the practices that build early lock down markets their competitors haven't even realized are in play. That window is still open. But it's closing.